3.

COSTS OF PUBLICATION

INTRODUCTORY COMMENTS

Floyd Bloom, The Scripps Research Institute

Cost concerns control quality, timeliness, and access, so it is natural to begin the symposium by considering the costs of scientific and medical publishing. We have to consider the costs of production, the costs of paper and ink, the costs of dissemination, the costs of acquiring the content that will be produced, and the costs of making a journal competitive with other journals. We also have to be concerned with the explicit, rigorous peer-review processes of scientific journals and the quality of what is published.

As we enter the era of online publication, we encounter additional costs and concerns. What about linking to the databases that give a publication equal access and immediacy and carry it back into the perspective of the past? Why do these things cost so much, and which of these costs could we factor out and control if we knew how?

The panel that addressed these issues included a variety of perspectives: a librarian, publishers of online and print journals of large and medium-sized societies, and a commercial publisher.

OVERVIEW OF THE COSTS OF PUBLICATION

Michael Keller, Stanford University

Michael Keller, Stanford University librarian and chairman of the board of HighWire Press, began the session by presenting some recent statistics compiled by Michael Clarke of the American Academy of Pediatrics, which provide a good overview of the marketplace for scientific, technical, and medical (STM) journals. Although these statistics do not bear directly on the costs of publishing electronic journals, they do set the stage for many of the issues discussed below.

According to a recent Morgan Stanley Industry Report,4 the STM journal market has been the fastest-growing segment of the media industry for the past 15 years, with 10 percent annual growth over the past 18 years, and a predicted 5 to 6 percent annual growth over the next 5 years. At the same time, STM journals have been consolidated into a small number of giant publishers. More than 50 percent of STM journals are published by the 20 largest publishers.

According to the Ingenta Institute,5 until recently the number of scientific journals doubled every 15 years, but the rate of growth of new titles has slowed substantially over the past 5 years; journal size has grown to compensate for the lack of new titles; the cost of subscriptions has escalated because of larger issues and investments in online technologies; and library budgets are under increasing pressure. There has been a proliferation of library consortia over the past 25 years, and these consortia are playing an increasing role in electronic journal purchasing decisions and negotiations. The “big deal” began in 1997. It guarantees publishers steady income and provides libraries with steady prices and increased access to titles. The majority of big deals have been signed in the past three years, and the first large wave of contracts will expire in 2003. Predictions for the institutional marketplace include a decline in library materials budgets, a decline of the big deal, a move toward smaller collections, a return to the importance of title-by-title selection, and animosity toward for-profit publishers.

Main Elements of Expense Budgets for Some STM Journal Publications

First, the processing costs for the content (i.e., articles, reports of the results, and methods of scholarly investigation) of STM journals come in several subcategories:6 (1) manuscript submission, tracking, and refereeing operations; (2) editing and proofing the contents; (3) composition of pages; and (4) processing special graphics and color images. Internet publishing, with its capacity to deliver more images, more color, and more animated or interactive graphics, has made this expense grow for STM publishers in the past decade.

The second category of expense is a familiar one, but is also one of two targets for complete removal from the publishers' costs—the costs of paper, printing, and binding, as well as mailings. As researchers educated and beginning their careers in the 1990s replace retiring older members of the STM research community, publishers might finally switch over to entirely Internet-based editions and distribute no paper at all. This transformation would thus move the costs of printing and paper to the consumer desiring articles in that form and remove binding and mailing from the equation altogether.

A third category of expense is that of the Internet publishing services. These are new costs, and they include many activities performed mainly by machines, though in some situations staff perform quality control pre- and post-publication to check and fix errors introduced through the publishing chain. The elements of these costs vary tremendously among publishers and Internet publishing services. At the high end they can include parsing supplied text into a rigorously controlled version of SGML or XML; making hyperlinks to data and metadata algorithmically; presenting multiple resolutions of images; offering numerous elaborate search and retrieval possibilities; supporting reader feedback and e-mail to authors; supporting alerting and prospective sighting functions; delivering content for indexing to secondary publishers and distributors, as well as to Internet indexing services; and supporting individualized access control mechanisms. At the low end, those characterized by PDF-only e-publishing, the cost elements would include simple search, common access control mechanisms, and delivering content for indexing. The range of costs in Internet publishing services is quite wide, although the actual size of this category in the expense budget is relatively small.

The fourth cost category, publishing support, covers everything from catering lunches to finance offices, including facilities and marketing.

The final category is the cost of reserves. Some organizations have money set aside for disasters or to address opportunities. Some of these reserves are for capital projects or to hedge against key suppliers failing. A great many not-for-profit organizations do not label reserves as such but have investments or bank accounts whose earnings support various programs, but whose principal could be used in a reserve function as needed.

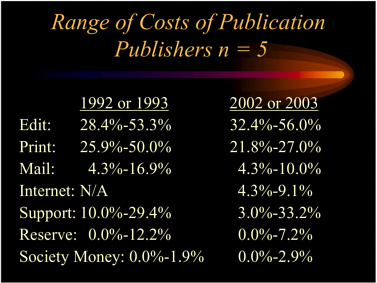

The results of a recent sampling by Michael Keller of six not-for-profit publishers' costs are presented in Table 3-1. Because the publishers reported their data under a wide variety of categories, there is an element of interpretation in these results. The data are presented as a pair of ranges, one for the early 1990s and the other for the most recent period.

Table 3-1. Sample of six not-for-profit publishers’ costs.

How might these data be interpreted? First, it appears the publishers have much tighter control now over their budgets than they did 10 years ago. The definitions of categories are better. Second, it is clear that editorial costs have not changed much but that printing, paper, and binding costs are down, at least on a unit basis. Third, the costs of Internet editions have entered the budgets at the level of 4.3 to 9.1 percent.

In addition, respondents to Mr. Keller’s inquiry indicated that their publishing budgets have doubled since the early 1990s but that individual subscriptions have fallen by almost half in many cases, apparently cannibalized by institutional Internet subscriptions.

Cost increases reported by the not-for-profit publishers have been on the order of 6 percent annually. With a reduction in individual subscriptions, the number of copies of issues printed, bound, and mailed has gone down, but increases in other costs have kept budget numbers from falling.
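As a rough consistency check on these two figures, steady cost growth of about 6 percent a year compounds to a doubling in roughly 12 years, which fits budgets that have doubled since the early 1990s. The sketch below assumes simple annual compounding and a span of 10 to 12 years; the exact span is not stated in the text.

```python
import math

def compound_growth(rate: float, years: int) -> float:
    """Multiplier after `years` of growth at `rate` per year (annual compounding)."""
    return (1 + rate) ** years

def doubling_time(rate: float) -> float:
    """Years for a quantity growing at `rate` per year to double."""
    return math.log(2) / math.log(1 + rate)

# Assumed span: early 1990s to the time of the symposium, about 10 to 12 years.
for years in (10, 11, 12):
    print(f"{years} years at 6%: x{compound_growth(0.06, years):.2f}")

print(f"doubling time at 6%: {doubling_time(0.06):.1f} years")
```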

The reduction in individual subscriptions arises from two pressures. The first is rising subscription prices or membership dues for individuals; the business models of some publishers also involve extra charges for online access. The second is the easier availability of content through library servers, which enables a scientist to go to different sources, especially journals, without having to juggle many different user names and passwords. It is simply more convenient to do it that way.

Clearly, the publishers that HighWire has worked with are very concerned about cannibalization and have attempted in many ways to figure out how to cope with it. There may not be a single right answer.

Manuscript submission, tracking, and refereeing support applications have reduced mailing costs and made it possible for existing staff to process more manuscripts. Increases in the size of journals in page equivalents, in some instances increases in graphics and color, and the adoption of advanced Internet features such as supplemental information have made the costs of Internet publishing services rise about 50 percent faster per year than other costs.

In short, there is a dynamic balancing act with regard to publishers' costs in the Internet era, with some costs increasing and others decreasing. What is most intriguing, however, is the possibility of removing from 25 to 32 percent of the costs of publishing by switching to electronic journals delivered over the network, and eliminating printing, binding, and mailing paper copy to any subscribers at all.

One might further observe this as a transfer of costs from the publishers to the readers who desire to read articles on paper. Perhaps this is not a new transfer of costs to readers, because many already photocopy articles and presumably cover that time and cost somehow. Eliminating paper editions would also offer some promise of reducing prices to the institutions that are so clearly providing the publishers with the economic basis for publishing at all.

The successful operation of true digital archives (protected repositories for the contents of journals) would permit the removal of the printing, binding, and mailing costs. A true digital archive or repository, in the view of most librarians, is one that is not merely an aggregation of content accessible to qualified readers or users, but one that preserves and protects the content, features, and functions of the original Internet edition of the deposited journals over many decades and even centuries. True digital archives will have their standards and operational performance publicly known and monitored by publishers, researchers, and librarians alike, and their operations and content will be audited regularly. However, it is not yet well known how costly automatic data migration will be over time. In any event, it is not enough for a publisher, a library, an aggregator, or another information business simply to declare itself an archive. This function must be proven constantly.

The annual costs of true digital archives can vary widely. One very inexpensive model is Lots of Copies Keep Stuff Safe (LOCKSS), a network of caches. In this design, publishers and libraries form voluntary partnerships that run dozens, maybe hundreds, of local caches on cheap magnetic storage and very ordinary CPUs. Michael Keller estimates that each of these LOCKSS caches could operate for only tens of thousands of dollars per year.
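The principle behind LOCKSS can be illustrated with a greatly simplified Python sketch. It assumes that each cache holds one copy of an article, that caches compare cryptographic hashes of their copies, and that a copy disagreeing with the majority is repaired from a copy that agrees with it. The `Cache` class and `audit_and_repair` function are hypothetical; the real LOCKSS polling protocol is considerably more elaborate and is designed to resist damaged or malicious peers.

```python
import hashlib
from collections import Counter
from dataclasses import dataclass

@dataclass
class Cache:
    """Hypothetical library cache holding one copy of an article."""
    name: str
    content: bytes

    def digest(self) -> str:
        return hashlib.sha256(self.content).hexdigest()

def audit_and_repair(caches: list[Cache]) -> list[str]:
    """Compare copies by hash and overwrite minority copies with the majority copy.

    Returns the names of the caches that were repaired.
    """
    votes = Counter(c.digest() for c in caches)
    winner, count = votes.most_common(1)[0]
    if count <= len(caches) // 2:
        # No strict majority: a simplified model cannot decide which copy is good.
        raise RuntimeError("no clear majority; manual review needed")
    good = next(c for c in caches if c.digest() == winner)
    repaired = []
    for c in caches:
        if c.digest() != winner:
            c.content = good.content
            repaired.append(c.name)
    return repaired

# Three caches; one copy has been silently corrupted.
caches = [
    Cache("A", b"article text"),
    Cache("B", b"article text"),
    Cache("C", b"article text with bit rot"),
]
repaired = audit_and_repair(caches)
print(repaired)
```

The design choice to require a strict majority reflects the "lots of copies" idea: the more independent caches that hold the same content, the harder it is for a single failure to go undetected.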

Another model is that of the large, managed digital repository for multiple data formats and genres of publication. The estimate for operating and maintaining a very large repository, perhaps a petabit or two of data, at Stanford is between $1 million and $1.5 million per year, half for staff and half for technology. HighWire's database now is in excess of 2.5 terabits.

Which institutions will undertake such large managed digital repositories? Almost certainly a few national libraries and university libraries will. But publishers or their Internet service providers could develop and run them as well.

The European Union's laws will soon require deposit of digital editions in one or more national libraries. In the United States, the Library of Congress, along with other federal libraries, promises both to develop its own digital repository and to stimulate and support a distributed network of them. If publishers undertake digital repositories, their costs will enter into the expense budget and, of course, drive journal prices higher.

Another cost currently confronting many publishers is the conversion of back sets of print journals to digital form, providing some level of metadata and word indexing to the contents of each article, and posting and providing access to the back sets. HighWire has done a study on converting the back sets of its journals. They estimate that about 20 million pages could be converted and that the costs of scanning and converting pages to PDF, keying headers, loading data to the HighWire servers, keying references, and linking references could approach $50 million, or about $150,000 per title. Most of that sum is devoted to digitizing companies and other sub-contractors to HighWire Press.
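The HighWire estimate implies a few derived figures that the text does not state directly: an average cost per page, the number of titles behind the per-title figure, and the average number of pages per title. The sketch below simply divides the reported round numbers, so the results are approximate.

```python
# Figures from HighWire's estimate as reported in the text (all approximate).
total_cost = 50_000_000    # dollars, upper estimate ("could approach")
pages = 20_000_000         # pages that could be converted
cost_per_title = 150_000   # dollars, "about" per title

cost_per_page = total_cost / pages              # implied average cost per page
implied_titles = total_cost / cost_per_title    # implied number of titles
pages_per_title = pages / implied_titles        # implied average back-set size

print(f"cost per page:   ${cost_per_page:.2f}")
print(f"implied titles:  {implied_titles:.0f}")
print(f"pages per title: {pages_per_title:,.0f}")
```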

If all this retrospective conversion of back sets occurred in one year, HighWire would have to spend internally about $250,000 in capital costs and about $300,000 in initial staff costs, declining to annual staff expenditure of perhaps $250,000 or $275,000 thereafter. On average, for the 120 publishers paying for services from HighWire, that would mean about $2,500 per publisher each year in additional operating costs paid to HighWire Press. In other words, the increase in annual costs to publishers for hosting and providing access to the converted back sets would be a fraction of 1 percent of their current expenses each year. These figures, of course, omit any costs for digitizing and other services provided by contractors and subcontractors.
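The $2,500 figure appears to correspond to spreading the initial staff cost evenly across the 120 publishers; the even split is an assumption. The same division applied to the later annual staff figures gives somewhat smaller per-publisher amounts, and it also shows the budget size above which $2,500 is under 1 percent of a publisher's expenses.

```python
publishers = 120
initial_staff_cost = 300_000                 # dollars, first year
ongoing_staff_costs = (250_000, 275_000)     # dollars per year thereafter

def per_publisher(amount: float, n: int = publishers) -> float:
    """Share of a HighWire cost if split evenly across n publishers (assumed)."""
    return amount / n

initial_share = per_publisher(initial_staff_cost)
ongoing_shares = [per_publisher(a) for a in ongoing_staff_costs]

# Expense budget above which the initial share is less than 1 percent.
budget_threshold = initial_share * 100

print(f"initial share per publisher: ${initial_share:,.0f}")
print("ongoing shares per publisher:", [f"${s:,.0f}" for s in ongoing_shares])
print(f"under 1% for budgets above: ${budget_threshold:,.0f}")
```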

Although the costs of back-set conversion are high, the experience of HighWire suggests that the payoff could be 5 to 10 times more use of articles in the back sets than is presently experienced. Articles on the HighWire servers are read at the following rates. Within the first three months after publication, about 95 percent of all articles get hits (this presumes that a hit means that somebody is actually reading something). In the next three months, that is, when the articles are four to six months old, slightly less than 50 percent of all articles get hits. When articles are ten months old or older, only 7 to 10 percent of all articles get hits on average. However, that rate of hits seems to persist no matter how old the online articles are.

Based on citation analyses, only 10 percent of articles in print back sets older than the online set of digital versions get cited, though not necessarily read. That they should do so is entirely consistent with the belief commonly held since 2001 by publishers associated with HighWire that the version of record of their journals is the online version. This is leading many publishers to digitize the entire run of their titles as the logical next step. In any case, unless other sources of funds are forthcoming, the costs of back-set conversion will become a temporary cost in the expense budgets. Other STM journal publishers, however, have indicated that their back-set conversion and subsequent maintenance costs have been considerably higher.

Chicken-and-egg problems also complicate understanding expense budgets over time. For instance, the experience of HighWire Press has been that those publishers who first define and design advanced features pay the cost of developing those innovations for those that follow. At the same time, those early adopters reap the benefits of innovation in attracting authors and readers. Eventually, many of the innovative features become generally adopted, usually at a lower cost than the innovators paid to develop them. In order to maintain a reputation as innovative, however, one has to continue adopting new features. Some innovation leads to lower costs. For example, HighWire recently announced reductions in prices, thanks to some processing innovations it recently implemented.

Certainly, readers of online journals value the search engines and strategies, the navigation devices, hyperlinks, and availability of various resolutions of images. Alerting services are particularly well thought of too. These sorts of online functions lead users to be more self-sufficient in their search for information and reduce calls upon librarians for help in finding relevant information. Yet it is unlikely that science librarians are volunteering to reduce their staff size as a result.

Concluding Observations

A close study of the costs and benefits of electronic journal publishing from the birth of the World Wide Web would be a good thing to do. It would document, in a neutral way, the profound transformation of an important aspect of the national research effort. That there is likely to be as much change in the next 10 years as in the past decade does not obviate the need for the study. Such a study may help develop new strategies or evolve current ones for accommodating needs of scientists and scholars to report their findings, and for ensuring the long-term survival of the history of science, medicine, and technology.

University budgets are under considerable strain and will be so for at least several more years. Will the deals for access to all journals from a single publisher survive or will there be new deals for multiple titles, cut just right to fit true institutional needs?

Why should there not be a highly diffuse distribution scheme based on authors simply posting their articles on their own sites or on an archive such as the Cornell arXiv.org e-Print archive (formerly at Los Alamos National Laboratory), and letting Google or more specialized search engines bring relevant articles to readers on demand? Who needs all this expensive publishing apparatus anyway?

The answer lies partly in the strong need for peer review of content, expressed variously by most communities of science, and partly in the functions provided by good publishers that are valued and demanded by the scientific community itself. The e-journal survey mentioned earlier showed that the vast majority of respondents want, and have come to expect, a wide array of features that make articles relevant to their work easy to find and use in their regular searches. By implication, amid the constantly churning galaxy of new and old articles, readers focus their attention on a few journals whose editorial policies and content are known and trusted.

Providing relevant, reliable, and consistent levels of content in journals costs money. Highly distributed, diffuse STM publishing with sketchy peer review, dependent upon new search engines to replace the well-articulated scheme of thematic journals and citations in a multidimensional web of related articles, is a descent into information chaos.

Perhaps in the next decade the segments of STM publishing most at risk are the secondary publishers (the abstracters and indexers) and the tertiary publishers (those producing review and prospective articles long after the leading-edge researchers have made use of the most useful articles).

None of the alternative publishing experiments underway or about to get underway operates independently of a larger STM journal publishing establishment, and none operates without costs. Take the Cornell arXiv in particular. Although it is certainly an archive that many in physics, mathematics, and computer science go to first and constantly, it has had negligible effect, if any, in reducing the number of peer-reviewed articles published in these fields. It may have improved the articles by exposing them in preprint form to many readers, some of whom may have commented back to the author with helpful suggestions. But Physics Letters and Physical Review have continued to grow, have continued to publish peer-reviewed articles, and those articles have continued to be cited.

It also must be observed that some communities use and read preprints reluctantly. Although there are 200 articles in the British Medical Journal's Clinical Netprints, few have received online and public peer review from readers, and fewer, if any, have been cited in that form.

Finally, several experiments in journals that depend nearly entirely on fees paid by authors should be noted. The New Journal of Physics, for example, has published between 25 and 50 articles in each of its first five years of existence. But it has not yet had sufficient citations to be indexed by the Science Citation Index.

The change in the business plans of BioMed Central is instructive too. After trying author fees alone, it is selling memberships to institutions so that authors from member institutions do not have to pay for publications. But the membership fee is very high.

The Public Library of Science (PLoS) will enter this list with its first articles in the fall of 2003. It too will depend upon authors' fees for support of its operations. It is starting with an admirable $9 million war chest from the Moore Foundation. PLoS will charge $160 for print copies of its volumes.

The point of mentioning these efforts is that not one has done away with the costs of publishing. Someone always pays. And none of these journals with new business models has become self-sufficient. For the costs of peer-reviewed publishing in science, technology, and medicine to disappear, the requirement for peer review and the demand for thoughtfully gathered, edited, illustrated, and distributed articles must disappear too.

How the experiments in business models might provide competitive pressure on traditional business models and pricing is a topic for discussion and examination over time. Experiments should be tried, but in Michael Keller’s view, the solution to the serious crisis of escalating journal costs lies in the not-for-profit societies, whose purposes and fundamental economic model are very closely allied with the purposes and not-for-profit economic models of our research universities and labs.

COMMENTS BY PANEL PARTICIPANTS

Kent Anderson, New England Journal of Medicine

Kent Anderson provided comments from the perspective of the New England Journal of Medicine (NEJM), which resides at the interface of scientific research and clinical practice, and which has published continuously for 191 years. The journal mainly serves clinicians, physicians who take care of patients. It is a key translator and interpreter of new science to general and specialist physicians around the world. In many ways, the NEJM situation is unique: It is a large-circulation publication that relies on individual subscriptions, and it is owned by a small state not-for-profit medical society.

Mr. Anderson limited his comments to three areas: how the definition of “publication” is changing; how that change in definition is modifying how the NEJM conducts its business of getting the journal out week to week; and finally, some cautionary notes about conducting a study of publishing costs because of the diversity of STM publications and their users.

The Changing Definition of Publication

Publication used to refer to the act of preparing and issuing the document for public distribution. It could also refer to the act of bringing a document to the public's attention. These definitions served us well for more than 400 years.

Now, publication means much more. It now means a document that is Web-enriched, with links, search capabilities, and potentially other services nested in it. A publication may soon be expected to be maintained in perpetuity by the publisher. A publication now generates usage data.

For publications serving physicians, however, the NEJM has found that physicians are not willing to give up print. They are too pressed for time, and the print copy is too convenient for them. A recent publication by King and Tenopir supported this finding as well.7

The Changing Conduct of Publishing at the New England Journal of Medicine

The mission of the NEJM is to work at the interface of biomedical research and clinical practice. As medical and scientific findings become more complex and sophisticated, the journal invests in editors, writers, and illustrators who can analyze and interpret these findings for a clinical audience, so that its readers understand precisely how medicine is evolving.

The NEJM also supports significant costs for Web publishing, data analysis, and reporting. It develops new services at a rapid clip. And it pays for new publishing modalities, such as online-only publication, early release articles, free articles to low-income countries, free research articles after six months, and selected free articles online.

The journal’s educational mission has become more complex. It has invested heavily in new continuing medical education initiatives, new ways of illustrating and presenting articles, and new ways of helping users find what they are looking for. It is more important, and in some ways more difficult than ever, to know who the journal’s readers are. The staff can no longer look at the print distribution and point to it as the journal’s readership. The Web amplifies and simultaneously obscures the readership. As a result, investments in market research, Web data analysis and warehousing, and attendance at medical meetings with physicians have increased significantly.

Customer service demands have escalated, resulting in the need to develop new software and management systems, the hiring of new staff, and additional phone calls and mail. These service requirements are also adding to the cost of running a publication.

Online peer-review tools in parallel with paper systems have recently added a new layer of cost, with no apparent end to the investment, because periodic software upgrades will be necessary. The skill sets of the journal’s expert editors and workers also need continuous improvement to ensure that they can handle all the new inputs and maintain quality.

Marketing the journal’s new programs and services is another expense. The word needs to get out that there are new ways to access the journal’s information. E-mail systems are part of the marketing and distribution effort now, and these have to be supported, databases need to be developed and maintained, and e-mail notices consistently sent to existing and potential readers.

The pressures to be fast are growing, yet high quality must be maintained. The NEJM publishes information about health, and if it makes mistakes, they can be serious. Recently, the journal published a set of articles on SARS, the Severe Acute Respiratory Syndrome, within two weeks of receipt, as completely peer-reviewed, edited, and illustrated papers. They were translated into Chinese within two days of their initial publication and distributed in print in China by the thousands, in the hope that they would make a major difference. As such demands for faster publication mount, the journal will need to find ways to meet them without sacrificing quality, and this is already leading to significant investments in people and systems. Often in these discussions of electronic journal publishing, the people behind all this become obscured.

Issues in Conducting a Study of Journal Publishing Costs

If publishing costs are to be studied well, there has to be an acknowledgment of the diversity of the publishing landscape, even in the scientific, technical, and medical publishing area. If only a few publishers participate, the selection bias could drive the study to the wrong answers.

It is necessary to consider which cohorts need to be analyzed. The null hypothesis must be clearly stated. The questions to be asked must be properly framed and a reasonable control group selected. In short, a study of this nature could be valuable, but it needs to be well designed, rigorously conducted, and carefully interpreted.

Robert Bovenschulte, American Chemical Society

Electronic publishing has not only revolutionized the publishing industry but has also tremendously changed the fundamental economics of the STM journal business. Many of these issues affect both commercial and not-for-profit publishers.

What is driving this change? Costs are increasing very rapidly, much faster than they would have if publishers had stayed with print alone. Two obvious factors are responsible: the cost of new technology and the increasing volume of publishing.

Costs of New Technology

Along with this expansion of technology costs, publishers are delivering a much more valuable product to their users. Enormous new functionality is being made available to scientists. Access to information is swift and convenient, and it is improving productivity.

Technology now imbues all facets of publishing—from author creation and submission, all the way through to peer review, production and editing, output, and usage. The costs of the technology are not just related to the Web, but apply to all the other technical systems that publishers have to create and integrate. For example, the American Chemical Society (ACS) has 186 editorial offices worldwide for its 31 journals, and all of those offices have to be technically supported. ACS must develop new technologies that support the functioning of those offices and make them more productive.

In 2002, ACS conducted a study through the Seybold Consulting Group with 16 other publishers to assess the future of electronic publishing and, in particular, to better understand the cost drivers. Among these 16 publishers, both the median and the average share of costs going to the information technology (IT) function alone was 5.5 percent. Large publishers allocated less than 2 percent, and the ACS spent about 9 percent. Although larger publishers with much more revenue can obviously spend a smaller percentage on IT, they nonetheless far outspend medium-sized and smaller publishers in the total amount they invest in their IT operations. In aggregate, the 16 publishers predicted an average annual increase of 21 percent in their IT spending for the foreseeable future.
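The gap between percentage shares and absolute spending can be illustrated with two hypothetical publishers. The budget figures below are invented for illustration and are not from the Seybold study; only the shares (under 2 percent for large publishers, 5.5 percent as the median) and the predicted 21 percent annual increase come from the text.

```python
import math

def it_spend(budget: float, it_share: float) -> float:
    """Absolute IT spending for a given total budget and IT share."""
    return budget * it_share

# Hypothetical budgets, for illustration only (not from the study).
large_it = it_spend(1_000_000_000, 0.02)   # large publisher, at most about 2 percent
medium_it = it_spend(100_000_000, 0.055)   # median share reported in the study

print(f"large publisher IT:  ${large_it:,.0f}")
print(f"medium publisher IT: ${medium_it:,.0f}")

# Doubling time implied by the predicted 21 percent annual increase.
years_to_double = math.log(2) / math.log(1.21)
print(f"IT spending doubles in about {years_to_double:.1f} years")
```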

The Increasing Volume of Publishing

The ACS has gone from publishing 15,000 articles in 1993, to 23,000 in 2002—a 53 percent increase over that 9-year period. Over the past 2 years or so, the ACS has experienced double-digit increases in submissions. This appears to be largely spurred by the fact that online submission makes submitting an article even easier than before. Moreover, the ACS is receiving a much larger fraction of its submissions from outside the United States.

During this same 9-year period, total costs of publishing at the ACS increased 64 percent, versus the 53 percent increase in articles published. But the cost per article published increased only 7.4 percent. In 1993, for every article the ACS published, it cost $1,712. In 2002, the cost was $1,838. Therefore, there has been quite a significant gain in publishing productivity at ACS, and probably at other publishers, attributable in large measure to technology. Although the technology does cost a lot more, it seems to produce efficiencies.
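The reported percentages can be cross-checked from the article counts and per-article costs. Multiplying them reconstructs approximate total costs (the totals themselves are not given in the text, and the article counts are rounded), so the derived total growth is close to, but not exactly, the 64 percent reported.

```python
articles_1993, articles_2002 = 15_000, 23_000
cost_per_article_1993, cost_per_article_2002 = 1_712, 1_838   # dollars

article_growth = articles_2002 / articles_1993 - 1
per_article_growth = cost_per_article_2002 / cost_per_article_1993 - 1

# Approximate totals reconstructed from rounded article counts.
total_1993 = articles_1993 * cost_per_article_1993
total_2002 = articles_2002 * cost_per_article_2002
total_growth = total_2002 / total_1993 - 1

print(f"articles published: +{article_growth:.1%}")
print(f"cost per article:   +{per_article_growth:.1%}")
print(f"total cost:         +{total_growth:.1%}")
```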

Digital Repositories

The ACS was one of the first publishers to create a full digital archive, PDF form only, of all of its journals. This entailed a very large upfront cost. In the future, it is likely that preserving the journal literature could be the responsibility of both the library community and the publishers.

Michael Keller’s notion of trying to move toward one system has a lot of merit. That is not going to be easy to achieve, however. In the interim, the publishers, especially the not-for-profit publishers, feel a very strong obligation to preserve that digital heritage. The end users, and particularly the scientists who write for ACS journals, are not ready to give up print. And, because of the preservation issue, most librarians are not yet ready to give up print either.

Once print versions are eliminated, there might be savings of 15, 20, or 25 percent of costs. Ending print versions of journals is certainly a worthwhile goal. The position at the ACS is to do nothing to retard the rate at which the community wants to dispense with print. The ACS would be happy to reduce its price increases, possibly even hold them flat, or even provide a small reduction during a period when the print versions are being eliminated, as a way of returning those savings to the community. The concern is that in very short order, with the rising volume of publication, the costs of handling so many more articles will wipe out whatever transitory gains there may be from saving on print.
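
The concern that rising volume will erase print savings can be made concrete with a simplified model. The sketch below assumes, purely for illustration, that article volume keeps growing at the 1993-2002 ACS pace (15,000 to 23,000 articles over 9 years, about 4.9 percent a year) and that cost per article stays flat; it then asks how long a one-time saving takes to be offset. Since cost per article actually rose slightly over that period, these figures would, if anything, overstate how long the savings last.

```python
import math

def annual_growth_rate(start: float, end: float, years: int) -> float:
    """Compound annual growth rate between two observations."""
    return (end / start) ** (1 / years) - 1

def years_to_offset(saving: float, growth: float) -> float:
    """Years until total cost returns to its pre-saving level.

    Assumes cost per article stays flat, so total cost grows with article volume.
    """
    return -math.log(1 - saving) / math.log(1 + growth)

g = annual_growth_rate(15_000, 23_000, 9)  # ACS article counts, 1993-2002
for saving in (0.15, 0.20, 0.25):
    print(f"{saving:.0%} saving offset after {years_to_offset(saving, g):.1f} years")
```

Under these assumptions, a 15, 20, or 25 percent saving is offset after roughly 3.4, 4.7, or 6.1 years, respectively.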

Bernard Rous, Association for Computing Machinery (ACM)

Reasons Why the Costs of Electronic Publishing Are Poorly Understood

The costs of electronic publishing are not really well understood at all, and there are some very good reasons why this is the case. First, as has already been mentioned, electronic publishing is not a single activity. One can take PDF files created by an author and mount them on a Web server with a simple index, and that is electronic publishing of a sort. Or one can manage a rigorous online peer-review tracking system, convert multiple submission formats to a single structured-document standard, do some rigorous editing, digitally typeset and compose online page formats, apply style specifications to generate Web displays with rich metadata supporting sophisticated functions, and build links to related works and associated data sets integrated with multimedia presentations and applets that let the user interact and manipulate the data. This too is electronic publishing. It is miles apart and many decimal points away from the first approach.

Second, electronic publishing costs remain fuzzy because we are still living in a bimodal publishing world. Even some direct expenses arguably can be charged to either print or electronic cost centers. Where a publisher puts them often depends on the conceptual model of the publishing enterprise. On the one hand, if the publisher looks at online offerings as an incremental add-on to the print version, then more costs will be charged to the print version. On the other hand, if the publisher considers the print version to be a secondary derivative of a core electronic publishing process, more costs are likely to be charged to the digital side. When it comes to the indirect costs of staff and overhead, the same considerations apply, with perhaps even greater leeway because of the guesswork that is involved in these types of cost allocations.

Third, the decisions to charge costs to a print or to an electronic publication are part of a political process. There are times when you want to isolate and protect an existing and stable print business, so you attribute any and all new costs to the digital side. You may also want to minimize positive margins on the digital side to avoid debate over the pricing of electronic products. At other times, the desire to show that the online baby has taken wings, is self-sustaining, and has a robust future can tilt all debatable charges to the print side. This is not to say that the books are being cooked. It is just the way you look at the business that you are running.

Fourth, it is very difficult to compare print and electronic costs, because the products themselves are not the same. The traditional average cost per printed copy produced is meaningless and very hard to compare to the costs of building, maintaining, operating, and evolving a digital resource as a single facility.

Fifth, accounting systems sometimes evolve more slowly than publishing processes do. New costs specific to online publications are sometimes dumped into pre-existing print line items.

Sixth, electronic publishing has not reached a steady state by any means. There is still lots of development going on, some of which lowers costs, and some of which raises them.

These are some of the reasons why the costs of electronic publishing remain somewhat obscure and also why a study of those costs would be both very difficult to carry out and very important to attempt.

Unanticipated Costs of Electronic Publishing

It is also instructive to mention several components of electronic publishing costs that ACM did not fully anticipate when it went online. First of all, customer support costs have been far higher than under the print paradigm. Not only is there a larger volume and variety of customer complaints and change requests, but the staff knowledge and expertise required to answer them are much more expensive.

The cost of sales is higher. The product is different, and the market is shifting. ACM no longer sells title subscriptions but rather licenses access to a digital resource. The high price tag for a global corporate license or for a large consortium means that more personal contact is required to make the sale. Furthermore, such licenses are not simply sold. They are negotiated, sometimes with governments, and this requires much more expensive sales personnel.

Digital services are also built on top of good metadata, and metadata costs are high. The richer the metadata, the higher the costs.

Subject classification is costly as well. The application of taxonomies is a powerful tool in organizing online knowledge. The costs for building what has been referred to as the “semantic Web” are still largely unknown. There are a surprising number of opportunity costs. There are so many new features, services, and ways of visualizing data and communicating knowledge. There is a lot more work that can be done than has been done so far.

Finally, some upfront, one-time investments in electronic publishing turn out to be recurring costs, and some recur with alarming frequency. For example, ACM is in the middle of its fourth digital library interface release since 1997.

Gordon Tibbitts, Blackwell Publishing, USA

Is there a cost-tipping point in e-journals, that is, the place where all of a sudden the costs will dramatically drop, and there will be a new golden era of electronic publishing?

There are two classes of commercial publishers that need to be considered in this context. One category of publishers provides publishing services for professional societies. The other type of publisher owns most of the material it publishes. Figure 3-1 compares the current print plus electronic publishing costs for these two types of for-profit publishers. The major difference between the two is that society-oriented publishers pay a lot more back to the societies (profit shares, royalties, stipends), because the societies are the gatekeepers for that peer-reviewed information. The other publishers keep most of their profits.

FIGURE 3-1. Current Print + e-Costs of For-Profit Publishers (% of Sale)

There is another point worth mentioning: an issue of scale. In the marketing and sales statistics in Figure 3-1, there is a difference of about four percentage points between the two types of publishers, but the publishers that own most of their content have billions of dollars in revenues, so those four percentage points translate into massive marketing budgets.

Figure 3-2 presents the current electronic journal cost components. The pie chart on the left looks at “e-incremental costs,” or those things that could be separated out as being purely new costs that the business is incurring that are electronic. Many of the societies have a fundamental bylaw in their charter to disseminate information, sometimes for free. Nonetheless, there is a cost to that, which is quantified under “marketing” at about 7 percent.

FIGURE 3-2. Where is the cost tipping point?

Consortium selling is a brand-new activity. Publishers have to negotiate with governments and large institutions, and selling a journal to large consortia costs quite a bit of money, perhaps 13 percent of the e-incremental costs.

The biggest cost in Figure 3-2 is content management. This includes many people whose job is to get the information, organize it, and disseminate it. This new dissemination can be through Web sites, Palm Pilots, libraries, and online systems. Of course, this is not new; this is the information business. In an information business there are three primary costs: developing the information, maintaining it, and operating the systems that deliver it. Every one of the new e-incremental costs has those components. You do not build something once. You go through versions 1, 2, 3, 4, and 10. Microsoft knows that very well; it keeps upgrading its versions. There is an ongoing requirement to pay licenses, and ultimately humans are needed to actually operate these systems.

Figure 3-3 provides some diagrams of relative print (P) and electronic journal (e) costs. The Venn diagram on the far left shows there is an intersection between P and e costs. There are some costs that are purely print or electronic. Perhaps, since there is a lot of intersection of costs, there will be a collapsing of the cost structure of the electronic journal, resulting in a small p (small cost in print) and a small e (a small cost in electronic).

FIGURE 3-3. Schematic Diagrams of Scenarios of Relative Print (P) and Electronic (e) Journal Costs

More likely, the current situation has a large print cost and a small electronic cost. Or, as Bernard Rous put it, you could play accounting games, and you could actually go with a little p and a big E depending on what you are trying to accomplish on any particular day. Unfortunately, Gordon Tibbitts thinks that a big P and a big E are the most likely outcome.

Some trends, both positive and negative, that affect cost include the number of articles, supplementary data, back issues, customer support, new online features, speed requirements, archive/repository alternatives, and new business entrants. Technology trends are driving costs down, for example, Microsoft Office 2003, Word 11, and PDF+. Other downward trends include the number of individual subscribers (in favor of consortia), the amount of money everyone has to spend, and perhaps flagrant pricing. Institutional prices are rising rapidly in response to the shift to online publishing, the drop in individual subscribers, and "acknowledged" business risks. The complexity of layout, graphics, and linking increases costs, as do the need for speed, the breadth of content, and quality requirements.

Rather than a cost-tipping point, there is a cost-shifting point. It is shifting away from print toward electronic. We will see a lessening in the big P over time, but a big E will take its place. There will not be any savings.

If we are to achieve any cost savings, we have to stop trying to invent the moon landing. We do not need very complex systems. Some of the information just needs to get disseminated. Some technologies are simpler and more widespread, and we probably should embrace them to keep costs in check. Reducing the number of new versions of the same basic application is another way to control costs.

Finally, and most importantly, when many publishers get together and share standards and archiving, and collaborate, that is certainly a very good way to reduce costs across the board.

DISCUSSION OF ISSUES

What Would a Study of Journal Cost Accomplish?

Robert Bovenschulte began the discussion by noting that doing studies of electronic publishing costs has major definitional and methodological challenges, although that is not to say they cannot be done. If such a study were done perfectly with perfect information, what would one then do with the study?

Michael Keller said that the answer would depend on the position of the user. As a chief librarian responsible for managing a fairly substantial acquisitions budget and for serving many different disciplines, he would hope that such a study would affect the responses of the library community as a whole to these problems. The responses so far have been so fragmentary that librarians have not influenced the situation as effectively as they might.

From the perspective of an Internet service provider, that kind of study would help in the understanding of the comparative benefits or detriments of the various methods and operations, and cause Internet service providers to become more critical in some ways than perhaps they are at the present time.

As a publisher of books for Stanford University Press, Mr. Keller hopes constantly for some magic bullet that would help him take control of those costs, to better attain a self-sustaining posture. He thinks, furthermore, that it is very important for the research community to have a common understanding of what the problem set is. He would expect them, therefore, to elevate the level of discourse to something a lot more scientific than it has been to date.

Moving from Print to Electronic Versions

Bernard Rous noted that several of the panel comments implied that if only the publishers could get rid of print, there would be huge savings in the publishing system. There is some truth in that, but although the publications of the ACM are rather inexpensive, there is a margin on the print side of the business that the society cannot afford to do without. As such, the demand for print versions comes not only from some customers, libraries, and users, but also from the publishers themselves, since the profit margins realized on the print side are actually necessary for them to stay in business.

Michael Keller responded that in observing the information-seeking and information-using behaviors of his 15-year-old daughter and her cohort, he has seen an almost total ability to ignore print resources. Although they certainly read novels and sometimes popular magazines, when they are writing a paper, most of them use the Internet entirely. Regular features of these papers now include media clips, sounds, and various graphics.

It will only be 10 years before that cohort is in graduate school. Another five years after that, some of them will be assistant professors heading for tenure. The students at Stanford are already driving the faculty in the same direction, and a great many of the faculty have figured it out as well.

Mr. Keller agreed completely with Kent Anderson’s point that, for a variety of reasons, there are hosts of readers in some communities for whom online reading and searching are not presently good options. Yet for many research communities, especially in the basic sciences that advance rapidly—such as physics, chemistry, mathematics, biology, and geology—there is real promise for removing the print version of journals altogether. That does not necessarily mean, however, that there will be a total removal of those paper, printing, and binding costs.

Robert Bovenschulte noted that the ACS adopted a policy five years ago of encouraging its members to stop subscribing to individual print journals and instead to obtain them online through their institution's subscription. The ACS creates a significant pricing differential between print and Web subscriptions, the electronic ones obviously being much cheaper. About three years ago the ACS created an alternative to the print-plus-Web price: a Web-only price, with print available as a low-cost option.

This approach was pioneered by Academic Press. This model has a lot of merit, because it makes publishers think about what the true costs are and what margin they need to sustain the business economically, rather than getting caught up in the fact that the reporting system tends to indicate that the print version is profitable.

Michael Keller added that the American Society for Biochemistry and Molecular Biology (ASBMB), when it first came out with the Journal of Biological Chemistry (JBC) online, selected two separate models, one based on the price for the print subscription, and a separate lower price for the electronic-only version on the grounds that they did not have to print on paper. They were trying to encourage institutional subscribers in particular to migrate over to that electronic-only version.

Floyd Bloom next raised a question from a Web listener, who asked why, if the British Medical Journal (BMJ) does everything that the New England Journal of Medicine (NEJM) does and is available to the public without charge, the NEJM cannot be made similarly available.

Kent Anderson responded that the BMJ sees the future in a different way than the NEJM. The NEJM provides a lot of free access for people who truly cannot afford it, people in 130 developing countries, and its research articles are free upon registration after six months. Nevertheless, he thinks the BMJ experiment is worth watching. They have a national medical society that has the means of supporting their publishing efforts, so they are able to conduct this experiment. The NEJM has chosen a path that relies on knowing that it is publishing information that people find valuable enough to pay for.

The Massachusetts Medical Society has 17,000 members in Massachusetts, but the circulation of the journal in print is between 230,000 and 240,000. The exact readership is hard to estimate, however. Mr. Anderson thinks it is somewhere between 500,000 and 1 million readers per week.

Dr. Bloom added that the British Medical Association, the publisher of the BMJ, has a required membership for anyone who wishes to practice medicine in the United Kingdom. Therefore, they have a huge subscriber base whether they sell it or not. They also have other publications for which they charge.

Digital Archiving Issues

Floyd Bloom asked Mike Keller which organization, if a true digital archive were achieved and allowed us to diminish our reliance on print, would be responsible for reaching agreement on the taxonomy and terminology for that kind of archiving.

Mr. Keller responded by reiterating that the support for the publishing industry does not come from individual subscribers, who receive their copies, whether online or in print, basically at the marginal cost of providing them. The real support for the publishing industry comes from institutional subscriptions from libraries and labs. It is the libraries and labs that are most unwilling at this time to give up paper, because no one has a reliable way to store bits and bytes over many years and over many different changes of operating systems, applications, data formats, and the like. When this problem is resolved, the libraries will be challenging the publishing industry to get rid of paper, or at least not deliver those costs to them.

So, who is going to do it? There are efforts underway in Europe. For the past couple of years, the Library of Congress has managed a big planning program, the National Digital Information Infrastructure and Preservation Program, with sustained congressional funding to support several different experiments around the country. There also is funding from the National Science Foundation (NSF) for the digital library initiative, and some of that money has gone to projects like LOCKSS. Finally, of course, there are industry segments that are quite interested in this. There are suppliers of content management systems, metadata management systems, and magnetic memory anxious to get involved.

In the next year, there will be some initial efforts to demonstrate the viability of these. We are going to have to simulate the passage of time and of operating systems and so forth, but the reliability of digital archiving can be improved. Within five years we will see general acceptance of some methods. Mr. Keller does not think that there will be a single system, but rather an articulated system with many different approaches.

Bruce McHenry, Discussion Systems, noted that all of the panel members have publications where peer review is the crucial distinction from simply having authors publish to the Web themselves. He asked each panelist to describe the unique parts of their process, the best and the worst things about it, and how they would like to see it improved.

Kent Anderson said that the peer-review process at the New England Journal of Medicine is rigorous. First, internal editors review paper submissions as they come in and judge them for interest, novelty, and completeness. If they move on from there, they go out to two to six external peer reviewers.

When those reviews come back, they are used to judge whether the paper will move forward from there. If it does, it is brought before a panel of associate editors, deputy editors, and the senior editors for discussion, where the paper is explained, questions are asked, and it is judged by that group, usually during a very rigorous discussion.

Then it is decided whether it moves on or not. If it does move on, it goes through a statistical review and a technical review. The queries are brought to the author, who must answer them or the paper does not move forward. The peer-review process lasts anywhere from a few weeks to a few years, depending on the requirements. Sometimes the NEJM asks the authors to either complete experiments or to give additional data.

As far as weaknesses in the peer-review process, or ways it could be improved, Mr. Anderson thinks that one of the concerns now is that the time pressures in medicine are so great that finding willing peer reviewers is increasingly difficult. The situation could be improved if the academic community understood the value of this interaction in the scientific and medical publishing process and reflected that value in its reward system, so that people continue to devote the time needed to peer review.

Dr. Bloom added that as the number of submissions has risen, the number of people available to provide dependable reviews of those articles has not increased. So publishers are calling upon the same people time and time again to provide this difficult but largely unpaid service.

Robert Bovenschulte said there is a lot of commonality at the ACS with what Kent Anderson described, except that ACS publishes 31 journals. The editor-in-chief of each journal has some number, in some cases a large number, of associate editors. These are typically academicians scattered around the world in 186 offices. They basically handle anywhere from 250 to 500 manuscripts a year. This is an incredible load, and they do not have the second level that Kent Anderson described, where the editors meet and decide. That is done by an associate editor under the general guidance and policies of the editor-in-chief. The rejection rate is about 35 percent, although it varies from journal to journal. As more articles are submitted, there is additional work and costs. New associate editors and new offices need to be added in order to handle that load, even if the rejection rate goes up.

Finally, he agreed with Kent Anderson's observation that it is harder and harder to persuade people to serve as editors-in-chief, associate editors, reviewers, or members of editorial advisory boards. They simply do not have the time. The best people are in enormous demand. The Web helps and has the benefit of speeding everything up. But there is the potential for a meltdown of the system in the next five years or so, particularly for those journals that are not seen as quite as essential to the community as others.

Bernard Rous next pointed out that the ACM publishes several genres of technical material, and each receives a different type of peer review. The review of technical newsletters is generally done by the editor-in-chief.

Conference proceedings in computing are extremely important, and there is a very different review process for them. There is usually a program committee, and each paper is accepted or rejected upon review by any number of people on that committee, depending on the conference. The rejection rates for certain high-profile conferences are even higher than in some of the equivalent journals in the same subject area. There is no author revision cycle in the peer-review process for conference proceedings, and there is no editorial task.

The ACM also publishes magazines, where the material is actually solicited. There are very few submissions that come in over the transom, so there is a different review process there, and the emphasis is not on originality of content. The material is being written for very wide audiences, so there are different editorial standards. There is a heavy rewriting of these articles in order to achieve that, and that can be very expensive.

Finally, the ACM has about 25 journals that follow the typical journal review process, rigorous and independent review, generally using reviewers, with an author revision cycle and edit pass.

Gordon Tibbitts noted that Blackwell publishes 648 journals, and the primary component of all of them is that they are peer reviewed. Because it has so many, Blackwell interrelates the societies; it constantly commingles boards and introduces the editors of journals to one another, so that there is a good cross-section of board membership, not just nationally but all around the world. That is an added value that Blackwell brings to the process.

Blackwell also has spent considerable effort in automating the electronic office. The publisher has partnered with several other firms that work with electronic editorial office management systems, which has really facilitated a better, faster peer-review process.

The final important characteristic is that Blackwell pays its editors. The publisher has been pressured quite heavily for many years now, and it has given in to that pressure. There is a need to pay editorial stipends to the people who are working hard on the journals. It does drive profits down, but it also retains the editors. Many of these people already have five or six jobs (as chairs of medical institutions, authors, practicing surgeons, and professors), and the stipend provides them with some extra funding so that they can hire support staff and things like that.

e-Books Different from e-Journals

Dr. Mohamad Al-Ubaydli, from the National Center for Biotechnology Information (NCBI), asked why electronic books are more expensive than books in print if electronic journals are cheaper to produce than the print version. Is there a difference in the cost or is there a marketing issue?

Gordon Tibbitts responded that although journals and books are similar, they are different product lines. The unit cost in books, and elasticity of that demand, determines the pricing. There are more people sharing e-books freely, even though they should not be. The past three years have seen eight bankruptcies in large organizations that have tried to make money publishing books online. So, it is a very dangerous business area, and people do charge more for the online versions because they realize they will get fewer unit sales. Michael Keller added that customers might be charged more for an electronic book because it can do more things, such as better searching, moving pictures, or other functions.

There are other business models for e-books, however, that include reading and searching for free, paying on a page rate per download or printing, or paying a monthly charge of $20 or so to get access to tens of thousands of books. It is an industry in development and different from the science journal business.

Difficulties in the Transition from Paper to e-publishing

John Gardenier, a retired statistician from the National Institutes of Health (NIH), commented that the electronic medium is going to require a major reconceptualization of the entire process of demand, supply, process, technology, and so forth. One of the most significant changes has been the transition from seeking copyright protection for the expression of facts and ideas, to seeking protection and ownership of the underlying facts themselves. This became a very serious issue in 1996, with the enactment in the European Union of the directive on the legal protection of databases. It has been a continuing battle ever since in the United States.

If the flow of scientific information is restricted either by cost or by legalities, the capability of a society to continue to generate technological innovation diminishes, and, therefore, it becomes disadvantaged relative to what it was before, and possibly to other societies. The reason this pertains to this session is that it is going to take new sources of money and new sources of ideas in order for the not-for-profits at least, and perhaps the for-profits as well, to complete the transition to electronic publishing, while maintaining academic freedom and innovation capability.

A lot of that money can come from sources that have not yet begun to be tapped. The NSF was mentioned, but industry has a huge stake in this as well: not just manufacturing but service industries, information industries, and so forth. We, as a community, need to do a better job of outreach to industry and of enlisting its help in resolving these problems.

Michael Keller responded with two points. First, the STM publishing community in general has gone a long way in its transition to electronic publishing. Second, a stable position cannot be achieved. There will continue to be changes in delivery, in possibilities for new kinds of reports, and in distribution that will mean a great deal to the scholarly community. These publishers already have been quite innovative and have moved a long way from where they were 10 or 20 years ago.

Cost-Containment Strategies

Fred Friend, representing the Joint Information Systems Committee in the United Kingdom, said there was a consistent message from the publishers of the panel that their costs are increasing. What was not discussed was any strategy for dealing with that situation apart from just a few positive comments by Gordon Tibbitts. If the only strategy is to increase the subscriptions paid for by the libraries and laboratories, then there is no future in that. If the publishers are to survive, they must have a strategy for coping with increasing costs.

Bernard Rous responded that the panelists may have created a misimpression since they were addressing costs somewhat in isolation. Costs need to be taken into account together with access and with pricing. He believes that the cost per person of accessing the body of research has plummeted dramatically in the electronic context, even with the increasing costs.

Robert Bovenschulte added that the system is the solution and that we are beginning to see real economies now. He documented some of these in his opening remarks, such as using technology to make staff more productive or dealing with fewer staff to do an increased amount of work. Unless publishers continue to drive the technology to help them be more efficient, however, they will not get out of the box in which they are in danger of being trapped.

Gordon Tibbitts thought that publishers probably should not be in the archiving business. Libraries are probably better positioned to do that. Publishers are innovating in ways that certainly are creative and are making them money, but in some cases their innovations probably should be shared, rather than remaining proprietary.

There are many expenses publishers incur because they are still thinking in terms of printed copy and subscriptions, and not thinking of products. When publishers realize that their value-added might be in the branding of the society, their assistance to the peer-review process, and the move away from some of these technological innovations, the costs will go down, and they can then charge less. The STM publishers are still in the infancy of learning who they are.

There are many different ways of collaborating that can bring costs down dramatically, for example, open-access and shared versions of various types of search engines and online systems. There is no reason why publishers have to have proprietary online systems. Those are the kinds of things that, if shared collectively with funds from grant organizations, governments, publishers, and societies, can build a better environment.

Publishers do have value. They have value in their ability to facilitate the scientific process. They are collectively connected to more of the distribution channels, so there are economies of scale. And they add a lot of value in funding and innovating. Blackwell and other publishers invest in projects that do not have a payback for 15 years, or perhaps ever. New societies are born, new science is funded, and that is something publishers add to the community.

Michael Keller said that his solution is very different. Libraries should not necessarily support all scholarly communication efforts that are brought to them. He thinks it is irresponsible for libraries to subscribe to the big package deals in which fully 55 percent of the hits in those deals come from 15 percent of the titles. The small societies that want to publish should do so and should be supported in that. The incredibly elaborate overhead that a lot of publishing now entails is unnecessary.

The Cornell arXiv and similar efforts provide one possible solution to this problem. It would be fine if 50 percent of all the scientific journals published today disappeared. Articles that have been through a cascade of rejections from journals could go into preprint archives and receive recognition later through the number of times they are cited. The goal would be to cut the flow of formal publications by half.

Kent Anderson observed that this involves some risk. It is difficult for a publisher to know whether it is adding enough value to make a difference, so that its investments will pay off. There may be strategies for containing these new costs and bringing them in line, but at the NEJM the hope is that the journal is adding enough value that, over time, the small risks it is taking in multiple channels will pay off.

Vulnerability of Secondary and Tertiary Publishers

Jill O'Neill, of the National Federation of Abstracting and Indexing Services, noted that during his presentation Michael Keller had suggested that secondary and tertiary publishers are perhaps more vulnerable simply by virtue of what they do. Yet he had also expressed concern that the next generation of scientists is not well enough equipped to know where to find research resources that lie outside the Internet publishing environment. She asked whether the problem is that the secondary services do not add enough value, in which case they should focus more on information competency and teach younger researchers how to conduct appropriate searches, or whether they instead need to develop better mechanisms for searching and retrieving precise answers from this huge corpus of literature.

Michael Keller responded that he supports secondary and tertiary publishing and has himself contributed to secondary publishing. He believes that secondary publishers in particular are vulnerable because they are being overtaken by broad general search engines and by the aggregation of peer-generated information about what is being used. This is the sort of feature you encounter when buying a book on Amazon.com: you select the book, put it in your cart, and go to check out, where you are told that there are six more books that other buyers of this book have found very interesting. That sort of function could overtake the secondary publishers.

The secondary publishers have to find some ways of being more effective, more precise, but also more general. They have to look for many different approaches, and some of this must be done automatically. However, if we end up with a highly distributed and diffuse situation in which authors place their contributions on individual servers, then there will be a huge role for secondary publishing.

Bernard Rous added that he thinks the secondary publishers, as they are now, are vulnerable, but not because secondary publishing is vulnerable. What is currently happening is that primary publishers, in order to go online, have to create secondary services. They are in the business of collecting all of the metadata that they can, and presenting those as a secondary service that lies on top of full-text archives. Secondary publishing is merging with primary publishing, especially in the aggregator business.

Electronic Publishing by Small and Mid-sized Societies

Ed Barnas, journals manager for Cambridge University Press, noted that the panel basically represented large publishers, which enjoy certain economies of scale in production and in developing their technology systems. He asked the panelists to address the costs of converting from print to electronic publication for mid-sized societies that publish their own journals, and for mid-sized publishers, because those costs are a considerable concern for them.

Gordon Tibbitts responded first that, with innovations from commercial vendors such as Adobe and Microsoft, and with simple services such as e-typesetting in China, India, and elsewhere, costs will fall for small and large publishers alike. He also advocated simpler, standard formats that all publishers agree to adhere to, and the removal of the barriers posed by very complex Web sites, which are out of reach even for publishers the size of Blackwell.

Kent Anderson offered the thought, just to be provocative, that it may be even riskier for a mid-sized society to stop delivering print. The situation there may be harder to change, because it is perceived by members to be such a strong benefit.

Having completed two terms as chair of the publications committee for the Society for Neuroscience, a 30,000-member society, Floyd Bloom noted that in 1997 the society's president decided that online publishing was the way to go, and the society's journal was one of the early journals to join the HighWire group. Within 2 years the individual subscriber base fell by 93 percent, but readership rose by nearly 250 percent, and members all over the world were thrilled to receive their information as soon as members residing in the United States did. The society recognized, however, that the cost of doing so was considerably more than an initial one-time cost. Therefore, a portion of the dues, at least for the present, has been earmarked specifically for the support of the electronic conversion. Dr. Bloom then asked whether anyone in the audience wished to comment on behalf of a society with 3,000 to 10,000 members.

Alan Kraut, of the American Psychological Society (a 13,000-member society, but a new organization, only 10 years old), said that the society publishes a couple of journals. Over its brief lifetime the society has several times tested in considerable detail whether it could publish its own journals online, and it recently decided that it cannot. The risks, particularly for a small organization with modest reserves, are simply too great. The society remains a very satisfied partner of Blackwell Publishing.

Martin Frank, of the American Physiological Society (about 11,000 members), noted that the society publishes 14 scientific journals, none of which has a circulation close to that of the New England Journal of Medicine. The society has been publishing electronically for about 10 years, beginning on a Gopher server back in 1993.

Small societies like the American Physiological Society can manage to publish electronically because of the compounding effects of the information exchange that takes place at their meetings with HighWire Press. He does not think the society could have done it alone technologically. The partnership among nonprofit STM publishers that have come together with HighWire has made it possible for the society to publish all its journals electronically.

Donald King, of the University of Pittsburgh, commented that if you look at overall publishing costs and divide them by the number of scientists, publishing costs seem to be going down. Measured against the amount of reading, they are going down even more, because scientists seem to be reading more per person. One of his concerns is that publishing has traditionally been a business in which some portions of each publishing enterprise subsidize other portions. In any given journal, some articles are not read very much. There may also be very high-quality journals that have only a small audience. And within a publisher's suite of journals, some journals are subsidized by others. In designing the future system, it must be recognized that most articles may have only a small audience, while the fixed costs are the same for all articles. The system will still need to satisfy the demand for all of them.

The Costs of Technological Enhancements

Pat Molholt, of Columbia University, noted that all of the panelists had said that publishers' IT costs are rising, and she questioned whether those costs have to rise across the board. Why not offer both a vanilla version, which may in fact satisfy many readers, and an enhanced version for those who need an animated graphic or other added functions and would pay an additional fee for them? The technology could then be developed collaboratively, so that a standard plug-in is available when needed; if it becomes important to the article being read, it can be invoked, but it would not have to be paid for all the time.

Robert Bovenschulte responded that this is an excellent point, and it is one of the questions that the ACS has wrestled with over the past four or five years in its strategic planning—questions such as how many innovations are needed and what costs should be introduced. He has seen some anecdotal research suggesting that what the scientist who is reading really values is the search capability, rapid access, and linking. Regarding the linking function through cross-referencing, there is now an industrywide, precompetitive endeavor that has great value.

The various technological enhancements can have value, however, for individual communities and for editors. There is pressure on the publishers to do something to compete with what other publishers have done. The publishers are competing less for the library dollars than they are for the best authors. Of course, that applies to editors and reviewers as well.

On the other hand, many of the recent innovations that tend to drive up the IT costs are used very little and are not of great value. However, it is very hard to predict which innovations will prove valuable in advance. Moreover, even an innovation that is not much used today may turn out to be one that is very valuable 5 or 10 years from now. So, the publishers are all experimenting and trying to stay on the learning curve. If you get off the learning curve as a publisher, you are in deep trouble competitively.

Kent Anderson added that the NEJM consistently provides new technological upgrades or services in response to its readers’ comments, and, in some cases, based on a little bit of guesswork.

Although some of these new technological capabilities are not heavily used, more often than not they take off and attract thousands of users. The journal then has to support the application, iterate on and improve it, and find out what it reveals about other needs its customers may have. He has been surprised at how much demand there has been for new applications.

Michael Keller pointed out that when hyperlinking in online journals began in 1995, no one had done it before, and now it has become an absolute standard. The same is true of inserting Java scripts, run with commonly available plug-ins, that show the operation of ribosomes. People began to use them. Some of these technologies that are developed on the margin eventually become quite popular.

Bernard Rous said that it is difficult to decide which innovations to pursue and which to leave aside, but the suggestion of differential service levels also presents problems. The ACM wanted to maintain a benefit for membership in the organization, as distinct from institutional subscriptions, so it began differentiating levels of service depending on whether someone was a member or a patron of an institution that licensed an ACM journal. But differentiating service levels adds real cost and a great deal of complexity to the system. It also complicates matters for end users, and it makes it hard for the society to explain the differences to its various users.

Finally, Lenne Miller of the Endocrine Society pointed out that while most of the participants in this session are publishers, they all face different business circumstances. For example, the Endocrine Society has four journals and 10,000 members. The society is rather tied to its print clinical journal because of the $2 million per year of pharmaceutical advertising revenue it generates. On the other hand, the Endocrine Society earns far less revenue from its annual meeting than, for example, the Society for Neuroscience does. So there are different business models and different business circumstances, and these must be kept in mind.