2

Human-Systems Integration Issues for UASs and Automation Technologies

This chapter covers two panel discussions on the integration of Unmanned Aerial Systems (UASs) into the National Airspace System (NAS). Both sessions responded to the following question and guidance from the steering committee:

What human-systems integration considerations are paramount as this evolution of new UAS and automation technologies into the NAS takes place? This session will review the state of research on human-automation interaction and considerations for operating UASs in the NAS. In addition, this session will also offer suggestions for supporting pilot situation awareness and UAS integration in the NAS and research needs to support these operations.

In the first session on this topic, Kim Cardosi (Volpe Center) began with the observation that the big-picture concern is how well any automation will work within the NAS. Her main points included the need to recognize basic human limitations in three ways: (1) pilots of manned aircraft and controllers cannot be reliably expected to see and avoid small UASs, (2) UAS operators will make mistakes, and (3) surprises lead to distractions that can contribute to errors. Controllers have suggested that automated tools could help meet their information needs, and pilots have urged that UASs carry transponders, but she noted that controller scopes cannot handle every UAS with a transponder.

Cardosi identified a range of UAS errors (such as flying at the wrong altitude or entering airspace without proper authorization) and emphasized two common themes emerging from safety reporting systems: the need for better information and the need for predictable UAS operations. She said pilots have recommended increased Federal Aviation Administration regulation of drone operations in controlled airspace plus increased education of UAS pilots regarding airspace regulations. She highlighted a comment from a pilot on her last slide: “Birds present hazards, too, but at least birds try to get out of the way, they weigh less, and don’t carry lithium batteries.”

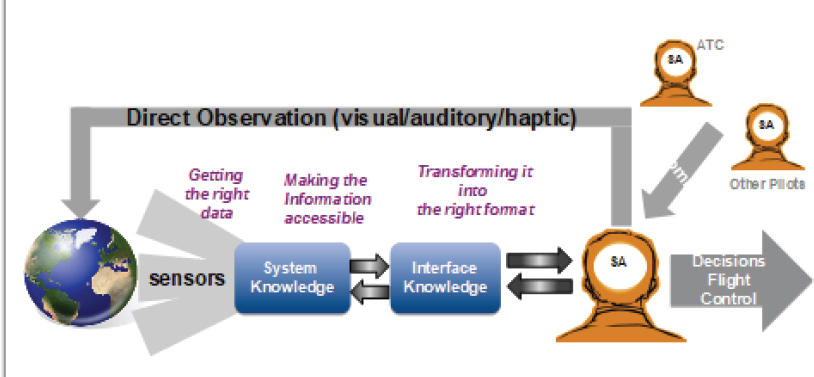

SOURCE: Endsley, M. (2018). Human-Systems Integration Issues for UAS Operations in the National Air Space. Presentation for the Workshop on Human-Automation Interaction Considerations for Unmanned Aerial System Integration (slide #6). Reprinted with permission.

Mica Endsley (steering committee member) offered a presentation from a more theoretical perspective. With UASs, the aircraft is conceptually the same, but the cockpit is remote. She provided Air Force data showing the UAS mishap rate at six times the mishap rate for manned aircraft, and human factors are a significant source of UAS mishaps. She focused on situation awareness and described several ways in which situation awareness is diminished with UAS operations: see Figure 2-1. For example, there are significant difficulties in localization and orientation, sensors provide a very narrow field of view that limits or eliminates visual information, sensors are often not focused in the direction of flight, and there is impoverished visual imagery. Fortunately, past research has demonstrated ways to improve UAS operations, which include multiple cameras and a widening field of view, thus opening the way to considering how best to combine humans with various increasing levels of UAS autonomy. (After her presentation, a participant raised an interesting point about levels of automation; see questions and answers below.)

Unfortunately, Endsley said, given the need for operators to be aware of critical information, decades of research have shown that “the more automation is added to a system, and the more reliable and robust that automation is, the less likely that human operators overseeing the automation will be aware of critical information and able to take over manual control when needed.” Realizing this and other challenges, including the tension between operator engagement and distraction during unanticipated events, she said that much more research was needed into how to most appropriately team automated capabilities with human teammates in order to effectively manage highly complex systems.

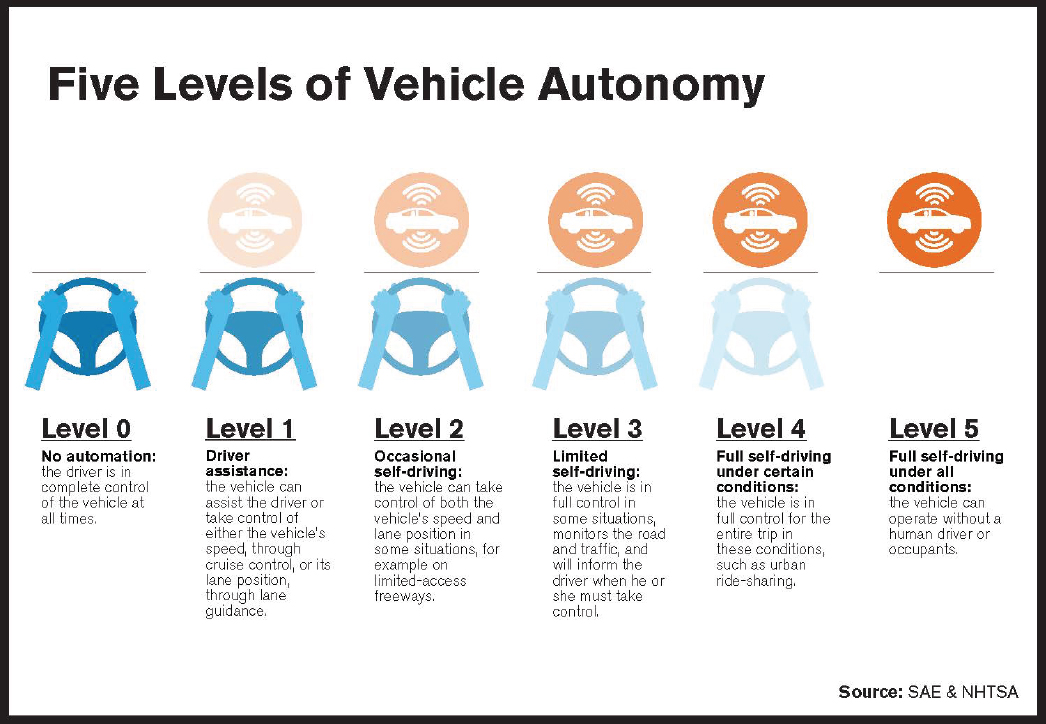

SOURCE: Reprinted with permission from the Governors Highway Safety Association. Available: https://www.ghsa.org/resources/spotlight-av17 [February 2018].

On day two, in the second session on this topic, Amy Pritchett (Pennsylvania State University) began with messages heard earlier: “The right relationship of man and machine is not a new question. . . . the same questions through the decades.” She noted that now the human is typically tasked as a supervisor or manager, yet humans are not good monitors. Considering five levels of autonomy, she asked “Will any vehicle ever be fully ‘autonomous?’”: see Figure 2-2.

As part of discussing architectures, she commented on the question of legal responsibility, which had also been raised by other participants, and observed that current architectures inherently create mismatches between a person’s authority to execute and a person’s responsibility for the outcome. During discussion of principles, she noted that “keeping the human in the loop better informs their abilities to make decisions.” Pritchett closed with several principles for effective architectures in aviation human-machine integration: be aware of the teamwork implications and what the work really involves; ensure that decisions are made by the responsible agent, not just in theory but through hands-on awareness and execution; and think about what really improves safety.

A range of questions, answers, and comments followed the presentations at both sessions. For example, illustrating the changing nature of UAS operations and training, a participant asked Cardosi: “You were talking about the issues of how little it seems that some of these UAS operators below 500 feet really understand. Could you talk to, or if the data show anything, or maybe some of your other work or research looks at how much ground school or other types of training might be required? In our center of excellence for UASs,” the participant said, “we have also looked at these issues. But it seems as though there are two issues, not understanding the airspace environment and not understanding control or interaction. I was curious what you thought about those issues.”

Cardosi answered: “First of all, for the hobbyists, it is actually under 400 feet, and they have to stay outside of 5 miles of any airport. And again, it varies, so that for the hobbyists . . . , the rules are changing. They were registering, and then they didn’t have to register . . . and the requirement to even register the drone. My understanding is that is going to change again. . . . So the landscape is changing, but still, there is the possibility that you can go out and buy a toy. It may or may not have a way to see how high is the actual altitude of the vehicle. There are even some that will tell you how high it was once it returns to you. But we actually did a study to see how good people are at judging the altitude. We asked them to fly to a certain altitude and take a picture of something. They were all over the map. Some were quite conservative and actually were under the altitude. Others were way off in the other direction.”

Turning to training, Cardosi responded: “The training, getting information out there, I think is critical. I don’t know why we couldn’t have a pamphlet in every kit that tells you what the rules are. Clearly, there are ways to see. You can get on MapQuest and find out how far you are from any given airport. There are a lot of different ways we could inform the user. Really, it is just a matter of what the market will bear and what they are willing to go along with.”

On automation, another participant noted the discussion of levels of automation: “I think when we put automation on a single-line spectrum and say that we are just moving a slider back and forth, we are really oversimplifying things a lot. That adds to the confusion. I think we need to get more specific on how things are working and how things are operating, and how automation is specifically different. How is it not the same? I think that is a conversation we need to start having.”

Also on the subject of automation, Bill Kaliardos (Federal Aviation Administration) and Kathy Abbott (Federal Aviation Administration) offered some observations. Kaliardos said: “We all think it is a good idea to have the automation tell us when it is about to tank in some way, but a lot of that has to do with the operational environment, the scenarios that it is in. For cars, [that environment can include] whether the roads are icy, and these kinds of things. It has to do with stuff outside of the automation and sort of the inability to assess the context and the bigger picture. Every piece of automation is limited by the understanding of the bigger picture. . . . Isn’t this a fundamental, sort of inherent problem of automation?”

In this context, Abbott said: “I think that it would be useful for this group to look at some of the Navy experience with the littoral combat ships, where they try to go towards the autonomous ships, and they found out that not only did they need more staff than they originally thought they would, but it was more senior staff, with more expertise.”