3

Planning Assessments

In this chapter, the committee reviews the portions of the ORD Staff Handbook for Developing IRIS Assessments (the handbook) (EPA, 2020a) that describe processes related to planning Integrated Risk Information System (IRIS) assessments:

- Chapter 1: Scoping of IRIS Assessments

- Chapter 2: Problem Formulation and Development of an Assessment Plan

- Chapter 3: Protocol Development for IRIS Systematic Reviews

- Chapter 4: Literature Search, Screening, and Inventory

- Chapter 5: Refined Evaluation Plan

- Chapter 7: Organizing the Hazard Review: Approach to Synthesis of Evidence

- Chapter 10: Analysis and Synthesis of Mechanistic Information

In reviewing those chapters, the committee considered whether the systematic review approaches used by the IRIS Program (outlined in Chapters 1-5 of the handbook) clearly describe and are consistent with methodologies considered to be state of the science (Question 2 in Appendix B), and whether the handbook clearly lays out a state-of-the-science approach for refinement of the scope and analyses of an IRIS assessment (Question 4 in Appendix B). The committee also assessed the handbook’s process for evaluating and integrating mechanistic data and considered specific areas for improvement or recommended alternatives (Question 5 in Appendix B).

As requested by the U.S. Environmental Protection Agency (EPA), the committee also considered planning documents for specific assessments, namely those for Vanadium (EPA, 2021a) and Inorganic Mercury Salts (EPA, 2021b), whose release spanned that of the handbook.

OVERVIEW OF THE HANDBOOK’S MATERIAL ON PLANNING IRIS ASSESSMENTS

Chapter 1 “Scoping of IRIS Assessments” outlines the process for identifying the programmatic needs of EPA in relation to a proposed IRIS assessment. It presents examples of agencies and stakeholders with whom IRIS may need to engage in the process of defining the objectives of an assessment. The chapter also presents a series of five scope-related and eight prioritization-related questions to facilitate focusing the objectives of the assessment into the form of one or more population, exposure, comparator, outcome (PECO) statements. The scoping process overlaps with the problem formulation process in the next chapter of the handbook.

Chapter 2 “Problem Formulation and Development of an Assessment Plan” describes two processes: (1) the creation of a preliminary literature survey and (2) the drafting of an assessment plan. The assessment plan is a document that describes in broad terms how the assessment will be conducted to address the programmatic needs determined by the scoping process. The preliminary literature survey identifies health effects that have been studied, as well as other types of information that the assessment may need to address. Confusingly, this chapter references Chapter 4 for conducting literature surveys, but Chapter 4 actually addresses a more systematic output referred to as a “literature inventory” (also referred to as a “systematic evidence map”). The literature inventory comprises data about populations, exposures, outcomes, study methods, and other study design features that are relevant to planning the specific systematic review questions and informing the other analyses that will deliver on the programmatic needs that are to be met by an assessment.

Chapter 3 “Protocol Development for IRIS Systematic Reviews” briefly describes how the IRIS program interprets the concept of a protocol for the conduct of a systematic review. Breaking with convention in systematic review terminology, the term “protocol,” as used here, denotes a strategic document outlining the process for developing a planned approach to an entire IRIS assessment, rather than a detailed methodology for conducting a particular systematic review. In a presentation to the committee, EPA clarified that there are two “assessment protocols”: an initial protocol that is published early and can change during the course of the assessment, and a final protocol that is published at the same time as the assessment and provides full details on the final methods chosen for conducting the systematic reviews and other elements of the assessment (Thayer, 2021). The initial protocol seems to be the subject of handbook Chapter 3; however, the chapter also presents a template for the organization of what appears to be a final protocol.

Chapter 4 “Literature Search, Screening, and Inventory” provides guidance on conducting literature searches for IRIS assessments (Section 4.1), screening the results of search strategies for literature of actual relevance to an assessment (Section 4.2), and creating an inventory of the literature that has been determined to be relevant to an assessment (Section 4.3). Section 4.1 describes the use of bibliographic databases and methods for developing search strategies to query them. Section 4.2 describes the process of classifying (“tagging”) studies as relevant or not to the assessment objectives and, for those that are relevant, adding further tags related to study design characteristics, topic area, and other aspects. The tagging process results in a pool of studies that meet the PECO criteria and a pool of “potentially relevant supplemental material,” such as mechanistic studies, toxicokinetic (TK) studies, and pharmacokinetic or physiologically based pharmacokinetic modeling studies. Section 4.3 describes the process for creating the literature inventory, a database of the broad array of evidence relevant to the problem space as defined by the programmatic needs identified during scoping. The guidance on search and tagging explains the mechanics of building the literature inventory.

Chapter 5 “Refined Evaluation Plan” describes the process for moving from the assessment plan (handbook Chapter 2) and initial protocol (handbook Chapter 3 and as clarified by EPA [Thayer, 2021]) to a refined evaluation plan. The process is presented in the form of a series of questions intended to facilitate analysis of the data in the literature inventory in the context of the assessment plan, as well as development of additional targeted searches and analyses that were not presented in the assessment plan. The refined evaluation plan can be further developed into the final protocol.

Chapter 7 “Organizing the Hazard Review: Approach to Synthesis of Evidence” describes the planning of the hazard review, a specific product of the IRIS assessment process that presents conclusions about hazards from exposure to chemical substances. This process also provides information that guides the dose-response analysis. The plan for the hazard review uses information obtained from more detailed analysis of the literature inventory, in particular the study evaluation process (handbook Chapter 6). The goal is to identify, prioritize, and group the set of endpoints on which the risk assessment is to be based and for which detailed data extraction will be conducted. The remaining endpoints are only summarized as supplemental material.

Chapter 10 “Analysis and Synthesis of Mechanistic Information” describes multiple activities related to the use of mechanistic information in the assessment process, including literature searching and screening, scoping and objectives of analyses of mechanistic data, prioritization and evaluation of mechanistic studies, synthesis of mechanistic evidence, and use of mechanistic information in evidence integration and dose-response analysis. The material in handbook Chapter 10 is relevant to multiple chapters. As indicated in Question 5 (Appendix B), Sections 2.2, 4.3.3, and 6.6 of the handbook also discuss evaluation of mechanistic data.

RESPONSIVENESS TO THE 2014 NATIONAL ACADEMIES REPORT

The committee finds that the handbook is responsive to many of the planning-related recommendations made in the 2014 National Academies report Review of EPA’s Integrated Risk Information System (IRIS) Process (NRC, 2014). In particular, through its initial literature survey, the development of a broad PECO statement, and subsequent development of a literature inventory based on a broad search, EPA has established a “transparent process for initially identifying all putative adverse outcomes,” as recommended by NRC (2014, p. 37). Additionally, for all literature searches, the IRIS assessment process includes input from information specialists trained in conducting systematic reviews.

EPA’s approach for using “guided expert judgment to identify the specific adverse outcomes to be investigated, each of which would then be subjected to systematic review,” is partially responsive to the NRC (2014, p. 37) recommendation. While the handbook describes several steps for focusing IRIS assessments on specific health outcomes, as described further below, the process is not fully transparent in a manner consistent with conventional systematic reviews. Additionally, while the process outlined in the handbook is responsive to the NRC (2014, p. 37) recommendation to “include protocols for all systematic reviews conducted for a specific IRIS assessment as appendixes to the assessment,” the committee found additional aspects of protocol development and reporting that could be improved.

CRITIQUE OF METHODS FOR PLANNING

A challenge in evaluating the processes described in the handbook is that they are presented as linear or funneled when many steps are in fact iterative. This is evidenced by the numerous call-forwards and call-backs between chapters (e.g., Chapter 4 must be read to understand how to deliver the outputs of the processes described in Chapter 2, and it is difficult to determine where Chapter 3 fits in the planning process).

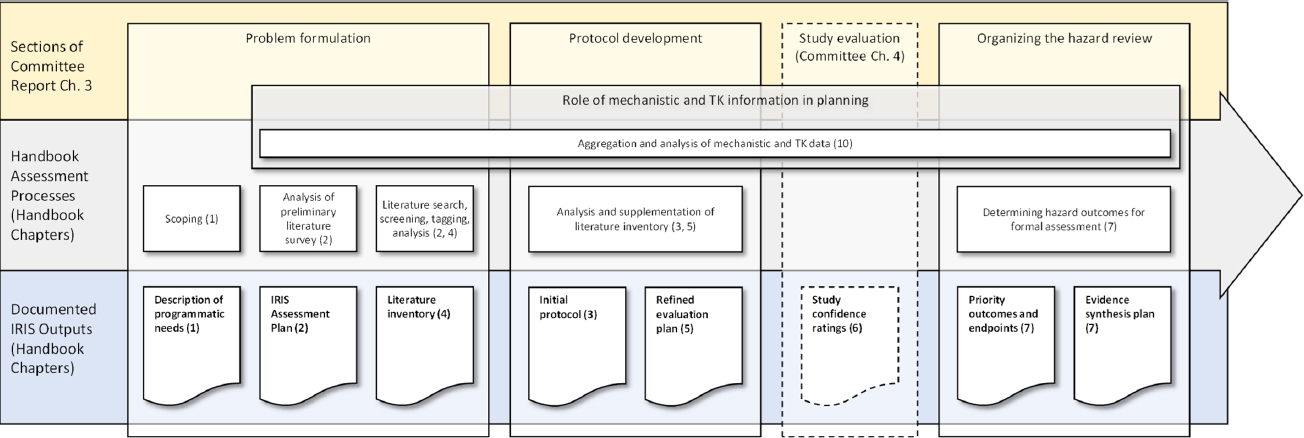

Therefore, to communicate its critique of the methods for planning IRIS assessments more clearly and constructively, the committee has divided the overall IRIS planning process into three stages, with a section of this chapter dedicated to each: problem formulation, protocol development, and organization of the hazard review (see Figure 3-1). Each stage has one or more accompanying processes, and each process is associated with one or more documented outputs relating to the overall assessment. Relatedly, addressing Question 4 (Appendix B), which asked whether the handbook lays out a state-of-the-science approach for refinement of the scope and analyses of an IRIS assessment, involved consideration of multiple processes described in handbook Chapters 1, 2, 3, 4, 5, and 7. The various strengths and weaknesses of these processes relative to the state of the science are discussed throughout this chapter; however, it was not practicable to provide a single summary response to the question.

This chapter also includes a discussion of the role of mechanistic and TK data in the planning process, and it covers special considerations for potentially susceptible populations in the planning of IRIS assessments. Although study evaluation is discussed in Chapter 4 of this report, it is included in Figure 3-1 to show the order of its presentation in the handbook.

Problem Formulation

The problem formulation process described in the handbook presents an important evolution in understanding how to use “literature inventories” or “systematic evidence maps” (databases of key features of scientific studies) to inform priority-setting in toxicity assessments. Literature inventories contribute to characterizing a general problem space by mapping existing relevant evidence (James et al., 2016). In the case of IRIS assessments, the space is defined by translating the problem into one or more broad PECO statements. The outcomes may be particularly broad, typically encompassing “all cancer and noncancer health outcomes.” This contrasts with the PECO statements used in systematic reviews (including those developed by the National Institute of Environmental Health Sciences’ Office of Health Assessment and Translation and those using the Navigation Guide [Woodruff and Sutton, 2014] for environmental health), which are much narrower in scope.

Note: The figure illustrates the relationship of the stages discussed in Chapter 3 of this report with the processes and documented outputs described in the handbook. Study evaluation is shown in a dashed box because it is not covered in this chapter but is positioned before “Organizing the Hazard Review: Approach to Synthesis of Evidence” in the handbook.

The literature inventories are built using sensitive search strategies, the results of which are screened to remove off-topic research. The remaining studies are classified as to their likely role in the assessment. Features of the studies that are relevant to informing the objectives and approach of the assessment are extracted into a database. The results of this inventorying process help identify gaps, clusters, and patterns in a topic-relevant body of evidence, thereby informing decisions about when and how to conduct further primary or secondary research so as to fill critical gaps or synthesize important clusters. The creation of literature inventories is a key step for planning the assessment, and the general process described in the handbook reflects current best practice. This is to be expected, given the IRIS program’s prominent role in developing these methods.
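As a minimal sketch of the inventory idea described above, and assuming a simple in-memory representation, tagged study records can be cross-tabulated by health outcome and evidence stream so that empty cells point to gaps and dense cells point to clusters. The record fields, tag values, the cluster threshold, and the helper names `evidence_map` and `gaps_and_clusters` are all hypothetical; they are not drawn from the handbook or from any EPA software.

```python
from collections import Counter
from dataclasses import dataclass, field


# Hypothetical study record; field names are illustrative, not an EPA schema.
@dataclass(frozen=True)
class Study:
    study_id: str
    meets_peco: bool       # screening decision against the broad PECO statement
    evidence_stream: str   # e.g., "human", "animal", "mechanistic"
    outcome: str           # e.g., "hepatic", "neurological"
    tags: frozenset = field(default_factory=frozenset)  # e.g., susceptibility tags


def evidence_map(studies):
    """Count PECO-relevant studies in each (outcome, evidence stream) cell."""
    return Counter((s.outcome, s.evidence_stream) for s in studies if s.meets_peco)


def gaps_and_clusters(counts, outcomes, streams, cluster_min=3):
    """Return cells with no studies (gaps) and cells with >= cluster_min studies.

    The threshold of 3 is an arbitrary choice made only for illustration.
    """
    gaps = [(o, s) for o in outcomes for s in streams if counts[(o, s)] == 0]
    clusters = [cell for cell, n in counts.items() if n >= cluster_min]
    return gaps, clusters


studies = [
    Study("S1", True, "animal", "hepatic"),
    Study("S2", True, "animal", "hepatic"),
    Study("S3", True, "animal", "hepatic", frozenset({"early life stage"})),
    Study("S4", True, "human", "neurological"),
    Study("S5", False, "human", "hepatic"),  # screened out as off-topic
]
counts = evidence_map(studies)
gaps, clusters = gaps_and_clusters(counts, ["hepatic", "neurological"], ["human", "animal"])
print(gaps)      # [('hepatic', 'human'), ('neurological', 'animal')]
print(clusters)  # [('hepatic', 'animal')]
```

Keeping extra tags (such as a life-stage or susceptibility tag) on each record means the same structure can later be queried for narrower questions without repeating the search.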

The creation and use of literature inventories constitute important recent innovations in making priority-setting a more evidence-informed process and improving the efficiency of chemical assessments. Identification of novel evidence clusters can be particularly important to inform a new or updated hazard conclusion or toxicity value. Efficiencies can be gained by avoiding unnecessary analysis of evidence that is unlikely to result in such changes and instead focusing resources on decision-critical clusters. As such, the literature inventory is a key milestone in the IRIS process, incorporating information obtained from the scoping and initial problem formulation steps and forming the foundation of all subsequent analysis. Although not cited in the handbook, these issues have been discussed in the peer-reviewed literature by scientists at the IRIS program and the National Toxicology Program (Walker et al., 2018; Wolffe et al., 2019; Keshava et al., 2020; Radke et al., 2020). The committee was somewhat surprised that the handbook did not make more reference to existing EPA research and other materials related to the planning process for IRIS assessments.

The committee notes that literature inventories also have considerable potential value in and of themselves as a public good, providing a publicly accessible, queryable database that could be used by any research organization to identify knowledge gaps and clusters in toxicity research. Thus, literature inventories stand to increase not only the efficiency of EPA’s work but also the efficiency of toxicity research, in general.

However, major challenges in understanding the processes described in the handbook are the inconsistent way in which they are documented, the lack of clarity about the order in which the steps are taken, and the unclear mapping of the handbook chapters onto these steps. For example, Figure O-2 (handbook p. xix) does not mention the assessment plan. It also positions the literature search and inventory development as occurring after development of the systematic review protocol, when in fact these two elements have a fundamental role in developing the protocol. As discussed later in this chapter, the protocol itself appears to be ambiguously characterized as a product of the planning process of an assessment, and in a manner at odds with the conventional understanding of its use in systematic reviews.

One area that did not receive much attention in NRC (2014), but which has been a recurring issue in other National Academies reports, is the need to address susceptible populations and life stages. The handbook recognizes their importance in several ways: they are to be considered in scoping and problem formulation (handbook Chapters 1 and 2); PECO statements consider the need for a specific PECO question for susceptible populations (handbook Chapter 3); the assessment plan (handbook Chapter 3) and refined evaluation plan (handbook Chapter 5) take susceptible populations into account; mechanistic information is to be used to assess the types of susceptibility that may exist (handbook Chapter 10); and evidence synthesis, integration, and dose-response analysis all consider the possibility of susceptible populations (handbook Chapters 9, 11, 12, and 13). The committee notes that the literature inventory appears to be an ideal step at which to perform a systematic search for information on susceptible populations and life stages. However, there is little mention of this issue in either the search strategy development or the discussion of literature tagging. Although susceptibility is mentioned in the discussion of supplemental literature searches in Chapter 4 of the handbook, there is an opportunity to address this issue more explicitly during implementation of the broad literature search underlying the literature inventory, for instance through the use of tags reflecting categories of susceptibility identified in handbook Table 9-2 (p. 9-6).

Finally, a critical aspect of problem formulation is the identification of “key science issues” that the assessment will need to address. Some of the issues that the handbook mentions include conflicting data, adversity of endpoint, human relevance, and differential susceptibilities. However, the handbook does not provide a systematic process for identifying such issues. It appears that they are largely identified through the preliminary literature survey, stakeholder engagement, and public comment. Systematic stakeholder engagement and issue identification are themselves areas of active research for which no broadly accepted methodology currently exists. Some of the key concerns with existing stakeholder engagement and issue identification include the disparity in resources available to different stakeholders and bias in issue identification due to vested interests (Haddaway and Crowe, 2018).

The committee’s findings and recommendations regarding problem formulation are presented in the final section of this chapter.

Protocol Development

This section discusses the processes described in the handbook for developing and making publicly available, in the form of a protocol, the planned objectives and methods for conducting an assessment.

In a systematic review, the protocol is a complete account of planned methods that should be registered (i.e., publicly released in a time-stamped, read-only format). A protocol can be registered in a specialist protocol repository, on a preprint server, or via formal publication in a scientific journal. In some circumstances, organizations (e.g., public agencies) may register their protocols by publishing them on a website in a manner that prevents changes from being made without the reader's knowledge. The committee considers EPA to be an organization that could use publication on its public website as a means of protocol registration. Advantages of protocol registration include discouraging the shaping of methods around expected findings, because methods are defined and published before data extraction and analysis, and rendering transparent any changes in planned methods that might affect the integrity of the review process and the credibility of its findings (Nosek and Lakens, 2014).
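One way to make the “time-stamped, read-only” property concrete for an organization that self-registers on its own website is to publish a cryptographic digest of each protocol version together with its registration date, so that any later edit to the text no longer matches the published digest. The sketch below uses only the Python standard library; the helper names `register`, `verify`, and `amend`, the record format, and the example date are hypothetical illustrations of the idea, not a description of EPA or registry practice.

```python
import hashlib
from datetime import datetime, timezone
from typing import Optional


def _digest(text: str) -> str:
    """SHA-256 hex digest of the protocol text (UTF-8 encoded)."""
    return hashlib.sha256(text.encode("utf-8")).hexdigest()


def register(protocol_text: str, registered_at: Optional[datetime] = None) -> dict:
    """Hypothetical registration record: content digest plus a UTC time stamp."""
    ts = registered_at or datetime.now(timezone.utc)
    return {"sha256": _digest(protocol_text), "registered_at": ts.isoformat()}


def verify(protocol_text: str, record: dict) -> bool:
    """True only if the text is exactly what was registered."""
    return _digest(protocol_text) == record["sha256"]


def amend(history: list, new_text: str, reason: str) -> list:
    """Append a newly registered version with its stated reason; never overwrite."""
    record = register(new_text)
    record["reason"] = reason
    return history + [record]


v1 = "Population: humans and experimental animals\nOutcome: all cancer and noncancer health outcomes"
# Arbitrary illustrative date, not tied to any real assessment.
rec = register(v1, datetime(2021, 4, 1, tzinfo=timezone.utc))
print(verify(v1, rec))                      # True
print(verify(v1.replace("all ", ""), rec))  # False: an unannounced edit is detectable

history = amend([rec], v1 + "\nLife stage: pregnancy", "refined after public comment")
print(len(history))                         # 2
```

Appending rather than overwriting mirrors the point made above: revisions remain possible, but each one leaves a dated, auditable trace.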

In the handbook, the term “protocol” refers to as many as three document types: the “initial” or “draft” protocol that is the product of the problem formulation process (Chapter 3); the refined evaluation plan, which is to be the product of a protocol formulation process yet is also described as being “in the protocol” (Chapter 5); and a “final protocol,” which has no dedicated chapter but is published alongside the final assessment. Each document is different, but its exact content, and how a later document builds on an earlier one, is unclear.

The scope of each of these documents is also ambiguously described in the handbook. For example, the document described as a protocol in Chapter 3 seems to be intended as a broad plan for conducting a systematic review that delivers the objectives defined in the assessment plan, specifying the approaches and analyses for the entire IRIS assessment process, not just the systematic review portion. Additionally, because this document is initially developed before the literature search, the steps that follow the literature inventory are described only in fairly generic terms at this stage. While this protocol includes a broad PECO statement, the summary box “Organization of the Protocol” on page 3-3 of the handbook presents a level of detail more readily associated with a formal systematic review protocol and seems to better match the intent of the final protocol. To further complicate matters, EPA presented the IRIS protocol as consisting of two documents, an initial and a final protocol, both of which seem potentially open to revision in the course of conducting an IRIS assessment (Thayer, 2021, slide 8).

The committee interpreted the handbook to indicate that there is one protocol that is revised over time, accumulating focus and detail as the scope of the problem, and the plan for solving it using systematic reviews and other analyses, evolve throughout the assessment process. This increase in focus seems reasonable given the potential scope and complexity of IRIS assessments in the context of EPA's programmatic needs, and protocols are usually expected to evolve. However, the different names given to these documents and the unclear differentiation of each stage of the process documented in the handbook make it very challenging to discern what methods are being documented, when, and for what purpose.

Of the three protocols or planning documents described in the handbook, the final protocol is the most consistent with a conventional definition of a systematic review protocol as being a complete record of planned methods. It is notable, however, that this plan is only published alongside the final assessment rather than in advance of it. Consequently, IRIS assessments can accrue only some of the benefits of protocol publication, such as improving planned methods in response to external feedback. Critically, the IRIS approach to protocol publication cannot be used to audit changes to planned methods, as these methods are not being publicly documented in a way that makes it possible to determine if any changes to the protocol are made before or after conducting the analysis. Other government agencies have registered protocols (e.g., EFSA et al., 2017) and, as noted above, publication of the protocol via the EPA public website could potentially be considered equivalent to such third-party registration.

Additionally, the processes for including and excluding literature from the systematic reviews conducted as part of IRIS assessments diverge from current best practices. In conventional systematic reviews, the inclusion and exclusion of evidence, as well as the delineation of units of analysis for evidence synthesis and strength-of-evidence conclusions, are strictly governed by prespecified PECO statements, in order to be fully transparent and to minimize the potential for bias via selective inclusion of literature in a review. For IRIS assessments, a unit of analysis could be defined at the endpoint level (e.g., clinical chemistry) or the outcome level (e.g., liver toxicity). However, the handbook and recently released planning documents (e.g., for Vanadium [EPA, 2021a] and Inorganic Mercury Salts [EPA, 2021b]) include only broad PECO statements, along with broad health effect categories. This approach contrasts with conventional systematic reviews, in which even within a relatively broad health effect category (e.g., cardiovascular disease), a detailed PECO statement particular to that health effect is prespecified so that issues relating to differing target populations, windows of susceptibility, and outcome measures can be addressed up front. Moreover, as currently described in the handbook, evidence may be triaged (excluded from further consideration) at the “refined plan” step (handbook Chapter 5) or after study evaluation at the “organize hazard review” step (handbook Chapter 7). The reasons for triaging such evidence, and the safeguards to ensure that evidence is not being used selectively, are not sufficiently clear in the handbook. Developing refined PECO statements for each health effect category at the assessment protocol stage would therefore clarify how specific pieces of evidence are included or excluded and make any subsequent changes to those criteria transparent.
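To illustrate how refined, prespecified PECO statements could govern inclusion transparently, the sketch below encodes one hypothetical refined PECO statement for a single health effect category and records a reason for every screening decision, so that no study is excluded without a documented criterion. The category, fields, criteria, and the `screen` helper are invented for illustration and are not taken from the handbook or from the Vanadium or Inorganic Mercury Salts documents.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class RefinedPECO:
    category: str            # health effect category, e.g., "cardiovascular"
    populations: frozenset   # eligible populations or life stages
    routes: frozenset        # eligible exposure routes
    outcomes: frozenset      # eligible outcome measures


@dataclass(frozen=True)
class Candidate:
    study_id: str
    population: str
    route: str
    outcome: str


def screen(study: Candidate, peco: RefinedPECO):
    """Return (included, reason); every exclusion names the criterion it failed."""
    if study.population not in peco.populations:
        return False, f"population '{study.population}' not in PECO"
    if study.route not in peco.routes:
        return False, f"exposure route '{study.route}' not in PECO"
    if study.outcome not in peco.outcomes:
        return False, f"outcome '{study.outcome}' not in PECO"
    return True, "meets all prespecified criteria"


# Hypothetical refined PECO statement, fixed before any evidence is synthesized.
cardio = RefinedPECO(
    category="cardiovascular",
    populations=frozenset({"adults", "pregnant women"}),
    routes=frozenset({"oral", "inhalation"}),
    outcomes=frozenset({"blood pressure", "heart rate"}),
)

audit_log = []
for s in [
    Candidate("A1", "adults", "oral", "blood pressure"),
    Candidate("A2", "adults", "dermal", "blood pressure"),
    Candidate("A3", "children", "oral", "heart rate"),
]:
    included, reason = screen(s, cardio)
    audit_log.append((s.study_id, cardio.category, included, reason))

for entry in audit_log:
    print(entry)
```

Because the criteria are fixed before evidence synthesis, any later change to them shows up as a change to the PECO definition itself rather than as an unexplained exclusion.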

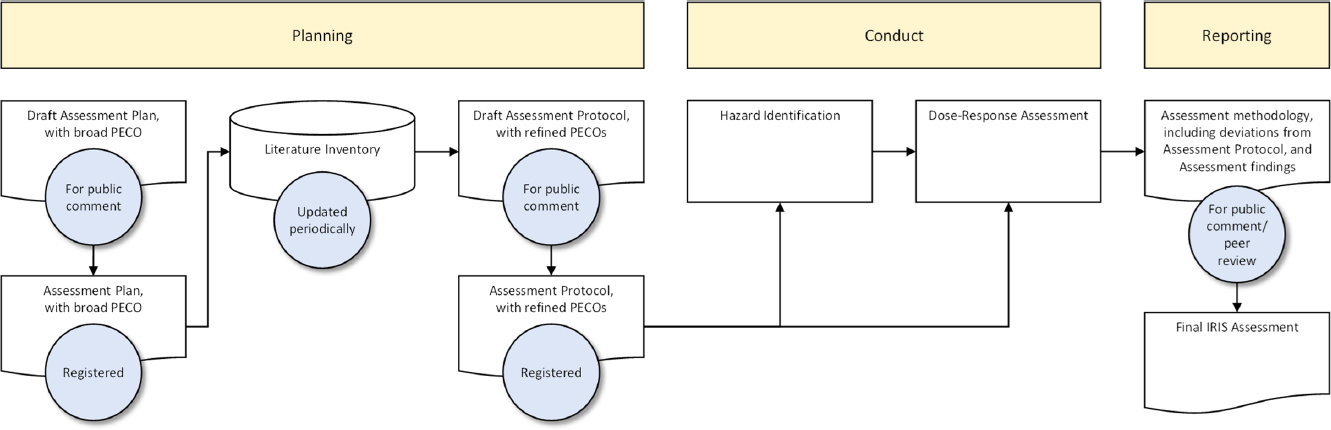

The committee recognizes that documenting the planning process for IRIS assessments is complex. It is clear that the IRIS process is not linear but iterative, and that at several points the planned methods are revisited, revised, and refined. It may be possible to simplify the process, reduce the number of associated documents, and state more clearly where and when planned processes are revised. One possible approach is to have two planning documents: (1) an assessment plan that is a broad statement of the overall problem and the approach being taken to the assessment, and (2) an assessment protocol that describes in detail the specific methods to be used to conduct hazard identification and dose-response assessment. The assessment protocol would be developed based on information available from the literature inventory and could be subsequently revisited and refined (e.g., after public comment), with revisions or refinements documented and the final draft of the assessment protocol registered in advance of the detailed analysis in the hazard identification and dose-response stages of the assessment. Any deviations from the planned methods described in the registered assessment protocol can be documented when the draft IRIS assessment is released for public comment and peer review. For instance, the handbook (in the “Organizing the Hazard Review: Approach to Synthesis of Evidence” chapter) currently appears to suggest that changes to which outcomes and endpoints are synthesized may be made after study evaluation. Such changes from the registered protocol would need to be documented.

Figure 3-2 and Table 3-1 illustrate how the planning documents and literature inventory could be related, consistent with the committee’s understanding of the Vanadium planning documents. Specifically, a broad PECO statement that was used to develop a literature inventory was included in the Vanadium planning documents (EPA, 2020b) released on July 1, 2020, for public comment, and the results of this inventory were subsequently described in the Systematic Review Protocol (EPA, 2021a) released for public comment in April 2021. However, two aspects of protocol development that are typically included in conventional systematic reviews are missing from the process for Vanadium: (1) registration of the protocol (after addressing public comments and prior to conducting analysis) and (2) inclusion of refined PECO statements to guide the unit of analysis at which evidence synthesis and strength of evidence judgments are conducted.

The committee’s findings and recommendations regarding protocol development are presented in the final section of this chapter.

Organization of the Hazard Review

The intent and scope of “Organizing the Hazard Review: Approach to Synthesis of Evidence” (handbook Chapter 7) are not clearly presented in the handbook. However, given that this step is presented after study evaluation, it appears that a main goal is to use judgments of study confidence to further identify and prioritize the most relevant endpoints for hazard identification. Of concern, however, is that criteria beyond study confidence are included in the text of Chapter 7 and in Table 7-1 (p. 7-4). For example, study outcomes, including evidence of greater sensitivity and dose-response, are listed as elements to consider during the organizational process. Also, page 7-3 of the handbook states, “These questions extend from considerations and decisions made during development of the refined evaluation plan to include review of the uncertainties raised during individual study evaluations as well as the direction and magnitude of the study-specific results” (EPA, 2020a). Thus, this step appears to incorporate criteria other than study confidence to organize the hazard review. As such, this step would serve as another opportunity for refining the protocol prior to data extraction and synthesis. If that is the case, this purpose would need to be explicitly stated and the process thoroughly documented. Notably, inclusion of this step deviates from typical systematic review approaches.

As written, the organizational process is poorly defined, too open-ended, and too vulnerable to subjectivity. Importantly, because other criteria should have been addressed in earlier steps, a compelling justification would be needed if any criteria other than study confidence are used to exclude data at this late stage of the assessment process. Ideally, the organization and planning of the hazard review would be very narrow in scope and primarily focused on evaluating how well the identified data pool can address the PECO questions. If, instead, this step is effectively an opportunity for further refinement of the protocol or of the PECO questions themselves prior to data extraction, that would need to be made explicitly clear, and those refinements would need to be published and time stamped in the same way as for similar steps in the planning process.

Note: The figure illustrates which of the three products might be updated or registered, and where the products would feed into the IRIS assessment process. It is important to note that this is an illustration of a possible approach and is not a committee recommendation as to how the IRIS assessment should be documented.

TABLE 3-1 Illustrative Documentation of an Assessment Plan, Literature Inventory, and Assessment Protocol from the IRIS Planning Process^a

| Planning Product | Contents | Initial Version | Subsequent Versions |
|---|---|---|---|
| Assessment Plan | | Draft for public comment, published after scoping and problem formulation stages | |
| Literature Inventory | Study database and categorization | Initial inventory made publicly available alongside assessment protocol draft for public comment | |
| Assessment Protocol | | Draft for public comment, published alongside initial literature inventory | |

^a The table indicates the content of each suggested planning product illustrated in Figure 3-2, when each product might first be made available, the purpose of each product, and when each product would be revised. This is an illustration of a possible approach and is not a recommendation as to how the IRIS assessment should be documented.

Critically, the organization and refinement processes lack specific criteria to ensure transparency and reproducibility, and they are not constructed to minimize subjectivity to the greatest degree possible. Although it makes sense that the outcomes and hazards with the strongest evidence should be considered first, Chapter 7 of the handbook does not operationalize how the strength of evidence would be established or ranked. Instead, it suggests questions that might be considered throughout the decision process. For example, the use of “probably” in the phrase “if the studies are all low confidence due to reduced sensitivity, the outcome should probably be summarized” (EPA, 2020a, p. 7-2) leaves the process too vague and vulnerable to subjectivity. Many of the questions, including the level at which outcomes will be grouped, should have been sufficiently addressed far earlier in the process. Life stage, exposure duration, and exposure route should have been factored into the crafting of the PECO statements. Dose-response would be better handled after data extraction. It is inappropriate to use study outcomes to inform data extraction, because doing so would be a significant deviation from systematic review procedures.

As written, the individual processes throughout Chapter 7 of the handbook are confusing and do not provide a clear path to operationalization. For example, in the first paragraph, when acknowledging that human and animal evidence do not always align, the text states that “decisions can sometimes be informed by specific mechanistic evaluations, for example analysis of the extent of the linkage between related outcomes” (EPA, 2020a, p. 7-1). It is unclear what is meant by that statement, which mechanistic data or evaluations are included, or what is meant by “extent of the linkage.” If the goal is to identify endpoints in animals that are informative for a specific endpoint in humans (the example given in the text is spontaneous abortion), those linked endpoints would need to be stated earlier in the process (if those relationships are known) or reserved for the evidence integration step, when the collective set of data is being interpreted.

It is also unclear when and how additional mechanistic data would enter the data organization process. For example, page 7-3 of the handbook states that “additional targeted searches for mechanistic information specific to those health effects and/or organ systems may need to be performed. These supplemental searches may involve new literature search strategies, and they may be health effect- or tissue-specific rather than chemical-specific” (EPA, 2020a). Explicit criteria for when those searches may be needed, how those searches should be conducted, and how the data will be incorporated into subsequent steps need to be provided.

The committee’s findings and recommendations regarding organizing the hazard review are presented in the final section of this chapter.

Role of Mechanistic and Toxicokinetic Information in Planning

As discussed in Chapter 2 of this report, the roles of mechanistic and TK data are described in multiple places in the handbook, and these descriptions are not entirely consistent. Moreover, the many backward and forward cross-references make the handbook confusing as to when and how mechanistic and TK data are incorporated into the assessment. As described in NASEM (2017a), mechanistic and TK data can be very informative during the scoping and problem formulation phase, helping determine which outcomes to focus on and how animal and human evidence could be integrated. For instance, in its systematic review of phthalates and male reproductive tract development, NASEM (2017a) focused on endpoints relevant to the anti-androgenic activity of phthalates, such as fetal testosterone, anogenital distance, and hypospadias, and did not include cryptorchidism in this grouping of endpoints. Thus, development of refined PECO statements can be informed by mechanistic and TK considerations with respect to which endpoints are grouped together and which are grouped separately. It is recognized, however, that during this planning stage, a systematic and critical evaluation of mechanistic and TK data has not yet occurred, and that the IRIS program will need to rely on expert judgment and input from public comments in utilizing such information in the planning process.

In other cases, mechanistic data may warrant their own PECO statement (or set of PECO statements) because it is appropriate and possible to evaluate their strength of evidence using systematic review methods. In the example provided in EPA’s presentation, a separate PECO statement was developed for the mutagenicity of chloroform to inform analysis of a mutagenic mode of action (MOA) for cancer (Thayer, 2021). Certain mechanistic endpoints that are considered precursors to apical endpoints or adverse effects may also warrant specification as a discrete unit of analysis, particularly if such endpoints are eligible for use in dose-response assessment. The handbook provides little guidance on how these decisions are to be made during the planning stages.

With respect to the role of mechanistic data in planning IRIS assessments, the handbook is unclear about how key characteristics (KCs) are to be used for searching and organizing mechanistic data to inform hazard identification. However, it was clear from EPA’s presentation that the intention is to use KCs, as they are available for different health effects, to help organize mechanistic information (Thayer, 2021). KCs comprise the set of chemical and biological properties of agents that cause a particular toxic outcome; they were introduced first for carcinogens (Smith et al., 2016) and subsequently for several other types of toxicants, including male and female reproductive toxicants, endocrine-disrupting chemicals, hepatotoxicants, and cardiotoxicants (Arzuaga et al., 2019; Luderer et al., 2019; La Merrill et al., 2020; Lind et al., 2021; Rusyn et al., 2021). It is generally agreed that KCs can be used for screening and organizing mechanistic data, in line with their originally stated purpose in Smith et al. (2016). As a result, KCs have been incorporated into hazard assessment approaches by several organizations (NTP, 2018a,b; IARC, 2019; Samet et al., 2020). On the other hand, there have been concerns that use of KCs will lead to an increase in false positives (Smith et al., 2021) and that they may not be predictive of cancer hazard, especially as some KCs, such as oxidative stress, lack specificity for cancer and other outcomes (Becker et al., 2017; Goodman and Lynch, 2017; Guyton et al., 2018; Smith et al., 2021).

However, the approach used for identifying KCs explicitly emphasizes “sensitivity” rather than “specificity.” In particular, KCs are identified through a process that examines known toxicants for a particular outcome (e.g., cancer), and they represent characteristics common to those toxicants. Thus, the logical conclusion is that “if X causes outcome Y, then X has one or more KCs.” A predictive statement, by contrast, would be the converse: “if X has one or more KCs, then X causes outcome Y.” From elementary logic, a true statement does not imply that its converse is also true; it implies only that its contrapositive is true. The corresponding contrapositive is “if X does NOT have any KCs, then X does NOT cause outcome Y.” Further research or other approaches are needed to make predictive statements about toxic outcomes.
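The distinction between a statement, its converse, and its contrapositive can be checked mechanically by enumerating a truth table. The minimal Python sketch below is an illustration added for this discussion, not a method from the handbook; it simplifies “X causes outcome Y” and “X has one or more KCs” to single true/false propositions. It confirms that the statement and its contrapositive agree in every case, and it isolates the case in which the statement holds but the converse fails: an agent that has a KC but does not cause the outcome.

```python
from itertools import product


def implies(p: bool, q: bool) -> bool:
    """Material implication: "if p then q" is false only when p is true and q is false."""
    return (not p) or q


# Simplifying assumption: each proposition is a single true/false value.
# causes_y: "X causes outcome Y"; has_kc: "X has one or more KCs".
rows = []
for causes_y, has_kc in product([True, False], repeat=2):
    statement = implies(causes_y, has_kc)               # if X causes Y, then X has a KC
    converse = implies(has_kc, causes_y)                # if X has a KC, then X causes Y
    contrapositive = implies(not has_kc, not causes_y)  # if X has no KC, then X does not cause Y
    rows.append((causes_y, has_kc, statement, converse, contrapositive))

# The statement and its contrapositive are logically equivalent: they agree in every row.
assert all(s == cp for _, _, s, _, cp in rows)

# Rows where the statement is true but the converse is false:
# X has a KC but does not cause Y (a false positive if the converse were used predictively).
counterexamples = [(c, k) for c, k, s, cv, _ in rows if s and not cv]
print(counterexamples)  # [(False, True)]
```

The single counterexample is exactly the situation behind the false-positive concern: the sensitivity-oriented statement remains true while a predictive reading of it would be wrong.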

This line of reasoning suggests that, from a hazard identification perspective, KCs may be useful in the following ways, provided that toxicant X has been adequately investigated for all KCs:

- If toxicant X exhibits one or more KCs, it is biologically plausible that X causes outcome Y.

- If toxicant X exhibits none of the KCs, then it is biologically implausible that toxicant X causes outcome Y (unless a new KC has been identified).

Here, the committee understands the term “biological plausibility” to mean consistency with existing biological knowledge, without necessarily having an established causal mechanism. Put another way, toxicant X exhibiting one or more KCs is necessary, but not sufficient, for toxicant X to cause outcome Y. Used in this manner, evaluating whether an agent exhibits KCs, and identifying data gaps regarding which KCs have or have not been sufficiently studied, can be informative for hazard identification, even independently of further analysis in the form of MOAs or adverse outcome pathways. Thus, a key benefit of organizing mechanistic data using KCs is that they can be readily used in evidence integration to transparently address biological plausibility. As discussed in NASEM (2017b, p. 121), the main advantage of this approach is that it “avoids a narrow focus on specific pathways and hypotheses and provides for a broad, holistic consideration of the mechanistic evidence.” As a result, the use of KCs promotes consistent approaches across chemicals and guards against only “looking under the lamppost.” These advantages are consistent with the purpose of the handbook, and thus it is appropriate for EPA to use KCs in this manner.
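The two hazard-identification uses of KCs listed above, together with the identification of data gaps, can be expressed as a simple decision rule. The Python sketch below is a hypothetical illustration, not an EPA or handbook procedure; the function name `kc_plausibility`, the `Plausibility` categories, and the KC labels are invented for this example. The text states both rules under the condition that toxicant X has been adequately investigated for all KCs; the sketch additionally assumes that a single exhibited KC supports a plausibility call even when other KCs remain unstudied, and it reports unstudied KCs as data gaps rather than concluding implausibility.

```python
from enum import Enum


class Plausibility(Enum):
    PLAUSIBLE = "biologically plausible"
    IMPLAUSIBLE = "biologically implausible, given the currently identified KCs"
    DATA_GAP = "indeterminate: some KCs not adequately investigated"


def kc_plausibility(all_kcs: set[str], investigated: set[str],
                    exhibited: set[str]) -> tuple[Plausibility, set[str]]:
    """Hypothetical decision rule for KC-based biological plausibility.

    all_kcs:      KCs defined for outcome Y
    investigated: KCs for which toxicant X has been adequately studied
    exhibited:    KCs that X was found to exhibit (a subset of investigated)
    Returns the plausibility category and the set of unstudied KCs (data gaps).
    """
    if not exhibited <= investigated <= all_kcs:
        raise ValueError("expected exhibited <= investigated <= all_kcs")
    gaps = all_kcs - investigated
    if exhibited:
        # Exhibiting a KC is necessary but not sufficient for causing Y,
        # so it supports plausibility, not a causal conclusion.
        return Plausibility.PLAUSIBLE, gaps
    if not gaps:
        # No KC exhibited and every KC adequately studied.
        return Plausibility.IMPLAUSIBLE, gaps
    # No KC exhibited so far, but some KCs have not been studied.
    return Plausibility.DATA_GAP, gaps


# Usage with hypothetical KC labels.
kcs = {"KC-A", "KC-B", "KC-C"}
category, gaps = kc_plausibility(kcs, investigated={"KC-A"}, exhibited=set())
print(category.name, sorted(gaps))  # DATA_GAP ['KC-B', 'KC-C']
```

Keeping the data-gap category separate from an implausibility call mirrors the condition in the text that the second rule applies only when all KCs have been adequately investigated.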

FINDINGS AND RECOMMENDATIONS

Findings and Recommendations Related to Problem Formulation

Finding and Tier 1 Recommendation

Finding: The process of developing the literature inventory is in accordance with established best practices in searching for and screening evidence, starting with a comprehensive search to build a database that can then be interrogated for refining the assessment objectives. It makes suitable use of information specialists and is notable for its comprehensive coverage of both published and grey literature resources.

Recommendation 3.1: The handbook should make explicit which components of a literature inventory database are to be made publicly available and when. [Tier 1]

Findings and Tier 2 Recommendations

Finding: The relationship among the scoping process, the development of the literature inventory, and the analysis of the inventory to define the assessment plan is broadly appropriate and consistent with an understanding of best practice. Overall, EPA’s process is responsive to the recommendation from NRC (2014) that assessments identify all putative adverse outcomes and then prioritize them for systematic review. However, little of the relevant literature in this area, including publications by EPA scientists, is cited to provide support for the methods outlined in the handbook.

Recommendation 3.2: The handbook should more fully reference the literature in environmental health and other disciplines that relates to evidence mapping and its role in planning research, in order to strengthen the rationale for the method and provide relevant citations. [Tier 2]

Finding: The planning sections of the handbook, in particular the development of the literature inventory, could more directly support the identification of information and gaps with respect to susceptible populations and life stages. Adding a component to the literature inventory addressing this issue can be one way to better compile, organize, and assess the information available. This could help address the crosscutting question that each chemical assessment faces of whether the evidence base is adequate to address the possibility of susceptible populations and life stages and whether that evidence points to unique susceptibilities and/or particular uncertainties. Having this information identified in the literature inventory could support the refinement of the assessment plan and later steps in the process, including dose-response assessment.

Recommendation 3.3: The literature inventory process should consider tagging studies for the presence of data pertinent to identification of susceptible populations. [Tier 2]

Finding and Tier 3 Recommendation

Finding: Identification of key science issues remains an area of problem formulation where systematic methods are needed, but broadly accepted approaches that address potential for bias have not yet been developed.

Recommendation 3.4: EPA should consider developing a more systematic approach to identifying science issues. [Tier 3]

Findings and Recommendations Related to Protocol Development

Finding: The handbook’s recognition of the importance of publishing protocols is welcome; it responds to a key recommendation of NRC (2014).

Finding: Appropriate documentation of the planned methods requires refinement for consistency with best practice relating to protocol development and publication in systematic review. Consistent with the recommendation from NRC (2014), the handbook specifies publication of the final protocol as an appendix to the final assessment. However, the lack of any earlier public documentation of the protocol, and of any modifications to it, is an important shortcoming because it does not sufficiently ensure the transparency and credibility of the methods and findings of the assessments.

Finding: The handbook lacks clarity regarding the products of the planning process, the relationships among them, which are expected to be updated or should be registered, and how they feed into the IRIS assessment.

Recommendation 3.5: The handbook should clarify and simplify the assessment planning process as follows: restructure the handbook to directly reflect the order in which each step is undertaken, unambiguously identify each of the products of the planning process, clearly define what each product consists of, and state if and when each product is to be made publicly available. [Tier 1]

Recommendation 3.6: EPA should create a time-stamped, read-only final version of each document that details the planned methods for an IRIS assessment prior to conducting the assessment. [Tier 1]

Finding: The handbook diverges from best practices in systematic review in that the unit of analysis for evidence synthesis and strength of evidence conclusions is not clearly defined in refined PECO statements. The handbook includes a “broad PECO,” intended to support the literature inventory. However, it does not specify the processes for developing more refined PECO statements for each unit of analysis, which could be defined at the endpoint level (e.g., clinical chemistry) or outcome level (e.g., liver toxicity). PECO statements in clinical systematic reviews often employ tiering of outcomes, for instance using hierarchies of broader to narrower categories, distinguishing between “critical” and “important” outcomes, and allowing flexibility to group outcomes (e.g., see Higgins and Thomas, 2019, Section 3.2.4.3). Similar approaches can be employed by the IRIS program to organize endpoints and outcomes. It is recognized, however, that during this planning stage, a systematic and critical evaluation of mechanistic and TK data has not yet occurred, and that the IRIS program will need to rely on expert judgment and input from public comments in utilizing such information in the planning process.

Recommendation 3.7: The IRIS assessment protocol should include refined PECO statements for each unit of analysis defined at the level of endpoint or health outcome. The development of the refined PECO statements could benefit from consideration of available mechanistic data (e.g., grouping together causally linked endpoints, separating animal evidence by species or strain), TK information (e.g., grouping or separating evidence by route of exposure), and information about population susceptibility. [Tier 1]

Findings and Recommendation Related to the Organization of the Hazard Review

Finding: Explicit consideration needs to be given to the organization of the hazard review in the planning stages of the IRIS assessment, but the handbook leaves this planning process under-specified. This is problematic, given that this step represents the highest level of granularity in describing how evidence is to be selected, organized, and grouped going into the data extraction stage of an assessment. For transparency, there needs to be enough detail that evidence synthesis and integration can be readily mapped back to the planned methods.

Finding: Although the “organize hazard review” step is positioned in the handbook after the study evaluation stage of an assessment, it in fact seems that most of the questions being posed in Chapter 7 of the handbook can be answered after the assembly of the literature inventory. The exception is the prioritization of study groups based on the results of the study evaluation step. More clarity about what is being done at this stage of the planning process, in particular which elements would more appropriately be subsumed under other (earlier) stages, is needed.

Finding: This step deviates from best practices in conventional systematic reviews, where all outcomes are prespecified and not subject to change after study evaluation. The handbook does not provide sufficient justification for revisiting the design of the systematic review after study evaluation. In particular, it does not articulate clearly what findings from study evaluation would be sufficient to change the analysis plan.

Recommendation 3.8: The steps of organizing the hazard review should be narrowed to focus on new information obtained after the study evaluation stage. Organizing the hazard review should be structured by clear criteria for triage and prioritization, and aimed at producing transparent documentation of how and why outcomes and measures are being organized for synthesis. [Tier 1]

Findings and Recommendations Related to Mechanistic and Toxicokinetic Data

Finding: Mechanistic and TK data are potentially highly informative during the planning and protocol development process. The current handbook describes these roles in multiple places, and they are not entirely consistent.

Finding: The handbook is not clear as to when mechanistic and TK data may require a separate PECO statement defining a discrete unit of analysis for systematic review, synthesis, and strength of evidence judgments.

Recommendation 3.9: The handbook should describe how the IRIS assessment plan and IRIS assessment protocol can identify the potential roles of mechanistic and TK data, including whether they are to be units of analysis for systematic review, synthesis, and strength of evidence judgments. At a minimum, all endpoints that may be used for toxicity values, including so-called “precursor” endpoints that might be viewed as “mechanistic,” should require separate PECO statements; however, application of systematic review methods to other mechanistic endpoints, such as mutagenicity, may depend on the needs of the assessment. The key mechanistic and TK questions should be identified to the extent possible in the IRIS assessment plan and IRIS assessment protocol. [Tier 1]

Finding: The use of KCs to search, screen, and organize mechanistic data is increasingly accepted and was highlighted in EPA’s presentation to the committee.

Finding: The role of KCs for informing hazard identification has been the subject of debate. They are appropriate for use in evaluating biological plausibility, or lack thereof. However, KCs as currently constructed tend to be sensitive but not necessarily specific. More research is needed into whether and how they can be made more predictive of hazard.

Recommendation 3.10: When available, KCs should be used to search for and organize mechanistic data, identify data gaps, and evaluate biological plausibility. Those uses should be reflected in the IRIS assessment plan and IRIS assessment protocol. [Tier 1]