6

Human-AI Team Interaction

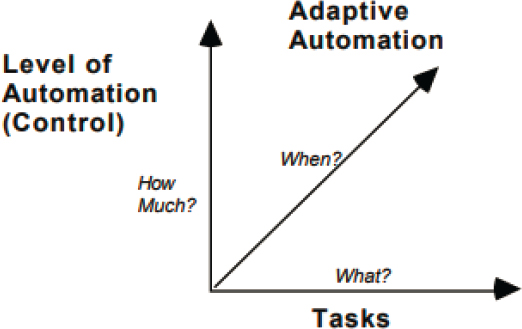

The interaction paradigms used to combine the human and AI system can have a significant effect on the joint performance of the team. These paradigms include level of automation (LOA) (i.e., the amount of control or authority granted to the AI system for a given task or function), when that control is given, the granularity of control needed, and how authority is distributed between the human and AI system (see Chapter 2). These interaction characteristics of the human-AI team are dynamic—the level of automation of the AI system can, in principle, change over time for any function of the system (Figure 6-1).

LEVEL OF AUTOMATION

The LOA, also called the degree of automation, is defined in terms of the ways portions of any given task can be allocated between the human and the automation or AI system (Endsley and Kaber, 1999; Kaber, 2018; Parasuraman, Sheridan, and Wickens, 2000; Sheridan and Verplank, 1978). Research on LOAs has primarily focused on reducing the risks associated with out-of-the-loop (OOTL) performance problems brought on by low situation awareness (SA) of the humans who monitor automation (Endsley and Kiris, 1995). OOTL performance problems can occur when humans have low SA while working with automation, due to (1) problems with monitoring, vigilance, and trust; (2) poor information feedback and low transparency of automated systems; and (3) lowered human engagement under higher LOAs (Endsley, 2017; Endsley and Kiris, 1995; Wickens, 2018). In addition, research has focused on understanding the effects of automation decisions on workload (Evans and Fendley, 2017; Harris et al., 1995; Kaber and Endsley, 2004), and understanding LOA effects on complacency and trust (Parasuraman and Manzey, 2010).

Some authors have criticized LOA taxonomies as not scientifically grounded or useful, as focused on fixed function allocation, and as treating humans and automation as functionally substitutable (Bradshaw et al., 2013; Defense Science Board, 2012; Dekker and Woods, 2002). These claims are refuted by Kaber (2018) and Endsley (2018b), however, who make the case that LOA taxonomies (1) formalize the meaning of “semi-autonomous,” showing the various ways control can be shared across the team for a given function; (2) provide a systematic means of determining the effects of automation on human SA, workload, and performance, linked to cognitive theory; (3) can and do change dynamically over time and are not necessarily fixed or static; and (4) are central to decisions around implementation of automation that must be addressed in system design.

Considerable work has described the effects of LOA on human workload, SA, and performance, showing that which aspect of task performance is automated can affect how well the human performs.

SOURCE: Endsley, 1996, (p. 6). Reprinted with permission from Taylor and Francis Group, LLC.

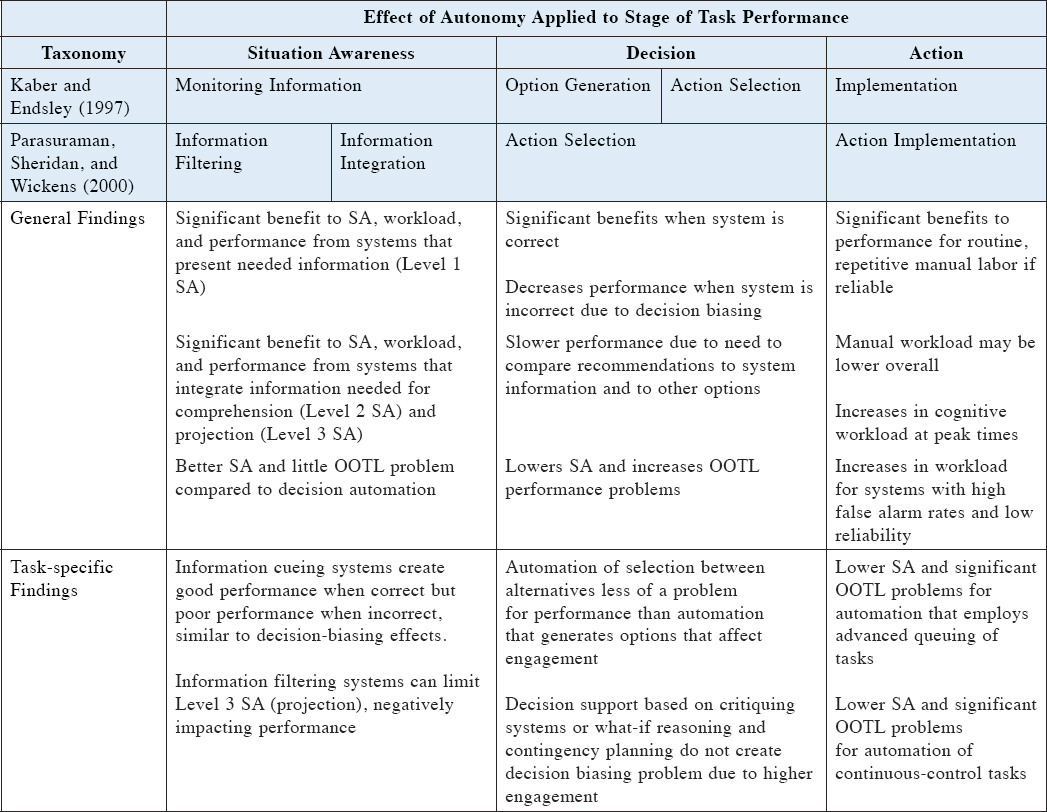

In this research, the roles and responsibilities of the automation were varied systematically according to the LOA taxonomy, and the automation performed a variety of simulated tasks in which it was interdependent with the human for achieving overall common goals; thus, this research met the conditions for investigating human-automation team performance. The stages of task performance that were variously assigned as the responsibility of the human or automation included SA, decision making, and action implementation, as detailed in Table 6-1 (Endsley, 2017, 2018b). The results of these studies showed that the effects of automation depend strongly on how it is applied and integrated with human tasks (see Onnasch et al. (2014) and Endsley (2017) for a detailed review of these findings).
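The idea of assigning each stage of task performance to the human or to the automation can be illustrated with a minimal sketch. The class names, the `SHARED` option, and the three example allocations below are assumptions made for illustration; they do not reproduce the levels of any cited taxonomy or the contents of Table 6-1.

```python
from dataclasses import dataclass
from enum import Enum


class Agent(Enum):
    HUMAN = "human"
    AUTOMATION = "automation"
    SHARED = "shared"  # assumed option for jointly performed stages


@dataclass(frozen=True)
class LevelOfAutomation:
    """Hypothetical record of who is responsible for each task stage."""
    name: str
    situation_awareness: Agent
    decision_making: Agent
    action_implementation: Agent

    def automated_stages(self) -> list[str]:
        """Return the stages assigned wholly to the automation."""
        stages = {
            "situation_awareness": self.situation_awareness,
            "decision_making": self.decision_making,
            "action_implementation": self.action_implementation,
        }
        return [name for name, agent in stages.items() if agent is Agent.AUTOMATION]


# Illustrative allocations only; not the levels of any published taxonomy.
MANUAL = LevelOfAutomation("manual", Agent.HUMAN, Agent.HUMAN, Agent.HUMAN)
DECISION_SUPPORT = LevelOfAutomation(
    "decision support", Agent.SHARED, Agent.SHARED, Agent.HUMAN
)
FULL = LevelOfAutomation(
    "full automation", Agent.AUTOMATION, Agent.AUTOMATION, Agent.AUTOMATION
)
```

Representing an LOA as a per-stage allocation, rather than as a single number, makes it straightforward to ask which stages leave the human out of the loop for a given design.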

Several general findings from this body of work may be relevant to human-AI teaming:

- Ironies of automation: The more advanced the automation, the more crucial the contribution of the human, the less likely the human is to have the manual skills necessary to do the work, and the more likely that workload will be high and advanced cognitive skills will be needed when humans take over task performance (Bainbridge, 1983).

- Lumberjack effect: “More automation yields better human-system performance when all is well but induces increased dependence, which may produce more problematic performance when things fail” (Onnasch et al., 2014, p. 477). Although increased levels of automation may improve workload under normal conditions, the tendency for lower SA increases the likelihood of failed manual recovery.

- Automation conundrum: “The more automation is added to a system, and the more reliable and robust that automation is, the less likely that human operators overseeing the automation will be aware of critical information and able to take over manual control when needed. More automation refers to automation use for more functions, longer durations, higher levels of automation, and automation that encompasses longer task sequences” (Endsley, 2017, p. 8).

- Multitasking effect: As the purpose of automation is often to allow people to perform other tasks, automation both enables and encourages the redirection of human attention, making it more likely that people will be disengaged from oversight over automation or understanding of automation when competing tasks are present (Kaber and Endsley, 2004; Moray and Inagaki, 2000; Parasuraman and Manzey, 2010; Parasuraman, Molloy, and Singh, 1993).

The committee notes that a number of major recommendations have come from this research. AI efforts directed at improving human SA and understanding of events, particularly integrations from large, heterogeneous datasets, will be most useful and least likely to suffer from negative OOTL effects. AI efforts for improving decision making may be useful if combined with information presentations that allow people to easily understand the basis for those recommendations, although this may be subject to the challenges of decision biasing (see Chapter 8). AI efforts that focus on completing an entire function, from data gathering and integration to conducting actions, will put people the most OOTL and make them the most likely to suffer from the consequences of being wrong (i.e., the lumberjack effect). AI that executes tasks to human specifications will reduce some human workload but may either demand additional monitoring to ensure reliable performance or may produce OOTL effects when not performing reliably. Although intermediate LOAs were shown to provide improved SA compared to fully automated systems, this effect is generally insufficient for overcoming OOTL deficiencies.

TABLE 6-1 Summary of Research on Effects of LOA on Human SA, Workload, and Performance

NOTE: SA – situation awareness; OOTL – out of the loop.

SOURCE: Endsley, 2017, (p. 13). Reprinted with permission from Sage Publications.

AI that takes over all aspects of a function for any length of time (high LOAs) significantly increases the likelihood of low SA for the human, and new methods for rapidly regaining SA in the face of AI deficiencies are needed. This is particularly the case for unexpected or “black swan” events (Wickens, 2009). In some cases, it may be necessary for an AI system to take over action execution due to time limitations (e.g., cybersecurity). In these situations, it will be particularly important to focus on improving the transparency of the AI system for humans involved in on-the-loop operations.

Key Challenges and Research Gaps

Although the effects of LOA on human workload, SA, and performance have been addressed by existing research (Endsley, 2018b), the committee finds three primary research gaps in the following areas:

- Methods for supporting collaboration between humans and AI on shared functions;

- Methods for maintaining or regaining SA during on-the-loop operations when working with AI at high LOAs; and

- Methods for managing multiple AI systems, each of which may be operating at a different LOA.

Research Needs

The committee recommends addressing three major research objectives for improving human-AI interaction across LOAs.

Research Objective 6-1: Human-AI Team Task Sharing.

Research is needed to determine improved methods for supporting collaboration between humans and AI systems in shared functions, at intermediate levels of automation. It would be beneficial for this research to focus on a more detailed understanding of the ways that people and AI systems can share tasks (Miller, 2018), and to explore various methods for combining humans and AI systems, to improve cognitive performance and resilience to errors and unforeseen conditions (Cummings et al., 2011; Smith, 2017, 2018).

Research Objective 6-2: On-the-Loop Control.

Methods are needed for maintaining or regaining situation awareness when working with AI systems at high levels of automation. It is expected that human situation awareness will be low and attention will be directed elsewhere during normal operations, but high situation awareness and attention will be needed to deal with off-nominal and unusual events. People are most likely to miss events that are rare, unexpected, not salient, and outside of foveal vision (Wickens, 2009). Although improved automation transparency has been called for (Endsley, 2017; Wickens, 2018), greater transparency will only help once human attention is directed to the AI system. Given that human monitoring of AI systems will be poor and attention to competing tasks likely, methods are needed for supporting the detection of unusual events and situations the AI system is not trained to handle, and for rapidly building human understanding of the situation and the actions of the AI system (Endsley, 2017).

Research Objective 6-3: Multiple-Level-of-Automation Systems.

Given that future multi-domain operations may involve multiple AI systems potentially operating at different levels of automation, research is needed to determine the effects of multiple systems on operator performance and to develop effective methods for managing multiple AI systems (Lee, 2018). It would be advantageous for this research to consider the potential for interdependencies among multiple AI systems and the resulting emergent behaviors, as well as to consider the cognitive overhead needed for tracking and managing multiple AI systems.

AI DYNAMICS AND TEMPORALITY

Another body of research has examined the impact of interjecting periods of manual performance into automated tasks, to improve human engagement and retain skills through adaptive automation (AA) (Rouse, 1988). AA can be triggered based on set time periods, the occurrence of critical events, drops in human performance, physiological measures, or human models (Scerbo, 1996). This temporal mixing of human and automated control has been demonstrated to reduce workload (de Visser and Parasuraman, 2011; Hilburn, 2017; Hilburn et al., 1997; Kaber and Endsley, 2004; Kaber and Riley, 1999), improve operator engagement during periods of manual control (Bailey et al., 2003; Prinzel et al., 2003), improve human-system performance (Parasuraman, Molloy, and Singh, 1993; Wilson and Russell, 2007), and improve skill retention (Volz et al., 2016).
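The trigger categories listed above can be sketched as a simple check that reports which conditions have fired. This is a hedged illustration only: the `OperatorState` fields, the 0-to-1 scales, and every threshold value are assumptions, and the sketch deliberately does not decide whether a fired trigger should shift control toward the human or toward the automation.

```python
from dataclasses import dataclass


@dataclass
class OperatorState:
    """Hypothetical snapshot of inputs an AA trigger policy might consult."""
    seconds_since_last_change: float
    critical_event: bool
    performance_score: float  # assumed scale: 0.0 (poor) to 1.0 (good)
    engagement_index: float   # assumed physiological index, 0.0 to 1.0


def fired_triggers(
    state: OperatorState,
    *,
    period_s: float = 600.0,       # assumed fixed time period
    min_performance: float = 0.6,  # assumed performance floor
    min_engagement: float = 0.3,   # assumed engagement floor
) -> list[str]:
    """Return the names of the trigger conditions that currently hold."""
    fired = []
    if state.seconds_since_last_change >= period_s:
        fired.append("time_period")
    if state.critical_event:
        fired.append("critical_event")
    if state.performance_score < min_performance:
        fired.append("performance_drop")
    if state.engagement_index < min_engagement:
        fired.append("physiological")
    return fired
```

A model-based trigger, the fifth category mentioned above, is omitted here because it would depend on a specific human performance model.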

As an example, the USAF has implemented AA in fighter aircraft via the Automatic Ground Collision Avoidance System, which takes over flight control when imminent terrain collision is detected. This system has been credited with saving multiple lives, but it may increase the risk of complacency (Lyons et al., 2017).

While much research has focused on the AA paradigm alone, the committee recommends that both AA and LOA be considered in conjunction. Examining the two together, Kaber and Endsley (2004) found that, while LOA primarily affects human SA, the amount of time spent in automated versus manual control primarily affects workload and associated propensity for risk taking. It is important to consider that different LOAs can be in effect at different periods of time, which constitutes a major design decision (Feigh and Pritchett, 2014; Kaber and Endsley, 2004). Most tasks can theoretically be performed manually or at varying LOAs at various times or under different conditions. Whereas decisions about LOAs are often part of system design, the flexible-autonomy approach stipulates that LOAs can shift over time, either at human discretion or based on criteria built into the automation (USAF, 2015). When flexible autonomy is employed, methods for achieving effective transitions between humans and automation are needed.

In the committee’s judgment, the ways that automation levels change over time are also in need of further research. Oppermann (1994) and Miller et al. (2005) differentiate between adaptive automation, in which the system assigns the automation level, and adaptable automation, in which the human operator assigns the automation level. Adaptable automation, which keeps the human in the decision loop in terms of the appropriate LOA, is believed to be advantageous because it allows the human to anticipate and prepare for changes in LOA (van Dongen and van Maanen, 2005). Adaptable automation can avoid some of the pitfalls of AA and can result in higher levels of trust, SA, and user acceptance (Parasuraman and Wickens, 2008). As a downside, in certain circumstances operators may become too busy to make LOA changes themselves (Kaber and Riley, 1999), and adaptable automation can involve a higher manual workload (Kirlik, 1993).
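The distinction between adaptive and adaptable automation comes down to who holds the authority to change the LOA. A minimal sketch of that distinction follows; the `LoaController` class, the integer levels, and the `max_level` default are hypothetical and are used only to make the authority rule concrete.

```python
from enum import Enum


class Mode(Enum):
    ADAPTIVE = "adaptive"    # the system assigns the automation level
    ADAPTABLE = "adaptable"  # the human operator assigns the automation level


class Requester(Enum):
    SYSTEM = "system"
    HUMAN = "human"


class LoaController:
    """Hypothetical controller that gates LOA changes by who may make them."""

    def __init__(self, mode: Mode, level: int = 0, max_level: int = 3) -> None:
        self.mode = mode
        self.level = level
        self.max_level = max_level

    def request_change(self, requester: Requester, new_level: int) -> bool:
        """Apply the change and return True only if the requester has authority."""
        if not 0 <= new_level <= self.max_level:
            raise ValueError(f"level must be in 0..{self.max_level}")
        authorized = (
            (self.mode is Mode.ADAPTIVE and requester is Requester.SYSTEM)
            or (self.mode is Mode.ADAPTABLE and requester is Requester.HUMAN)
        )
        if authorized:
            self.level = new_level
        return authorized
```

In the adaptable mode the system's request is rejected, so any LOA change is one the operator initiated and can therefore anticipate; the cost, as noted above, is that the operator must find time to make the change.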

In her review of the adaptable and adaptive approaches to flexible automation, Calhoun (2021) reported that studies comparing these two approaches have generally found that adaptable automation is beneficial in terms of improved workload, task performance, and subjective preference; however, she noted that the research base is limited. Miller et al. (2005) recommend that a mix of both adaptable and adaptive approaches to flexible automation may be warranted, with considerations of workload, competency, and automation predictability serving as critical mediators. In the committee’s judgment, more research is needed on the relative costs and benefits of human- versus automation-based changes in LOA over time.

Key Challenges and Research Gaps

The committee finds two main research gaps that exist with respect to flexible automation, in the areas of:

- Best methods for supporting dynamic transitions between LOAs over time to maintain optimal human-AI team performance, with a consideration of both adaptable and adaptive automation approaches; and

- Requirements and methods for supporting SA, collaboration, and other teaming behaviors generated by dynamic functional assignments across the human-AI team.

Research Needs

The committee recommends addressing two major research objectives for improving human-AI teaming using a flexible automation approach.

Research Objective 6-4: Flexible Autonomy Transition Support.

Research is needed to determine the best methods for supporting dynamic transitions in levels of automation over time to maintain optimal human-AI team performance, including when such transitions should occur, who should activate them, and how they should occur (USAF, 2015). It would be useful for this research to identify the task factors or contexts important for making temporal transition decisions in multi-domain operations, and mechanisms needed for those transitions. Methods for managing workload spikes and avoiding untimely interruptions need to be addressed (Feigh and Pritchett, 2014).

Research Objective 6-5: Support for Flexible Autonomy.

Research is needed to determine the new requirements generated by dynamic functional assignments across the human-AI team, including new situation-awareness needs, collaboration requirements, and other necessary teaming behaviors. Shared situation-awareness needs for supporting temporal shifts in functional assignments across the human-AI team need to be determined, and research on how best to provide the necessary information for making such shifts is also needed.

GRANULARITY OF CONTROL

A third major factor relevant to human-AI interaction is the control granularity, which is the degree of specificity of control input required to interact with the AI system (USAF, 2015). Granularity of control (GOC) can be manual or programmable, with programmable GOC necessitating the programming of task specifications and parameters. GOC can also involve Playbook control, in which operators choose from a “Playbook” of adaptable, preset behaviors (Miller, 2000; Miller and Parasuraman, 2007); or it can involve goal-based control, in which only high-level goals must be provided to the AI system (USAF, 2015).

Programmable control, common for many automation systems, involves significant workload because the human must set up and control the automation under different conditions at various times. AI will likely operate at much lower levels of granularity, avoiding this problem. Systems with lower GOC, such as Playbook approaches, may reduce the amount of work needed to interact with the AI system.

Plays and Playbook-style delegation architectures have been studied and shown to have promise for military applications (Miller and Parasuraman, 2007). Plays, in this tradition, are templates of behavior known to be effective for accomplishing specific goals. Within a play, a method is a kind of task decomposition that is constrained yet offers flexibility, either for the supervisor to further restrict or specify during delegation, or for the subordinate to select during execution. Playbook delegation has been shown to be effective in reducing workload (Fern and Shively, 2009), even in unpredictable (Parasuraman et al., 2005) or non-optimal play environments, meaning those that do not conform to the conditions for which the plays were designed (Miller et al., 2011). Playbooks have been found to be easy for users to understand while allowing for a wide range of autonomous behavior. Recent work has shown that play-based architectures are effective in multiple-actor, multiple-unmanned-aerial-vehicle control (Behymer et al., 2017; Draper et al., 2017). Potential benefits of play-based approaches include a vocabulary that allows shared expectations for human-human or human-automation communication about task performance, and streamlined communication about behaviors and outcomes, through a framework or contract against which performance can be reported and evaluated (Miller, 2014). More advanced AI, particularly based on deep-learning approaches, has not yet been integrated with play-based approaches.
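The relationship between a play, its methods, supervisor restriction, and subordinate selection can be sketched as follows. This is an illustrative sketch under stated assumptions, not the design of any Playbook system cited above: the `Play` class, the `delegate` and `execute` functions, and the example play and method names are all hypothetical.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Play:
    """Hypothetical play: a goal plus the methods permitted for achieving it."""
    name: str
    goal: str
    methods: tuple[str, ...]

    def delegate(self, allowed: Optional[tuple[str, ...]] = None) -> tuple[str, ...]:
        """Supervisor step: optionally restrict the methods left open."""
        if allowed is None:
            return self.methods
        unknown = set(allowed) - set(self.methods)
        if unknown:
            raise ValueError(f"not part of play {self.name!r}: {sorted(unknown)}")
        # Preserve the play's own ordering of methods.
        return tuple(m for m in self.methods if m in allowed)


def execute(options: tuple[str, ...], preferred: str) -> str:
    """Subordinate step: choose among the methods the supervisor left open."""
    if not options:
        raise ValueError("no methods available for execution")
    return preferred if preferred in options else options[0]


# Hypothetical example play; the names are invented for illustration.
monitor = Play(
    name="monitor_target",
    goal="keep a target under observation",
    methods=("orbit", "racetrack", "hover"),
)
open_methods = monitor.delegate(allowed=("racetrack", "orbit"))
chosen = execute(open_methods, preferred="hover")
```

Here the supervisor rules out one method at delegation time, and the subordinate's preferred method is honored only if it remains within the constrained set, which is the "constrained yet flexible" property the text describes.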

Key Challenges and Research Gaps

The committee finds two major research gaps related to GOC, in the following areas:

- Effects of Playbook control on SA and OOTL; and

- Applicability of Playbook control to new applications relevant to multi-domain operations.

Research Needs

The committee recommends addressing two major research objectives related to the use of GOC as a method for integrating human-AI teams.

Research Objective 6-6: Granularity of Control (GOC) and AI Transparency.

Although systems with low GOC, such as those using Playbook control or goal-based control, promise to lower workload, the transparency of these systems and their effects on operator situation awareness need to be further researched. Because systems with low GOC involve increased queuing of tasks, situation awareness may decrease and the human may be less likely to detect the need for interventions in non-normal conditions (Endsley, 2017). Methods for improving situation awareness within low-GOC systems are needed.

Research Objective 6-7: Playbook Extensions for Human-AI Teaming.

It would be useful for the utility of plays and Playbooks for human-AI teaming to be studied on several novel fronts, including (1) the utility of a play-based framework in communication and mental model formation before and after mission execution activities (e.g., training, debriefing, change awareness); (2) the utility of plays (and hierarchical task frameworks) in organizing, constraining, and framing change awareness of learning results against prior, functional baselines; and (3) the utility of communication based on play (and hierarchical task decomposition) frameworks for intent-centered communications between human and AI team members and how such communications need to be constructed.

OTHER HUMAN-AI TEAM INTERACTION ISSUES

The committee finds that a number of additional human-AI interaction issues remain largely unexplored and need further research. There is a paucity of design and engineering methods to support a fine-grained analysis of the interactions between human and machine agents that are necessary for optimal joint performance (Roth and Pritchett, 2018; Roth et al., 2019; Smith, 2018). Roth and colleagues (2019) argue that methods are needed to support (1) “analyzing operational demands and work requirements” (i.e., context of use); (2) “exploring alternative distribution of work” across human and AI agents; (3) “examining interdependencies” among human and AI agents required for effective “performance under both routine and off-nominal (unexpected) conditions”; and (4) “exploring the trade-space of alternative” human-AI team options (p. 200).

Several promising efforts have begun to address these needs. Matt Johnson and colleagues (Johnson, Bradshaw, and Feltovich, 2017; Johnson, Vignati, and Duran, 2020) have developed detailed representations and modeling techniques for analyzing alternative methods of distributing work across humans and AI systems, and they described the implications of these techniques for detailed human-AI interaction, including a consideration of how different human and machine agents could support each other. In addition, Elix and Naikar (2021) are developing methods to analyze how work can be shared and/or shifted fluidly between agents, and IJtsma et al. (2019) are developing computational methods for “determining the allocation of work and interaction modes for human-robot teams” (p. 221). Calhoun (2021) and Roth and colleagues (Roth et al., 2017, 2018) are developing frameworks to inform detailed human-machine teaming interaction design. McDermott et al. (2017, 2018) have produced human-machine teaming systems engineering guidance to inform military system acquisition.

Key Challenges and Research Gaps

The committee finds three key research gaps in the area of human-AI team interaction:

- Prediction of emergent behaviors in human-AI team interaction;

- Effects of human-AI team interaction design on skill retention, training requirements, job satisfaction, and resilience; and

- Predictive models of human-AI team performance in both routine and failure conditions.

Research Needs

The committee recommends addressing two research objectives for improving human-AI interaction.

Research Objective 6-8: Human-AI Team Emergent Behaviors.

People often change their behaviors in unpredictable ways in response to automation (Woods and Hollnagel, 2006). Research is needed to better predict how human behaviors will change with the introduction of AI systems, and the potential interactions of human behaviors with AI system behavioral changes, as one influences the other. It would be beneficial for this research to explore how various forms of interaction will influence cognitive performance (Smith, 2018).

Research Objective 6-9: Human-AI Team Interaction Design.

Research is needed to better understand the effects of interaction design decisions on skill retention, training requirements, job satisfaction, and overall human-AI team resilience (Roth et al., 2019). In addition, methods for managing the inherent tradeoffs in human-AI team design are needed (Hoffman and Woods, 2011; Roth et al., 2019).

SUMMARY

A number of factors associated with combining humans and AI systems into teams, including the ways tasks are temporally and functionally distributed between team members, have a significant effect on the performance of the human-AI team. The LOA and the ways that LOA assignments can change over time present a key design decision for human-AI teams. Research is needed to better support flexible automation, to support low-workload GOC approaches such as Playbook control, and to explore additional features of human-AI interaction in team settings. Models of human-AI interaction need to be developed to predict the outcomes of design decisions in routine and failure conditions, as well as potential emergent behaviors.