7

Trusting AI Teammates

Trust can be defined as “the attitude that an agent will help achieve an individual’s goals in a situation characterized by uncertainty and vulnerability” (Lee and See, 2004, p. 2). Trust can mediate the degree to which people rely on each other or on a technology such as AI. Studies of trust in technology (e.g., automation, computers, robots, and AI) have emerged in many work domains, including automotive, retail, healthcare, education, military, and cybersecurity (Chiou and Lee, 2021; Siau and Wang, 2018). Although myriad studies have investigated the antecedents of human trust in technology, such as personality types and characteristics of the technology, many studies concerned with system performance focus on trust defined or operationalized as reliance on or compliance with another agent (Kaplan et al., 2021).

Although this work remains useful, the committee notes two critical issues that impede future progress in understanding the role that trust plays in human-AI teaming. These issues are (1) the lack of research on understanding how organizational and social factors surrounding AI-enabled systems—including how goals are adapted, negotiated, or aligned—inform the interdependent process of trusting; and (2) the strict definition of trust that limits its study to factors affecting reliance or compliance behaviors in the context of risk, rather than as a process that develops across multiple interactions and decision situations and affects broader sociotechnical and societal outcomes, such as cooperation (Coovert, Miller, and Bennett, 2017; Lee and Moray, 1994; Riley, 1994). One of the myriad factors affecting the organizational and social contexts of a team, albeit a novel one, will be the presence of one or more AI team members, and thus, trust in a team will include and be impacted by the perceived and projected decisions, actions, and impacts that the AI team member will have.

TRUST FRAMEWORKS PAST AND PRESENT

An early integration of the trust literature (Lee and See, 2004) showed that trust is one of many factors that affect reliance and compliance behaviors with automation. This framework of trust shows that self-confidence and other attitudes combine with trust to guide intention; and multiple factors, including workload and task demands, combine with intention to guide a person’s action. Fishbein and Ajzen’s theory of reasoned action (Ajzen and Fishbein, 1980; Fishbein and Ajzen, 1975) provides a framework that links belief, attitude, intention, and behavior in a model that has been broadly applied, including to computer system acceptance (Davis, Bagozzi, and Warshaw, 1989). More recent reviews of trust in automation present evidence that, although there are similarities between people’s social responses to technology and to other people (Nass and Moon, 2000), trust in technology may differ from trust in other people (Madhavan and Wiegmann, 2007). Others advance a deeper description of trust development, in terms of a framework containing three layers of trust: dispositional, situational, and learned (Hoff and Bashir, 2015). Three categories of factors have been found to influence trust: human, technology, and environmental (Hancock et al., 2011; Kaplan et al., 2021). In the committee’s judgment, consistent outcomes of these analyses since 2004 include (1) trust involves analytic and affective processes; (2) trust affects decisions to rely on or comply with technology; and (3) trust is influenced by the qualities of a person, the technology, and the environment.

Another consistent finding from Lee and See (2004) through Kaplan et al. (2021) and the broader trust literature is that trust influences and is influenced by other humans who might use the automation. This idea has led to concepts like distributed dynamic team trust (Huang et al., 2021), which stems from research showing that trust in technology affects both active users and passive users (i.e., people whose interactions may be mediated or interrupted by technology) (Montague, Xu, and Chiou, 2014). Domeyer et al. (2019) reported that trust can reflect differently among incidental or indirect users (i.e., people who are not the direct customers or beneficiaries of that specific technology) when AI is deployed in systems that are more open. In the committee’s judgment, trust depends on social interactions such as reputation and the formal or informal communication that contributes to that reputation, such as gossip. The committee believes this social dimension of trust, distinct from the cognitive dimensions of trust, may become more prominent in human-AI teaming situations in which an AI system is capable of more autonomy in specific roles, or when human-AI teams are completing certain tasks or operations (Chiou and Lee, 2021; Sheridan, 2019).

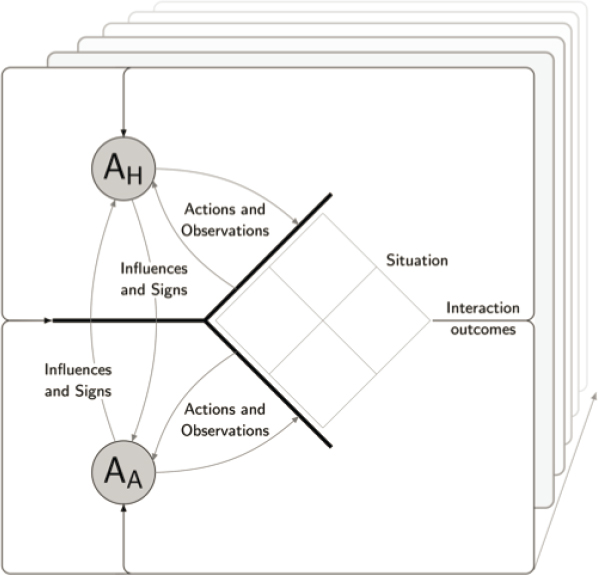

Figure 7-1 provides a model of relational trust that illustrates interactions between two agents. This model does not address distributed trust involving groups of three or more (Huang et al., 2021), or how distrust might spread through reputation within a network of agents (Riegelsberger, Sasse, and McCarthy, 2005). Chiou and Lee (2021) portray in Figure 7-1 that a trusting, highly capable automation depends on social decision situations that are embedded in a goal environment. Trust evolves from repeated interactions between a human agent (AH) and an automated agent (AA) and the outcomes of those interactions over time. Fading in this figure depicts future situations. The focus on dyadic exchanges highlights the structural influences of trust through interactions that are

SOURCE: Chiou and Lee, 2021, (p. 8). Reprinted with permission from Sage Publications.

not usually explicit in studies on trust between more than two interacting agents. Although this model has limitations, it uses the dyad as a simple unit of analysis to explore the relationships between inter-agent coordination and cooperation (Williams, 2010).

TRUSTING AI IN COMPLEX WORK ENVIRONMENTS

Most team, AI, and automation trust literature assumes a shared goal. In the committee’s opinion, goals may become misaligned to various degrees in fast-changing, adversarial environments. Although squadron leaders have some judgment-based decision authority for on-the-ground decisions, for example, broader strategic goals could come into conflict with the goals of other units, as new information emerges. When we consider human-AI teaming in multi-domain operations (MDO) environments, there is an even more pervasive demand for rapid information gathering, processing, filtering, and communicating, to support strategic decision making between agencies in decision contexts that are also rapidly changing. Although organizational process controls may address this—through appropriate, interdependent performance measures that foster cooperation within and across agencies, for example— trust is likely to be central to smoothing these complex interactions. For the success of human-AI teams, the committee believes it is crucial to understand how the organizational and social contexts of those teams affect the evolution of trust at the interactive, front-line level of decision making (e.g., within the context of the conflicting goals of preserving the safety of civilians versus mission accomplishment). This understanding can also partly inform how trust between teams evolves (e.g., the scenario in Gao, Lee, and Zhang, 2006). Trust in other teams is complicated by the need to understand the capacity of the other team’s AI system and the influence of that system on the team. Team situations require the integration of an individual with the team’s goals, and, in the extreme, being a “team player” may mean a willingness to set aside individual goals and cooperate on shared, team goals.

The committee finds that any assumption of shared goals in teaming merits examination, as exemplified by AI team members such as HAL 9000 in Stanley Kubrick and Arthur C. Clarke’s novel and film 2001: A Space Odyssey, and Murderbot from Martha Wells’s 2017 novella All Systems Red. In military contexts, the dilemma of whether people should be sacrificed to achieve broader strategic goals seems central to trusting AI team members. The challenge emphasized in this report is less about the moral philosophy or ethics behind decisions, and more about how local goals will need to be adapted, negotiated, or aligned to achieve global use of human-AI teams in complex and dynamic task environments. In such environments, AI systems may be distrusted not because they perform poorly, but because they act on a broader information array that conflicts with the narrower information array available to the human, resulting in misaligned goals. In addition, the effects of concept drift, in which current situations are different from the situations the AI has been trained for (Widmer and Kubat, 1996), can negatively affect goal alignment and trust. In teaming, the process of adapting, negotiating, and aligning goals with actions depends on trust. Until recently, guidance on how to specify those increasingly complex contexts has been sparse.

In the committee’s judgment, understanding the context in which AI systems will be implemented within MDO, whether in the office, on the ground, in the air, or at sea, is critical to specifying the goal environment. For example, recent work on standardizing evaluation criteria for trusting AI systems (Blasch, Sung, and Nguyen, 2020) focused heavily on criteria that identify the AI system’s information credibility. This focus would be less appropriate for evaluating the trustworthiness of AI systems involved in cybersecurity access control, scheduling and logistics, or resource management domains, among many other areas with differing task demands within their respective goal environments. Even in the comparatively restricted realm of plays and Playbooks, agents must be delegated some authority to behave within the constraints of the play if there are to be workload savings for the team (Miller and Parasuraman, 2007), and truly autonomous and independent agents can, presumably, always choose to violate their orders if trust or willingness to sacrifice is lacking.

KEY CHALLENGES AND RESEARCH GAPS

The committee finds two key challenges that need to be addressed for improving the understanding of trust in an AI teammate, particularly in the MDO context.

- Trust research, testing, and evaluation environments need to be reframed. This can be accomplished by specifying the social structure of team decisions in trust research, and by moving toward directable and directive interactions, not just transparent and explainable interactions.

- Trust measures and statistical models need to be reframed. This can be accomplished by moving from technology reliance to team coordination and cooperation, delineating distrust from trust, considering dynamical models of trust evolution, and thinking about trust as a process that emerges from interacting dyads as well as from multi-echelon networked agents.

RESEARCH NEEDS

The committee recommends addressing six major research objectives for improving trust in human-AI teams.

Research Objective 7-1: Effect of Situations and Goals on Trust.

To establish baseline models of trust across studies of interacting agents in various operational task contexts, situation structures could be used to study how the goal environment affects trust decisions. Situation structures (different from the task situation defined in Chapter 4) refer to a formalism in social decision making based on interdependence theory (Kelley and Thibaut, 1978) and are commonly represented as a 2 x 2 decision matrix. “[A] situation structure specifies the choices available to each actor in a dyad, the outcomes of their choices, and how those outcomes depend on the choices of the other agent” (Chiou and Lee, 2021, p. 9). Situation structures are useful in determining the relative advantages of trust for cooperation or coordination in an environment. Situation structures can be used as an organizing framework for designing evaluation environments (e.g., testbeds) for trust research in human-AI teaming (Chiou and Lee, 2021).

Trust calibration, which is to align a person’s trust with the automation’s capabilities, is often described as a prerequisite for superior human–AI performance (Lee and See, 2004). However, conflicting results show that trust calibration alone is not sufficient for superior performance (McGuirl and Sarter, 2006; Merritt et al., 2015; Zhang et al., 2020). The situation structures show that when the performance profile of the person and the automation are highly correlated, trust calibration does not matter (Zhang et al., 2020). (Chiou and Lee, 2021, p. 10)

Furthermore, situation structures can be used to show when it might be appropriate for humans to rely on AI, even when AI performs worse on a task than humans would, “because relying on the automation enables [humans] to shift attention to a more important activity” (Chiou and Lee, 2021, p. 11). Situation structures encourage an analysis that considers reliance on AI in a broader sociotechnical context. Formally representing situations as decision matrices can be one way for researchers and developers to quickly identify trust-relevant contextual similarities across empirical studies and to evaluate the generalizability of those studies. Identifying these common structures can also help explain the variable and seemingly contradictory findings in the trust literature, and can help define the contexts within which we can begin to find consistent results. Field studies that leverage mixed methods, including anthropological studies with rich qualitative datasets, are also necessary to help identify the many situations that might exist within a task environment. Other computational approaches (e.g., agent-based modeling and hidden Markov modeling) that capture interaction patterns and investigate outcomes of interactions (Cummings, Li, and Zhu, 2022; Juvina, Lebiere, and Gonzalez, 2015) can also benefit from these formal representations of situations. Beyond identifying the situation structure, identifying strategies for negotiating these situations is a closely related issue. Such strategies could help the AI teammate maintain cooperation in a situation in which competition is likely (Chiou and Lee, 2021).
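As an illustration only, a 2 x 2 situation structure of the kind described under Research Objective 7-1 could be represented as a small payoff table. The sketch below is not drawn from Chiou and Lee (2021): the class name, the payoff values, and the use of a standard game-theoretic stability check (no agent gains by switching unilaterally) to flag decisions that "require negotiation" are all assumptions made for this example.

```python
from dataclasses import dataclass
from itertools import product


@dataclass(frozen=True)
class SituationStructure:
    """Hypothetical 2 x 2 situation: each agent picks choice 0 or 1.

    payoffs[(h, a)] = (outcome for the human, outcome for the automation),
    where h is the human's choice and a is the automation's choice.
    """
    payoffs: dict[tuple[int, int], tuple[float, float]]

    def best_responses_human(self, a: int) -> set[int]:
        values = [self.payoffs[(h, a)][0] for h in (0, 1)]
        return {h for h in (0, 1) if values[h] == max(values)}

    def best_responses_automation(self, h: int) -> set[int]:
        values = [self.payoffs[(h, a)][1] for a in (0, 1)]
        return {a for a in (0, 1) if values[a] == max(values)}

    def stable_outcomes(self) -> list[tuple[int, int]]:
        """Cells where neither agent gains by switching on its own."""
        return [
            (h, a)
            for h, a in product((0, 1), repeat=2)
            if h in self.best_responses_human(a)
            and a in self.best_responses_automation(h)
        ]

    def joint_best(self) -> tuple[int, int]:
        """Cell with the highest combined outcome (first one on ties)."""
        return max(self.payoffs, key=lambda cell: sum(self.payoffs[cell]))

    def requires_negotiation(self) -> bool:
        """Assumed criterion: the jointly best cell is not self-enforcing."""
        return self.joint_best() not in self.stable_outcomes()


# Illustrative payoffs (assumed values, not empirical data).
# Coordination: both agents do best by matching each other's choice.
coordination = SituationStructure({
    (0, 0): (2, 2), (0, 1): (0, 0),
    (1, 0): (0, 0), (1, 1): (1, 1),
})
# Social dilemma: choice 0 helps the team, choice 1 favors the individual.
dilemma = SituationStructure({
    (0, 0): (3, 3), (0, 1): (0, 5),
    (1, 0): (5, 0), (1, 1): (1, 1),
})

print(coordination.stable_outcomes(), coordination.requires_negotiation())
print(dilemma.stable_outcomes(), dilemma.requires_negotiation())
```

Under these assumed payoffs, the coordination structure has two self-enforcing cells, so the open question is which one the agents settle on; the dilemma structure has a jointly best cell that neither agent would choose on individual incentives alone, which is the kind of situation in which trust and goal negotiation would be expected to matter most.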

Research Objective 7-2: Effects of Directability on Trust.

Concepts like transparency and explainability would benefit from being accompanied by concepts like directability, to better support dynamic, trusting relationships in complex task environments. Directability is “one’s ability to influence the behavior of others and complementarily be influenced by others” (Johnson and Bradshaw, 2021, p. 390). Designing for AI system transparency and explainability remains important for communicating purpose, process, and performance information about an AI teammate (see Chapter 5). Such information, when communicated effectively, can facilitate smoother human-AI team integration by engendering, repairing, and sustaining trusting relationships. However, in the committee’s judgment, studies that focus exclusively on how design techniques can improve human or human-AI performance tend to miss the relational aspects of these constructs that are foundational for trust. These relational aspects include the idea that transparency can mean different things to different stakeholders within and between organizations or organizational units (Ananny and Crawford, 2016), and that anthropomorphism can enable a sense of efficacy with and understanding of AI, yet its effects are highly variable and sometimes inappropriate (Epley, Waytz, and Cacioppo, 2007). To be deemed an explanation according to human social interactions, explanations must be presented relative to the explainer’s beliefs about the receiver’s beliefs (Miller, 2019). Furthermore, transparency and explanations may not always be necessary or possible, depending on the teaming arrangement (e.g., in human-animal teams that do not share mental models or communication modalities).

In the committee’s opinion, responsivity may be a more useful concept for teaming with AI. AI responsivity refers to “the degree to which the automation effectively adapts to the person and situation” and “captures the notion that [AI] could affect trust development through its responses to common ground variables” (Chiou and Lee, 2021, p. 6). Common ground, which is a concept related to shared mental models (see Chapter 3) and shared situation awareness (see Chapter 4), refers to “the mutual knowledge, beliefs, and assumptions that support [the type of] interdependent actions” (Klein et al., 2005, p. 146; Chiou and Lee, 2021, p. 6) required in teamwork, not just task work.

Therefore, beyond thinking about transparency and explainability (or related concepts like legibility, observability, and explicability) in a more relational way, AI responsivity requires making information on the purpose, process, and performance of the AI system directable and observable (i.e., transparent). To what extent does sharing transparency information, versus behaving in a transparent manner, affect in-the-moment decisions (Takayama, 2009)? Further, AI responsivity may provide the AI system with the ability to observe and direct its human counterparts (Johnson and Bradshaw, 2021). This means going beyond simply presenting information that “privilege[s] seeing over understanding” (Ananny and Crawford, 2016, p. 8), and advances from the notion that AI reliability alone affects trust, if trusting relationships are to be sustained over time and across contexts. In the committee’s judgment, this seems critical for the coordination and timing of AI contributions, balancing workload, and sharing tasks according to expertise, as effective teams often do. Perhaps more important is understanding whether the dynamic interaction can help to align goals for cooperation. Team situations require that people align their goals and cooperate. Although this requirement is often assumed during the formation of a team, in complex work environments with fast-paced, changing conditions, goal alignment and cooperation may additionally require setting aside individual goals (or initial goals) to align quickly to the updated goals that are in the best interests of the team. Analyzing the situation structure can identify the degree to which individual goals must be adjusted to align with team goals.

Research Objective 7-3: Cooperation as a Measure of Trust.

In teaming structures, behavioral measures of cooperation would be useful to employ, to understand when trust matters beyond reliance and compliance in function allocation structures. Team science literature provides a useful framework for understanding human-AI teaming (i.e., interactions with increasingly capable automation) because teams in general tend to be composed of members with high levels of autonomy, meaning team members do not have complete control over other team members (NRC, 2015). Therefore, an assumption of teaming is that it is not deterministic (i.e., not akin to choreographing a collection of autonomous agents), but that coordination emerges from the team’s interactions (Cooke et al., 2013), with trust playing a central role (i.e., in teamwork). In the function allocation approach outlined in early conceptualizations of human-automation systems (Fitts, 1951; Sheridan and Verplank, 1978), which remains prevalent in modern applications of AI with well-defined roles narrowly scoped to a particular task, reliance and compliance may make sense as primary behavioral outcomes of interest when it comes to human trust. However, coordination to achieve a specific goal in laboratory studies of teaming needs to be distinguished from coordination in more complex environments, in the field or in simulated settings, that involve uncertainty and changing goals. These environments often demand a dynamic process of trust that is central to coordination.

Whereas “coordination is about the timing or arrangement of joint decisions” (Chiou and Lee, 2021, p. 10), or dependency management (Malone and Crowston, 1994), cooperation is about negotiating and aligning individual goals when they differ from the joint goal, and it is best identified in team members willing to give up individual benefit to achieve greater benefits for the team. “Negotiating goals to cooperate—by way of trust—has different outcomes compared to coordination. Importantly, situation structures indicate which decisions require negotiation” (Chiou and Lee, 2021, p. 10). Although “trust plays a role in both coordination and cooperation situations, for coordination, the benefits of trust” (Chiou and Lee, 2021, p. 10) tend to be more interpersonal (similar to how perceived collegiality accumulates as social capital to facilitate positive downstream outcomes in a relationship) whereas, in cooperation situations, “trust directly affects the immediate decision outcome” (Chiou and Lee, 2021, p. 10). Strain test situations are one example in which decisions to cooperate can emerge from interactions. If a team member cooperates, or meaningfully helps a teammate in the moment, even though it incurs some cost to the team member (e.g., time, other resources), then that team member is said to have “passed” the strain test, and such actions are known to bolster trust in a relationship (Simpson, 2007). Behavioral outcome measures of trust that do not interfere with work performance and dynamics should be considered.

Research Objective 7-4: Investigations of Distrust.

In teaming environments that are highly interactive, more granular measures may be needed to consider distrust separately from trust, rather than as opposing ends of the same linear scale. Studies on highly reliable (but not perfect) automation that fails have shown a resulting bimodal distribution in trust, which is not well explained by individual differences, such as predisposition to trust. Furthermore, there is a theoretical argument (Harrison McKnight and Chervany, 2001), and more recent empirical evidence (Dimoka, 2010; Schroeder, Chiou, and Craig, 2021; Seckler et al., 2015) that distrust is a separate, albeit related, construct from trust, and that there is merit in viewing trust and distrust as separate, simultaneously operating concepts. Yet, many studies that focused on trust in technology do not measure distrust separately from trust, possibly because influential instruments developed to measure trust have suggested that trust and distrust could be treated as part of the same continuum (Jian, Bisantz, and Drury, 2000). One working hypothesis from this committee is that active suspicion of a teammate is a different mode from looking for reasons to trust, and that distrust and trust may be more about switching between modes. For example, a system may be mistrusted due to its performance capabilities or due to a suspicion that it has been hacked. Therefore, as a dynamical system, human-AI teaming may involve not only calibrating trust or developing trust with one another (i.e., seeking out reasons to trust), but may also involve detecting adversarial behavior that leads to distrust.

Research Objective 7-5: Dynamic Models of Trust Evolution.

Dynamic models of trust evolution within specified goal environments are needed, which go beyond eliciting and categorizing the factors that could affect trust in an AI teammate. For example, research has shown that trust can be lost after a system failure and may take time to recover, and that automation failures have a greater effect on trust than automation successes (de Visser, Pak, and Shaw, 2018; Lee and Moray, 1992; Lewicki and Brinsfield, 2017; Reichenbach, Onnasch, and Manzey, 2010; Yang, Schemanske, and Searle, 2021). As an analog for team outcomes, Gottman, Swanson, and Swanson (2002) show how marriage outcomes can be described and predicted through dynamical systems analysis and differential equations, an approach that is not about understanding individual differences, spousal traits, or environmental factors, but about the dynamics of partner communication. Such models are needed to understand how trust evolves and affects performance outcomes in various human-AI team contexts. These contexts can be specified through situation structures after identifying and understanding the goal environment of the human-AI team, shown in Figure 7-1, which envelops the task environment.

The citations in the previous paragraph, focused on eliciting and categorizing factors that could possibly affect trust in an AI teammate, may be useful for understanding the state-of-the-art in research (e.g., Kaplan et al., 2021), but may also do little to advance our understanding of trust dynamics, such as how trust evolves in real-world, interactive contexts. Furthermore, factor analyses that rely on a layperson’s concept of the word “trust” may dilute, or worse, lead astray from, the rigorous, theoretical concept of trust as something influenced by the purpose, process, and performance information of a partner in work contexts (Lee and See, 2004; Long et al., 2020; McCroskey and Young, 1979). Dynamic models of trust help make better use of the behavioral responses that are associated with trust and that are often the primary outcomes of interest with respect to understanding trust. Such dynamic trust models, and their use of contemporary datasets, can help to capture the granularity of how interactions in one context might influence subsequent interactions in another context.
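To make the idea of a dynamic trust model concrete, the following minimal sketch encodes the two qualitative findings cited under Research Objective 7-5: failures move trust more than successes do, and trust takes time to recover after a failure. The update rule, the function names, and the rate parameters `gain` and `loss` are assumptions chosen for illustration; they are not fitted to any dataset and are not the models used in the cited studies.

```python
def update_trust(trust: float, success: bool,
                 gain: float = 0.05, loss: float = 0.30) -> float:
    """One interaction step for a trust level kept within [0, 1].

    Assumed rule: a success closes a fraction `gain` of the gap to full
    trust; a failure removes a fraction `loss` of current trust.
    Setting loss > gain encodes the asymmetry between failures and successes.
    """
    if success:
        return trust + gain * (1.0 - trust)
    return trust - loss * trust


def simulate(outcomes, initial: float = 0.5, **rates) -> list[float]:
    """Return the trust trajectory over a sequence of True/False outcomes."""
    history = [initial]
    for ok in outcomes:
        history.append(update_trust(history[-1], ok, **rates))
    return history


# Ten successes, one failure, then ten more successes (hypothetical sequence).
outcomes = [True] * 10 + [False] + [True] * 10
history = simulate(outcomes)

drop_from_failure = history[10] - history[11]
gain_from_last_success = history[10] - history[9]
print(f"before failure: {history[10]:.3f}")
print(f"after failure:  {history[11]:.3f}")
print(f"after ten further successes: {history[21]:.3f}")
```

With these assumed rates, the single failure removes far more trust than any single success had added, and ten further successes still leave trust slightly below its pre-failure level. A model intended for research use would need its structure and parameters estimated from interaction data within a specified goal environment.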

Research Objective 7-6: Trust Evolution in Multi-Echelon Networks.

Trust between multi-echelon networked agents has unique properties that cannot be captured by studying dyadic trust alone. Proposed frameworks of trust in self-organizing, agent-based automation and AI-enabled teammates have raised issues including the concept of trust transitivity among multi-agent teams (Huang et al., 2021; Lee, 2001). Also, a recent meta-analysis identified reputation as an impactful AI-related antecedent of trust (Kaplan et al., 2021). Though studies of dyads remain critical for understanding the dynamics of trust through interactions, effects on critical team concepts like coordination, cooperation, or competition, and human decision making with technology, dyads are limited for understanding the spread of trust in multi-echelon networked agents and the effects of trust on broader outcomes like organizational performance (Moreland, 2010; Williams, 2010). Understanding coalition building across teams and how peripheral stakeholders within the human-AI team’s goal environment form various situation structures requires further study involving larger units of analysis, including teams of three to eight, teams of teams, and networks.
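One simple way to see why networked trust cannot be read off from dyads alone is to sketch trust transitivity over a directed graph. In the example below, the agent names, the direct trust values, and the rule that trust along a path is the product of its links (so that trust attenuates with each hop) are all assumptions for illustration; the frameworks cited above do not prescribe this rule.

```python
def derived_trust(direct: dict[str, dict[str, float]],
                  source: str, target: str, max_hops: int = 3) -> float:
    """Strongest trust path from source to target, up to max_hops links.

    direct[a][b] is a's direct trust in b, in [0, 1]. Path strength is
    the product of link values (an assumed attenuation rule).
    Returns 0.0 when no path of the allowed length exists.
    """
    best = 0.0
    # Each stack entry: (current agent, strength so far, agents on the path).
    stack = [(source, 1.0, frozenset({source}))]
    while stack:
        node, strength, visited = stack.pop()
        for neighbor, weight in direct.get(node, {}).items():
            if neighbor in visited:
                continue  # avoid cycles
            path_strength = strength * weight
            if neighbor == target:
                best = max(best, path_strength)
            elif len(visited) < max_hops:
                stack.append((neighbor, path_strength, visited | {neighbor}))
    return best


# Hypothetical network: an operator trusts their own team's AI, which in
# turn relies on a partner team's AI that the operator has never used.
direct = {
    "operator": {"team_ai": 0.9},
    "team_ai": {"partner_ai": 0.8},
    "partner_ai": {},
}

print(derived_trust(direct, "operator", "team_ai"))
print(derived_trust(direct, "operator", "partner_ai"))
print(derived_trust(direct, "operator", "partner_ai", max_hops=1))
```

In this sketch the operator's derived trust in the partner AI exists only through the intermediate agent and is weaker than either direct link, which illustrates why reputation and network position may need to be modeled explicitly rather than inferred from pairwise measurements.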

SUMMARY

The development of increasingly capable AI-enabled teammates, and the flattening of organizational structures from hierarchies to MDO, mosaic-like structures, suggest that a reframing of trust is needed to advance our understanding, design, and implementation of human-AI teams. Although a good deal of research has focused on promoting human reliance on automation by calibrating trust, this approach does not address the relational aspects of teaming and system-level outcomes, such as cooperation. The proposed research objectives outline a path forward for understanding how organizational and social factors surrounding AI systems inform the interdependent process of trust in teams. These objectives go beyond the pervasive focus on calibrating trust solely for appropriate reliance and compliance.