2

Why Ontologies Matter

Why worry about behavioral ontologies, when scientists and those who apply scientific knowledge may have many other pressing concerns? To understand why this seemingly arcane idea is so important, it is helpful to consider what problems arise when ontologies are not in place. For example, imagine a mental health professional who treats adolescents in a practice where productivity demands are high. This professional knows there is a wealth of research related to their work but struggles to identify answers to specific questions that arise in the course of their practice. With very limited time to keep up with journals that document the latest research findings, health care providers just want to be able to determine what evidence-based treatment approaches are new and relevant, which ones could help their patients, and how their patients might respond to these approaches. Seeking answers in the literature, they usually encounter a bewildering array of ideas, measures, and treatments.

Researchers have developed many terms and models for studying mental health disorders, as well as possible treatments. But that evidence about research developments exists in thousands of research papers published every month, which may be classified in varying ways and venues, using a wide array of possible search terms. A review of 435 randomized clinical trials for youth mental health interventions, for instance, noted that nearly 60 percent of studies (i.e., 258 of the 435) did not use a diagnosis to describe the participants (Chorpita et al., 2011). Instead, many used cutoffs on scales or other criteria to define the study samples (e.g., elevated depression scores, juvenile justice involvement). Even among the minority of trials that reported diagnoses to define their study samples, multiple systems were used, including three different editions of the Diagnostic and Statistical Manual of Mental Disorders (DSM) as well as the International Classification of Diseases (ICD) 10. Other trials reported diagnosis with no specification of the diagnostic classification system used.

If one wishes to retrieve and consider even a nearly complete list of all clinical trials relevant to an adolescent with a diagnosed DSM-5 major depressive disorder, for instance, the task is nearly impossible without a strategy to link the inconsistent definitions of depression. Detecting patterns across the 65 depression-relevant clinical trials (e.g., “effective treatments used with younger depressed youth tend to have these features . . .”) would similarly require a shared conceptualization of the variables along which inferences are to be drawn (i.e., how treatment operations were defined, how age was defined) to allow some statistical aggregation. Thus, it is difficult for anyone to draw on an established and evolving evidence base relevant to this particular domain. Although this example highlights the predicament of a busy mental health professional, researchers and others seeking literature relevant to many kinds of questions in many domains routinely face similar dilemmas.
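The linking strategy described above can be pictured with a small sketch. Everything in it is invented for illustration: the trial identifiers, the sample descriptions, and the mapping to a shared concept are hypothetical and do not come from the review cited in the text. The sketch only shows how an explicit mapping lets trials that define their samples differently be retrieved together, and how trials with unspecified definitions remain visible as unmapped.

```python
# Toy illustration only: the mapping and the trial records below are invented.
from collections import defaultdict

# Hypothetical mapping from a reported sample definition to a shared concept.
SAMPLE_DEFINITION_TO_CONCEPT = {
    "DSM-IV major depressive disorder": "depression",
    "DSM-5 major depressive disorder": "depression",
    "ICD-10 depressive episode": "depression",
    "elevated depression scale score": "depression",
    "juvenile justice involvement": "justice involvement",
}

# Hypothetical trial records: (trial id, sample definition as reported).
TRIALS = [
    ("trial-A", "DSM-IV major depressive disorder"),
    ("trial-B", "elevated depression scale score"),
    ("trial-C", "juvenile justice involvement"),
    ("trial-D", "ICD-10 depressive episode"),
    ("trial-E", "diagnosis, system not specified"),
]


def index_trials_by_concept(trials, mapping):
    """Group trial ids under the shared concept their sample definition maps to."""
    index = defaultdict(list)
    for trial_id, definition in trials:
        # Definitions with no entry in the mapping are kept, but flagged.
        concept = mapping.get(definition, "unmapped")
        index[concept].append(trial_id)
    return dict(index)


index = index_trials_by_concept(TRIALS, SAMPLE_DEFINITION_TO_CONCEPT)
print(index["depression"])  # trials retrievable under one shared concept
print(index["unmapped"])    # trials whose definitions could not be linked
```

Without the mapping, a search on any single definition would return at most one of the three depression-relevant toy trials.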

Another sort of ontological problem can be found in the large and rapidly growing literature on the profound ways that disparities affecting population groups defined largely by race and ethnicity influence human health and development throughout people’s life spans (see, e.g., Institute of Medicine, 2003, 2012; NASEM, 2019a, 2021). Researchers and members of the general public who are interested in these issues must rely on racial categories used in the collection of data about specified groups.1 Yet the accuracy of the available data depends on the validity of the way those groups have been labelled. Most early scientific efforts to classify and understand groups of human beings in this way are now recognized as both misguided and harmful, and the definitions have evolved continuously over time (National Research Council, 2004): see Box 2-1. Many recent studies of racial and ethnic groups are based on the understanding that these are evolving, socially constructed categories—not biological ones—and so the earlier data must be interpreted carefully (see, e.g., Hunley et al., 2016; National Research Council, 2004). On a purely practical level, researchers studying trends that affect population groups need to account for changes over time in the definitions of who was in which group.

There are no easy answers for challenges such as these, but they partly reflect limitations in the way ontologies—basic tools for communicating clearly about concepts and constructs, and the way they are classified—are used, or not used, in the behavioral sciences (see Box 2-2).

___________________

1 The committee gathered valuable insights about these issues from a session at their first workshop; see https://www.nationalacademies.org/event/06-29-2021/understanding-ontologies-in-context-workshop-2

In this chapter we look first at the most visible challenges: the reasons ontologies can make a difference in people’s lives, including synthesizing and applying the knowledge produced by scientists. We then turn to the scientific challenges that underlie these problems, looking first at difficulties with generalizing about scientific findings and then at challenges with building and structuring knowledge.

CHALLENGES WITH SYNTHESIZING AND APPLYING KNOWLEDGE

Behavioral scientists produce a vast amount of research every year, but the publication of the results is only an initial step in the process by which scientific knowledge can bring benefits to society. For new knowledge to benefit patients; clinicians; investigators; and professionals in education, business, law, and other domains, it has to be tested and reproduced, and the findings have to be managed, synthesized, disseminated, and applied. Without ontologies, all of these functions are more difficult.

Conscientious scholars and clinical practitioners are expected to keep abreast of literature in their field. But is this expectation realistic? Some 23,000 scientific journals collectively publish more than 2 million peer-reviewed scientific articles each year (Elsevier, 2020; National Science Board, National Science Foundation, 2021). In the United States alone, an estimated 422,000 papers were published in 2018. No human being could sift through the volume of literature relevant to even a single domain to retrieve the information they need to stay current or identify nuances and trends in research findings.

An estimate of the rate of science growth since the mid-1600s, based on data from the Web of Science and the number of cited references identified per year, illustrates the scope of the challenge: see Figure 2-1. Acceleration has been most rapid over the last 70 years, with the greatest inflection within the most recent decade; there are no signs the trend will decelerate (Bornmann and Mutz, 2014).

Most academics and health care providers can devote only a limited number of hours to reading the scientific literature in their fields. Academics must teach, participate in university service activities, advise students, and engage in community service. Clinical practitioners spend most of their time seeing patients, writing notes, dealing with insurance issues, and managing their practices. That leaves them little time to survey, let alone consume, the literally thousands of potentially relevant articles that enter the literature each year.2 Without ontologies to frame the scientific discourse, it is practically impossible for stakeholders to reliably identify the most important developments in their fields.

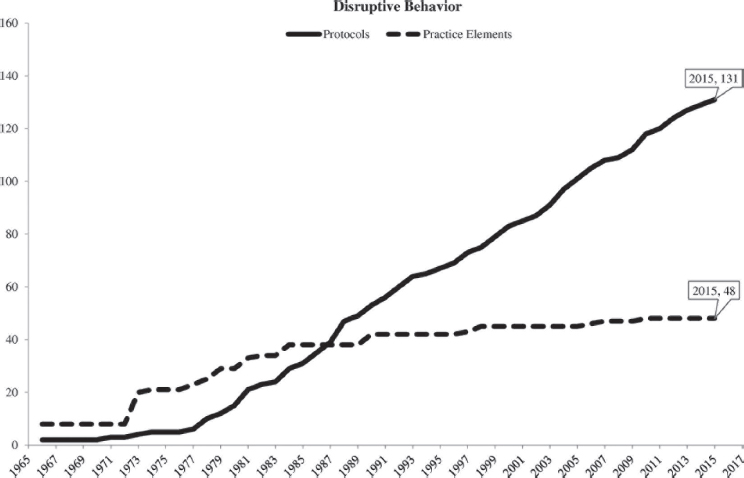

One illustration of challenges facing the behavioral sciences was provided by a recent historical review of 50 years of randomized clinical trials on the subject of youth mental health (Okamura et al., 2020). The authors found that the way the studies were classified—their implicit ontological structures—did not offer clear guidance for enhancing the delivery of care.

Treatment manuals that specify the nature of a clinical intervention have long been used as the written codifications of psychotherapy procedures

___________________

2 See Kim et al. (2020) for a discussion of searching academic literature in the internet age.

SOURCE: Bornmann and Mutz (2014, p. 2218).

to be used. Such manuals have served as a tool for defining different approaches being studied in clinical research trials.3 In their review, Okamura and colleagues (2020) compared this dominant conceptualization of treatments based on manuals to a less widely used way of classifying intervention approaches, in which it is the component practices defined within these manuals, known as “practice elements,” that are classified (Chorpita et al., 2005). Practice elements include specific procedures typically enumerated in a manual, such as guiding caregivers in the use of a reward program, teaching a problem-solving technique to a youth, or promoting specific communication skills among family members. Such procedures are typically used across many instances of evidence-based programs for youth and family mental health (e.g., Chorpita and Daleiden, 2009). The relationship between practice elements and manuals is basically one of component membership. Although they include other material, manuals are collections

___________________

3 Building on earlier policy recommendations from the American Psychological Association Task Force on Promotion and Dissemination of Psychological Procedures, for example, Chambless et al. (1998, p. 6) argued that “ . . . brand names [labels] are not the critical identifiers [of an intervention]. The manuals are.”

SOURCE: Okamura et al. (2020, p. 75).

of practice elements, in the same way that a playlist represents a collection of songs.

Figure 2-2 shows the proliferation of treatment manuals (referred to as “protocols” in the figure), especially over the last 25 years (Chorpita and Daleiden, 2014, p. 329). By 2015, 131 protocols targeting disruptive behavior in youth had been tested in 149 randomized clinical trials (Okamura et al., 2020). In contrast, a smaller number of practice elements, only 48, appeared in these manuals, with a slope that is clearly shallower over the latter half of the period.4

As the example illustrates, many new manuals have been developed and tested, but these manuals largely appear to be new combinations of existing practice elements, rather than conceptually distinctive approaches to intervention. Returning to the playlist metaphor: although the field is producing many new playlists, they appear mostly to be combinations of the same set of songs. In other words, despite decades of research regarding psychosocial treatments for disruptive behavior, the actual protocols used in real-world practice are rarely innovative, and there is little truly systematic development and refinement across protocols and approaches. Moreover, detailed reviews of actual clinical care have shown that the component procedures of these treatments are typically delivered irregularly, with systematic omissions, and at low levels of intensity and fidelity (Kazdin, 2018; Garland et al., 2010). It has been difficult to perceive this lack of progress because of the ways the field describes and classifies the elements being studied. A lack of ontological clarity makes it difficult to perceive significant trends and highlight valuable developing knowledge about effective combinations of practice elements.

___________________

4 The same data source used by Okamura et al. (2020), PWEBS [see https://www.practicewise.com/#services], shows that as of November 2021, there are 304 manuals using 58 of the practice elements specified in the analysis by Okamura and colleagues, which, if superimposed on their figure, shows an increasing separation between the rate of innovation of clinical procedures and the full protocols tested in trials.

The significant point is not that one conceptualization of the interventions is superior to the other. There clearly was and is a need for both views: specification of entire treatments as collections of procedures with defined length, pace, order, and other features, on one hand, and specification of the subordinate common elements of the treatments studied on the other hand. Although both conceptualizations are important, a more granular “elements” approach allows synthesis of a wealth of interventions that otherwise could be difficult to aggregate, and thereby can lend itself to particular use cases. What is important is that the way that entities are labeled and classified has implications for synthesis and retrievability.
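The component-membership relationship between manuals and practice elements can be sketched as a simple data structure. The manual names and their contents below are invented toy data, not the protocols analyzed by Okamura and colleagues; the three element labels are shortened from the examples given in the text. The sketch shows why counting at the level of elements gives a different picture than counting manuals, and how an element-level view supports retrieval across manuals.

```python
# Toy data: manual names and their contents are invented for illustration.
MANUALS = {
    "manual_1": {"reward program", "problem solving", "communication skills"},
    "manual_2": {"reward program", "problem solving"},
    "manual_3": {"communication skills", "reward program"},
    "manual_4": {"problem solving", "communication skills", "reward program"},
}


def distinct_elements(manuals):
    """Return the set of practice elements that appear in any manual."""
    return set().union(*manuals.values())


def manuals_using(manuals, element):
    """Return the sorted names of manuals that include a given practice element."""
    return sorted(name for name, elements in manuals.items() if element in elements)


def identical_manuals(manuals):
    """Return groups of manual names whose element sets are exactly the same."""
    groups = {}
    for name, elements in manuals.items():
        groups.setdefault(frozenset(elements), []).append(name)
    return [sorted(names) for names in groups.values() if len(names) > 1]


print(len(MANUALS), "manuals,", len(distinct_elements(MANUALS)), "distinct elements")
print(manuals_using(MANUALS, "communication skills"))
print(identical_manuals(MANUALS))
```

In this toy case, four "playlists" draw on only three "songs," and two of the manuals turn out to be the same combination under different names, a pattern that is invisible when manuals are the only unit of classification.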

This example also illustrates two key challenges that are relevant to most, if not all, areas of behavioral science. The first is the potential for inefficiency of ongoing research. For example, without an ontology that explicitly specifies relationships among different entities within a domain, researchers run the risk of engaging in empirically or conceptually siloed retesting of the same research questions. In this example, it would mean repeatedly testing whether similar collections of roughly equivalent clinical procedures performed better than a control group in a randomized trial. Although it is possible that the past 20 years shown in Figure 2-2 underrepresent some types of innovation, such as how the same clinical procedures were potentially rearranged or re-sequenced, the ability to visualize that innovation depends on having explicit specification of practice elements or procedures and of the ways they are arranged for application; the latter is especially underdeveloped or absent in most areas of behavioral science (Chorpita and Daleiden, 2014, p. 330). This challenge is not unique to intervention research or to mental health; it arises from a fundamental challenge with synthesizing an evidence base using a shared, explicit specification of the concepts underlying research in a specified area (i.e., an ontology).

The second challenge is a manifestation of the more general and ubiquitous “wealth of information” problem: as a body of knowledge grows, its retrievability and actionability decrease (Simon, 1971). Research in the behavioral sciences is producing a body of knowledge so extensive that it is increasingly difficult to apply efficiently and effectively. As the example above illustrates, it is unlikely that without an ontology, the more than 300 treatment programs tested in clinicaltrials.gov could be organized effectively to guide service delivery for a mental health care provider. Roughly 50 years ago Herbert Simon (1971) provided an aspirational description of science as “the process of replacing unordered masses of brute fact with tidy statements of orderly relations from which these facts can be inferred” (p. 45). However, identification of patterns and distillation of facts both depend on the ability to aggregate, filter, and otherwise manage the evidence, which is currently limited in the behavioral sciences. In short, ontologies make it much easier to filter, aggregate, synthesize, and retrieve very large knowledge bases that would otherwise be too complex to be fully useable, and to generalize that knowledge.

CHALLENGES WITH GENERALIZING RESEARCH FINDINGS

Questions about the robustness of research in the social and behavioral sciences have attracted significant attention over the last two decades. This issue came to public attention in 2013 when questions about the replicability of a wide range of papers were raised in both the academic and popular literatures. In response, an expert committee from the National Science Foundation (NSF) considered the problem of robust science in the behavioral, social, and economic sciences (Bollen et al., 2015).5 The report drew attention to problems with the orderly development of knowledge, including the indiscriminate use of methods and measures to evaluate constructs without careful documentation of the relation between the measures and the constructs to be measured. The report identified generalizability as a serious problem in the behavioral sciences. Ideally, researchers hope to design studies that yield results that are equivalently applicable across different sets of study participants and can be generalized to apply much more broadly. Problems with generalizability can arise from a number of factors, several of which are relevant to a potential role for ontologies. One difficulty is in generalizing results obtained using a particular method or measure to other contexts or populations where different methods or measures of the same constructs were used. As we discuss below, the challenge of identifying comparable measures for phenomena is in part an ontological one.

Another challenge is that a causal or predictive association may be found in one population or set of circumstances (e.g., predominantly White undergraduate students at a large state university) but not in others (e.g., Black adults in Chicago), even if the same measures are used. In such cases it may be unclear what conclusion to draw. Perhaps there was some sort of critical flaw or limitation in the original study. Alternatively, perhaps the original study was valid for the study population but there are additional, unrecognized moderating or contextual variables that affect other populations differently. Another possibility is that although the results from the original study were replicable under the same laboratory conditions, they were formulated in terms of treatments and measures rarely present in real-world settings. Ontologies that define the entities that are important in a field of study will support the development of measures and research designs that can be replicated and generalized.

___________________

5 A recent report addresses issues of reproducibility and replicability in science more generally (NASEM, 2019b).

Ultimately, problems with generalizability create difficulties for consumers of research—whether other researchers, health care providers, or other stakeholders. Ontologies can help by providing a framework for accurately describing and comparing conclusions, describing how measures are related to conclusions, identifying moderating variables, and distinguishing domains or regimes in which relationships hold from those in which they do not.

CHALLENGES WITH BUILDING AND STRUCTURING KNOWLEDGE

It is a basic function of science to label and classify the phenomena that are observed and organize them for study in a particular domain. Scientific classifications are the basis for the organization of knowledge through the formation of hypotheses, the design of experiments, the modeling and interpretation of data, and the integration of findings. Clear labeling is the basis for systems of classification, such as ontologies, but even in situations in which there is no sanctioned system, scientists still rely on classification (grouping of phenomena). When this classification is approached formally, the resulting classifications may be ontologies (see Chapter 3). Often, however, it proceeds more automatically and implicitly, as when so-called folk categories from everyday life (informal understandings of phenomena, such as cognition, emotion, perception) play an unrecognized role in behavioral science.

As a relatively young group of disciplines, the behavioral sciences are still developing and refining many of the sets of concepts and classifications on which they are based (and by which each organizes the knowledge that is created), as well as the constructs useful for scientific study. And the challenge of discerning whether two constructs actually describe the same entity or phenomenon has been an issue in the behavioral sciences for more than a century (Larsen and Bong, 2016). In general, the constructs and classifications that have been established in the behavioral sciences have not been systematized (see, e.g., Davis et al., 2015; Barrett, 2009; Lilienfeld et al., 2015; Lawson and Robbins, 2021). Scientists working within even a narrow field may use different constructs and classifications for the same phenomena depending on their interests and other factors. They may be using the same word (label) for a phenomenon or a class and not recognize that they are actually referring to different phenomena or classes. Indeed, the charge given to this committee reflects an understanding that a broad range of stakeholders are interested in the continuing development and refinement of how behavioral science knowledge is organized and disseminated. We briefly review some of the challenges associated with classification and with defining and measuring constructs that point to a need for greater reliance on ontologies.

Challenges with Classification

Definitions of many of the concepts, constructs, and classifications used in the behavioral sciences may not be widely shared. As already noted, scientists identify classes by focusing on certain features of equivalence (features that the entities or instances are thought to share), but these groupings do not necessarily correspond to the words that label them in principled and consistent ways. As a result, scientists and science consumers may not easily recognize the extent to which two entities or even two constructs are similar or different. Even when a classification system is accepted within a domain of study, it may be inadequate or unsuitable for its intended purpose.

In addition, classifications inevitably become out of date as scientific knowledge evolves in response to new findings and new ideas. For example, Linnaeus, the legendary taxonomist, classified rabies as a mental illness because end-stage rabies results in confusion, agitation, and hallucinations (Linné and Schröder, 1763, cited in Chute, 2021). Linnaeus created the class before the infectious origins of rabies were understood. A more contemporary illustration is the 11th edition of the International Classification of Diseases (ICD), which significantly modified its classification of mental health disorders to reflect current understanding of pathology, setting aside many terms that are rooted in Freudian psychoanalysis (Chute, 2021): see Box 2-3.

A classification that has not been developed systematically might not be useful and may even be counterproductive. For example, in the mental health field, diagnosis often involves placing individuals into discrete classes. Yet, evidence challenges the reliability of many psychological and psychiatric diagnoses (Regier et al., 2013). Furthermore, mental health diagnoses and the symptoms that underlie them are highly intercorrelated.

For example, mental disorientation may be associated with several different diagnoses. And disorientation may be highly correlated with other symptoms, such as flat affect, hallucinations, and social distrust. These findings challenge the clinical practice of placing individuals into a primary diagnosis class (Lahey, 2021). The mechanisms that cause a person to display particular signs or symptoms of conduct may vary across individuals.

There are different ways to create classification systems. The most common approach uses collaborative consensus building to define groupings in the observed domain. Empirical statistical methodologies have the potential to support this effort by identifying data that are more or less similar. The statistical approach builds on the estimated correlations between observations and constructs and can sharpen definitions of classes and improve the reliability of an ontology. By identifying correlations among diagnoses and symptoms, for example, it may be possible to replace binary mental health diagnoses (e.g., normal or abnormal) with estimates of where individuals fall along multiple continua (Lahey, 2021; Lahey et al., 2017). This point is discussed further in Chapter 5.

The Jingle Problem

One common problem in the behavioral sciences occurs when researchers assume that two groups of entities that can be distinguished by their features (i.e., they should be considered as two different constructs) are the same because they are named by the same word. This is called the jingle problem or fallacy (see, e.g., Kelley, 1927; Gonzalez et al., 2021).6 An example of the jingle problem is evident in the scientific use of the word “control” and its associated constructs. The same word can refer to the subjective experience of being in control, a feeling of agency or effort, and can also refer to the function of systems or mechanisms that guide behavior (Helmholtz, 1925; Bargh, 1994). Yet there is clear and consistent evidence that the two need not correspond (e.g., Barrett et al., 2004). Similarly, scientists who study emotion often use the same words to refer to a range of very different constructs (see, e.g., Barrett et al., 2019, Fig. 1).

The Jangle Problem

A related problem occurs when scientists assume that two similar phenomena are different because they go by different names. This is called the jangle problem or, alternatively, the “toothbrush problem” (so named because behavioral scientists often avoid using others’ theories just as people are repulsed by the thought of using others’ toothbrushes) (Mischel, 2008). Examples abound in the behavioral sciences. “Coping,” “emotion regulation,” “self-regulation,” and “self-control,” or even “cognitive control” may all refer to similar phenomena that share many features. It is possible, of course, that differences in language may indicate subtle but critical differences in the features that scientists focus on when studying and conceptualizing a classification. Nevertheless, it is indisputable that the jangle or toothbrush problem has interfered with the accumulation of knowledge in the behavioral sciences. The proliferation of measures and theories often leads to more confusion than clarification.

Challenges with Defining Constructs

The jingle and jangle problems highlight the importance of clear definitions of constructs. When the same constructs are described by different names or are measured using different instruments, or when different definitions are adopted for the same construct, it hinders scientific communication and clinical application, as well as dissemination.

Consider a relatively straightforward example: the diagnosis of hypertension. Professional organizations have different thresholds for the diagnosis of this chronic health condition. According to the American Heart Association and the American College of Cardiology, a 60-year-old man whose blood pressure is 135/87 mmHg has stage one hypertension and is very near the threshold for the most severe level, stage two hypertension (Goetsch, 2021). However, according to the European Society of Hypertension, his blood pressure is high normal (Williams et al., 2018). Moreover, these thresholds have also changed over time. Such differences have consequences. When the guideline from the American College of Cardiology and the American Heart Association lowered the threshold for high blood pressure to >130/80 mmHg, the number of people eligible for treatment increased by 7.5 million, and 13.9 million people became candidates for treatment intensification (Khera et al., 2018).

___________________

6 The terms jingle and jangle are widely accepted in the context of the behavioral sciences but other terms for these problems are used in other scientific disciplines.
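The hypertension example can be made concrete with a short sketch that applies two sets of category cut points to the same reading. The cut points below are not stated in this chapter; they are assumptions based on the published 2017 ACC/AHA and 2018 ESC/ESH blood pressure categories and should be checked against those guidelines before any reuse. The sketch simplifies both schemes (for instance, it ignores hypertensive crisis and isolated systolic hypertension), and the function names are hypothetical.

```python
def classify_acc_aha_2017(systolic, diastolic):
    """Label a reading using assumed 2017 ACC/AHA category cut points (mmHg)."""
    if systolic >= 140 or diastolic >= 90:
        return "stage 2 hypertension"
    if systolic >= 130 or diastolic >= 80:
        return "stage 1 hypertension"
    if systolic >= 120:
        return "elevated"
    return "normal"


def classify_esc_esh_2018(systolic, diastolic):
    """Label a reading using assumed 2018 ESC/ESH category cut points (mmHg)."""
    if systolic >= 180 or diastolic >= 110:
        return "grade 3 hypertension"
    if systolic >= 160 or diastolic >= 100:
        return "grade 2 hypertension"
    if systolic >= 140 or diastolic >= 90:
        return "grade 1 hypertension"
    if systolic >= 130 or diastolic >= 85:
        return "high normal"
    if systolic >= 120 or diastolic >= 80:
        return "normal"
    return "optimal"


# The reading used in the text: 135/87 mmHg.
reading = (135, 87)
print("ACC/AHA:", classify_acc_aha_2017(*reading))
print("ESC/ESH:", classify_esc_esh_2018(*reading))
```

The same measurement receives a disease label under one scheme and a non-disease label under the other, which is the definitional gap the text describes.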

The situation with hypertension is actually a simple case. Hypertension research uses clearly delineated and widely shared methods and measurement requirements. Blood pressure has a common label, meaning, and causal relationship to other medical conditions. The literature contains several thousand studies that use these common labels, measures, and meanings to empirically validate or refute the hypothesized relationships.

In contrast, the classification of mental health problems presents problems of definition as well as other challenges. Some of these challenges arise from the fact that mental disorders, like many health conditions, are not binary. In defining high blood pressure, epidemiologists and cardiologists identify cut points on the basis of epidemiological studies and expert judgment. Although errors in blood pressure assessment do occur (Padwal et al., 2019), measurement of mental disorders such as depression is inherently less precise and reliable. In addition, there is no clear agreement among investigators and clinicians regarding when to initiate treatment for depression (or other mood disorders). Some experts believe that treatment should be reserved for those who meet diagnostic criteria for a “major” depressive disorder, which frequently is defined as having at least five of the symptoms listed on the Patient Health Questionnaire-9 (Kroenke et al., 2001). But other experts recommend treatment for people with “subsyndromal depression,” which is defined as having as few as two symptoms measured by the same instrument. Recent evidence suggests that the point prevalence of subsyndromal depression is about 2.5 times the rate of major depression (Oh et al., 2020) and that most adults would be eligible for a diagnosis of subsyndromal depression at some point in their lives (Rapaport and Judd, 1998).

Challenges with Measuring Constructs

In almost all areas of science, a distinction is drawn between a phenomenon and the measures used to observe and assess its features. That is, scientists recognize that any measure may be a less than perfectly precise representation of what is being measured. In some areas of science, there are recognized ways of measuring the same phenomena that consistently yield the same results. Obtaining consistent results from a number of different measures is often taken to be strong evidence both that the phenomenon in question is real or robust and that the measures themselves are reliable and valid. Yet the availability of multiple measures has not always enhanced progress in the behavioral sciences.

For example, the jingle problem often appears when measures that go by the same name (i.e., are supposed to be measures of the same construct) do not correlate. In the domain of emotion, self-reports of experience; observations of facial, body, and vocal behaviors; and physiological measurements for any given emotional construct (anger, fear, happiness, etc.) correlate weakly with one another, or sometimes not at all. There are many possible reasons for a lack of correlation among measures: one or more of the measures may be defective in some way; the different measures may be measuring features that differ in some subtle way; the entity (i.e., instance of emotion) being measured may be heterogeneous in unrecognized ways; or the constructs in question may have a relational, contextual meaning that varies across their instances (see, e.g., Bollen and Lennox, 1991; Barrett, 2011). Whatever its cause, a lack of correlation highlights the importance of careful attention to measures and their assumed relationships to the constructs they are used to assess; lack of agreement, although frustrating, can be a source of information about the nature of the phenomena being studied.

Another example of the jingle problem in measurement can be seen in the assessment of health-related quality of life, which has become commonplace in clinical research in medicine and clinical psychology. Many measures are designed for specific clinical populations but use very similar items (individual questions, for example) (Kaplan and Hays, 2022). Common examples include the Arthritis Impact Measurement Scale (Meenan et al., 1992) and the Minnesota Living with Heart Failure Questionnaire (Rector and Cohn, 1992). Despite the availability of these well-validated measures, investigators often make slight modifications and create a different measure or adaptation that is offered under a new name. Targeted measures are designed to be relevant to specific groups (e.g., people with diabetes, people with hypertension, seniors, women). There are now so many measures that systematic reviews of instruments for most major diseases have been developed, including those for heart disease (Garin et al., 2009), diabetes (El Achlab et al., 2008), and breast cancer (Montazeri, 2008). In the case of vision-related quality of life, there are so many measures that there are now systematic reviews of the systematic reviews (Assi et al., 2021). One example of progress toward harmonization of measures is provided by the Patient-Reported Outcomes Measurement Information System (PROMIS®).

Over the last half century there have been numerous efforts to develop measures that represent outcomes from the patient perspective, but these measures do not reflect a common conceptualization of health and health outcome. Most widely used among these methods are questionnaires that purport to measure health-related quality of life (HRQoL). However, because there has not been a formal ontology to specify the concepts of interest in this domain, survey instruments designed for this purpose ask different questions and address different concepts. The assumption that the constructs measured are interchangeable has resulted in confusion and disagreement in the field.

Recognizing the importance of standardization for clinical research, the National Institutes of Health supported an effort to develop harmonized measures of health outcomes. The project, which became known as PROMIS®, was launched in 2004. PROMIS® used item response theory (IRT), a method of measuring traits, attributes, or other constructs of interest in the behavioral sciences. In brief, this method is a way of drawing inferences about the construct being measured from the relationship between an individual’s performance on a single question (or test item) and their performance on the whole set, which makes it possible to obtain the most information using the fewest possible questions. Thus, IRT can identify the commonality among different measurement approaches. Through its use of IRT, PROMIS® has the potential to link outcomes across studies so that evaluations can be compared directly to one another, and has been the basis for reconciling ontologies: the PROMIS® framework has been mapped to the WHO’s International Classification of Functioning, Disability, and Health (Cella and Hays, 2021).
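The idea that IRT obtains the most information from the fewest questions can be made concrete with a minimal sketch. It assumes a two-parameter logistic (2PL) model, one common IRT form chosen here only for simplicity; the text does not say which model PROMIS® uses. The item names and parameter values are hypothetical and are not drawn from any PROMIS® item bank.

```python
import math

def prob_endorse(theta, a, b):
    """2PL model: probability that a person at trait level theta endorses an item.

    a is the item's discrimination and b is its difficulty (location).
    """
    return 1.0 / (1.0 + math.exp(-a * (theta - b)))

def item_information(theta, a, b):
    """Fisher information the item provides about theta under the 2PL model."""
    p = prob_endorse(theta, a, b)
    return a * a * p * (1.0 - p)

# Hypothetical item bank: (name, discrimination a, difficulty b).
ITEM_BANK = [
    ("item_easy", 1.2, -1.5),
    ("item_medium", 1.8, 0.0),
    ("item_hard", 1.0, 1.5),
]

def most_informative_item(theta, bank=ITEM_BANK):
    """Return the name of the item that is most informative at trait level theta."""
    best = max(bank, key=lambda item: item_information(theta, item[1], item[2]))
    return best[0]
```

Under these assumed parameters, a respondent whose estimated trait level is near zero would be asked the medium item first, whereas a respondent with a low estimate would be asked the easy item. Choosing each question to be informative at the current estimate is one way an assessment can reach a precise score with few items.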

In contrast with the jingle problem, the jangle problem arises when measures that go by different names (i.e., are supposed to be measures of different constructs) correlate so highly (and may contain overlapping features) that they appear to be measuring the same construct. For example, separate self-report measures of anxiety and of depression are sometimes as highly correlated with one another as their scale reliabilities will allow, meaning that they contain no unique variance (Feldman, 1993). A similar situation occurs with clinician ratings of anxiety and depression (e.g., the Hamilton Anxiety and Depression scales; see Mountjoy and Roth, 1982).
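The phrase "as highly correlated with one another as their scale reliabilities will allow" can be illustrated with the classical correction for attenuation. The sketch below assumes classical test theory, in which the observed correlation between two scales cannot exceed the square root of the product of their reliabilities. The numeric values in the usage note are invented for illustration and are not taken from Feldman (1993).

```python
import math

def max_observable_correlation(rel_x, rel_y):
    """Upper bound on the observed correlation between two scales,
    given their reliabilities (classical test theory)."""
    return math.sqrt(rel_x * rel_y)

def disattenuated_correlation(r_xy, rel_x, rel_y):
    """Estimated correlation between the underlying constructs after
    correcting the observed correlation r_xy for measurement error."""
    return r_xy / math.sqrt(rel_x * rel_y)
```

For instance, with hypothetical reliabilities of 0.81 for an anxiety scale and 0.64 for a depression scale, the largest correlation the two scales could show is 0.72. An observed correlation of 0.72 would therefore correspond to a corrected correlation of 1.0, meaning that the scales share all of their reliable variance.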

There are more complex examples, in which a number of different but related constructs, each with its own measures, are used by different researchers, and the statistical correspondence among these measures is less than robust and varies from study to study. For example, a number of different tasks are used to measure “working memory,” “executive function,” and “cognitive control” (Lenartowicz et al., 2010). These measures are variably related across studies, leaving it unclear whether the tasks in question are actually measuring different classes of phenomena with variable amounts of precision or error, or whether they are measuring different features of the same class. That is, there may be a number of different features of cognitive control that are loosely associated with each other but are usefully distinguished and therefore should be measured in different ways. Constructs of this kind have recently been referred to as “sibling constructs” (Lawson and Robins, 2021). An ontology that explicitly identifies agreed-upon definitions for these constructs would support clear conclusions drawn from disparate research into them and their features.

SUMMARY

This chapter describes a number of problems that arise, at least in part, because of the absence of a shared ontology, that is, because the knowledge structures used (even if only tacitly) lack the clarity and breadth of application that would allow them to productively influence ongoing research and clinical application. We emphasize that increased attention to the development and use of ontologies would be entirely consistent with the long-standing reliance of scientists in the behavioral domain on constructs, construct validity, and related ideas. Ontologies are not an alternative or a challenge to psychometrics, but instead complement and support psychometric analysis.

Scientists in every field develop operational definitions for the terms they use. However, doing this on a case-by-case basis, without necessarily establishing shared understanding and perhaps without attention to the relationships between the defined terms and others, has left many domains in the behavioral sciences with challenges those definitions cannot address. The development of ontologies need not constrain the work scientists do or compel them to shoe-horn their ideas into dichotomies or other relationships that are not logical. Instead, it can support scientists in addressing the use of different terms or descriptions for the same underlying entity or condition, the use of the same term for different entities or concepts (often without recognition that this is the case), and the use of different, poorly correlated measures for the same entity.

These problems in the field create difficulties in communication among scientists, difficulties in comparison of research results, and difficulties for the application and dissemination of research results in clinical and other settings. They interfere with the synthesis of knowledge across domains in the behavioral sciences. All of these difficulties reduce the impact of behavioral sciences research. Ultimately, the return on investments in behavioral science research is limited when knowledge does not accumulate; when largely similar questions are retested as if they were different; and when findings cannot be easily synthesized or retrieved to guide research, training, policy, and service delivery.

Carefully developed ontologies are a key tool for addressing many of the challenges that constrain the behavioral sciences. We do not claim that the development of improved and more widely shared ontologies will by itself fully solve these problems, but as we describe in subsequent chapters, we are confident that thoughtfully developed ontologies in the behavioral sciences will contribute substantially to their resolution. We acknowledge that there are important issues about how uniform (as opposed to pluralistic) behavioral science ontologies should be and that there is a wide range of perspectives on issues that ontology developers confront in making decisions about how to define concepts and describe their relationships, and we consider many of these in the following chapters. However, our review suggests that thoughtful ontology development and use in the behavioral sciences has the potential to accelerate the creation, synthesis, and dissemination of behavioral science knowledge.

REFERENCES

Assi, L., Chamseddine, F., Ibrahim, P., Sabbagh, H., Rosman, L., Congdon, N., Evans, J., Ramke, J., Kuper, H., Burton, M.J., Ehrlich, J.R., and Swenor, B.K. (2021). A global assessment of eye health and quality of life: A systematic review of systematic reviews. JAMA Ophthalmology, 139(5), 526–541. https://doi.org/10.1001/jamaophthalmol.2021.0146

Bargh, J.A. (1994). The four horsemen of automaticity: Awareness, intention, efficiency, and control in social cognition. In R.S. Wyer, Jr. and T.K. Srull (Eds.), Handbook of Social Cognition: Basic Processes; Applications, 1–40. Mahwah, NJ: Lawrence Erlbaum Associates, Inc.

Barrett, L.F. (2009). The future of psychology: Connecting mind to brain. Perspectives on Psychological Science, 4(4), 326–339. https://doi.org/10.1111/j.1745-6924.2009.01134.x

Barrett, L.F. (2011). Bridging token identity theory and supervenience theory through psychological construction. Psychological Inquiry, 22(2), 115–127. https://doi.org/10.1080/1047840X.2011.555216

Barrett, L.F., Adolphs, R., Marsella, S., Martinez, A.M., and Pollak, S.D. (2019). Emotional expressions reconsidered: Challenges to inferring emotion from human facial movements. Psychological Science in the Public Interest, 20(1), 1–68. https://doi.org/10.1177/1529100619832930

Barrett, L.F., Tugade, M.M., and Engle, R.W. (2004). Individual differences in working memory capacity and dual-process theories of the mind. Psychological Bulletin, 130(4), 553–573. https://doi.org/10.1037/0033-2909.130.4.553

Bollen, K., Cacioppo, J.T., Kaplan, R.M., Krasnik, J.A., Olds, J.L., and Dean, H. (2015). Social, behavioral, and economic sciences perspectives on robust and reliable science. https://www.nsf.gov/sbe/AC_Materials/SBE_Robust_and_Reliable_Research_Report.pdf

Bollen, K.A., and Lennox, R. (1991). Conventional wisdom on measurement: A structural equation perspective. Psychological Bulletin, 110, 305–314. https://doi.org/10.1037/0033-2909.110.2.305

Bornmann, L., and Mutz, R. (2015). Growth rates of modern science: A bibliometric analysis based on the number of publications and cited references. Journal of the Association for Information Science and Technology, 66, 2215–2222. https://doi.org/10.1002/asi.23329

Cella, D., and Hays, R. (2021). Ontological Issues in Patient Reported Outcomes: Conceptual Issues and Challenges Addressed by the Patient-Reported Outcomes Measurement Information System (PROMIS®). Commissioned paper prepared for the Committee on Accelerating Behavioral Science Through Ontology Development and Use. Available: https://nap.nationalacademies.org/resource/26464/Cella-and-Hays-comissioned-paper.pdf

Chambless, D.L., Sanderson, W.C., Shoham, V., Johnson, S.B., Pope, K.S., Crits-Christoph, P., Baker, M.J., Johnson, B., Woody, S.R., Sue, S., Beutler, L.E., Williams, D.A., and McCurry, S.M. (1998). An update on empirically validated therapies. The Clinical Psychologist, 49(2), 5–18. https://div12.org/sites/default/files/UpdateOnEmpiricallyValidatedTherapies.pdf

Chorpita, B.F., and Daleiden, E.L. (2009). Mapping evidence-based treatments for children and adolescents: Application of the distillation and matching model to 615 treatments from 322 randomized trials. Journal of Consulting and Clinical Psychology, 77(3), 566–579. https://doi.org/10.1037/a0014565

Chorpita, B.F., and Daleiden, E.L. (2014). Structuring the collaboration of science and service in pursuit of a shared vision. Journal of Clinical Child and Adolescent Psychology, 43(2), 323–338. https://doi.org/10.1080/15374416.2013.828297

Chorpita, B.F., Daleiden, E.L., Ebesutani, C., Young, J., Becker, K.D., Nakamura, B.J., Phillips, L., Ward, A., Lynch, R., Trent, L., Smith, R.L., Okamura, K., and Starace, N. (2011). Evidence-based treatments for children and adolescents: An updated review of indicators of efficacy and effectiveness. Clinical Psychology: Science and Practice, 18(2), 154–172. https://doi.org/10.1111/j.1468-2850.2011.01247.x

Chorpita, B.F., Daleiden, E.L., and Weisz, J.R. (2005). Identifying and selecting the common elements of evidence based interventions: A distillation and matching model. Mental Health Services Research, 7(1), 5–20. Available: https://doi.org/10.1007/s11020-005-1962-6

Chute, C. (2021). Key Issues in the Development of the ICD and Its Effects on Medicine. Commissioned paper prepared for the Committee on Accelerating Behavioral Science Through Ontology Development and Use. Available: https://nap.nationalacademies.org/resource/26464/Chute-commissioned-paper.pdf

Davis, R., Campbell, R., Hildon, Z., Hobbs, L., and Michie, S. (2015). Theories of behaviour and behaviour change across the social and behavioural sciences: A scoping review. Health Psychology Review, 9(3), 323–344. https://doi.org/10.1080/17437199.2014.941722

El Achhab, Y., Nejjari, C., Chikri, M., and Lyoussi, B. (2008). Disease-specific health-related quality of life instruments among adults diabetic: A systematic review. Diabetes Research and Clinical Practice, 80(2), 171–184. https://doi.org/10.1016/j.diabres.2007.12.020

Elsevier. (2020). Scopus Content Coverage Guide. https://www.elsevier.com/__data/assets/pdf_file/0007/69451/Scopus_ContentCoverage_Guide_WEB.pdf

Feldman, L.A. (1993). Distinguishing depression and anxiety in self-report: Evidence from confirmatory factor analysis on nonclinical and clinical samples. Journal of Consulting and Clinical Psychology, 61(4), 631–638. https://doi.org/10.1037//0022-006x.61.4.631

Garin, O., Ferrer, M., Pont, A., Rué, M., Kotzeva, A., Wiklund, I., Van Ganse, E., and Alonso, J. (2009). Disease-specific health-related quality of life questionnaires for heart failure: A systematic review with meta-analyses. Quality of Life Research, 18(1), 71–85. https://doi.org/10.1007/s11136-008-9416-4

Garland, A.F., Brookman-Frazee, L., Hurlburt, M.S., Accurso, E.C., Zoffness, R.J., Haine-Schlagel, R., and Ganger, W. (2010). Mental health care for children with disruptive behavior problems: A view inside therapists’ offices. Psychiatric Services, 61(8), 788–795. https://doi.org/10.1176/ps.2010.61.8.788

Goetsch, M.R., Tumarkin, E., Blumenthal, R.S., and Whelton, S.P. (2021). New Guidance on Blood Pressure Management in Low-Risk Adults with Stage 1 Hypertension. American College of Cardiology. https://www.acc.org/latest-in-cardiology/articles/2021/06/21/13/05/new-guidance-on-bp-management-in-low-risk-adults-with-stage-1-htn

Gonzalez, O., MacKinnon, D.P., and Muniz, F.B. (2021). Extrinsic convergent validity evidence to prevent jingle and jangle fallacies. Multivariate Behavioral Research, 56(1), 3–19. https://doi.org/10.1080/00273171.2019.1707061

Helmholtz, H. (1925). Treatise on physiological optics (3rd ed.) 3, J.P.C. Southall, Trans. Banta.

Hunley, K.L., Cabana, G.S., and Long, J.C. (2016). The apportionment of human diversity revisited. American Journal of Physical Anthropology, 160(4), 561–569. https://doi.org/10.1002/ajpa.22899

Institute of Medicine. (2003). Unequal treatment: Confronting Racial and Ethnic Disparities in Health Care. Washington, DC: National Academies Press. https://doi.org/10.17226/12875

Institute of Medicine. (2012). How Far Have We Come in Reducing Health Disparities? Progress Since 2000: Workshop Summary. Washington, DC: National Academies Press. https://doi.org/10.17226/13383

Kaplan, R.M., and Hays, R.D. (2022). Health-related quality of life measurement in public health. Annual Review of Public Health, 10. Advance online publication. https://doi.org/10.1146/annurev-publhealth-052120-012811

Kazdin, A.E. (2018). Innovations in Psychosocial Interventions and Their Delivery: Leveraging Cutting-Edge Science to Improve the World’s Mental Health. Oxford, England: Oxford University Press.

Kelley, T.L. (1927). Interpretation of Educational Measurements. Yonkers-on-Hudson, NY: World Book Company.

Khera, R., Lu, Y., Lu, J., Saxena, A., Nasir, K., Jiang, L., and Krumholz, H.M. (2018). Impact of 2017 ACC/AHA guidelines on prevalence of hypertension and eligibility for antihypertensive treatment in United States and China: Nationally representative cross sectional study. BMJ (Clinical Research Ed.), 362, k2357. https://doi.org/10.1136/bmj.k2357

Kim, L., Portenoy, J.H., West, J.D., and Stovel, K.W. (2020). Scientific journals still matter in the era of academic search engines and preprint archives. Journal of the Association for Information Science and Technology, 71(10), 1218–1226. https://doi.org/10.1002/asi.24326

Kroenke, K., Spitzer, R.L., and Williams, J.B. (2001). The PHQ-9: Validity of a brief depression severity measure. Journal of General Internal Medicine, 16(9), 606–613. https://doi.org/10.1046/j.1525-1497.2001.016009606.x

Lahey, B.B. (2021). Dimensions of Psychological Problems: Replacing Diagnostic Categories with a More Science-Based and Less Stigmatizing Alternative. New York: Oxford University Press.

Lahey, B.B., Krueger, R.F., Rathouz, P.J., Waldman, I.D., and Zald, D.H. (2017). A hierarchical causal taxonomy of psychopathology across the life span. Psychological Bulletin, 143(2), 142–186. https://doi.org/10.1037/bul0000069

Larsen, K.R., and Bong, C.H. (2016). A tool for addressing construct identity in literature review and meta-analyses. MIS Quarterly, 40(3), 529–551; A1–A20. https://doi.org/10.25300/MISQ/2016/40.3.01

Lawson, K.M., and Robins, R.W. (2021). Sibling constructs: What are they, why do they matter, and how should you handle them? Personality and Social Psychology Review, 25(4), 344–366. https://doi.org/10.1177/10888683211047101

Lenartowicz, A., Kalar, D.J., Congdon, E., and Poldrack, R.A. (2010). Towards an ontology of cognitive control. Topics in Cognitive Science, 2(4), 678–692. https://doi.org/10.1111/j.1756-8765.2010.01100.x

Lilienfeld, S.O., Sauvigné, K.C., Lynn, S.J., Cautin, R.L., Latzman, R.D., and Waldman, I.D. (2015). Fifty psychological and psychiatric terms to avoid: A list of inaccurate, misleading, misused, ambiguous, and logically confused words and phrases. Frontiers in Psychology, 6, 1100. https://doi.org/10.3389/fpsyg.2015.01100

Linné, C.V., and Schröder, J. (1763). Genera Morborum: In Auditorum Usum.

Meenan, R.F., Mason, J.H., Anderson, J.J., Guccione, A.A., and Kazis, L.E. (1992). AIMS2. The content and properties of a revised and expanded Arthritis Impact Measurement Scales Health Status Questionnaire. Arthritis and Rheumatism, 35(1), 1–10. https://doi.org/10.1002/art.1780350102

Mischel, W. (2008). The toothbrush problem. APS Observer, 21(11).

Montazeri, A. (2008). Health-related quality of life in breast cancer patients: A bibliographic review of the literature from 1974 to 2007. Journal of Experimental & Clinical Cancer Research, 27(1), 32. https://doi.org/10.1186/1756-9966-27-32

Mountjoy, C.Q., and Roth, M. (1982). Studies in the relationship between depressive disorders and anxiety states. Part 2. Clinical items. Journal of Affective Disorders, 4(2), 149–161. https://doi.org/10.1016/0165-0327(82)90044-1

Murphy, G.L. (2002). The Big Book of Concepts. Cambridge, MA: MIT Press.

NASEM (National Academies of Sciences, Engineering, and Medicine). (2019a). Fostering Healthy Mental, Emotional, and Behavioral Development in Children and Youth: A National Agenda. Washington, DC: National Academies Press. https://doi.org/10.17226/25201

______. (2019b). Reproducibility and Replicability in Science. Washington, DC: National Academies Press. https://doi.org/10.17226/25303

______. (2021). Reducing the Impact of Dementia in America: A Decadal Survey of the Behavioral and Social Sciences. Washington, DC: National Academies Press. https://doi.org/10.17226/26175

National Research Council. (2004). Measuring Racial Discrimination. Washington, DC: National Academies Press. https://doi.org/10.17226/10887

National Science Board, National Science Foundation. (2021). Publications Output: U.S. Trends and International Comparisons. Science and Engineering Indicators 2022. NSB-2021-4. https://ncses.nsf.gov/pubs/nsb20214

Oh, D.J., Han, J.W., Kim, T.H., Kwak, K.P., Kim, B.J., Kim, S.G., Kim, J.L., Moon, S.W., Park, J.H., Ryu, S.H., Youn, J.C., Lee, D.Y., Lee, D.W., Lee, S.B., Lee, J.J., Jhoo, J.H., and Kim, K.W. (2020). Epidemiological characteristics of subsyndromal depression in late life. The Australian and New Zealand Journal of Psychiatry, 54(2), 150–158. https://doi.org/10.1177/0004867419879242

Okamura, K.H., Orimoto, T.E., Nakamura, B.J., Chang, B., Chorpita, B.F., and Beidas, R.S. (2020). A history of child and adolescent treatment through a distillation lens: Looking back to move forward. The Journal of Behavioral Health Services & Research, 47(1), 70–85. https://doi.org/10.1007/s11414-019-09659-3

Padwal, R., Campbell, N., Schutte, A.E., Olsen, M.H., Delles, C., Etyang, A., Cruickshank, J.K., Stergiou, G., Rakotz, M.K., Wozniak, G., Jaffe, M.G., Benjamin, I., Parati, G., and Sharman, J.E. (2019). Optimizing observer performance of clinic blood pressure measurement: A position statement from the Lancet Commission on Hypertension Group. Journal of Hypertension, 37(9), 1737–1745. https://doi.org/10.1097/HJH.0000000000002112

Pew Research Center. (2020). What Census Calls Us. https://www.pewresearch.org/interactives/what-census-calls-us/

Rapaport, M.H., and Judd, L.L. (1998). Minor depressive disorder and subsyndromal depressive symptoms: Functional impairment and response to treatment. Journal of Affective Disorders, 48(2–3), 227–232. https://doi.org/10.1016/s0165-0327(97)00196-1

Rector, T.S., and Cohn, J.N. (1992). Assessment of patient outcome with the Minnesota Living with Heart Failure questionnaire: Reliability and validity during a randomized, double-blind, placebo-controlled trial of pimobendan. Pimobendan Multicenter Research Group. American Heart Journal, 124(4), 1017–1025. https://doi.org/10.1016/0002-8703(92)90986-6

Regier, D.A., Narrow, W.E., Clarke, D.E., Kraemer, H.C., Kuramoto, S.J., Kuhl, E.A., and Kupfer, D.J. (2013). DSM-5 field trials in the United States and Canada, Part II: test-retest reliability of selected categorical diagnoses. The American Journal of Psychiatry, 170(1), 59–70. https://doi.org/10.1176/appi.ajp.2012.12070999

Simon, H.A. (1971). Designing organizations for an information-rich world. In M. Greenberger (Ed.), Computers, Communications, and the Public Interest, 37–52. Baltimore, MD: Johns Hopkins University Press.

Williams, B., Mancia, G., Spiering, W., Agabiti Rosei, E., Azizi, M., Burnier, M., Clement, D. L., Coca, A., de Simone, G., Dominiczak, A., Kahan, T., Mahfoud, F., Redon, J., Ruilope, L., Zanchetti, A., Kerins, M., Kjeldsen, S.E., Kreutz, R., Laurent, S., Lip, G., McManus, R., Narkiewicz, K., Ruschitzka, F., Schmieder, R.E., Shlyakhto, E., Tsioufis, C., Aboyans, V., Desormais, I., and ESC Scientific Document Group. (2018). 2018 ESC/ESH guidelines for the management of arterial hypertension. European Heart Journal, 39(33), 3021–3104. https://doi.org/10.1093/eurheartj/ehy339
