5

Data and Communications for Polar Research

Polar science—like many other fields of science—revolves around the collection and analysis of data. Numerous technology developments related to data connectivity, data communication, and data analytics are occurring within and beyond the scientific enterprise, yet many questions remain regarding how such developments can potentially be applied in remote, extreme polar environments. This session explored such questions under three main categories of discussion:

- Potential game changers for data connectivity: to explore two key developments that have the potential to transform Internet and data connectivity for Antarctic science—including the use of low Earth orbit (LEO) satellites and subsea telecommunication fiber-optic cables for science and data transfer. Speakers were asked to discuss: What is the potential for expanding these new avenues for Internet connectivity in the Antarctic? What sorts of technological and/or logistical challenges would need to be addressed to implement these systems in the harsh polar environment?

- Instrument data communication current capabilities and needs: to learn from researchers who are pushing the frontiers of how one captures and transfers data from field instruments deployed in polar research. Speakers were asked to discuss the current state of the art for data communication with particular types of research instruments deployed on land, on ice, and in aquatic environments; to discuss the most significant problems and limitations of current systems; and to share views on technological advances that would be most valuable to pursue.

- Advanced data analytics developments: to better understand the rapidly growing landscape of “big data” analytical methodologies (e.g., artificial intelligence [AI] including machine learning [ML], data–model integration, data fusion, and other methodologies) that can potentially be applied to countless complex issues in polar science. Although this topic is too broad to be addressed comprehensively within this limited workshop setting, speakers were asked to share illustrative examples of some cutting-edge data processing and analysis techniques that could be more widely applied to polar research.

POTENTIAL GAME-CHANGERS FOR CONNECTIVITY

Anthony Lyscio, Starlink, discussed the idea of deploying polar region connectivity service via Starlink, a high-performance, low-latency LEO satellite-based Internet service. Currently, Starlink is licensed to operate in Antarctica, but it does not have service there yet. It expects that initial service may become available by early 2023.

The addition of polar-specific launches with intersatellite links would allow Starlink to cover these regions, but this is a technical challenge. To balance the overall network, uptime trade-offs may be required (e.g., is 50 percent coverage acceptable?). Some of the other specific challenges include

- Cold-proofing technologies: Some technology maintenance will likely need to be managed by the customer.

- Mounting stability: Starlink does not expect the wind to have an impact on performance as long as mounting stability is adequate.

- Thermal considerations: A high-performance dish is designed to operate at ~55ºC. Once powered up, the dish can self-heat and operate below this temperature, but this process has not been well vetted for Antarctic-level conditions. Cables may be more of a challenge because they can become brittle at extreme temperatures.

- Snow and ice buildup: The buildup of snow and ice could degrade performance. The dish does have a “snow melt mode,” but the technology has not been validated in the field.

- Power needs: The high-performance kit operates with a maximum of 300 watts. Snow melt mode will increase energy consumption but it can be disabled as needed.
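To put the power figure in the list above into field-planning terms, the following minimal sketch converts a maximum draw into a daily energy ceiling. Only the 300-watt maximum comes from the talk; the function name, the `duty_cycle` parameter, and the choice to treat 300 watts as an upper bound on draw are illustrative assumptions.

```python
def daily_energy_wh(max_power_w=300.0, duty_cycle=1.0, hours=24.0):
    """Upper-bound energy use in watt-hours over `hours`.

    max_power_w: maximum draw of the kit (300 W is the figure quoted in the talk).
    duty_cycle:  hypothetical fraction of time the terminal is powered (0 to 1).
    """
    if not 0.0 <= duty_cycle <= 1.0:
        raise ValueError("duty_cycle must be between 0 and 1")
    return max_power_w * duty_cycle * hours


# Continuous operation at the maximum draw: 300 W * 24 h = 7200 Wh (7.2 kWh).
always_on = daily_energy_wh()

# A terminal powered only half the time would need at most half of that.
half_time = daily_energy_wh(duty_cycle=0.5)
```

Because 300 watts is a maximum, actual consumption would normally fall below these values; the calculation is meant only to bound the size of a remote power system.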

Matt Fouch, Subsea Data Systems, discussed the potential to improve data and communication possibilities through the use of Science Monitoring and Reliable Telecommunications (SMART) repeaters on subsea cables. The current cables installed on the ocean floor include ~20,000 repeaters to transmit signal across long distances. The vision is to use these repeaters for science and monitoring capabilities (You 2010). The initial goal is to install new monitoring sensors (e.g., for pressure, temperature, and seismic) on some or all of these repeaters. Cables are replenished every 20–25 years, so when new cables are laid, sensor-enabled repeaters can be installed. A United Nations multiagency task force has been leading the adoption of this strategy, and there is broad support for SMART repeaters, but none have been built yet.

Subsea Data Systems was founded to develop SMART cable technology and to manage the resulting data ecosystem. It uses commercial off-the-shelf sensors that can be placed either inside a repeater or outside the repeater's pressure housing in a sensor pod (e.g., Lentz and Howe 2018). New and existing proposed cables to provide telecommunications to Antarctica present an important opportunity to include SMART repeaters and branched units that are mini-science observatories. Ultimately, SMART cables can be a great opportunity to meld research programs, the private sector, and government agencies, with opportunities to provide groundbreaking new science data and to benefit society, Fouch said.

INSTRUMENT DATA COMMUNICATION: CURRENT CAPABILITIES AND NEEDS

Elizabeth Bagshaw, Cardiff University, discussed a system that she and her team are designing, Cryoegg, to transmit data from subglacial environments. Cryoegg is a flexible wireless sensor platform designed to go underneath ice sheets to collect data and transmit those data to the surface using radiofrequency, following groundwork by radar glaciologists and other wireless-probe designers. It currently measures the temperature, pressure, and electrical conductivity of water to learn about the subglacial environment and about how meltwater interacts with the ice bed. Cryoegg is a “sacrificial” instrument (i.e., it is not meant to be recovered after deployment) that transmits data to the surface to an autonomous receiver that logs data. Data collected are simple to help minimize the data transmission needs, which improves communication performance. (See Box 7 for more on data transmission issues.) Data can then be transmitted onward via Iridium or another satellite provider.
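The point that simple data reduce transmission needs can be illustrated with a minimal sketch that packs one temperature, pressure, and conductivity reading into a six-byte packet. The packet layout, scaling factors, and function names are hypothetical and are not taken from the Cryoegg design.

```python
import struct

# Hypothetical layout: three big-endian unsigned 16-bit integers (6 bytes total).
PACKET_FORMAT = ">HHH"


def encode_reading(temp_c, pressure_kpa, conductivity_us_cm):
    """Pack one reading into 6 bytes using assumed fixed-point scalings."""
    temp_raw = round((temp_c + 50.0) * 100)      # 0.01 degC steps, offset by 50 degC
    pressure_raw = round(pressure_kpa)           # 1 kPa steps, up to 65535 kPa
    cond_raw = round(conductivity_us_cm * 10)    # 0.1 uS/cm steps
    return struct.pack(PACKET_FORMAT, temp_raw, pressure_raw, cond_raw)


def decode_reading(packet):
    """Recover (temp_c, pressure_kpa, conductivity_us_cm) from a 6-byte packet."""
    temp_raw, pressure_raw, cond_raw = struct.unpack(PACKET_FORMAT, packet)
    return temp_raw / 100 - 50.0, float(pressure_raw), cond_raw / 10


packet = encode_reading(-0.52, 11800, 12.3)
temp_c, pressure_kpa, conductivity = decode_reading(packet)
```

Fixed-point integers trade resolution for size: each field here costs two bytes rather than the four or eight bytes of a floating-point value, which matters on a low-power radio link.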

The main challenge that Bagshaw and her team have experienced is that the Cryoegg must fit in a small space, so it requires a thoughtful design with a very small radio antenna. When they tested the device in Greenland, they lowered it down a standard ice core borehole. After deployment of the device, they set up their surface receivers. Although the team got the data they expected, data stopped transmitting at ~1,300 m, due to a failure of some seals around the sensors. If they can address these mechanical problems with new pressure seals, they think they can collect data down to 2.5 km. Thus far, signals were transmitted through the ice from a small, low-power platform, and the team was able to collect data in real time.

Lee Freitag, Woods Hole Oceanographic Institution, discussed some emerging technology solutions for robotic exploration under Arctic ice shelves. He discussed three communication-related issues with the development of these technologies: acoustics, light fiber-optic communication, and free-space optical communications. Just as a GPS receiver needs signals from multiple satellites, an autonomous system needs acoustic signals from multiple beacons to compute its location. Beacons can be lowered through drill holes and placed in the ice or they can be placed in front of the ice shelves. Placing them in front of the ice shelf may be easier, but placing them in drill holes may be an attractive option when autonomous systems need to use them far under the ice (e.g., 50 km or more). Autonomous vehicles can use acoustic homing to get to a beacon and upload data to it.
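The need for multiple beacons can be illustrated with a minimal two-dimensional sketch of range-based positioning. It assumes that beacon positions are known and that ranges have already been derived from acoustic travel times and an assumed sound speed; the function name and the linearized least-squares method are illustrative choices, not a description of the systems Freitag presented.

```python
import math


def locate_2d(beacons, ranges):
    """Estimate (x, y) from three or more beacon positions and measured ranges.

    Subtracting the first range equation from each of the others removes the
    quadratic terms and leaves linear equations in x and y, which are solved
    here by least squares through the 2x2 normal equations.
    """
    if len(beacons) < 3 or len(beacons) != len(ranges):
        raise ValueError("need at least three beacons, one range per beacon")
    (x0, y0), r0 = beacons[0], ranges[0]
    rows, rhs = [], []
    for (xi, yi), ri in zip(beacons[1:], ranges[1:]):
        rows.append((2.0 * (xi - x0), 2.0 * (yi - y0)))
        rhs.append(r0**2 - ri**2 + xi**2 + yi**2 - x0**2 - y0**2)
    a11 = sum(a * a for a, _ in rows)
    a12 = sum(a * b for a, b in rows)
    a22 = sum(b * b for _, b in rows)
    b1 = sum(a * c for (a, _), c in zip(rows, rhs))
    b2 = sum(b * c for (_, b), c in zip(rows, rhs))
    det = a11 * a22 - a12 * a12
    if abs(det) < 1e-12:
        raise ValueError("beacons are collinear; position is not determined")
    return (a22 * b1 - a12 * b2) / det, (a11 * b2 - a12 * b1) / det


# Hypothetical beacon layout in metres and a vehicle at (300, 400).
beacons = [(0.0, 0.0), (1000.0, 0.0), (0.0, 1000.0)]
ranges = [math.hypot(300.0 - bx, 400.0 - by) for bx, by in beacons]
x, y = locate_2d(beacons, ranges)
```

With fewer than three non-collinear beacons the linear system is underdetermined, which is why a single beacon supports homing but not a full position fix.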

Freitag also discussed light fiber-optic communication, which offers considerable promise for long-range control of hybrid vehicles exploring deep under ice shelves. A ship can launch and control the vehicle, or the vehicle and fiber management system can be staged and then deployed from seasonal ice to proceed under the shelf. For data offloading, Freitag discussed the use of free-space optical communications. The primary use for these optical modems has been for data offload. An underwater vehicle can swim down to an instrument that has been sampling on the seafloor for months or years and then use the optical modem to offload hundreds of megabytes of data without having to recover the instrument. Another potential use of optical communications is to offload sensor data from a vehicle after a mission. This could be done with an optical modem lowered through a drill hole in the shelf, and could also include a line for the vehicle to home to and hold onto while offloading data and waiting for the next mission.

Melinda Holland, Wildlife Computers, gave an overview of how animal-borne sensor tags (see Figure 11) have developed over the past few decades. She discussed how they collect data and transmit information, focusing on satellite telemetry, which is necessary for studies where the animal cannot be recaptured to recover the instrument. Tags incorporate low-power sensors to collect data and use onboard processors to orchestrate the data collection, storage, and archiving. There have been continuous improvements over the past few years in the power of microprocessors and low-powered sensors. Tags can now leverage technology such as AI and ML to do sophisticated event detection and transformation of data into useful information. Once the information is created, the tag transmits it to the satellites, and data centers decode the path messages for the end user.

Wildlife Computers currently uses Argos transmitters, which are low-powered (~400 milliwatts or less), meaning the transmitters can use small batteries. However, the satellite industry is growing, and some companies are developing even smaller, more sensitive, and less expensive receivers than Argos. Similarly, Argos is making updates to its older satellite receivers. This new generation of satellite receivers can be carried on SmallSat spacecraft.

Even with improvements in satellites, coverage may never reach 100 percent. To meet the research needs where more coverage is needed, Wildlife Computers developed land-based receivers called “Motes.” Animals must move into view of the Mote for the tag transmissions to be received, so the higher the Mote, the farther the range. Motes require external power, so advances in power systems in the extreme cold will be necessary for use in some polar regions. The data from Motes can be retrieved manually or via data portal if the Motes are connected to the Internet. Two possible ways to increase the data throughput from animal-borne tags are through (1) more powerful onboard deterministic and AI and ML algorithms to process the sensor data and (2) telemetry systems that provide the most throughput within the constraints on animal behavior. As the next generation of animal-borne tags is developed, hardware and software architecture will need to evolve to keep up with emerging technology.
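The relationship between Mote height and range noted above can be approximated with the standard geometric line-of-sight formula, sketched below. The function name is hypothetical, and the calculation assumes a smooth spherical Earth and ignores atmospheric refraction, terrain, and the link budget of any real receiver.

```python
import math

EARTH_RADIUS_M = 6_371_000.0  # mean Earth radius


def line_of_sight_km(mote_height_m, tag_height_m=0.0):
    """Approximate geometric horizon distance between a Mote and a tag.

    Uses d = sqrt(2 * R * h) for each end of the link and sums the two
    distances; valid when heights are small compared with Earth's radius.
    """
    d_mote = math.sqrt(2.0 * EARTH_RADIUS_M * mote_height_m)
    d_tag = math.sqrt(2.0 * EARTH_RADIUS_M * tag_height_m)
    return (d_mote + d_tag) / 1000.0


# A receiver 10 m above the sea sees a surface tag out to roughly 11 km;
# raising it to 100 m extends that to roughly 36 km.
low_site = line_of_sight_km(10.0)
high_site = line_of_sight_km(100.0)
```

Because range grows with the square root of height, a tenfold increase in elevation extends the horizon by only about a factor of three.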

Mike Rose, British Antarctic Survey (BAS), discussed some of the technology challenges and developments he and his team have experienced over many years of Antarctic field deployments. He noted that traditional methods of deploying instruments with large ships and crewed aircraft are expensive and generate a lot of carbon dioxide, contributing to climate change; hence, there is increasing interest in developing small autonomous air and sea observing systems (such as those other speakers have described). The availability of tractor trains to deploy instruments in the field removes the weight constraints one faces when relying on small aircraft.

Rose also noted that there are limited possibilities to work with commercial industries to develop the technologies needed for Antarctic research; thus, the research community has to continually assess what instruments to develop themselves and what they can adapt from the marketplace. While we cannot control when and what technology breakthroughs occur, we can control how well new technology is implemented for better use. Rose believes there is great room for improvement in fostering knowledge sharing of best practices and technology solutions for Antarctic and polar research generally.

One notable technological advance made by BAS occurred when growing chasms in the ice shelf forced it to cease having winter staff at the station. To keep the station scientifically active, BAS provided power using a Capstone microturbine, which can run unattended for an entire year. BAS did not develop the technology, but it has learned a lot about how to run one successfully, safely, and in an environmentally sound way. Rose believes that this knowledge could be shared and improved upon by the community.

ADVANCED DATA ANALYTICS DEVELOPMENTS

Krithika Manohar, University of Washington and AI Institute in Dynamic Systems, highlighted how AI and ML research may have some synergies with polar research. Her team’s AI and ML models are grounded in the physics of the dynamic system being studied. Her work on optimal sensing for flow reconstruction and prediction is motivated by the limited sensing available in real-world applications, including polar research. Sensor placement is a design problem layered on top of an existing data optimization problem, and it is especially demanding in large-scale systems with many degrees of freedom. Their approach is to leverage learned physics in the optimal sensing problem to efficiently optimize sensor placement (Manohar et al. 2018). Their sensor placement methodology is rooted in linear inverse problems, but it generalizes to many other applications, including control and nonlinear sensing.

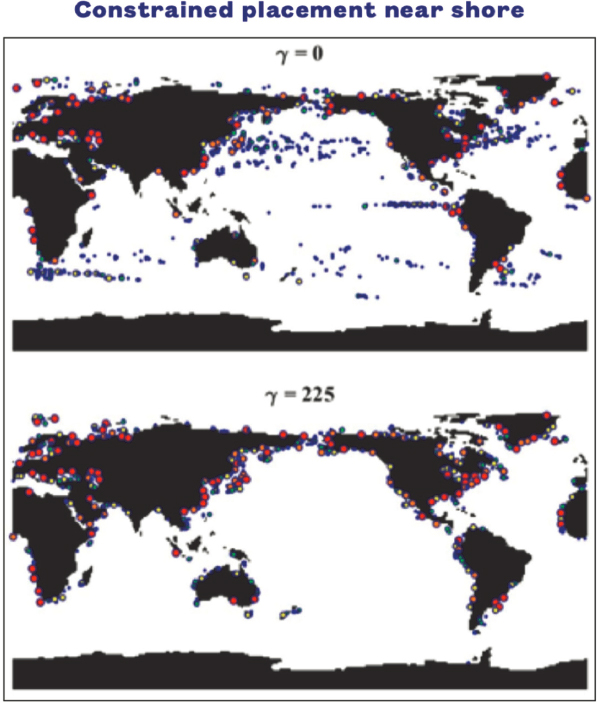

In dynamic systems, spatiotemporal correlations afford an even higher degree of compression. The team can also enforce more realistic sensing constraints for real-world applications, such as requiring that high-fidelity sensors or buoys in the ocean be deployed near coastlines, by introducing a spatial constraint parameter (called gamma) (Clark et al. 2019; see Figure 12). This can be particularly useful when both the number of sensors and their placement are constrained, as is common in polar research. They can also generalize this to sensor and actuator placement by optimizing systems-theoretic observability and controllability metrics in networked dynamical systems. They have software for the optimal sensing framework and are pursuing ongoing work in atmospheric source inversion and optimal sensing, as well as prediction and control models.
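One greedy technique often used for sensor placement in linear inverse problems is QR factorization with column pivoting applied to a set of learned spatial modes. The sketch below implements that pivoting rule in plain Python as an illustration. Whether it matches the team's software is an assumption, and the function name, input layout, and example modes are hypothetical.

```python
import math


def greedy_sensor_placement(modes, n_sensors):
    """Choose sensor locations by greedy column pivoting.

    modes: list of r spatial modes, each a list of values at n candidate
           locations (hypothetical input, e.g., modes learned from data).
    Returns the indices of the chosen locations in order of selection.
    """
    n = len(modes[0])
    # Column j holds the value of every mode at candidate location j.
    cols = [[mode[j] for mode in modes] for j in range(n)]
    chosen = []
    for _ in range(n_sensors):
        norms = [sum(v * v for v in col) for col in cols]
        j = max(range(n), key=lambda k: norms[k])
        if norms[j] < 1e-12:
            break  # remaining locations add no new information
        chosen.append(j)
        scale = math.sqrt(norms[j])
        q = [v / scale for v in cols[j]]
        # Remove the chosen direction from every column so the next pick
        # favors locations that carry different information.
        for col in cols:
            dot = sum(a * b for a, b in zip(col, q))
            for i in range(len(col)):
                col[i] -= dot * q[i]
    return chosen


# Two hypothetical modes sampled at five candidate locations.
modes = [
    [0.1, 0.9, 0.2, 0.0, 0.3],
    [0.0, 0.1, 0.8, 0.1, 0.2],
]
sensors = greedy_sensor_placement(modes, 2)
```

In this toy example the procedure selects locations 1 and 2, where the first and second modes respectively are strongest. Spatial constraints such as the gamma parameter described above are not modeled here.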

Building on Manohar’s discussion of advanced data analytics techniques generally, Maryam Rahnemoonfar, Lehigh University, focused on data analytics techniques developed specifically for polar regions. Over the past 40 years, much data have been collected in the polar regions with different sensors (e.g., lidar, radar, optical, multispectral) and from different platforms (uncrewed aerial vehicles, drones, satellites, and ground-based measurements). At the same time, many physical models have been developed to detect ice sheet beds and internal layers and to predict ice dynamics and their contribution to sea level rise. However, data and physics models often do not communicate with each other. Rahnemoonfar and her team are addressing this gap using AI and ML combined with physics (e.g., of the sensor or hardware, signal or image, land, atmosphere).

For calculating ice thickness and predicting its contribution to sea level rise, it is important to study both the ice surface and the subglacial topography, which is observed with radar sensors. Rahnemoonfar described several challenges associated with such observations: for example, low signal-to-interference and -noise ratios; highly variable subglacial topography; and surface multiples, which are artifacts that should not be detected. To overcome these challenges, Rahnemoonfar and colleagues have developed a model-based approach to tracking the surface and the bottom of the ice sheet. However, model-based approaches require a lot of parameter tuning, which is not practical for big data. Because of that, they are using ML and deep-learning approaches. To train these models, they have drawn on the physics of the sensor (e.g., radar) and some data-driven approaches to generate data and to handle data with missing labels (Yari et al. 2021). The generated data have proven to be relatively accurate. They are also trying to combine the physics of the atmosphere with ML, which has reduced the error.