5

Workshop 1, Session 3: Sustainable Strategies and Digital Tools to Expand Implementation of PCOR Findings

USING THE LEARNING COLLABORATIVE APPROACH FOR IMPLEMENTING AND SCALING INNOVATION

James Schuster, chief medical officer for the University of Pittsburgh Medical Center’s (UPMC) insurance services division and a member of the PCORI Board of Governors, began by discussing the Community Care Behavioral Health Organization. This is the umbrella organization under which he conducted his work and a subsidiary of UPMC that manages behavioral health services on behalf of over a million Medicaid members across 43 counties in Pennsylvania. Community Care’s mission is to improve the health and well-being of the community through the delivery of effective, cost-efficient, and accessible behavioral health services. His work has focused on adults living with serious mental illness. He explained that these individuals frequently have unmet medical needs, placing them at significant risk of developing serious physical health issues (Afzal et al., 2021; De Hert et al., 2009; Rossom et al., 2022). Individuals with serious mental illness die as many as 10 to 20 years earlier than the general population because of the increased prevalence of cardiovascular disease, diabetes, and obesity (WHO, 2018a).

___________________

1 A Learning Collaborative is a systematic approach to process improvement based on the Institute for Healthcare Improvement Breakthrough Series Collaborative model. During the Collaborative, organizations will test and implement system changes and measure their impact. They will share their experiences to accelerate learning and broader implementation of best practices (CIBHS, 2015).

Wellness coaching plays a central role in the Behavioral Health Home Plus model developed by Schuster and his colleagues in 2010. Schuster explained that wellness coaching is a strategy for care managers, peer specialists, and other health care staff to help individuals develop skills for self-managing their physical health conditions and other challenges they may face (Swarbrick, 1997, 2006; Zechner et al., 2021). The Behavioral Health Home Plus model focuses on enhancing behavioral health providers’ capacity to serve as health homes; to provide comprehensive care management, care coordination and health promotion; and to link service users to community resources. After observing positive results in a small demonstration project, Schuster and colleagues in the UPMC Center for High-Value Health Care conducted a 2-year trial at 11 community mental health settings that treat Medicaid beneficiaries, comparing a provider-directed intervention with this self-management approach (Schuster et al., 2019). The first year of the trial focused on implementation of the model using the Institute for Healthcare Improvement’s Learning Collaborative model. Key outcomes from the 2-year trial included

- enhanced patient activation and engagement in care,

- a shift of care from the hospital to community settings,

- sustained practice transformation,

- a greater than 20 percent reduction in cost of care, and

- a cultural change in community care settings that led providers to be more attentive to their own health.

The success of the learning collaborative approach, said Schuster, relies heavily on significant technical support for providers and the agencies that employ them. It is not enough to educate providers or provide tools, said Schuster. “It is optimal to provide ongoing, structured technical assistance,” which the implementation team did over the entire 2-year period, but especially over the first year. For example, the team held two to three learning, engagement, and work review sessions with individual providers each month using a plan-do-study-act (PDSA) approach. Given this success, Schuster and his colleagues expanded the model to new populations using the same learning collaborative approach, including mental health services for adults in community-based settings, in children’s outpatient services settings, and in a health home model that uses community- and school-based teams of clinicians to work with children in school, at home, and in other community settings. They also expanded the program to specialty peer support programs, opioid treatment providers, and psychiatric residential treatment providers.

Schuster explained that one key factor that contributed to the success of this implementation effort was the extensive outreach to agency leadership he and his colleagues conducted prior to trying to engage the treatment team. As a result, agency leadership helped drive staff engagement and participation. Qualitative evaluations revealed that providers felt positively about the support and resources they received. Providers also identified areas for improvement, which included further tailoring materials for the youth population, bolstering support for site leadership, and increasing workbook navigation training. Quantitative results showed that the learning collaborative approach increased staff confidence, increased documented reciprocal communication between behavioral and physical health providers, boosted the number of individuals with a wellness plan, and led to individuals feeling engaged in their own care.

In closing, Schuster said that UPMC’s Community Care Behavioral Health Organization is now using the learning collaborative model to address additional practice challenges in other care settings. While he sees this as a successful model, he acknowledged that it does require a significant focus by the agency that is trying to encourage behavior change and the provision of substantial resources to the agencies.

Key facilitators of success include:

- taking a structured approach to scaling;

- getting leadership support and participation in the quality improvement team;

- defining and monitoring key milestones and process and outcome measures;

- using the PDSA cycle;

- employing shared learning in a safe and supportive environment; and

- celebrating successes.

PERSON-CENTERED AND SUSTAINABLE DIGITAL REPRODUCTIVE HEALTH INTERVENTIONS

Tamar Krishnamurti is an assistant professor of medicine and clinical and translational science at the University of Pittsburgh and founder of the FemTech Collaborative within the University of Pittsburgh’s Center for Innovative Research on Gender Health Equity. She began by explaining that the FemTech Collaborative grew out of various researchers’ efforts to generate technologies that could supplement the formal health care system, with the goal of improving people’s reproductive health and advancing reproductive health equity.

Krishnamurti opined that reproductive health indexes in the United States, particularly those related to maternal health, are substandard. She said these indexes in part reflect the inequities among people of color and those with preexisting medical conditions. Current health care delivery models can perpetuate poor reproductive health outcomes when they fail to identify people’s reproductive risks, their needs, and their preferences. She said current health care delivery models frequently do not adequately support people’s autonomy regarding their health care decision-making and inadequately address preventable adverse outcomes in a patient-centered manner.

One of the collaborative’s projects was to develop and implement the MyHealthyPregnancy app, which offers early identification and intervention on modifiable pregnancy-related risks. Krishnamurti and her collaborators originally designed the MyHealthyPregnancy platform 6 years ago as a means of identifying and intervening in modifiable risks that are precursors for preterm births prior to 37 weeks of gestation (Krishnamurti et al., 2022). They built the platform in collaboration with behavioral scientists, pregnant individuals, new mothers, physicians, human-computer interaction specialists, and community leaders. An individual’s doctor prescribes the app at the first prenatal visit, and the pregnant person uses it to routinely enter information that enables risk factor modeling with a machine learning algorithm and clinical best practice screening. The backend of the system then generates real-time tailored feedback on the individual’s pregnancy and provides connections to vetted, patient-centered resources both inside the health care system and in the community. The platform then transfers this information to the health system’s physician portal to alert providers and recommend clinical actions for risks that the app detects.

In an 18-month trial of MyHealthyPregnancy that enrolled 7,000 pregnant patients from UPMC’s health care system, the FemTech team assessed how well the system detected preeclampsia risk, a leading preventable cause of maternal mortality (Ananth et al., 2013; Ghulmiyyah and Sibai, 2012).

The FemTech team embedded multiple-choice questions in the app to assess risk criteria for preeclampsia and to allow the team to determine whether the MyHealthyPregnancy app could facilitate preventive care in the form of aspirin for preeclampsia. Krishnamurti explained that low-dose daily aspirin is an evidence-based therapy for reducing the risk of preeclampsia (Askie et al., 2007; Xu et al., 2015). They then sent a question out via the app to 2,500 app users to see if their providers had prescribed aspirin and, if so, how often the app users were taking it (Krishnamurti et al., 2021b). Of the 124 individuals at highest risk for preeclampsia, 73 percent had a recommendation for aspirin use in their chart, but only 37 percent were aware of that recommendation. Only about half of those who were aware of the recommendation adhered to the prescribed regimen. She said that the study results suggest that the risk criteria for preeclampsia are underused to some extent, and that physicians may have been relying more heavily on some cues, such as chronic hypertension, than on other risk factors when assessing their patients’ risk. “All of this is to say we were able to use a digital tool like MyHealthyPregnancy to identify risk cues that physicians were relying on and examine which risk factors might be routinely missed,” she said. This finding led to a change in clinical practice that UPMC has implemented in its health care system. “This tool let us identify folks at risk from a distance, but it also allowed us to update how they are cared for in person,” she said.

Krishnamurti said that when building the MyHealthyPregnancy platform, the FemTech team developed effective predictive machine learning models that allowed the team to pose indirect questions to app users to assess their risk for intimate partner violence throughout pregnancy (Krishnamurti et al., 2021a). She explained that intimate partner violence is a difficult subject to raise with patients. As a result, intimate partner violence often goes undiscussed and undetected. The app has been able to identify pregnant people at risk who were not identified during visits with health care providers. The app connects people identified as at risk with resources. The team has modified that process based on feedback from app users.

Krishnamurti said that two of the most important takeaways from the MyHealthyPregnancy project are that digital tools can sometimes serve as a safe space to disclose sensitive information, and that these tools offer a different layer of connection that is particularly important in situations where access to in-person care is limited. She noted that the team learned through user feedback that it is important to be mindful of creating tools that feel personalized without being invasive. Another lesson was that the data generated by a digital tool can identify gaps in care, as the app did with preeclampsia. She noted that UPMC’s women’s health service has done a phenomenal job in combining such data and establishing streamlined responses to risks identified in between routine prenatal care visits.

In terms of the potential for digital women’s health tools, Krishnamurti said that the market size for FemTech is estimated to be over a trillion dollars in the next five years, which means the stakes are high for how developers create these technologies and how health systems adopt them. Given that, she shared some additional high-level pitfalls that can arise when technology is seen as an all-encompassing solution rather than as a supplement to care. First, digital tools can create an opportunity for the perpetuation of misinformation as well as for disengagement between patients and health care professionals at a time when human connections are increasingly important for delivering quality care. Second, monetized sensitive data can be used to exploit rather than to alleviate fear. “That is particularly egregious when we know so much about the relationship between stress and poor health outcomes,” said Krishnamurti. Third, it is important to remember that machine learning algorithms rely on data that may be inherently biased in how they were collected and in the modeling assumptions applied to them, which can exacerbate inequities. Finally, while smartphone ownership is almost ubiquitous across sociodemographic groups, the quality and the cost of access differ across those groups, which can again exacerbate inequities. Given these considerations, the FemTech Collaborative has agreed on the following set of principles to guide how it builds and evaluates its tools (Krishnamurti et al., 2022):

- Ground content in evidence-based science.

- Advance health equity.

- Center the needs and preferences of those seeking care.

- Support patient autonomy in managing their care.

- Incorporate community stakeholders as partners.

- Be iterative and flexible over time with what is being built.

ENGAGING PEOPLE IN INNOVATIVE DIGITAL INTERVENTIONS

Andrea Graham, clinical psychologist and assistant professor at the Center for Behavioral Intervention Technologies at Northwestern University’s Feinberg School of Medicine, discussed engaging people with digital tools. She began by noting that digital health tools have been considered an opportunity to extend provision of health care treatment beyond in-person interactions. However, when digital interventions have moved from research settings to real-world settings, they often have low rates of use and retention among patients, failed integration within systems of care, or failed implementation. Graham said the source of the disconnect is that developers often do not design their interventions for users and the contexts in which health systems implement them. Digital tools need to support contextually relevant care that matches the patients’ needs through personalization and precision. These tools also need to use pragmatic design approaches to match clinician workflow. She said human-centered design is a useful strategy to make digital health tools that better engage patients and providers (Graham et al., 2019).

Graham and her colleagues have proposed a model of user experience (Figure 5-1) that applies the experimental therapeutics approach, or the science of behavior change, to consider how a digital tool (in this case a theoretical digital mental health tool) can improve the user experience, and thus improve clinical outcomes for users (Graham et al., 2019). The model focuses on how someone uses the digital tool and how useful it is, asking several questions:

- Does it help them achieve things in their daily lives that they would like to achieve?

- Can they navigate it effectively and easily?

- Can they learn to use it easily?

- Are they satisfied with their experience?

Graham explained that integrating new interventions into existing workflows is challenging, particularly integrating digital tools because the delivery differs by design from in-person services. In part, this challenge exists as a

SOURCE: Reproduced from Graham et al., 2019. Reproduced with permission from JAMA Psychiatry. 2019. 76(12): 1223-1224. Copyright ©2019 American Medical Association. All rights reserved. Presented by Andrea Graham on June 9, 2022, at Accelerating the Use of Findings from Patient-Centered Outcomes Research in Clinical Practice to Improve Health and Health Care: A Workshop Series.

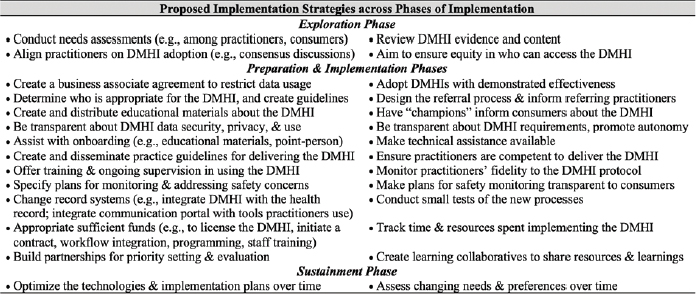

result of the gap in knowing what methods and techniques are most effective for implementing digital interventions into health care settings. Graham and her colleagues assembled a tailored list of implementation strategies specifically for digital health interventions to address that gap (Figure 5-2) (Graham et al., 2019). This list proposes strategies for each phase of implementation. She noted that workflow considerations remain among the least explored but most needed factors toward facilitating implementation of digital health interventions (Torous et al., 2021).

Graham discussed a suite of apps her team has been developing that focus on interventions for depression and anxiety as an example of workflow integration (Graham et al., 2020a). This suite includes digital tools that individuals can use to improve their symptoms and that fit into their daily lives. The suite also provides coaching from a paraprofessional. Graham and her team conducted a clinical trial of the mental health app platform for use in a primary care setting. In terms of workflow integration, specifically referral management, Graham’s team deployed several strategies to refer individuals to the app suite (Graham et al., 2020a). Strategies included direct-to-consumer approaches, giving presentations on campus, and clinician referrals through an electronic health record (EHR) alert that would prompt the physician to place an order through the EHR.

NOTE: DMHI refers to digital mental health intervention.

SOURCE: Reproduced from Graham et al., 2019. Copyright © 2019 by American Psychological Association. Reproduced with permission. Presented by Andrea Graham on June 9, 2022, at Accelerating the Use of Findings from Patient-Centered Outcomes Research in Clinical Practice to Improve Health and Health Care: A Workshop Series.

Graham explained that one finding from the clinical trial was that interoperability with an EHR is often not consistent, and does not always match what designers think they are trying to build. In this case, EHR referral alone accounted for only 4 percent of the people who used the app and enrolled in the clinical trial. However, of those individuals who were referred to the app as the result of an EHR alert, 50 percent ended up participating in the clinical trial, compared to 4 percent of those reached via the direct-to-consumer approach. She noted this demonstrated the importance of the provider-patient interaction for digital tool uptake.

Graham said referral management is an important step in what she called the implementation cascade that first identifies people in need of care, convinces them to want the intervention, and then gets them to use it so they benefit from care (Graham et al., 2020c). One challenge to addressing this step is deciding who is responsible for integrating digital health tools into the workflow.

Graham concluded by highlighting important considerations for design of digital health tools. She said context and sustainability should be considered in the earliest phases of the design process. This includes considering how the implementation process for the digital tool will be integrated into clinical workflow. Developers should seek to allow end users to engage with new digital tools as soon as possible in the design process and evolve design in an iterative manner based on end user feedback.

DISCUSSION

Considerations for Assessing Digital Tools

Session moderator Cara Nikolajski, director of research design and implementation at the UPMC Center for High-Value Health Care, asked the panelists to discuss whether designers should assess apps in terms of evidence-based workflow integration, attention to equity, and other factors so that clinicians know which tools to prescribe over others. Krishnamurti replied that the Food and Drug Administration (FDA) does regulate digital apps that either diagnose or treat a health care issue. FDA also has the right to oversee and regulate other apps that do not fully meet that criterion. However, she noted that there are many tools available that have not gone through rigorous regulatory examination. She explained that was why her presentation highlighted some of the guiding principles as a framework for both designing and evaluating digital tools, as well as for the people who are evaluating whether they want to adopt a particular tool. Graham remarked on the importance of collaboration when thinking about how to engage with clinicians and how to ensure that they understand the tool and feel comfortable and confident referring patients to the tool. For example, her team has found that in certain primary care settings there is not much time to talk about mental health issues, nor are primary care providers comfortable doing so. This suggests there is a need to take a step back in training, engage with the clinical team, and talk about how to have those conversations.

Nikolajski then asked the group to discuss any standard metrics that the Agency for Healthcare Research and Quality (AHRQ) should require for any implementation of a digital intervention. Schuster replied that in addition to outcomes, engagement rate should be evaluated. He noted the literature suggests that engagement rates with digital health tools are low unless accompanied by coaching or clinical service. Krishnamurti suggested that what counts as a good engagement rate would likely depend on the function of the tool being evaluated. “If someone uses a tool sparsely but they get real value from it (maybe they log in once or twice in our tool, register intimate partner violence, and access support for that), that minimal use could have a big impact,” she said. Krishnamurti said it is important to first define whom an app is designed to serve and how the app will serve them, and then develop the metric for engagement to capture who is using the tool and to what benefit. Schuster suggested a requirement to demonstrate a positive outcome at the population level, which will be contingent on engagement.

Opportunities to Combine Digital Tools and Community Health Workers

Another question from the audience asked the panelists to comment on possible opportunities for AHRQ to support combining digital tools with community health workers to provide care. Krishnamurti answered that digital tools represent an amazing opportunity to support community health workers and bridge gaps in health care. For example, digital tools might be useful for getting information from patients about risk factors that their physicians may not have the time to explore. They might also serve as a way in which community health workers can stay in touch with their clients and track their health issues. Krishnamurti said digital tools are complements to in-person care, not solutions on their own.

Graham agreed that digital tools are not a stand-alone solution. She noted that such tools can make it easier for clients to access more than one type of clinician, enhance health equity, and expand the diversity of whom the health care system can reach. It is important to be thoughtful about how data and opportunities to interact with a health care provider are integrated into the clinic workflow. “If patients are messaging all the time, who is responsible for reacting to that? Who is responsible for reading alerts and when in the workflow is it [message alert] going to pop up so that it can actually be really actionable?” she asked. She said that considering those factors is an important part of human-centered design, and they represent an important design implementation frontier the field needs to consider further.

Considering Equity when Designing Digital Tools

An audience member asked speakers to discuss strategies to address equity issues related to digital tools. Nikolajski noted both Graham and Krishnamurti discussed engaging stakeholders in the development process, which she said is one way to support advancing health equity. Schuster agreed and noted the need to ensure developers engage with communities that have health equity challenges and often access services less frequently than others. From an implementation science2 perspective, said Graham, it is important to consider equity when creating and testing a tool that may not be equally available to all who might benefit from that tool. For example, researchers should consider the implications of providing a scale or cell phone to a study participant and then taking it back once the study is done. Krishnamurti pointed out that there are few opportunities to fund the dissemination and maintenance of digital tools. This is one reason, she said, why so many promising digital tools and decision support applications are built but not implemented.

CLOSING SUMMARY OF WORKSHOP 1

Lauren Hughes concluded the workshop by summarizing her takeaways from the presentations and discussions. She said speakers highlighted several approaches for improving the design and implementation of research projects so that they are effective and generate trust, authenticity, intentionality, and longitudinal impact. Those include3

- co-creation with patients, families, and communities that are directly affected by a project;

- incorporating human-centered design approaches when designing interventions and involving a broad array of stakeholders and research colleagues; and

- including practice-based research networks (PBRNs) and community members as partners and respecting the expertise that community members bring to the research.

___________________

2 Implementation science is “the scientific study of methods to promote the systematic uptake of research findings and other EBPs [evidence-based practices] into routine practice, and, hence, to improve the quality and effectiveness of health services” (Bauer, 2015).

3 These points were made by the individual workshop speakers/participants identified above. They are not intended to reflect a consensus among workshop participants.

She said speakers also highlighted research and translation challenges that are important to identify and address to improve the impact of key research findings. These challenges include

- short funding cycles and funding sustainability;

- navigating complex adaptive systems that evolve during the course of research that can impact findings;

- developing effective approaches for disseminating research findings;

- increasing the use of effective digital tools that are designed for context, integrated smartly into workflows, and are engaging and valuable; and

- examining the design of studies to consider including mixed method approaches and more community-based participatory research.

She said another theme touched on by several speakers was the importance of continuing to challenge assumptions and consider new perspectives about engaging in patient-centered outcomes research (PCOR) and implementing PCOR findings. She highlighted several perspective-related considerations for researchers that were put forth by speakers:4

- While facts are universal, implementation is local, so researchers should attend to local factors that will impact implementation.

- Community outreach begins with an answer, but community engagement ends with an answer.

- Privilege can contribute to psychological distortions, which can lead to an “us-versus-them” mentality that contributes to health inequities.

- Researchers should practice cultural humility and engage an assets-based rather than deficits-based approach when working and collaborating with communities.

___________________

4 These points were made by the individual workshop speakers/participants identified above. They are not intended to reflect a consensus among workshop participants.