2

Understanding Implementation Science

Workshop Session Objective: To create a shared understanding of implementation science and considerations for conducting implementation science research.

Chapter 2 provides a snapshot of implementation science. In it, speakers explained common terms used in implementation science and described implementation outcomes within a pedagogical context. The chapter closes with suggestions from the speakers on how educators and researchers can start or expand their use of implementation science, since, as noted by the speakers, many are already doing it.

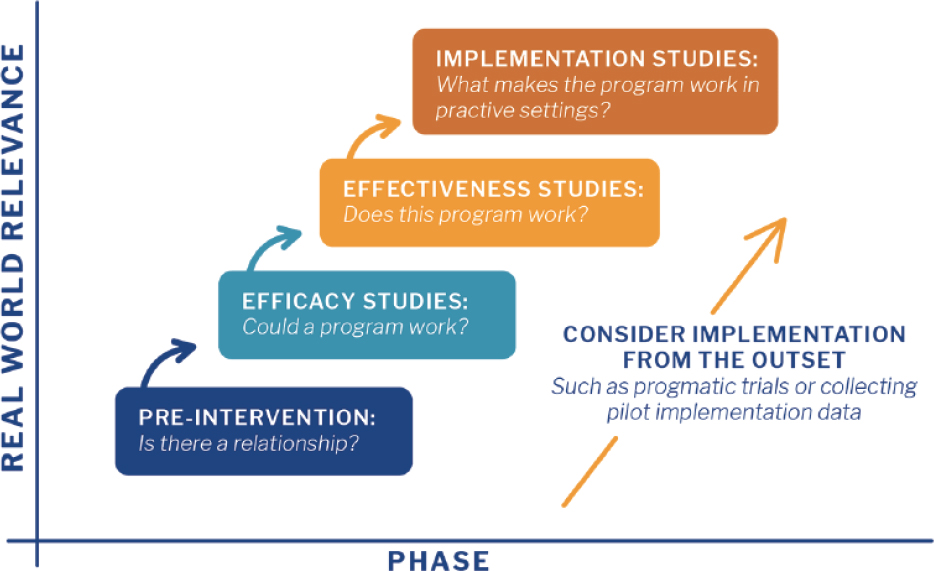

“What is implementation science?” asked Raechel Soicher, School of Psychological Science, Oregon State University. She offered two ways of understanding the concept. Implementation science was defined by Eccles and Mittman (2006) as the “scientific study of methods to promote uptake of research findings in real-world practice settings to improve quality of care.” This idea of implementation science stemmed from the world of medicine, public health, and behavioral health to bridge the gap between research and practice, she added. Another way of understanding implementation science is to view an intervention or practice (e.g., pedagogy) as the “thing,” implementation strategies as “the ways that we do the thing,” and implementation science as a way of measuring outcomes to evaluate how well people or institutions are “doing the thing.” Soicher explained that while traditional research looks at whether a practice is effective, implementation studies take this one step further and examine what, specifically, makes the practice work (Figure 2-1). What are the characteristics of the context, the infrastructure, and the process that make it work? Soicher added that implementation studies tend to be conducted with the assumption that the practice itself has been proven effective, but some implementation scientists argue that implementation should be considered from the outset. If research is designed to measure implementation outcomes starting at the preintervention phase, it will help build the evidence base for later phases.

Soicher explained the different phases of study (Figure 2-1) by using an example of a hypothetical memory intervention. At the preintervention phase, the question is simply whether there is a potential relationship between the intervention and being able to recall a list of words. Efficacy studies then test this relationship in a tightly controlled laboratory setting by, for example, testing whether flashcards can improve a person’s recall. (See Box 2-1 for a list of implementation science terms used by Soicher.) At the effectiveness phase, said Soicher, the intervention is introduced in a real-world setting to see if it would work in the environment where it might be used. For example, half the students in a class could be asked to make flashcards as they read the textbook during the semester, and their final exam scores would be compared with those of students who were not asked to make flashcards. Implementation science is the process of translating information from effectiveness studies into real-world use, said Soicher, from exploration to preparation to implementation to sustainment. As a program or practice is implemented, the idea is to begin with implementation in a local and specific context, then take the lessons learned from this experience and expand them into more generalizable knowledge that can be shared. To this end, it is critical to share as many details as possible about how implementation was conducted, what the barriers were, and what the context was.

SOURCE: Harvard Catalyst (n.d.), as presented by Raechel Soicher on May 20, 2022.

She emphasized the differences between traditional effectiveness studies and implementation studies. If a study looked at an intervention that was delivered in classes with different teachers, traditional research would treat the different teachers as variables to be controlled so the effect of the intervention could be isolated. In implementation science, by contrast, these variables are the object of study; research would attempt to identify differences between the teachers in order to determine whether a certain way of delivering the intervention was associated with better student outcomes. This type of research can identify the core components of the intervention so that it can be generalized to other contexts, and adapted for them, while maintaining effectiveness.
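The contrast between the two kinds of questions can be sketched with a small calculation. The following Python example is illustrative only: the teachers, scores, and the helper `intervention_effect` are hypothetical and were not part of Soicher's presentation. Pooling across teachers is also a simplification, since a real effectiveness analysis would control for teacher statistically rather than ignore it.

```python
from statistics import mean

# Hypothetical records: (teacher, used_flashcards, final_exam_score).
# All names and values are invented for illustration only.
records = [
    ("Teacher A", True, 82), ("Teacher A", True, 88),
    ("Teacher A", False, 75), ("Teacher A", False, 77),
    ("Teacher B", True, 74), ("Teacher B", True, 76),
    ("Teacher B", False, 73), ("Teacher B", False, 75),
]


def intervention_effect(rows):
    """Mean score with the intervention minus mean score without it."""
    treated = [score for _, used, score in rows if used]
    control = [score for _, used, score in rows if not used]
    return mean(treated) - mean(control)


# Effectiveness-style question: does the intervention work overall?
overall_effect = intervention_effect(records)

# Implementation-style question: does the effect differ by who delivers it?
effect_by_teacher = {
    teacher: intervention_effect([r for r in records if r[0] == teacher])
    for teacher in sorted({r[0] for r in records})
}

print("Overall effect:", overall_effect)
print("Effect by teacher:", effect_by_teacher)
```

In this invented data set, the pooled difference hides the fact that the gain is much larger in one teacher's class than in the other's. That gap is the kind of signal an implementation study would follow up on, by asking what differed in how the practice was delivered.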

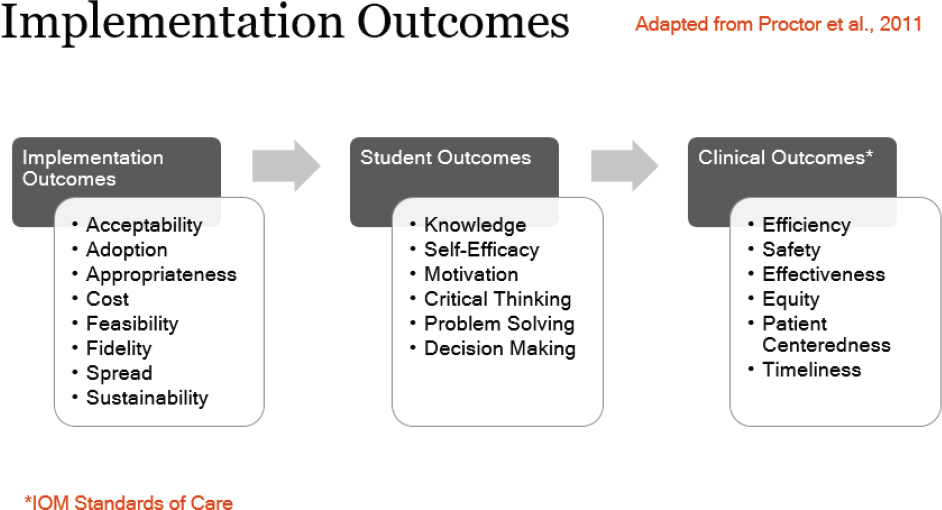

A large number of outcomes can be considered in implementation science, said Soicher (Figure 2-2). Implementation outcome measures (that is, how well or to what extent a pedagogical practice or program is implemented) include acceptability to students and faculty, rate of adoption, whether the practice or program is appropriate for the context, the cost and feasibility of the practice or program, how far the practice or program spreads, and whether it is sustainable over time. Soicher noted that fidelity is a particularly important implementation outcome. Fidelity refers to how closely a practice or program is implemented in the way it was designed. Many people want to modify a program to fit their context, she said, but most of the time it is unknown which components of the program are critical for it to be effective and which ones can be adapted.

SOURCE: Presented by Raechel Soicher on May 20, 2022 (adapted from Proctor et al., 2011).

Student outcomes of interest include knowledge gain, self-efficacy, motivation, critical thinking, problem solving, and decision-making skills. In health professions education, Soicher said, student outcomes will ultimately affect clinical outcomes, which can be evaluated using measures of efficiency, safety, effectiveness, equity, patient centeredness, and timeliness. While these are not the outcomes that implementation science directly focuses on, the thought is that implementation of a practice or program will affect the knowledge and skills of students, which will in turn affect outcomes for patients.

GETTING STARTED

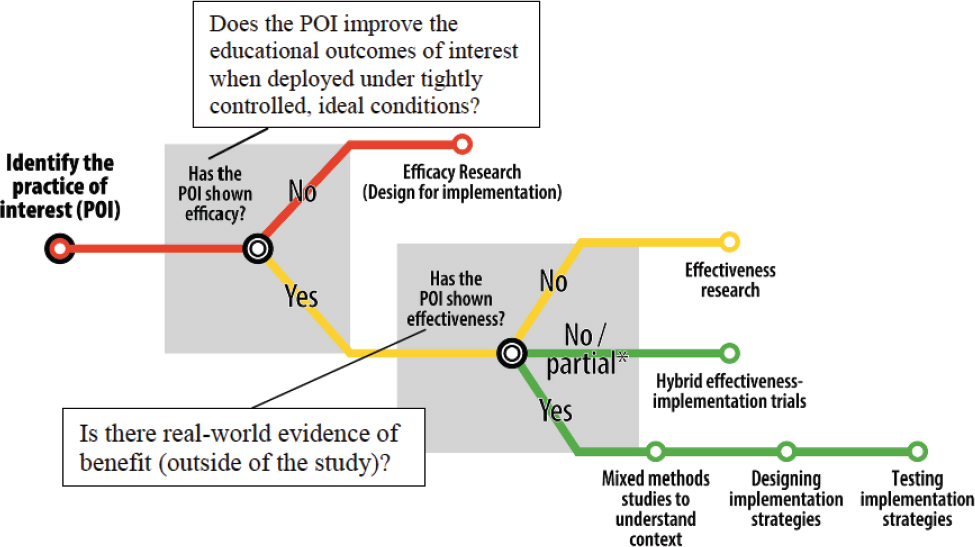

When beginning the process of translating a practice from research into use, Soicher said the first step is to identify the position of the practice on the “subway line” of translational research (Figure 2-3). For a practice to be ready for implementation, there must be evidence of both efficacy and effectiveness. If these data do not exist, efficacy or effectiveness research must be conducted; this research, she underscored, should be conducted with implementation outcomes in mind. Soicher said that when she first became interested in implementation science, she had to teach herself through books, conferences, and attending talks. Based on her experiences, she offered workshop participants two options for “dipping their toes” into the implementation science waters.

First, researchers can improve the transparency of their reporting. It can be difficult to replicate other people’s research because of a lack of details about how the intervention or program was actually implemented, she said. To improve transparency and replicability, Soicher encouraged researchers to use modified CONSORT guidelines in reporting (see Glasgow et al., 2018). Second, she said, researchers can evaluate the implementation process using a standardized model such as the one described by Damschroder and Hagedorn (2009). For example, when using a new practice in the classroom, teachers could collect data on whether the practice was easy to use or whether students were engaged. Soicher noted that many teachers already do this type of data collection but may not think of it as implementation science. Eric Holmboe, Chief Research, Milestones Development and Evaluation Officer at the Accreditation Council for Graduate Medical Education, suggested an additional resource: the Standards for Quality Improvement Reporting Excellence (SQUIRE), which was originally designed to improve consistency in reporting quality improvement studies (Ogrinc et al., 2008). Box 2-2 contains a summary of Soicher’s suggestions.

SOURCE: Presented by Soicher on May 20, 2022; Lane-Fall et al., 2019.