Mathematics and Statistics of Weather Forecasting

Weather forecasting is incredibly difficult. You may have noticed that meteorologists share forecasts with the caveat that uncertainty or variability should be expected. And, given the complexity of your local weather, you may wonder about the reliability of these forecasts.

Weather forecasting has dramatically improved over the past decade because of advances in theoretical mathematics and statistics, innovative mathematical and statistical models, constantly improving approaches to integrating data, efficient computing, and expanded data collection. Not only do these improvements help us understand tomorrow’s weather, but they also help communities prepare better for weather events.

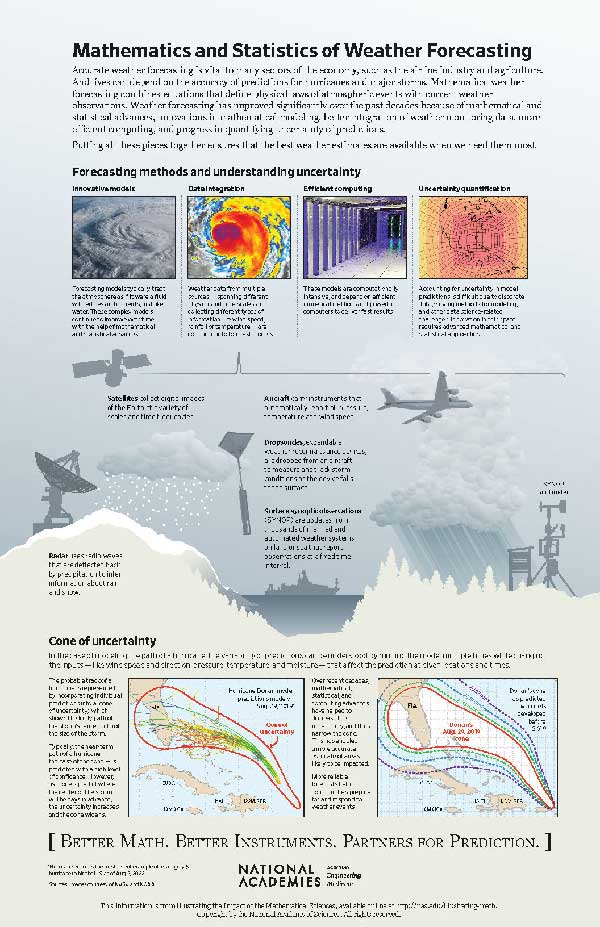

Forecasting Methods and Understanding Uncertainty

Numerical weather prediction models are collections of equations that describe weather patterns; they depend on computer simulations to find approximate solutions. These models have been used since the 1950s and rely on physical laws (including Newton’s second law of motion, conservation of mass, and thermodynamics), treating the atmosphere as a fluid with eddies and currents. This approach is dynamic, meaning that the weather today impacts the weather tomorrow. Although the models rest on fundamental physics that has been understood for decades, actually computing what they predict relies on up-to-the-minute mathematical developments.

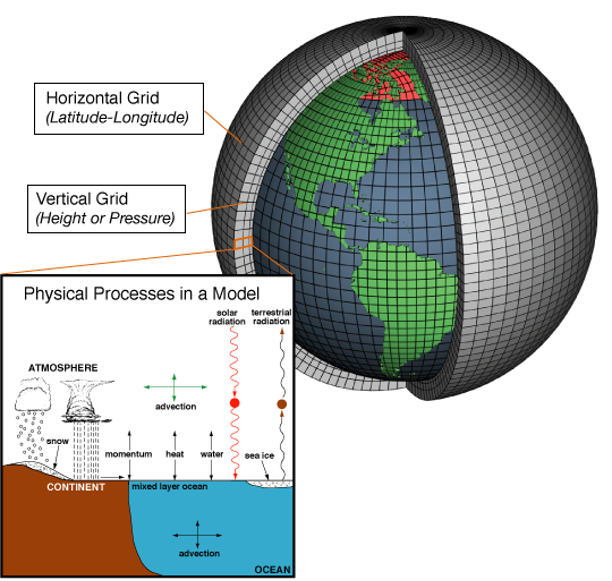

A mathematical technique called discretization is integral to modeling an enormous and complex physical phenomenon like weather. Scientists partition Earth’s atmosphere into thousands of three-dimensional cubes. (See Figure 1.)

SOURCE: NOAA, 2021. https://celebrating200years.noaa.gov/breakthroughs/climate_model/modeling_schematic.html

In each discrete portion, scientists track variables like temperature and pressure to gain a comprehensive view of weather around the world. It is crucial to track all discrete portions, whether in the atmosphere or in the ocean, because the behavior within one portion affects its neighbors. Critically, data on turbulence (which is responsible for the redistribution of heat, moisture, and momentum) and similar modes of energy transfer must be captured in the portions adjacent to Earth’s surface.

Improvements in weather forecast models stem from finer discretizations (i.e., smaller cubes, and lots of them) of Earth’s atmosphere. The language of mathematics is critical for developing finer discretizations and implementing them in numerical weather prediction models.
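The effect of a finer discretization can be illustrated with a deliberately tiny example. The sketch below is not a weather model: it assumes a one-dimensional periodic domain, a sine-wave "weather pattern" carried along at constant speed, and a basic first-order upwind scheme (the function name `advect_upwind` and all parameter values are hypothetical choices for illustration). It compares the computed answer with the known exact answer for several grid sizes.

```python
import math

def advect_upwind(n_cells, speed=1.0, t_end=0.5, cfl=0.5):
    """Carry a sine wave along a periodic 1D domain of length 1.

    Returns the largest difference between the computed and exact solution.
    """
    dx = 1.0 / n_cells                            # width of one grid cell
    n_steps = round(t_end / (cfl * dx / speed))   # number of time steps
    dt = t_end / n_steps                          # time step that lands exactly on t_end
    u = [math.sin(2 * math.pi * i * dx) for i in range(n_cells)]
    for _ in range(n_steps):
        # Each cell is updated from its upstream neighbor; u[-1] wraps around
        # to the last cell, which makes the domain periodic.
        u = [u[i] - speed * dt / dx * (u[i] - u[i - 1]) for i in range(n_cells)]
    exact = [math.sin(2 * math.pi * (i * dx - speed * t_end)) for i in range(n_cells)]
    return max(abs(a - b) for a, b in zip(u, exact))

if __name__ == "__main__":
    for n in (20, 40, 80):
        print(n, "cells -> max error", advect_upwind(n))
```

Halving the cell size shrinks the error in this toy setting, at the cost of more cells and more time steps to compute. Operational models face the same trade-off in three dimensions.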

Data are incorporated into models on a streaming basis; this is called data assimilation. Missing observations can be approximated using statistical techniques. A variety of new and improved instruments increase accuracy in assessing the current state of the weather system.

- Radar is especially useful in getting information about rain and snow. Inferring this information from how radio waves are reflected back by the precipitation involves a type of mathematics known as inverse problems.

- Digital images of Earth can be taken from satellites at a variety of scales and time frequencies. These are critical in making short-term forecasts and also in tracking major storms. The mathematics of deblurring, denoising, and interpreting images in an automated way has made immense strides in recent years and improves how these images are effectively turned into information.

- Data collection from major storms is often done using a dropsonde, an expendable weather reconnaissance device that is dropped from an aircraft over water to measure and track storm conditions as the device falls to the surface. The measurements from these devices are incorporated into predictions of a storm’s path and intensity.

- Ground- and water-based stations capture local weather data through meteorological and oceanographic sensors. The number of stations has greatly increased, contributing to the volume of data flowing into increasingly accurate weather prediction models.

Combining these heterogeneous data sources continually requires innovation in mathematical modeling techniques and can be very computationally intensive. The widespread development of more powerful computers and the improvement of efficient numerical methods help to deliver faster results. The National Oceanic and Atmospheric Administration’s (NOAA) supercomputer upgrade, completed in 2018, is one such improvement. These new systems enable NOAA to process 8 quadrillion calculations per second and increase digital storage by 60 percent for faster and more accurate weather forecast models.
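The core idea of data assimilation, blending a model forecast with incoming observations according to how much each is trusted, can be sketched for a single quantity such as temperature at one location. The example below assumes a scalar Kalman-style update with known error variances. The function names and numbers are hypothetical, and operational systems use far more elaborate, high-dimensional versions of this idea. As a simplification, a missing observation is skipped here rather than approximated statistically.

```python
def assimilate(forecast, forecast_var, obs, obs_var):
    """Blend a forecast with one observation, weighting each by its certainty."""
    # The gain lies between 0 (trust the forecast) and 1 (trust the observation).
    gain = forecast_var / (forecast_var + obs_var)
    analysis = forecast + gain * (obs - forecast)
    analysis_var = (1.0 - gain) * forecast_var    # blended estimate is more certain
    return analysis, analysis_var

def assimilate_stream(forecast, forecast_var, observations):
    """Fold in observations one at a time; None marks a missing report and is skipped."""
    for item in observations:
        if item is None:
            continue
        obs, obs_var = item
        forecast, forecast_var = assimilate(forecast, forecast_var, obs, obs_var)
    return forecast, forecast_var

if __name__ == "__main__":
    # Hypothetical numbers: a 20.0 degree forecast with variance 4.0, followed by
    # a precise reading, a missing report, and a less precise reading.
    stream = [(22.0, 1.0), None, (21.0, 2.0)]
    print(assimilate_stream(20.0, 4.0, stream))
```

Each observation pulls the estimate toward the measured value and reduces its variance, which is why additional instruments tend to improve the starting point for a forecast.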

It is important not only that forecasts be accurate but also that we know just how accurate they are. The models capture much of the dynamics of the weather but are still idealized. Further, the models themselves are too complex to be solved exactly. Instead, we solve a discrete version, which contributes some amount of error. Even with very accurate mathematical models, we are still dependent on the inputs, such as ocean temperature or wind speed, which have some measurement errors. Quantifying the uncertainty of any one prediction or a broader set of forecasts depends on mathematical and statistical techniques that take into account types of uncertainties such as data availability and reliability, modeling assumptions about the physical world, and simplifications that have been made to streamline the model.
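One way to see how small input errors affect a prediction is to run the same model many times from slightly different starting values and measure how far the results spread apart. The sketch below assumes the Lorenz-63 system, a well-known three-variable toy model of convection, as a stand-in for a real forecast model. It is integrated with a simple forward-Euler step, and the size of the input error, the number of runs, and the function names are hypothetical choices for illustration.

```python
import random
import statistics

def lorenz_step(state, dt=0.01, sigma=10.0, rho=28.0, beta=8.0 / 3.0):
    """Advance the Lorenz-63 toy model by one forward-Euler step."""
    x, y, z = state
    return (
        x + dt * sigma * (y - x),
        y + dt * (x * (rho - z) - y),
        z + dt * (x * y - beta * z),
    )

def spread_over_time(n_members=100, input_error=0.1, n_steps=800,
                     report_every=200, seed=0):
    """Return (step, spread) pairs, where spread is the standard deviation of x."""
    rng = random.Random(seed)
    # Every run starts from the same "true" state plus a small random
    # measurement error in x (an assumed Gaussian error).
    members = [(1.0 + rng.gauss(0.0, input_error), 1.0, 1.0)
               for _ in range(n_members)]
    history = [(0, statistics.pstdev([m[0] for m in members]))]
    for step in range(1, n_steps + 1):
        members = [lorenz_step(m) for m in members]
        if step % report_every == 0:
            history.append((step, statistics.pstdev([m[0] for m in members])))
    return history

if __name__ == "__main__":
    for step, spread in spread_over_time():
        print("step", step, "spread in x:", spread)
```

The spread reported at later steps gives a rough, model-based measure of how much confidence the prediction deserves at that lead time, given the assumed input error.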

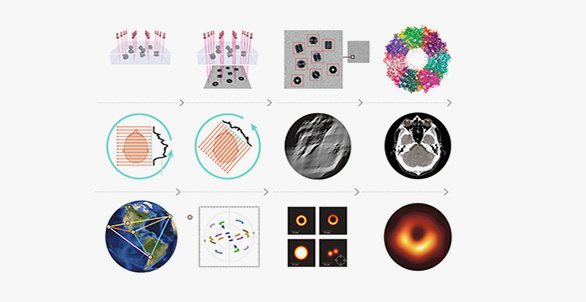

A Hurricane Path and the Cone of Uncertainty

Not all models are the same. One model may weight some inputs more heavily than others in its analyses. Another may rely more heavily on historical weather data. The result is that scientists and meteorologists have access to many models (for example, for predicting the path of a hurricane), and the models likely will not all agree. While it may seem confusing to have models that give, in some cases, distinctly different predictions, this disagreement turns out to be quite useful.

With many models that predict the path of a hurricane, scientists can build an ensemble forecast that estimates the probable track of a storm by incorporating individual predictions into a “cone of uncertainty.” As is the case with most weather forecasts, the near-term path of a hurricane can be confidently predicted, yielding a narrow cone. Over time, the cone widens as the predictions become more uncertain.
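A minimal sketch of how individual track predictions might be combined into a cone is shown below. It uses synthetic tracks, not real hurricane data: each hypothetical "model" drifts in the same general direction with its own random deviations. At each lead time, the center of the cone is taken as the average predicted position, and its radius as the distance that encloses a chosen fraction of the predictions. The two-thirds coverage, the function names, and the distance units are assumptions of this sketch, not a description of any agency's official procedure.

```python
import math
import random

def synthetic_tracks(n_models=20, n_leads=6, drift=(1.0, 0.5), noise=0.2, seed=1):
    """Generate made-up storm tracks: a shared drift plus model-specific deviations."""
    rng = random.Random(seed)
    tracks = []
    for _ in range(n_models):
        x, y = 0.0, 0.0                  # every model starts at the current position
        track = [(x, y)]
        for _ in range(n_leads - 1):
            x += drift[0] + rng.gauss(0.0, noise)
            y += drift[1] + rng.gauss(0.0, noise)
            track.append((x, y))
        tracks.append(track)
    return tracks

def cone(tracks, coverage=2 / 3):
    """Return one (center, radius) pair per lead time."""
    result = []
    for k in range(len(tracks[0])):
        xs = [t[k][0] for t in tracks]
        ys = [t[k][1] for t in tracks]
        cx, cy = sum(xs) / len(xs), sum(ys) / len(ys)
        # Sort distances from the center and pick the one that encloses
        # the requested fraction of the predictions.
        dists = sorted(math.hypot(x - cx, y - cy) for x, y in zip(xs, ys))
        idx = min(len(dists) - 1, math.ceil(coverage * len(dists)) - 1)
        result.append(((cx, cy), dists[idx]))
    return result

if __name__ == "__main__":
    for lead, (center, radius) in enumerate(cone(synthetic_tracks())):
        print("lead", lead, "center", center, "radius", radius)
```

Because the synthetic tracks all start from the same point and accumulate independent deviations, the radius is zero at the start and grows at later lead times, mirroring the widening shape of the cone.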

In recent decades, mathematical, statistical, and computing advances have helped to decrease uncertainty throughout the cone. In particular, ensemble modeling has played a key role in combining information from different classes of models into an aggregate projection that is typically more reliable than any single member. The more accurately forecasters can narrow the band of likely impact of a storm, the better we can target emergency efforts to evacuate or assist those people at greatest risk.