2

Evaluation of the Cost, Effectiveness, and Deficiencies of These Methodologies

Regardless of the specific application(s) of a vulnerability assessment of an aircraft, whether it is to aid in design or design validation, satisfy program requirements, support subsequent analytical assessments, predict test outcomes, satisfy the Live Fire Test law, or support acquisition decisions, the goal of the assessment is to obtain information on the vulnerability of that aircraft. This information can be described in terms of the attributes of the types, amounts, and applications of the information obtained; the accuracy of, or level of confidence in, the information; and the cost required to obtain the information. An effective methodology is one that provides a great deal of accurate information, of all types, with many applications, at very low cost, and with very few deficiencies. Both of the methodologies reviewed in Chapter 1 (analysis/modeling and live fire testing1) individually have certain advantages and disadvantages with respect to accomplishing the goal of obtaining information on the vulnerability of aircraft.

In general, the analysis/modeling methodology can provide considerable numerical information on the vulnerability of the total aircraft from all aspects for all threats at a reasonable cost, but the level of confidence placed in the information can vary from very low to high, depending upon the type of information obtained, the model used, the quality of input data, the analyst, and the evaluator.2 On the other hand, although the live fire testing methodology has the potential to obtain data that can be used to determine numerical information on the vulnerability of the aircraft to a particular weapon for many hits over the entire presented area of the aircraft from all aspects, in actual practice, testing provides information only on the aircraft’s vulnerability to hits in relatively few locations,3 and the expenditure of funds required to obtain this information is relatively large. However, the level of confidence in the test results usually is relatively high. This chapter examines the three information attributes of types (including amounts and applications), accuracy, and cost for both methodologies.

Analysis/Modeling

Type, Amount, and Applications of the Information. The analysis/modeling programs described in Chapter 1 for the three types of weapons provide numerical values for the vulnerability of the individual critical components and the total aircraft from all aspects around the aircraft and for any selected ballistic projectile or guided missile. The numerical values can be in the form of vulnerable areas or probabilities of kill. This information can be used to aid in design and design validation, to satisfy program requirements, to support subsequent analytical assessments, to predict test outcomes, and to support acquisition decisions.
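As a rough illustration of how the two forms of output relate, a component's vulnerable area is commonly taken as its presented area multiplied by its Pk/h. In the simplest case of non-overlapping, non-redundant critical components, the aircraft's single-hit vulnerable area is the sum over the components. The sketch below assumes that simplification. The component names and all numbers are hypothetical, and the code is not taken from any of the programs described in Chapter 1.

```python
# Illustrative only: component names, presented areas, and Pk/h values
# are hypothetical, not data from any vulnerability program.

def vulnerable_area(presented_area, pk_given_hit):
    """Vulnerable area = presented area x probability of kill given a hit."""
    return presented_area * pk_given_hit


def aircraft_single_hit(components, aircraft_presented_area):
    """Sum component vulnerable areas, assuming no overlap and no redundancy.

    Returns (total vulnerable area, probability of kill given a random hit).
    """
    total_av = sum(vulnerable_area(ap, pk) for ap, pk in components.values())
    return total_av, total_av / aircraft_presented_area


components = {
    # name: (presented area in square feet, Pk/h)
    "pilot": (4.0, 1.0),
    "fuel tank": (60.0, 0.3),
    "engine": (50.0, 0.6),
}
total_av, pk_h = aircraft_single_hit(components, aircraft_presented_area=300.0)
print(f"Av = {total_av:.1f} sq ft, PK/H = {pk_h:.3f}")
```

Overlapping or redundant components, which real assessments must handle, would require a more elaborate combination rule than this simple sum.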

Accuracy of Analytical Models. There are three aspects of the accuracy of the analytical models that need to be examined: model verification (Are the internal workings of the model in good order—are the equations properly coded?), model validation (Does the model adequately represent the processes it portrays—do the equations adequately represent the actual physical situation?), and model accreditation (Is the model appropriate to the particular application—is the model capable of properly representing the aircraft and the weapon?).

Model Verification. Insofar as model verification is concerned, there appears to be little reason to doubt the veracity of the logic and coding of the models described in Chapter 1. They have been widely employed by government laboratories in all three military Services and by a great many nongovernmental users. The committee accepts that the internal workings of the models are in good order.

Model Validation. Credibility problems exist with model validation. Despite all the physical phenomena that the current aircraft vulnerability models attempt to depict (and many are depicted with high confidence), there are some phenomena that are known to exist, but have not been characterized and entered into the model structure, or are poorly modeled. Perhaps the most notable phenomenon not modeled is the random deviation or ricochet of the path of the penetrator or fragment from the assumed straight shotline as it passes through the aircraft components. Another phenomenon not modeled is the synergism that occurs when a portion of an aircraft is hit by a multitude of closely spaced fragments. In this multiple, closely spaced hit condition, the fragments can be far more damaging to the impacted structure than when they are not closely spaced. A third example of a phenomenon not explicitly modeled is spall. The backface spall generated by the impact of a projectile or fragment on a plate is not considered as additional fragments to be tracked through the aircraft by the shotline model.4

A poorly modeled phenomenon is the treatment of the remaining parts of an impacting fragment or penetrator after it penetrates a plate. The current model considers only the largest remaining piece in calculating subsequent effects. Another poorly modeled phenomenon is the increase in a component’s Pk/h that occurs when the component is hit by more than one projectile or fragment or is damaged by both blast and fragments. The assumption is nearly always made that the Pk/h value for the first hit on the component is adequate for subsequent impacts on the component, so the increase in vulnerability due to damage caused by prior hits is neglected. For example, a tail rotor drive shaft on a helicopter may not be killed when first hit by a tumbled 12.7-millimeter armor-piercing incendiary (API) projectile, but a second hit in the vicinity of the first may cause the shaft to break due to the synergism in damage between projectiles.
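The direction of the error introduced by reusing the first-hit Pk/h can be illustrated with a short calculation. The sketch below compares the cumulative kill probability of a component over three hits when every hit uses the same Pk/h against a case in which Pk/h rises after each non-killing hit. The Pk/h values are hypothetical, chosen only to show the effect; they are not measured data.

```python
def cumulative_kill_probability(pk_per_hit):
    """Probability the component is killed by at least one hit in a sequence.

    pk_per_hit[i] is the kill probability of hit i, given that the
    component survived all earlier hits.
    """
    p_survive = 1.0
    for pk in pk_per_hit:
        p_survive *= 1.0 - pk
    return 1.0 - p_survive


# Hypothetical values for illustration only.
constant = [0.1, 0.1, 0.1]    # usual assumption: first-hit Pk/h reused
degrading = [0.1, 0.3, 0.5]   # Pk/h rises because of prior damage

print(round(cumulative_kill_probability(constant), 3))
print(round(cumulative_kill_probability(degrading), 3))
```

Under these assumed numbers the constant-Pk/h case gives a markedly lower cumulative kill probability, which is the sense in which the usual assumption understates vulnerability.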

Also, there are known physical phenomena that are modeled, but their numerical values are not well known because they have not been tested, or the test results are for conditions not satisfied in the combat incident. For example, the penetration equations that determine the velocity and mass decay of fragments and penetrators as they penetrate plates on the aircraft may not have the proper decay coefficients for the material of interest. Furthermore, these equations have been developed for specific geometric shapes of impactors, such as spheres and cubes, whereas fragments are usually irregular. Two of the most difficult kill modes to model in aircraft are fire and explosion. This is due to the randomness of fuel sloshing within a tank, fuel leakage into dry bays around the tanks, and fuel migration into distant portions of the aircraft.

Neglect of On-Board Ordnance. One of the most important findings of our study is that on-board ordnance is usually neglected as a contributor to vulnerability. In most simulations, internal ordnance aboard the aircraft has been treated by the aircraft vulnerability community as “clutter.” Clutter is inert material in a compartment that is considered only as a compartment filler, by virtue of its volume, in the calculation of overpressure in the compartment. The overpressure from the explosive “clutter” when hit by projectiles and fragments can change the total pressure in the compartment dramatically. One of the basic premises of all development testing is that modeling must precede testing. Any aspect of hardware impact on vulnerability must first be modeled so that the testing can be used to verify the modeling, rather than having to be extensive enough to cover all statistical events. One of the basic requirements of the Live Fire Test program is to test full-scale vehicles with a full load of on-board ordnance. The continual neglect of one of the basic vulnerability contributors will make it more difficult to convince anyone that computer modeling can be substituted for the full-up testing of combat-loaded aircraft.

Weapon Lethality Assessments Versus Aircraft Vulnerability Assessments. Some of the programs currently used to compute an aircraft’s vulnerability to the various types of weapons were developed by the Joint Technical Coordinating Group for Munitions Effectiveness. Because the munitions effectiveness community wanted conservative estimates for the prediction of a weapon’s lethality, its programs were developed so that our weapons were not to be credited with any kill capability that was not clearly justified, a sound policy from the weapon development point of view. However, as a result of this approach, the use of these programs to predict the vulnerability of a U.S. aircraft most likely leads to overly optimistic predictions. Potential vulnerabilities have been ignored unless clearly justified, an unsound policy where the survivability of U.S. aircrews is concerned. Examples of where the vulnerability of aircraft is underestimated are

- the use of the Thor penetration equations, which consider only the largest remaining piece of a penetrator as it passes through the aircraft;

- the lack of synergism due to both multiple simultaneous and sequential hits on a component;

- the lack of direct consideration of spall; and

- the lack of consideration of cascading damage, such as fuel tank damage leading to fuel ingestion.

The Joint Technical Coordinating Group on Aircraft Survivability (JTCG/AS) Pk/h Workshop. Even if all other aspects of the model were perfect, some of those portions of the model that portray the results of the physical interactions or damage processes that occur when a threat weapon interacts with an aircraft are inaccurate or incomplete. In order to eliminate some of the model deficiencies, more vulnerability data are needed on component kill modes and Pk/h functions. This deficiency in the component vulnerability data base was the subject of a JTCG/AS Component Pk/h Workshop held March 5–8, 1991, at Wright-Patterson Air Force Base (WPAFB).

The objectives of the JTCG/AS Workshop were to critically review the current state-of-the-art of component Pk/h prediction, to recommend a set of Pk/h or Pd/h values or functions for use in analyses, and to develop plans for improving and validating this set.5 Working panels were organized as follows:

- Fuel System

- Flight Controls/Hydraulics (Control and Power)

- Crew Station

- Engines and Accessories

- Stores, Ammunition, and Flares

- Electrical and Avionics

- Structures, Landing Gear, and Armor

- Helicopter Unique Components

The draft reports of the individual panels are currently being integrated into a final report. The findings of the eight working panels are given in Appendix D.6

The general conclusion from the workshop is that the component vulnerability data base is the weakest link in the vulnerability analysis chain. Clearly, much remains to be done with respect to validating those sections of the aircraft vulnerability models that deal with component and subsystem damage prediction. The level of confidence placed in the analytical models would be significantly increased if a concerted effort were made to determine the maximum error in Av that occurs in the model predictions using the current data base, and to what extent this error might be reduced with the availability of new test data.

Model Accreditation. Model accreditation is accomplished by the JTCG/AS. This organization, consisting of representatives from all three Services, has established procedures for verifying, validating, and accrediting models. Despite the deficiencies identified by the aircraft vulnerability assessment community in its analytical models, the community has been exemplary in the exchange of data and ideas among the three Services and industry, in the development of handbooks and design guides for reducing vulnerability, and in the establishment of validated data bases from test data. Although there is still much to learn, perhaps more is already known. There will always be a need to update models with new input data as aircraft materials and designs change and as the threat weapons change.

The Cost of Analysis. It is extremely difficult to determine the costs associated with a typical analytical assessment since the cost depends heavily on the type of aircraft being assessed and the particular application for the assessment. However, some rough estimates for the costs to conduct an analysis that would be appropriate for a major milestone decision are given below. The numbers were obtained in a personal communication from a representative from the Ballistic Research Laboratory.

Cost of the Model. The cost of creating a helicopter target model for the Computation of Vulnerable Area and Repair Time Model (COVART) or the High Explosive Vulnerable Area and Repair Time (HEVART) model is roughly between $75,000 and $200,000, with the low end for an upgrade to an existing system and the high end for an all-new, high-detail description of a U.S. system. These costs also include the generation of the shotline data files needed to run COVART or HEVART. The cost of developing appropriate target models for the Stochastic Qualitative Analysis of System Hierarchies (SQuASH) might be two to three times that required for the conventional vulnerable area models.7 As the design community increases its use of three-dimensional modeling and grid generation, and as computer usage becomes less expensive, the cost of modeling will come down significantly. The major cost will be the preparation of input data.

Cost of Computer Runs. A typical batch of computer runs for a helicopter includes two flight modes (hover and forward flight) and from 6 to 26 attack aspects. The cost of this batch of runs using COVART or HEVART ranges from $45,000 to $100,000. The analysis and preparation of input data account for most of this cost.

Cost of Obtaining Supporting Experimental Data for Pk/h Functions. The execution of an analytical model without any live fire test data on kill modes and Pk/h functions as input data on component vulnerability could be accomplished, but the level of confidence in the results would be very low. Consequently, the cost of obtaining the necessary data on component vulnerability must be included in the cost of the analysis. If all of these data are available from prior tests, the cost of gathering them is relatively low. If all of the necessary data are not available, supporting tests must be carried out to obtain the missing data. These tests can be as simple as the firing of fragments at pieces of plate to measure penetration or V50 velocities, or they can be as complicated as firing several fragments at running engines to determine the severity of damage, or firing at major portions of the aircraft structure that contains fuel tanks to simulate the fire/explosion and hydraulic ram phenomena. The typical costs associated with two types of live fire tests are given below.

For a helicopter engine and a 23-millimeter HEI threat, basic preliminary testing with components and/or a static engine would cost approximately $30,000. Comprehensive testing with a full-up running engine would cost approximately $250,000. For a tail boom structure, basic shoot-and-look damage characterization tests would cost approximately $25,000. A comprehensive evaluation of the test results, including postdamage controlled structural experiments, would cost approximately $150,000.
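To give a feel for the overall magnitude, the rough figures quoted above can be added together. The sketch below assumes, purely for illustration, that a single helicopter assessment needs one target model, one batch of computer runs, and both of the supporting test series; the text does not state that any particular program incurs exactly this combination, and the item labels are ours.

```python
# Dollar figures are the rough estimates quoted in the text; the choice
# to sum exactly these four items is an assumption made for illustration.
COSTS = {
    "target model (COVART/HEVART)": (75_000, 200_000),
    "batch of computer runs": (45_000, 100_000),
    "engine tests, 23-mm HEI": (30_000, 250_000),
    "tail boom tests": (25_000, 150_000),
}


def total_range(costs):
    """Return (sum of low estimates, sum of high estimates)."""
    low = sum(lo for lo, _ in costs.values())
    high = sum(hi for _, hi in costs.values())
    return low, high


low, high = total_range(COSTS)
print(f"${low:,} to ${high:,}")
```

The wide spread between the two totals reflects mostly the difference between basic and comprehensive supporting tests.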

Live Fire Testing

A live fire test is one in which live ammunition, either explosive or non-explosive, is fired at a target. Live fire testing can be used to assist in the design and design validation of an aircraft, to provide empirical information on component vulnerability in support of the analytical models, to satisfy the Live Fire Test law, and to support acquisition decisions. Live fire testing is part of either developmental testing (DT) or Live Fire Testing. The difference between the two types of tests is that DT is part of the normal design and development process, whereas Live Fire Testing is that testing intended to satisfy the Live Fire Test law. “As currently defined, development test and evaluation (DT&E) is that test and evaluation (T&E) conducted throughout the acquisition process to assist in the engineering design and development process and to verify attainment of technical performance specifications and objectives and supportability. DT&E includes T&E of components, computer software, subsystems, and hardware/software integration. It encompasses the use of modeling, simulations, and test beds, as well as advance development, prototype, and full-scale engineering development models of the system. Technical performance specifications must be validated through DT&E in order for the developer (program manager) to certify that the weapon system is ready for the final phase of Initial Operational Test and Evaluation (IOT&E)” (OSD, 1987).

Of particular interest here is the specific test and evaluation methodology required to satisfy the Live Fire Test (LFT) legislation. The law requires realistic survivability (vulnerability) testing. Although such a test program will in fact involve early component and subsystem or sub-scale Live Fire Testing, the hallmark of this approach is a substantial test program that involves a significant number of shots against a combat-configured, full-scale version of the weapon system (i.e., a full-scale LFT). The early Live Fire Tests on components and subsystems provide information on any vulnerabilities of the individual components and subsystems. Once the information from these tests has been evaluated, the tests on the full-scale aircraft are to be conducted as mandated by the law, unless a waiver has been granted. The full-scale test program will often include a number of shots that are randomly chosen and a number of shots that are selected to address specific issues. Real (or realistic surrogates of) threats likely to be encountered in combat must be used in the tests. These threats can be non-explosive ballistic projectiles, ballistic projectiles with contact-fuzed and proximity-fuzed high-explosive (HE) warheads, and guided missiles with contact-fuzed and proximity-fuzed HE warheads. The three information attributes of types (including amounts and applications), accuracy, and cost for both sub-scale testing and full-scale testing are examined below.

Type, Amount, and Applications of the Information. In general, the Live Fire Tests on both sub-scale and full-scale targets produce information on what actually happened for a particular set of test conditions (e.g., the specific target, weapon, and shotline) and typically for a relatively small number of shots under these conditions.8 Some typical examples of tests on sub-scale targets are tests to determine the penetration capability of fragments through plates of composite materials, tests on helicopter rotor blades, tail booms, and gear boxes using small arms projectiles and small-caliber HE AAA rounds, tests on fuel tank simulators using small caliber HE AAA to determine the efficacy of a particular fuel tank protection scheme, and stand-alone tests on running engines using several impacting fragments. The information from these tests ranges from measured fragment velocities, temperatures, and overpressures to the ability of the tested article to continue to function after the hit. Tests on the full-scale aircraft will most likely be conducted with the aircraft on the ground or suspended; it can neither crash nor be forced to land as a result of the shot. Thus, the ability of the aircraft to sustain the essential functions for flight after the hit is not observed directly. Furthermore, rather than produce a sufficient amount of numerical data from the full-scale test that can be used directly to determine the kill probability of the aircraft for the shot, each full-scale test provides a list of damaged components along with descriptions of the details of the extent and severity of the damage and the associated damage events for each shot.

With respect to the applications of the information obtained from the tests, the committee notes that the Live Fire Tests are conducted primarily to (1) satisfy the LFT law and its intent (i.e., to determine any inherent vulnerabilities in the design sufficiently early in the program to allow the vulnerabilities to be corrected), and (2) provide information in support of acquisition decisions. They are not specifically intended to provide information that can be used to improve the analytical models for predicting aircraft vulnerability. Nevertheless, previous experience with full-scale Live Fire Tests on ground vehicles has shown that important types of damage and kill modes have been observed that were not included in the analytical models for these vehicles. Thus, the information provided by these LFTs can be used in the other applications. Besides satisfying the letter of the law, the LFT&E program provides (in principle) an opportunity to

- obtain information on the vulnerability of the aircraft;

- find vulnerabilities that were not anticipated by the analyses; and

- gather data on the synergism among the different damage processes and kill modes.

All three aid in the design and design validation, support the analytical models, and support acquisition decisions.

Accuracy of and Level of Confidence in the Information. The level of confidence that is placed in the sub-scale and full-scale Live Fire Test results depends primarily upon the level of realism of the tests. Certainly, there is a possibility for realism in Live Fire Testing. Some of the vulnerability aspects that are represented in Live Fire Testing are the effects of blast, including structural deformation and component damage, and component damage due to multiple fragment hits, including bending, breaking, and perforating. Other aspects of vulnerability that are represented include the occurrence of spall and the penetration through components. The penetration damage and velocity decay in the test are the result of real penetrators going through real materials, with no assumptions about breakup, ricochet, etc.9 Synergisms among blast, fire, spall, and fragments are realistically represented to the extent that the other aspects of the test conditions (e.g., air speed and altitude) are adequately represented.

Although full-scale tests have the potential to provide the most realism, there are some problems, particularly with the weapons used, the flight conditions, crew vulnerability, and on-board munitions. The weapons selected for testing will most likely be those that are not overmatching (i.e., they will not have a high probability of destroying the aircraft). Consequently, most of the weapons will be either non-explosive or small-caliber explosive rounds. When larger explosive weapons are used, particularly the large-caliber projectiles and guided missiles, the damage to the aircraft can be severe and widespread, making it very difficult to repair the aircraft and return it to a condition that would be satisfactory for further testing. Associated with the explosive weapon is the location of the point of detonation. Contact-fuzed HE warheads must impact the aircraft in order to damage it, but proximity-fuzed weapons can detonate at distances ranging from the aircraft skin to several hundred feet away. If the warhead is detonated too close to the aircraft, it could destroy it.

With respect to the flight conditions, airflow can be simulated to some extent, although it seems unlikely that an entire transport aircraft would be placed in a uniform high-speed airstream. Static loading conditions can be simulated, at least over a part of the aircraft, but there is some concern about the effects of wind gusts and about transient loads produced by maneuvering and loss of control. Furthermore, altitude and temperature are not simulated. For externally detonating warheads, a static test may result in a different set of blast and fragment impact conditions from those of a dynamic test. In some warhead/target encounters (e.g., in a head-on pass or when overtaking at very high velocity), the high-velocity fragments may impact on one part of the target aircraft’s structure while the slower blast may affect a different area. In static tests, both affect the same portion of the target.

Clearly, real people cannot be used in the tests. Anthropomorphic dummies, pressure gauges, and gas-sampling equipment can be used to obtain data on vulnerability issues related to personnel vulnerability. On the other hand, the responses of the crew to shock, incapacitation, temporary loss of control, etc., are not directly measured and must be inferred. These responses are critical to both crew and aircraft survivability.10

Vulnerability of the Aircraft. Although it is true that certain catastrophic kills, such as an explosion within an aircraft fuel tank, would be observable in a test, it is also true that other types of kills would not be directly observable. For example, would the aircraft actually crash after damage to one of the control surfaces? Even if the actual kill was directly observed, there are the problems of associating the results with the other kill categories and levels, and of extrapolating the results to other threats and tactical conditions. For example, suppose the proximity-fuzed detonation of an 85-millimeter HE warhead near the left differential stabilator of the aircraft removed 90% of the stabilator. Is this a kill, and if it is for the 85-millimeter weapon, would a 57-millimeter warhead detonation in the same location cause the same kill?

Unanticipated Vulnerabilities. The analytic models are presently structured to provide aircraft kill probabilities based upon assumed kill modes, and an aircraft with reduced vulnerability is designed to prevent these kill modes from occurring. However, if a kill mode is unanticipated in both the analysis and the design, the model will underestimate the aircraft’s actual vulnerability, and the aircraft will contain this vulnerability. These unanticipated kill modes may be local effects that occur in the vicinity of the original impact, or they may be caused by cascading damage from the impact location to a distant part of the aircraft. For a local effect example, suppose the unanticipated kill mode was an electrical wire bundle that caught fire when hit by a bullet or fragment. This is a local kill mode that could be discovered by component or subsystem testing. On the other hand, suppose the unanticipated kill mode was a fuel ingestion kill of an engine mounted externally on the rear portion of the fuselage. Hydraulic ram pressure in the fuel in the wing fuel tank in front of the engine (due to a penetrator) caused fuel to spew out the top of the tank. This fuel was ingested by the engine, which then died. A test of only the wing fuel tank might not reveal this kill mode because of the absence of the running engine.

Although a full-scale Live Fire Test might reveal a local unanticipated kill mode, such as the burning wire bundle, full-scale testing is not necessarily an efficient methodology for obtaining this information. Furthermore, one cannot say with great confidence that if no unexpected vulnerabilities occurred in the full-scale Live Fire Tests, then there are none to be discovered later in combat. Kill modes involving cascading damage may not always occur in a test, and they may be particularly difficult to observe in a full-scale test if they do occur.11 Some kill modes due to cascading effects are well known, such as the kill of an engine due to the ingestion of fuel from a damaged fuel tank next to an air inlet, and are relatively easy to observe. Others, such as the migration of toxic fumes or flames from one portion of the aircraft to another, are not as well known, may not always occur, and may be difficult to detect if they do occur.

It is not feasible to test all possible combat situations for unanticipated vulnerabilities using full-scale aircraft because of the large number of parameters that affect the target’s vulnerability. These parameters include all of the weapons likely to be encountered in combat, the tactical situations of interest, and all of the possible impact locations on the aircraft (i.e., the shotlines). Furthermore, the damaged aircraft should be returned to its original condition after each test if appropriate and possible. Consequently, the test plan may contain a number of random shots, a number of random shots from directions expected in combat, a number of selected shots, and an ordering that schedules potentially catastrophic situations at the end of the program.12

Likelihood of Discovering a Particular Vulnerability. Unless a particular vulnerability is relatively insensitive to the parameters associated with a large subset of the conditions and has a relatively high probability of occurring, it may remain undiscovered in a test program. For example, suppose that a particular vulnerability event associated with a

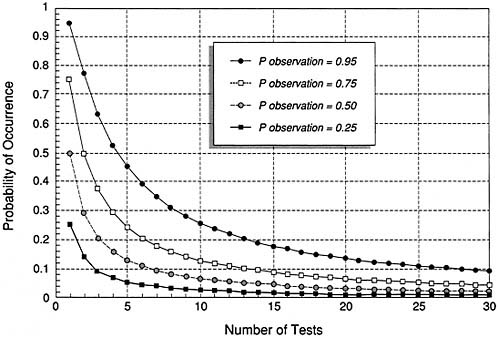

FIGURE 2-1 Relationship among the probability of occurrence on each test, the number of tests, and the probability of at least one observation over the number of tests.

high-explosive weapon had a 0.2 probability of occurrence on any random shot for all test conditions of interest. If the test plan consisted of 10 random shots around the aircraft, the probability the event would occur at least once in the 10 tests is 1−(1−0.2)10=0.89 and hence the probability it would not occur is 0.11. Thus, this vulnerability would most likely be observed.13 On the other hand, if the event could only occur on 2 of the 10 random shots with a 0.2 probability (and a probability of zero on the other 8 shots), the probability the event would occur at least once is

and the probability that it would not occur is 0.64. Thus, this particular vulnerability would most likely not be observed.

Figure 2-1 shows the relationship among the probability of occurrence on each test, the number of tests, and the probability of at least one observation, for observation probabilities of 0.25, 0.50, 0.75, and 0.95. This figure can be used to determine the number of tests required to obtain a probability of observation of 0.25, 0.50, 0.75, or 0.95 for a given probability of occurrence. For example, if the probability of occurrence in each test is 0.2 and the desired probability of observing the event at least once is 0.75 or higher, at least seven tests must be conducted.

Suppose there were 5 independent vulnerability events possible on each of the 10 random shots, and each event had a 0.2 probability of occurring on each of the 10 shots. The probability that any particular one of the events would occur at least once during the test program is 0.89. The probability that all of the events would occur at least once (not necessarily on the same shot) during the program is

$$(0.89)^{5}=0.56.$$

Hence, there is a 0.44 probability that one or more of the five kill modes will not be observed. Thus, the test program is essentially equally likely either to reveal all or to miss one or more of the aircraft’s vulnerabilities. If each vulnerability could occur on only 2 of the 10 test shots, the probability that one or more vulnerabilities would not occur in the 10 tests is essentially one.14
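The same calculation extends to several independent events. The sketch below assumes the events are mutually independent and share the same per-shot probability, as in the example; the function name is ours. Carrying full precision gives a probability of about 0.43 of missing at least one event, slightly below the 0.44 obtained from the rounded value 0.89, and the conclusion is unchanged.

```python
def p_all_observed(p_occurrence, n_shots, n_events):
    """Probability that every one of n_events independent events occurs
    at least once in n_shots independent shots."""
    p_one_event = 1.0 - (1.0 - p_occurrence) ** n_shots
    return p_one_event ** n_events


# Five events, each possible on all 10 shots.
p_miss_any_10 = 1.0 - p_all_observed(0.2, 10, 5)
# Five events, each possible on only 2 of the shots.
p_miss_any_2 = 1.0 - p_all_observed(0.2, 2, 5)

print(round(p_miss_any_10, 2))
print(round(p_miss_any_2, 3))
```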

Synergism Among the Damage Processes and Kill Modes. Live Fire Tests are also a valuable source of vulnerability information on the synergism among the damage processes and kill modes, albeit a limited source. Suppose there are two kill modes I and II that exhibit synergism. Mode I has a probability of occurrence of 0.1, regardless of the occurrence of mode II. Mode II has a probability of occurrence of 0.1 if the first mode does not occur and a 0.5 probability of occurrence if the first mode does occur. On one shot, the probability that both modes occur is 0.05. The probability that only mode I occurs is 0.05, the probability that only mode II occurs is 0.09, and the probability that neither mode occurs is 0.81. If these modes were independent, and both had a probability of occurrence of 0.1, mode I only occurs with a probability of 0.09, mode II only occurs with a probability of 0.09, modes I and II together occur with a probability of 0.01, and neither mode occurs with a probability of 0.81. Thus, the probability that neither mode occurs is the same in both the synergistic case and the independent case. In the synergistic case, mode II is more likely to occur (0.14 vs. 0.10) and the probability that both modes occur together is higher than in the independent case (0.05 vs. 0.01).
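The four outcome probabilities in each case follow directly from the conditional probabilities stated in the example. The sketch below tabulates them for the synergistic and independent cases; the function and key names are ours.

```python
def joint_probabilities(p1, p2_given_not_1, p2_given_1):
    """Outcome probabilities for two kill modes on a single shot.

    p1             : probability mode I occurs
    p2_given_not_1 : probability mode II occurs if mode I does not
    p2_given_1     : probability mode II occurs if mode I does
    """
    return {
        "both": p1 * p2_given_1,
        "only_I": p1 * (1.0 - p2_given_1),
        "only_II": (1.0 - p1) * p2_given_not_1,
        "neither": (1.0 - p1) * (1.0 - p2_given_not_1),
    }


synergistic = joint_probabilities(0.1, 0.1, 0.5)
independent = joint_probabilities(0.1, 0.1, 0.1)

for name, case in (("synergistic", synergistic), ("independent", independent)):
    p_mode_2 = case["both"] + case["only_II"]  # overall chance of mode II
    print(name, {k: round(v, 2) for k, v in case.items()}, round(p_mode_2, 2))
```

Because the probability that neither mode occurs is identical in the two cases, a test program that records only whether some kill occurred cannot distinguish them; the synergism shows up only when both modes are tracked.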

Validation of the Analytical Model. The analytical models are presently structured to provide kill probabilities and vulnerabilities based upon a selected kill category. The Live Fire Tests do not directly provide the numerical data required to validate the model’s predictions; they only provide information on the components that were damaged or killed, the occurrence or non-occurrence of the kill modes, and any cascading damage. Thus, some compromises must be made that require additional analyses in order to relate the empirical test results to actual combat conditions and to determine if the test results correspond to an actual kill of the aircraft. If enough test shots could be made under the identical set of conditions, a statistical inference could be made regarding the probability of an aircraft kill given the test conditions. Unfortunately, the number of identical shots that can be conducted is usually small, and hence the confidence level in the sample mean probability of kill is low. Furthermore, because the models are expected value models, and given the randomness of the empirical results from the limited number of tests, the likelihood that the test results and the model predictions are in general agreement with respect to the components affected and their vulnerability is low. For example, suppose the model predicts a wing fuel tank will explode when hit with a probability of 0.5. If each of three identical tests results in an explosion, does this mean the model is inaccurate? The answer is no.15 Thus, the Live Fire Tests cannot be used in themselves to directly validate the models, and they are not conducted for that purpose. However, they do provide valuable information on damage processes that should be modeled.
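One way to see why three explosions in three tests do not discredit a predicted probability of 0.5 is to compute how likely that outcome is if the model is correct. The sketch below is illustrative only: it treats the three tests as independent Bernoulli trials, and the 0.05 threshold is a conventional significance level assumed here, not a value taken from this report.

```python
from math import comb


def binomial_pmf(k: int, n: int, p: float) -> float:
    """Probability of exactly k occurrences in n independent trials."""
    return comb(n, k) * p**k * (1.0 - p) ** (n - k)


p_model = 0.5  # model's predicted probability of a fuel tank explosion per hit

# Chance of three explosions in three identical tests if the model is right.
p_three_of_three = binomial_pmf(3, 3, p_model)
print(f"P(3 explosions in 3 tests | p = 0.5) = {p_three_of_three:.3f}")

# Assumed threshold: number of consecutive explosions needed before the outcome
# becomes less likely than 0.05 under the model.
threshold = 0.05
n_needed = 1
while p_model**n_needed >= threshold:
    n_needed += 1
print(f"Consecutive explosions needed to fall below {threshold}: {n_needed}")
```

With a one-in-eight chance of occurring under a correct model, the three-shot outcome is unremarkable; under the assumed threshold, at least five identical shots with the same result would be needed before the prediction looked doubtful.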

Neglect of On-Board Munitions. The Live Fire Test law requires the aircraft to be full-up when tested. This means that any munitions normally carried by the aircraft must be on-board at the time of the test. This will probably be done in only a very few tests; it is not the intent of the Live Fire Test law to destroy aircraft needlessly. The intent of the law is to obtain information on the vulnerability of the aircraft sufficiently early to allow any design deficiency to be corrected. Consequently, it does not seem reasonable to intentionally create a situation in which the aircraft could be destroyed when essentially the same information on the vulnerability of the aircraft to the on-board munitions can be obtained by using realistic off-line tests of sub-scale models of the portion of the aircraft in the vicinity of the munitions. This is particularly true for aircraft with internally stored munitions. Consequently, inert surrogates most likely will be used for munitions on-board the full-scale aircraft in order to prevent a catastrophic kill. The increase (or decrease16) in aircraft vulnerability due to the presence of the munitions can be determined by relating the observed projectile and fragment impacts on these surrogates to the vulnerability data on munition reactions to impacts obtained from the off-line sub-scale tests.17 It is not necessary to load the munitions on the full-scale aircraft in order to determine the likelihood of an adverse munition reaction to a hit or a fire. If a design deficiency with respect to the on-board munitions is discovered and a less vulnerable design can be developed, it can be incorporated without the loss of the test aircraft.

The Cost of Live Fire Testing. No complete Live Fire Testing program for any aircraft has been carried out as yet. Consequently, the following information is offered only as an indication of the costs involved in full-scale Live Fire Testing. If no waiver has been given from full-scale testing, at least one production or preproduction aircraft is required.18 If the test is carefully designed, and if the target is reparable up to the last shot, one aircraft should be sufficient. The cost of the test aircraft is most likely the major cost of the total program. A question arises as to the proper cost of the aircraft to use when determining the program cost. Should the actual construction cost of the test aircraft, which might be one of the first five or six aircraft built, be used? Should the cost also include the research and development costs, or should the average flyaway cost be used? The particular cost used will have a major impact on the perceived benefit of the Live Fire Test program. If one aircraft is tested out of a total aircraft buy of 400, and the average aircraft cost over the buy is used, the cost of the Live Fire Tests will be less than 0.3% of the total program cost.

Costs for the C-17A. The Institute for Defense Analyses (IDA) has presented a number of test program options, issues, and costs for Live Fire Testing the C-17A in a draft report (IDA, 1989). The report makes no recommendations on which (if any) of the options should be selected. The costs of five options are given in Table 2-1.

TABLE 2-1 Live Fire Test Options for the C-17A (IDA, 1989)

| Option | Test Item | Test Item Cost ($ million) | Testing Cost¹ ($ million) | Total Cost ($ million) |
|---|---|---|---|---|
| 1 | Full-up aircraft | 154 | 4 | 158 |
| 2 | Fuselage & one wing | 107 | 4 | 111 |
| 3 | Fuselage section & one wing | 60 | 4 | 64 |
| 4 | One wing | 36 | 4 | 40 |
| 5 | One wing leading edge | ? | ? | 15² |

¹ This does not include the cost of repairing the damage for the next shot. Also, some members of the committee believe that $4 million is too low.
² An Air Force estimate.

At least one production aircraft is required for option 1. If the test is carefully designed, and if the target is reparable up to the last shot, one aircraft should be sufficient. The aircraft cost of $154 million is based on a FY1990 recurring flyaway cost of $181 million with engineering, tooling, and avionics costs removed.19 On the other hand, the FY1997 and 1998 flyaway costs are $78 million. Thus, the cost of the test article depends upon the method of bookkeeping used. The most elaborate test program suggested by IDA is estimated to cost no more than $4 million and involves 100 small arms projectiles, 50 small AAA HE rounds, and 20 man-portable infrared missile shots.
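The cost figures above reduce to simple arithmetic, sketched below for illustration. The option totals add the test item cost and the testing cost from Table 2-1 (option 5 is omitted because its components are not given). The share calculation is a simplification assumed here: it counts only the one test aircraft in a buy of 400 at the average aircraft cost and ignores testing and other program costs.

```python
# Table 2-1 options: (test item cost, testing cost), both in $ million.
options = {
    "1: Full-up aircraft": (154, 4),
    "2: Fuselage & one wing": (107, 4),
    "3: Fuselage section & one wing": (60, 4),
    "4: One wing": (36, 4),
}

# Total cost of each option is the test item cost plus the testing cost.
totals = {name: item + testing for name, (item, testing) in options.items()}
for name, total in totals.items():
    print(f"Option {name}: ${total} million")

# Assumed simplification: one test aircraft out of a buy of 400,
# each valued at the average aircraft cost over the buy.
total_buy = 400
aircraft_share = 1 / total_buy
print(f"Test aircraft share of the buy: {aircraft_share:.2%}")
```

The resulting share of 0.25% is consistent with the statement that the cost would be less than 0.3% of the total program cost, and it shows how strongly the conclusion depends on which aircraft cost is chosen.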

Costs for the RAH-66A COMANCHE Helicopter. The cost of Live Fire Testing the COMANCHE helicopter has been estimated by a representative from the Ballistic Research Laboratory and coordinated with the Program Manager (PM). Based on projected production system fly-away costs for COMANCHE, a full-up low-rate initial production (LRIP) target that is representative of an operational, combat-configured system will cost in excess of $7.5 million. Given the alternative (and planned) use of an engineering test prototype aircraft built up to meet specific Live Fire Test requirements (a minimal configuration), the cost would be less. However, it still is a major percentage of the LFT&E program expense. At least two sets of target components/subsystems, plus repair provisions, would also be needed. Steps can be taken to attempt to minimize the risks to the hardware, but these may not always work. The operational reutilization of the test articles is unlikely.20

The number of shots that can be conducted on one target is highly variable. However, given ideal repair capability and no catastrophes, 25 or more small-caliber API shots plus four or more small-caliber HEI projectile shots might be possible. The cost per shot is highly variable,21 ranging from $500 to $10,000, and is a function of the weapon used, the target configuration, the scope of the test issues, the extent of the instrumentation and data capture, the workup costs, etc.

TABLE 2-2A Relative Advantages and Disadvantages of the Two Methodologies: Type, Amount, and Applications of the Information (columns: Analysis/Modeling; Live Fire Testing, Sub-scale Testing; Live Fire Testing, Full-scale Testing. Rows: Advantages; Disadvantages.)

TABLE 2-2B Relative Advantages and Disadvantages of the Two Methodologies: Accuracy of, and Level of Confidence in, the Information (columns: Analysis/Modeling; Live Fire Testing, Sub-scale Testing; Live Fire Testing, Full-scale Testing. Rows: Advantages; Disadvantages.)

Advantages and Disadvantages of Analysis/Modeling and Live Fire Testing

Both analysis/modeling and Live Fire Testing have advantages and disadvantages, or deficiencies, relative to one another. Furthermore, sub-scale testing and full-scale testing each have advantages and disadvantages relative to the other. Table 2-2 (parts A, B, and C) compares the methodologies for each of the three information attributes: type, amount, and applications; accuracy or level of confidence; and cost.

Conclusion

The committee concludes that analytical models, supported by live fire tests on components and subsystems, and the sub-scale and full-scale Live Fire Tests are mutually compatible in the vulnerability analysis, evaluation, and design of aircraft. They complement each other, and the whole is superior to the sum of the parts. More work is needed to unify these approaches in order to obtain the maximum benefit. The aircraft vulnerability community, based on its plans for the Live Fire Tests, seems to appreciate the need to integrate these approaches, having witnessed the success in using the data from the Live Fire Tests on the Abrams tank and the Bradley Fighting Vehicle to improve both the analytical methodology and the vehicle designs.

TABLE 2-2C Relative Advantages and Disadvantages of the Two Methodologies: Cost (columns: Analysis/Modeling; Live Fire Testing, Sub-scale Testing; Live Fire Testing, Full-scale Testing. Rows: Advantages; Disadvantages.)

References

• Institute for Defense Analyses (IDA), 1989. C-17A Live Fire Test Options Report, Paper P-2228.

• Office of the Secretary of Defense, 1987. Report of the Secretary of Defense on Test and Evaluation in the Department of Defense.