Although people commonly ask whether the actions they take are “safe,” with an implication that safety poses no risk of harm to human health, it is impossible to demonstrate such a definition of safety or indeed to achieve zero risk. It has previously been recommended (NRC, 1998) “that water agencies considering potable reuse fully evaluate the potential public health impacts from the microbial pathogens and chemical contaminants found or likely to be found in treated wastewater through special microbiological, chemical, toxicological, and epidemiological studies, monitoring programs, risk assessments, and system reliability assessments.” In other words, an evaluation of the adequacy of public health and ecological protection rests upon a holistic assessment of multiple lines of evidence, such as toxicology, epidemiology, chemical and microbial analysis, and risk assessment.

Major research efforts have attempted to refine our understanding of the human health risks of water reuse, particularly the risks of potable reuse, through toxicological and epidemiological studies (see Boxes 6-1 and 6-2; NRC, 1998).1 In the context of reclaimed water projects, epidemiological analyses of health outcomes are an imprecise method to quantify chronic health risks at levels generally regarded as acceptable. This is especially true when interpreting negative study results, which typically do not have the statistical power to detect the level of risks considered significant from a population-based perspective (e.g., an additional lifetime cancer risk of 1:10,000 to 1:1,000,000). Although epidemiology is invaluable as part of an evaluative suite of analytical tools assessing risk, epidemiology may be most useful at bounding the extent of risk, rather than actually determining the presence of risk at any level. Direct toxicological methods (Box 6-2) are intriguing, as indeed was noted in the National Research Council report on Issues in Potable Reuse (NRC, 1998), yet there remains insufficient development and knowledge for these methods to be broadly applied.

There will always be a need for human-specific data, and epidemiological studies will remain important to assessing and monitoring the occurrence of health impacts. However, today’s decisions as to health and environmental protection remain grounded in the measurement of chemical and microbiological parameters and the application of the formal process of risk assessment. Risk can be identified, quantified, and used by decision makers to assess whether the estimated likelihood of harm—no matter how small—is socially acceptable or whether it may be justified by other benefits. Risk assessment provides input to the overall decision process, which also includes consideration of financial costs and social and environmental benefits (discussed in Chapter 9).

The focus of this chapter is to present risk assess-

_____________

1 Toxicological studies expose animals or organisms to a series of doses or dilutions of a single contaminant, complex mixtures, or actual concentrates of reclaimed water to predict adverse health effects (e.g., mortality, morphological changes, effects on reproduction, cancer occurrence). Toxicological tests on mammals often are used to identify doses associated with toxicity, and these dose-response data are subsequently used to estimate human health risks. Potential adverse human health effects are more difficult to predict based on studies in nonmammalian species or microorganisms; however, observed effects are considered cause for further investigation. Epidemiological studies examine patterns of human illness (morbidity) or death (mortality) at the population level to assess associated risks of exposure.

BOX 6-1

Water-Reuse–Specific Epidemiological Information

NRC (1998) provided a comprehensive review of six toxicological and epidemiological studies of reuse systems. The epidemiological study findings from potable reuse applications are briefly summarized in this box. The results from several toxicology studies are summarized in Box 6-2.

Windhoek, Namibia, is the first city to have implemented potable reuse without the use of an environmental buffer (sometimes called direct potable reuse; see Box 2-12). It has been doing so since 1968, especially during drought conditions, and the plant provides up to 35 percent of the potable water supply during normal periods. Epidemiological evaluations of the population have found no relationships between drinking water source and diarrheal disease, jaundice, or mortality (Isaacson et al., 1987; Isaacson and Sayed, 1988).

Three sets of studies have been conducted for the Montebello Forebay Project in Los Angeles County, California: (1) a 1984 Health Effects Study, which evaluated mortality, morbidity, cancer incidence, and birth outcomes for the period 1962–1980; (2) a 1996 RAND study, which evaluated mortality, morbidity, and cancer incidence for the period 1987–1991; and (3) a 1999 RAND study, which evaluated adverse birth outcomes for the period 1982–1993. The first two studies looked at two time periods (1969–1980 and 1987–1991) and characterized census tracts into four or five categories by 30-year average percentage of reclaimed water in the water supply. The annual maximum percentage of reclaimed water ranged from less than 4 percent to between 20 and 31 percent. The studies included 21 and 28 health outcome measures, respectively, including health outcomes related to cancer, mortality, and infectious disease incidence. Although some outcomes were more prevalent in the census tracts with a higher percentage of reclaimed water in the water supply, neither study observed consistently higher rate patterns or dose-response relationships (Frerichs et al., 1982; Frerichs, 1984; Sloss et al., 1996). Sloss et al. (1996) identified reclaimed water use and control areas so that comparisons could be made. Compared with the control areas, reclaimed water use areas had some statistically higher as well as lower rates of disease. After evaluating the overall patterns of disease, the authors concluded that the study results did not support the hypothesis of a causal relationship between reclaimed water and cancer, mortality, or infectious disease. Although assessment of a dose-response relationship was possible in the study design, none was identified for the excesses of disease seen.

Since the NRC (1998) report, there have been only a few additional epidemiological studies of human health impacts of wastewater reuse. The largest and most comprehensive study was the third continuation of the Montebello Forebay study (Sloss et al., 1999). Sloss et al. (1999) included a health assessment utilizing administrative health data from 1987–1991 and birth outcomes from 1982–1993. They found some differences between study groups but saw no pattern and concluded that the rates of adverse birth events were similar between the control group and the region receiving reclaimed water.

The most recent study (Sinclair et al., 2010) compared the health status of residents in two housing developments: one with dual plumbing to support nonpotable reuse and a nearby development using a conventional water supply. The study assessed the rates that residents consulted with primary care physicians for gastroenteritis, respiratory complaints, and dermatological complaints (conditions that could be related to reclaimed water exposure) as well as two conditions unrelated to water reuse or waterborne disease exposure. Sinclair et al. (2010) reported no differences in consultation rates between the two groups. There were slight differences in the ratios of specific consultations (i.e., dermal versus respiratory), but the seasonal reporting patterns did not match the timing of reclaimed water exposure.

Population-based studies such as these, also called ecological studies, face significant challenges, including short study periods for chronic disease outcomes, changing exposures over time, nonspecific disease outcomes with unknown attributable risks, and the inability to know actual water consumption rates. Their use for quantitative risk assessment is extremely limited. Such studies simply cannot have the statistical power to detect the risk expectations established in public water supply regulatory standards, such as a 10⁻⁵ or 10⁻⁶ lifetime cancer risk. Population-based studies are probably best viewed as “scoping” or hypothesis-forming exercises. They cannot prove that there is no adverse effect from the reuse of water in these areas (indeed no study can do so), but they can suggest an upper bound on the extent of the impact if one did exist.
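The statistical power limitation described above can be illustrated with a rough sample-size calculation. The sketch below uses the standard normal-approximation formula for comparing two proportions; it is not a method taken from the studies reviewed in this box. The baseline lifetime cancer risk of 0.4, the 5 percent significance level, and the 80 percent power are hypothetical assumptions chosen only for illustration.

```python
from math import ceil, sqrt
from statistics import NormalDist


def subjects_per_group(baseline_risk, excess_risk, alpha=0.05, power=0.80):
    """Approximate group size for a two-sided, two-proportion z-test."""
    p1 = baseline_risk                 # risk in the unexposed group
    p2 = baseline_risk + excess_risk   # risk in the exposed group
    p_bar = (p1 + p2) / 2
    z_alpha = NormalDist().inv_cdf(1 - alpha / 2)
    z_beta = NormalDist().inv_cdf(power)
    numerator = (
        z_alpha * sqrt(2 * p_bar * (1 - p_bar))
        + z_beta * sqrt(p1 * (1 - p1) + p2 * (1 - p2))
    ) ** 2
    return ceil(numerator / excess_risk ** 2)


# Hypothetical baseline lifetime cancer risk; not a value from this report.
ASSUMED_BASELINE = 0.4

for excess in (1e-4, 1e-5, 1e-6):
    n = subjects_per_group(ASSUMED_BASELINE, excess)
    print(f"excess risk {excess:.0e}: about {n:.2e} people per group")
```

Under these assumptions, even the largest excess risk considered requires hundreds of millions of people per group, and the requirement grows with the inverse square of the excess risk. This is consistent with the view that such studies can bound risk but cannot resolve it at regulatory levels.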

Two alternative study approaches could be considered for assessing the effects of reclaimed water on public health. Blinded-design household intervention studies could be used in which all households in the study receive point of use (POU) “treatment devices,” although the control group receives sham devices, and the occurrence of acute gastroenteritis illness is tracked. Most health concerns related to chemical exposures are chronic diseases that may take years to appear. To avoid the need for long observation periods, the household intervention approach could use human tissue chemical biomarkers rather than disease occurrences. Another methodology that is more passive but holds promise for assessing the health impacts of reclaimed water consumption is the “opportunistic natural experiment,” epidemiologically characterized as a community intervention study. These studies assess the incidence of acute gastrointestinal illness before and after scheduled changes in water sources or treatment processes. An example of such a study is a 1984–1987 Colorado Springs study of water reuse for public park irrigation. Three different sources of water (potable, nonpotable water of wastewater origin, and nonpotable water of runoff origin) were used to irrigate municipal parks, and randomly selected park users were surveyed for the occurrence of gastrointestinal disease. Wet grass conditions and elevated densities of indicator bacteria, but not exposure to nonpotable irrigation water per se, were associated with an increased rate of gastrointestinal illness. Increased levels of disease and symptoms were observed when several different bacterial indicators exceeded 500/100 mL. These levels occurred most commonly with the nonpotable water of runoff origin (Durand and Schwebach, 1989). A well-designed case control study can also be used in select populations. 
Such studies in the context of ordinary potable water have been conducted by a number of authors (Payment et al., 1997; Aragón et al., 2003; Colford et al., 2005).

BOX 6-2

Potable Reuse Toxicological Testing

In 1982, the National Research Council Committee on Quality Criteria for Reuse concluded that the potential health risks from reclaimed water should be evaluated via chronic toxicity studies in whole animals (NRC, 1982). Early studies in laboratory animals, most notably the Denver and Tampa Potable Water Reuse Demonstration Project studies, which used rats and mice exposed to concentrates of reclaimed water, failed to identify adverse health effects when tested in subchronic, reproductive, developmental, and chronic toxicity studies (Lauer et al., 1990; CH2M Hill, 1993; Condie et al., 1994; Hemmer et al., 1994; see also more comprehensive descriptions in NRC, 1998). The absence of adverse effects following repeated, long-term exposure to concentrates of reclaimed water was also confirmed in mice chronically exposed to 150× and 500× concentrates of reclaimed water from a Singapore reclamation plant (NEWater Expert Panel, 2002). Although data from the 24-month tests were planned for completion in 2002, the Singapore Water Reclamation Board did not reconvene the NEWater Expert Panel to evaluate the results or issue an updated final report.

The Orange County Water District conducted online biomonitoring of Japanese Medaka fish exposed to effluent-dominated Santa Ana River water over 9 months and found no statistically significant differences in mortality, gross morphology, reproduction, or gender ratios (Schlenk et al., 2006). The Singapore Water Reclamation Board also exposed Japanese Medaka fish (Oryzias latipes) to reclaimed water over multiple generations and identified no estrogenic or carcinogenic effects in fish (Gong et al., 2008). However, the relevance of these findings to human health remains unclear.

In addition to the in vivo studies described above, a number of in vitro genotoxicity studies have been conducted on samples of reclaimed water and/or concentrates of reclaimed water sampled from sites in Montebello, California, Tampa, Florida, San Diego, California, and Washington, DC (summarized in C. Rodriguez et al., 2009). These studies have identified a small number of positive results—a few tests showed mutagenic effects in the Ames assay in Salmonella typhimurium—although most in vitro and in vivo genotoxicity assays (e.g., mammalian cell transformation, 6-thioguanine resistance, micronucleus, Ames, and sister chromatid assays) have been negative (Nellor et al., 1985; Thompson et al., 1992; Olivieri et al., 1996; CSDWD, 2005). Although in vitro assays are useful for identifying specific bioactivity and chemical modes of action, they are not likely to be used in isolation for the determination of human health risk. Such bioassays provide a high degree of specificity of response, but they generally cannot represent the actual situation in animals that includes metabolism, multicell signaling, and plasma protein binding, among others. In addition, some chemicals can be rapidly degraded during digestion and metabolism, whereas others are transformed into more toxic metabolites. At the same time, many limitations also plague the current in vivo testing paradigm in that interspecies and intraspecies variability can obfuscate the interpretation of animal testing results when applied to humans. For this reason, uncertainty factors are applied in an attempt to provide a conservative estimate of human health risk from animal models.

The U.S. Environmental Protection Agency (EPA) and the National Toxicology Program continue to investigate modern in vitro, genomic, and proteomic methods for rapid screening of chemicals and mixtures and to better deduce the complex pathways leading to disease (NRC, 2007; Collins et al., 2008). Although high-throughput screening using in vitro tools will increase the knowledge on various modes of toxicity of chemicals, in vivo testing will remain an integral part of evaluation of human health consequences from chemical exposure. However, a powerful approach to screening waters can involve a battery of bioassays, each with different toxicological endpoints (Escher et al., 2005).

ment methods for chemical and microbial contaminants that can be used to quantify health risks associated with water reuse applications. In Chapter 7, these methods are applied in a comparative analysis of several reuse scenarios compared to a conventional drinking water source commonly viewed as safe.

INTRODUCTION TO THE RISK FRAMEWORK

With the limitations of toxicological testing and population-level epidemiological studies, quantitative risk assessment methods become a critically important basis for assessing the acceptability of a reclaimed water project (NRC, 1998; Asano and Cotruvo, 2004; C. Rodriguez et al., 2007b, 2009; Huertas et al., 2008). Quantitative methods to assess potential human health risks from chemical and microbial contaminants in reclaimed water have evolved over the past 30 years and are still being refined. Although EPA has extensive health effects data on regulated contaminants, potable reuse and de facto reuse involve some level of exposure to minute quantities of contaminants that are not regulated. Many of these classes of constituents may require innovative approaches to assess health risks. Challenges associated with assessing risks posed by such contaminants include incomplete toxicological datasets; uncertainties associated with concomitant low-level exposures to multiple chemical and biological materials that may share similar modes of action; and deficiencies in analytical methods to accurately identify and quantify the presence of these contaminants in reclaimed water (Snyder et al., 2009, 2010a; Drewes et al., 2010).

The contribution of water-associated risks to the total U.S. disease burden is estimated to be relatively small. However, water that is not treated to the appropriate level for the end use can pose significant human health risks. These include chronic effects, such as cancer or genetic mutations, or acute effects, such as neurotoxicity or infectious diseases. These adverse outcomes may be caused by different agents, such as inorganic constituents, organic compounds, and infectious agents. The impact of an agent may be a function of the route of exposure (e.g., oral, dermal, inhalation, ocular). Rarely can an observed outcome be ascribed to a particular agent and exposure route in a particular vehicle (such as reclaimed water). In water reuse considerations, there will invariably be multiple substances, types of effects, and modes of exposure that may be relevant.

Historically, the paradigm for risk analysis has been divided into risk assessment (based on objective technical considerations) and risk management, wherein more subjective aspects (e.g., cost, equity) are considered. Risk characterization served as the conduit between the two activities, as introduced in NRC (1983; also known as the “Red Book”). However, changes in how risk is used to regulate human exposure have led to substantial evolution of the framework.

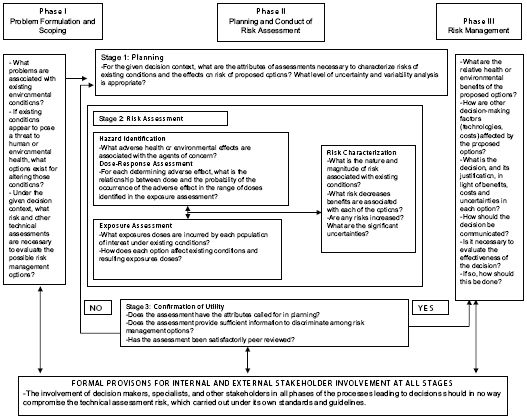

Early in 2009, an updated risk framework, encapsulated in Figure 6-1, was developed (NRC, 2009b). This updated framework has a number of important revisions that are of particular relevance to the problem under consideration in this report. This framework shares a number of similarities with the 1983 Red Book framework with respect to the central tasks of risk assessment (i.e., hazard characterization, dose-response assessment, exposure assessment, and risk characterization). However, it formally introduces several new aspects to the risk analysis and management process that are particularly germane to assessing and managing health risks from reclaimed water:

• Problem formulation: At the outset, there should be a problem formulation and scoping phase in which the risk management question(s) to be answered are explicitly framed and the nature of the assessment activities (with respect to agents, consequences, routes, and methodologies) is outlined. The nature of the management question to be addressed should drive the nature of the assessment activities. Examples of potential scoping questions relevant to water reuse include the following: What is the risk from using groundwater that has been mixed with reclaimed water as a supplement to an existing surface water supply? What is the human health risk from the application of undisinfected secondary effluent to fruit crops?

• Stakeholder involvement: At all stages, there should be well-understood processes available for involvement of internal and external stakeholders. This is an important consequence of the fact that risk assessment per se involves a number of trans-scientific assumptions (Crump, 2003), and the involvement of stakeholders at all stages promotes transparency to the process and, it is hoped, greater acceptance of the ultimate risk management decision.

• Evaluation: Within the assessment phase itself, there is an explicit evaluation step to determine whether the computations have produced results of sufficient utility in risk management and of the nature contemplated in problem formulation and scoping. If this is not the case, further developed assessments should be conducted. This recognizes that there are various levels of complexity that can be used in risk assessment with a tradeoff between time and resources required for the assessment and degree of uncertainty in the results. If a risk management question can be addressed satisfactorily with a less intensive assessment process, such an approach would be favorable inasmuch as it would enable a decision to be reached more expeditiously with less resource expenditure.

There is also more explicit recognition (NRC, 2009b) that risk management decisions will involve consideration not only of the risk assessment results, but also of issues of economics, equity, and law, which are discussed in Chapters 9 and 10.

In the following sections, four core components of risk assessment are discussed with regard to a range of water reuse applications:

1. hazard identification, which includes a summary of chemical and microbiological agents of concern;

2. exposure assessment, which explains the route and extent of exposure to contaminants in reclaimed water;

3. dose-response assessment, which explains the relationship between the dose of agents of concern and estimates of adverse health effects; and

4. risk characterization, in which the estimated risk under different scenarios is compiled. This may include a determination of relative risk (via the route under consideration, e.g., reclaimed water) versus risks from the same contaminants via other routes (e.g., alternative supplies).

FIGURE 6-1 Consensus risk paradigm.

SOURCE: NRC (2009b).
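To make the four components concrete, the following sketch shows how they might combine for a single hypothetical carcinogen in drinking water. It uses the widely used chronic daily intake equation and assumes a linear low-dose model (risk equals dose times a cancer slope factor). That model is an assumption made here for illustration, not a method prescribed by this chapter. The concentration, intake parameters, and slope factor are all hypothetical.

```python
def chronic_daily_intake(conc_mg_per_l, intake_l_per_day, days_per_year,
                         exposure_years, body_weight_kg, averaging_days):
    """Exposure assessment: lifetime-averaged dose in mg/kg-day."""
    total_intake_mg = conc_mg_per_l * intake_l_per_day * days_per_year * exposure_years
    return total_intake_mg / (body_weight_kg * averaging_days)


def excess_lifetime_cancer_risk(cdi_mg_per_kg_day, slope_factor):
    """Dose-response under an assumed linear low-dose model."""
    return cdi_mg_per_kg_day * slope_factor


# Hazard identification: one hypothetical carcinogen (all values illustrative).
cdi = chronic_daily_intake(
    conc_mg_per_l=1e-5,        # hypothetical concentration in reclaimed water
    intake_l_per_day=2.0,      # assumed daily water consumption
    days_per_year=350,         # assumed exposure frequency
    exposure_years=30,         # assumed exposure duration
    body_weight_kg=70.0,       # assumed adult body weight
    averaging_days=70 * 365,   # lifetime averaging period
)
risk = excess_lifetime_cancer_risk(cdi, slope_factor=0.05)  # hypothetical slope factor

# Risk characterization: compare with the 1:1,000,000 to 1:10,000 range.
print(f"Estimated excess lifetime cancer risk: {risk:.2e}")
print("Within 1e-6 to 1e-4 range:", 1e-6 <= risk <= 1e-4)
print("Below 1e-6:", risk < 1e-6)
```

Because risk in this sketch is the product of dose and potency, setting the intake to zero returns zero risk regardless of the slope factor.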

CONTEXT FOR UNDERSTANDING WATERBORNE ILLNESSES AND OUTBREAKS

As noted in Chapter 2, the early 20th century brought significant public health improvements due to the implementation of constructed water treatment and supply systems as well as wastewater collection and treatment systems. Despite much success across the developed world in consistently delivering safe water, diseases associated with microorganisms in water continue to occur. Epidemiological investigations have resulted in estimates of between 12 million and 19.5 million waterborne illnesses per year in the United States (Reynolds et al., 2008). Such illnesses are caused by exposure to bacteria, parasites, or viruses (Barzilay et al., 1999).

Fortunately, in the United States these illnesses rarely result in death. On the other hand, death due to acute gastrointestinal illness, especially in the vulnerable young, is all too common in the developing world. Most obvious to the public are the reported outbreaks of acute gastrointestinal illness largely due to pathogens in the water supply (Mac Kenzie et al., 1994).

Epidemiologists have been conducting surveillance for waterborne outbreaks for nearly 100 years and keeping statistics since 1920. The epidemiological investigation of these events has helped identify the vulnerabilities in our drinking water delivery systems and led to many system improvements. From 1991 to 2002, an annual average of 17 waterborne outbreaks were reported and investigated in the United States compared with an annual average of 23 during 1920–1930 (Craun et al., 2006), while over the same period, the U.S. population increased by a factor of over 2.5. From 1991 to 2000 there were 155 outbreaks recorded in the national epidemiological surveillance system. In 39 percent of the reports, no causative agent was identified, and in 16 percent, the cause was a chemical. These studies suggest that the epidemiology of waterborne disease is complex and that outbreak surveillance is far from complete, with significant underreporting. Analyses from recent years have identified that deficiencies in the water distribution system rather than failure in the treatment process are increasingly the cause of outbreaks (Craun et al., 2006; NRC, 2006). Thus, water may be free of contamination when it leaves the municipal water treatment plant but becomes recontaminated by the time it reaches the household tap. The adequacy of the distribution system may therefore provide a limit to the degree of risk reduction even though treatment becomes more stringent. This also heightens the need for monitoring at the point of exposure (i.e., the tap) rather than relying solely on monitoring immediately after treatment. Data collected by the Centers for Disease Control and Prevention’s Surveillance for Waterborne Diseases and Outbreaks indicated that Escherichia coli, norovirus, and unidentified microbial pathogens (likely viral) are the common causes of the waterborne disease outbreaks (Blackburn et al., 2004; Liang et al., 2006; Yoder et al., 2008). 
Cases of meningitis and other infectious diseases also were reported during water recreation in virus-contaminated coastal waters (Begier et al., 2008).
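The comparison of outbreak counts across periods is easier to interpret on a per capita basis. The brief sketch below uses only the figures quoted above (annual averages of 23 and 17 outbreaks and a population increase by a factor of over 2.5). The function name is illustrative, and 2.5 is treated as a lower bound on population growth. The calculation inherits the underreporting caveat noted in the text, so it describes reported outbreaks only.

```python
def relative_per_capita_rate(old_count, new_count, population_factor):
    """Ratio of the new per capita rate to the old per capita rate."""
    return (new_count / population_factor) / old_count


# Figures quoted in the text; 2.5 is a lower bound on population growth.
ratio = relative_per_capita_rate(old_count=23, new_count=17, population_factor=2.5)
print(f"Reported outbreaks per capita, 1991-2002 relative to 1920-1930: {ratio:.2f}")
```

On these figures, the reported per capita outbreak rate in 1991–2002 was less than one-third of the 1920–1930 rate, a larger decline than the raw counts alone suggest.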

The record of waterborne disease outbreaks, however, is only the tip of the iceberg. Large numbers of waterborne infectious diseases are undocumented. The level of background endemic diseases associated with water and water supplies is not well understood. There is no estimate of waterborne diseases by specific region or community or by water utility treatment modalities. A review of 33 studies of the incidence and prevalence of acute gastrointestinal illness from all exposure sources found estimates ranging from 0.1 to 3.5 episodes per adult per year, with higher estimates for children (Roy, 2006). Roy (2006) estimated 0.65 episodes per person per year in the United States. Health effects from marine recreational exposures to microbial pathogens in water receiving treated wastewater discharge (e.g., eye infection, ear and nose infections, wound infections, skin rashes) are also underreported (Turbow et al., 2003, 2008).

As illustrated above, many human illnesses have the potential to be transmitted via water exposure. There are few if any waterborne pathogens that are distinct to reclaimed water, as opposed to other modes of introduction into the potable or nonpotable aquatic environments. Sometimes these other modes can result in large waterborne outbreaks. For example, an estimated 400,000 cases of Cryptosporidium illness occurred in Milwaukee in 1993 caused by a failure in a filtration process at a water treatment plant (Mac Kenzie et al., 1994), and an acute gastrointestinal illness outbreak in Ohio affected over 1,500 people from microbial contamination of a groundwater supply (Fong et al., 2007). Therefore, although this chapter focuses on the risks of water reuse, potential waterborne hazards should be considered in the context of the full suite of possible exposure routes.

The first step in any risk assessment (microbial or chemical) is hazard identification, defined as “the process of determining whether exposure to an agent can cause an increase in the incidence of a health condition” (NRC, 1983) such as cancer, birth defects, or gastroenteritis, and whether the health effect in humans or the ecosystem is likely to occur.2 Hazards of reclaimed water may depend on factors such as its composition and source water (industrial and domestic sources), varying removal effectiveness of different treatment processes, the introduction of chemicals, and the creation of transformation byproducts during the water treatment process (NRC, 1998). It is important to remember that risk is a function of hazard and exposure, and where there is no exposure, there is no risk.

_____________

2 http://www.epa.gov/oswer/riskassessment/human_health_toxicity.htm

Chemical and microbial contaminants constitute two types of agents that may cause a spectrum of adverse health impacts, whether acute, chronic, or both. Acute health effects are characterized by sudden and severe illness after exposure to the substance. Acute illnesses are common after exposure to pathogens, but acute health effects from exposure to regulated or unregulated chemical contaminants found in drinking water or reclaimed water are highly unlikely under anything but aberrant conditions due to system failures, chemical spills, unrecognized cross connections with industrial waste streams, or accidental overfeeds of disinfection agents. Chronic health effects are long-standing and are not easily or quickly resolved. They tend to occur after prolonged or repeated exposures over many days, months, or years, and symptoms may not be immediately apparent. There is recently recognized concern for effects arising via an epigenetic route wherein an agent alters aspects of gene translation or expression; such effects can be manifested in a variety of end points (Baccarelli and Bollati, 2009).

Chemical Hazards and Risks

Health hazards from chemicals present in reclaimed water (discussed in Chapter 3) include potential harmful effects from naturally occurring and synthetic organic chemicals, as well as inorganic chemicals. Some of these chemicals, including the carcinogens N-nitrosodimethylamine (NDMA; see Box 3-2) and trihalomethanes (EAO, Inc., 2000), may be produced in the course of various treatment processes (e.g., disinfection), rather than arising from the source water itself. Among the most studied of this latter class of chemicals are the chlorination disinfection byproducts, which have been associated with cancer as well as adverse birth outcomes. Because of the need to disinfect wastewater, which may have comparatively higher organic content than typical drinking water sources, such treatment-related contaminants may be problematic in some reclaimed waters.

Multiple studies in the scientific literature have described associations between chemical contaminants in drinking water and chronic disease such as cancer, chronic liver and kidney damage, neurotoxicity, and adverse reproductive and developmental outcomes such as fetal loss and birth defects (NRC, 1998). Most toxic chemicals that are relevant to water reuse pose chronic health risks, where long periods of exposure to small doses of potentially hazardous chemicals can have a cumulative adverse effect on human health (Khan, 2010; see Chapter 10 for discussion of regulation of drinking water contaminants). As noted in Box 6-1, epidemiological studies are seldom able to determine which of the many chemicals typically present in the water over time are associated with the chronic health effects described. Box 6-3 provides a list of the biologically plausible diseases investigated in the literature for associations with water exposures as well as the organ systems most vulnerable to the contaminants present in wastewater (Sloss et al., 1996; NRC, 1998).

As noted in Chapter 3, a large array of chemicals are present at low concentrations in the nation’s source waters and drinking water, including pharmaceuticals and personal care products (see Table 3-3; Kolpin et al., 2002; Weber et al., 2006; Rodriguez et al., 2007a,b; Snyder et al., 2010b; Bull et al., 2011). There is a growing public concern over potential health impacts from long-term ingestion of low concentrations of trace organic contaminants (Snyder et al., 2009, 2010b; Drewes et al., 2010). In contrast to well-documented adverse health effects associated with exposure to specific disinfection byproducts (such as trihalomethanes) in municipal water systems, health hazards posed by long-term, low-level environmental exposure to trace organic contaminants in reclaimed water or from de facto reuse scenarios are not well characterized, nor are their subsequent health risks known (NRC, 2008a; Khan, 2010; Snyder et al., 2009, 2010b). Although chemicals currently regulated in drinking water have comparatively robust toxicological databases, many more chemicals present in water are unregulated and are missing critical toxicological data important to understanding low-level chronic exposure impacts (Drewes et al., 2010). These same agents can be present in treated wastewater in concentrations not otherwise encountered in most public water supply sources.

BOX 6-3

Biologically Plausible Possible Health Outcomes from Exposures to Chemicals Found in Wastewater

| Cancer | |
| --- | --- |
| Bladderᵃ | Liver |
| Colonᵃ | Pancreas |
| Esophagus | Rectumᵃ |
| Kidney | Stomach |
| Reproductive and Developmental Outcomes | |
| Spontaneous abortionᵃ | Birth defectsᵃ |
| Low birth weight | Preterm birth |
| Target Organ Systems | |
| Gastrointestinal organs | Cardiovascular organs |
| Kidney | Cerebrovascular organs |
| Liver | |

ᵃ Most consistently increased in epidemiological studies, especially those of trihalomethane disinfection byproducts.

To date, epidemiological analyses of adverse health effects likely to be associated with use of reclaimed water have not identified any patterns from water reuse projects in the United States (Khan and Roser, 2007; NRC, 1998; see Box 6-1). In laboratory animals and in vitro studies, there is a mixed picture, with more recent studies on genotoxicity, subchronic toxicity, reproductive and developmental chronic toxicity, and carcinogenicity showing negative results (summarized in Nellor et al., 1985; Lauer et al., 1990; Condie et al., 1994; Sloss et al., 1999; Singapore Public Utilities Board and Ministry of the Environment, 2002; R. A. Rodriguez et al., 2009; see also Box 6-2). Collectively, while these findings are insufficient to ensure complete safety, these toxicological and epidemiological studies provide supporting evidence that if there are any health risks associated with exposure to low levels of chemical substances in reclaimed water, they are likely to be small.

Microbial Hazards

Most waterborne infections are acute and are the result of a single exposure. Disease outcomes associated with infection from waterborne pathogens include gastroenteritis, hepatitis, skin infections, wound infections, conjunctivitis, and respiratory infections. Microbial infection rates are determined by the survival ability of the pathogen in water; the physicochemical conditions of the water, including the level of treatment; the pathogen infectious dose; the virulence factor; and the susceptibility of the human host.

Bacterial pathogens in general are more sensitive to wastewater treatment than are viruses and protozoa; thus, few survive in disinfected water for reuse (see Chapter 3, Table 3-2). Most bacterial pathogens (e.g., Vibrios) also have a high median infectious dose, meaning that many cells must be ingested before infection is likely to become established in healthy adults (Nataro and Levine, 1994). Other bacteria, such as Salmonella, can cause human infection with as few as 1 to 10 cells if consumed with high-fat-content food (Lehmacher et al., 1995). Toxigenic E. coli O157:H7 with two potent toxins is also suspected of having a low median infectious dose (Teunis et al., 2004).

In comparison with bacterial pathogens, protozoan cysts and viruses are more resistant to inactivation in water. Protozoan cysts are resistant to low doses of chlorine, and high infection rates in water are associated with suboptimal chlorine doses. Viruses can pass the filtration system in water treatment plants because of their small size. Some viruses are also resistant to ultraviolet disinfection (see Chapter 4). Because they have a low median infectious dose, viruses have the potential to present a concern in water reuse applications.

In addition to microbial characteristics, human host susceptibility plays an essential role in microbial hazards. Microbial agents that are benign to a healthy population can lead to fatal infections in a susceptible population. The growing numbers of immunocompromised individuals (e.g., organ transplant recipients, those infected with HIV, cancer patients receiving chemotherapy) are especially vulnerable to such infection. Because of their clinical status, infection is difficult to treat and often becomes chronic. Infectious-agent disease can also lead to chronic secondary diseases, such as hepatitis and kidney failure, and can contribute to adverse reproductive outcomes. The exacerbating factors are not unique to water reuse but apply to all exposure to infectious microorganisms via water, food, and other vehicles. Table 3-1 lists the microbial agents that have been associated with waterborne disease outbreaks and also includes some agents in wastewater thought to pose significant risk.

WATER REUSE EXPOSURE ASSESSMENT

For the purpose of human health risk assessments, exposure is defined as contact between a person and a chemical, physical, or biological agent. The amount of exposure (or dose) is a product of two variables: concentration of a substance in a medium (e.g., the concentration of trihalomethanes in reclaimed water) and the amount of that medium to which an individual is exposed (e.g., via ingestion or inhalation). For an ingested contaminant, the dose is the concentration in water multiplied by the amount of water ingested. Accurately assessing exposure to reclaimed water is a critically important aspect of assessing health risks, because the likelihood of harm from exposure distinguishes risk from hazard.
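As a minimal sketch of the ingestion case described above, the dose can be written as concentration multiplied by ingested volume. The function name and the example concentration are hypothetical illustrations, not values from this report.

```python
def ingested_dose_mg(concentration_mg_per_l: float, volume_l: float) -> float:
    """Dose (mg) = concentration in water (mg/L) x volume of water ingested (L)."""
    if concentration_mg_per_l < 0 or volume_l < 0:
        raise ValueError("concentration and volume must be non-negative")
    return concentration_mg_per_l * volume_l


# Hypothetical example: a contaminant at 0.05 mg/L and 2 L of water ingested.
example_dose_mg = ingested_dose_mg(0.05, 2.0)
print(f"Ingested dose: {example_dose_mg:.3f} mg")
```

The same product form applies to other media, with the volume replaced by the amount of that medium contacted or inhaled.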

Influence of Water Treatment on Potential Exposures

Reclaimed wastewater that has undergone varying degrees of water treatment will have different levels of microbial and chemical contamination (see Table 3-2 and Appendix A). As discussed in Chapter 2, the appropriate end use of reclaimed water is dependent on the level of water treatment, with greater intensity of treatment more effectively reducing or removing microbial and chemical contaminants as needed by particular applications (EPA, 2004; de Koning et al., 2008). The treatment and conveyance of waters of different qualities is not novel and dates to the Roman imperial times (Robins, 1946).

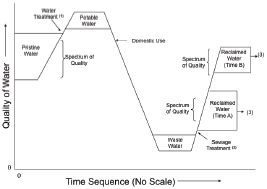

Over the course of time, a unit volume of water undergoes changes in quality (illustrated conceptually in Figure 6-2). With use, a deterioration in quality occurs that may be reversed with treatment. Depending on the desired use, water may be abstracted at different locations along this continuum (i.e., at the right-hand side, increasing degrees of treatment will produce reclaimed wastewater suitable for increasingly stringent usages).

Reclaimed water that has undergone secondary treatment (biological oxidation or disinfection) has numerous nonpotable uses in applications with minimal human exposure potential, such as industrial cooling and nonfood crop irrigation (see Chapter 2). Secondary effluent that has undergone further treatment (e.g., chemical coagulation, disinfection, microfiltration, reverse osmosis, high-energy ultraviolet light with hydrogen peroxide) is suitable for a greater number of nonpotable or potable uses, including uses that have a higher degree of human exposure to the constituents in reclaimed water, such as food crop irrigation and groundwater recharge. In contrast, wastewater that has undergone only primary treatment (sedimentation only) has no use as reclaimed water in the United States because of the likely chemical and microbial contamination. It should be recognized that more extensive treatment generally is more cost- and energy-intensive, may have greater potential for byproducts to occur, and may have a larger environmental footprint. Different applications of reclaimed water are also associated with different exposure scenarios, discussed in more detail later in this section.

FIGURE 6-2 Continuum of water quality with use and treatment.

NOTES:

(1) Typical processes include coagulation-flocculation, sedimentation, filtration, and disinfection.

(2) Processes include secondary treatment and disinfection.

(3) Effluent discharged to environmental receiving water or reused.

SOURCE: Adapted from McGauhey (1968); T. Asano (personal communication, 2010).

Influence of Different Exposure Circumstances and Routes of Exposure on Dose

Exposure to contaminants in reclaimed water occurs not only through the ingestion of water that has been designed for potable reuse applications but also from food, skin and eye contact, accidental ingestion during water recreation, and inhalation in other reuse applications (Gray, 2008). Exposure can also result from improper use of reclaimed water, improper operation of a reclaimed water system, or inadvertent cross connections between a potable water and a nonpotable water distribution system (see Box 6-4). This illustrates that, regardless of the intended use, the assessment of risk should consider unintended but foreseeable inappropriate uses of the reclaimed water.

A key component of a human health risk assessment is the estimation of an individual’s average daily dose (ADD) of a chemical. The ADD of a chemical in reclaimed water represents the sum of the ADDs for ingestion, dermal contact, and inhalation of that chemical from reclaimed water. To assess the likelihood that adverse health effects may occur, the ADD can be compared with a daily dose determined to be acceptable over a lifetime of exposure. See Appendix B for equations used for calculating each of these terms.
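The summation and comparison described above can be sketched as follows. This is an illustrative simplification, not the Appendix B equations: the ingestion term assumes ADD = concentration × daily intake ÷ body weight, the dermal and inhalation ADDs are supplied as already-computed inputs, and every numeric value (including the 70-kg body weight and the acceptable daily dose) is a hypothetical placeholder.

```python
def ingestion_add(conc_mg_per_l: float, intake_l_per_day: float,
                  body_weight_kg: float) -> float:
    """Simplified ingestion ADD in mg/kg-day (assumed form, see lead-in)."""
    return conc_mg_per_l * intake_l_per_day / body_weight_kg


def total_add(route_adds: dict[str, float]) -> float:
    """Total ADD is the sum of the route-specific ADDs."""
    return sum(route_adds.values())


def ratio_to_acceptable_dose(add: float, acceptable_daily_dose: float) -> float:
    """Ratio of the total ADD to a daily dose judged acceptable over a lifetime."""
    return add / acceptable_daily_dose


# Hypothetical inputs for one chemical in reclaimed water.
route_adds = {
    "ingestion": ingestion_add(0.001, 2.0, 70.0),  # 0.001 mg/L, 2 L/d, 70 kg
    "dermal": 1.0e-6,       # mg/kg-day, assumed to be computed elsewhere
    "inhalation": 2.0e-6,   # mg/kg-day, assumed to be computed elsewhere
}
add_total = total_add(route_adds)
ratio = ratio_to_acceptable_dose(add_total, acceptable_daily_dose=1.0e-3)
print(f"Total ADD = {add_total:.2e} mg/kg-day; ratio to acceptable dose = {ratio:.3f}")
```

A ratio below 1 indicates that the estimated total dose is lower than the dose taken as acceptable in this sketch.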

Ingestion of Reclaimed Water

Ingested volumes of tap water vary with gender, age, pregnancy status (Burmaster, 1998; Roseberry and Burmaster, 1992), ethnicity (Williams et al., 2001), climate, and likely other factors. Also, the concentration of contaminants in reclaimed water, which affects end-user exposure, will differ according to the source water, the level of treatment (see Chapter 4), and the extent of dilution with other water sources. If the water is treated to levels intended for nonpotable uses, but it is inadvertently ingested (e.g., after a cross connection of the delivery pipes), the exposure might be much greater than the ingestion of water intended for potable consumption, depending on the level of treatment of the reclaimed water (see Box 6-4). In terms of potential health risks, ingestion of reclaimed water is of greater importance than other reclaimed water uses because exposure and estimation of potential health risks are assessed on the basis of the consumption of drinking water, which most governments (including EPA and countries such as Australia) assume to be 2 L/d (NRMMC/EPHC/NHMRC, 2008).

BOX 6-4

Cross Connections

Several cross connections between nonpotable reclaimed water and potable water lines have been reported in the United States and elsewhere (e.g., Australia). Some of the cross connections existed for 1 year or longer prior to detection. Only a few cross connections involving reclaimed water have resulted in reported illnesses, and fewer still have been medically documented. Most cross connections that occur are accidental, although some are intentional by homeowners or others.

Some examples of cross connection incidents reported in the literature are provided below:

• In 1979, several people reportedly became ill as a result of a cross connection between potable water lines and a subsurface irrigation system that supplied reclaimed water for irrigation at a campground. Based on a survey of 162 persons who camped at the site, at least 57 campers reported symptoms of diarrheal illness (Starko et al., 1986).

• In 2004, a cross connection in a large residential development with a dual-distribution system reportedly affected approximately 82 households (Sydney Water, 2004). The cross connection resulted from unauthorized plumbing work during construction of a house in the development.

• A meter reader discovered a cross connection in 1996 when he noticed that a water meter at a private residence was registering backwards, which indicated that reclaimed water was flowing into the public potable water system (University of Florida TREEO Center, 2011). The reclaimed water service had recently been connected to an existing irrigation system at the residence. The irrigation system had previously been supplied with potable water and was still connected to the potable system. A backflow prevention device was not installed at the potable water service connection, and it was estimated that about 50,000 gallons of reclaimed water backflowed into the public potable water system.

• Homeowners reported illnesses (diarrhea and digestion and intestinal problems) resulting from a cross connection that occurred in 2002 between a reclaimed water line supplying reclaimed water to a golf course and a potable water line supplying water to more than 200 households. Contractors failed to sever a potable water line that previously provided irrigation water, which created a cross connection between the potable line and the reclaimed water irrigation system. Pressures in the reclaimed and potable systems were comparable, and when a higher demand was created on the potable system, water from the nonpotable reclaimed system was siphoned into the potable system (Bloom, 2003).

• A cross connection between reclaimed (nonpotable) and drinking water lines was discovered at a business park in 2007. It was determined that occupants in 17 businesses at the business park had been drinking and washing their hands with reclaimed water for 2 years. The cross connection was found after the water district increased the percentage of reclaimed water in the nonpotable water line from 20 percent (the remaining 80 percent being potable water) to 100 percent, and occupants complained that the water tasted bad and had an odor and a yellowish tint (Krueger, 2007).

Detailed information on cross-connection control measures is available in manuals published by the American Water Works Association (AWWA, 2009) and the EPA (2003a). Regulations often address cross-connection control by specifying requirements that reduce the potential for cross connections (see Box 10-5). However, effective as such programs are, 100 percent compliance has not been achievable.

Aside from the consumption of reclaimed water as drinking water, other sources of ingestion exposure to reclaimed water, primarily incidental exposures, would be smaller. Although more data are needed to define the variability of such exposures, Tanaka et al. (1998) provide useful benchmarks for reclaimed water ingestion exposures (see Table 6-1). Indirect exposure pathways through ingestion of contaminants in reclaimed water could potentially occur when reclaimed water is used for food crop irrigation, for fish or shellfish growing areas, or in recreational impoundments that are used for fishing. In these cases, exposure may occur from the accumulation of chemicals within the particular food. Some compounds that occur in wastewater such as nonylphenol (Snyder et al., 2001a) and perfluorinated organic compounds (Plumlee et al., 2008) have been shown to bioconcentrate in animals as the result of water exposures. Bioaccumulation of chemicals and pathogenic microbes can occur during product cultivation, as can decay of chemicals or microbes. With long-term use of reclaimed water on agricultural land, attention should be paid to accumulation in food crops of persistent substances such as perfluorinated chemicals and metals from repeated application of reclaimed water containing these substances. Limited data have suggested that certain compounds potentially present in reclaimed water may be detectable in irrigated food crops (Boxall et al., 2006; Redshaw et al., 2008). Thus, more research is needed to assess the importance of these indirect pathways of exposure.

TABLE 6-1 Illustration of Differential Water Ingestion Rates from Different Reclamation Uses

| Application Purposes | Risk Group Receptor | Exposure Frequency | Amount of Water Ingested in a Single Exposure, mL |
|---|---|---|---|
| Scenario I, golf course irrigation | Golfer | Twice per week | 1 |
| Scenario II, crop irrigation | Consumer | Every day | 10 |
| Scenario III, recreational impoundment | Swimmer | 40 days per year (summer season only) | 100 |
| Scenario IV, groundwater recharge | Groundwater consumer | Every day | 1000 |

SOURCE: Tanaka et al. (1998).
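To make the differences among the Table 6-1 scenarios concrete, the following sketch converts each row into an approximate annual ingested volume. The conversion of "twice per week" to 104 events per year and "every day" to 365 events per year is an assumption made here for illustration; the per-event volumes are those listed in the table.

```python
# (mL ingested per exposure, assumed exposure events per year)
SCENARIOS = {
    "I: golf course irrigation (golfer)": (1.0, 2 * 52),
    "II: crop irrigation (consumer)": (10.0, 365),
    "III: recreational impoundment (swimmer)": (100.0, 40),
    "IV: groundwater recharge (groundwater consumer)": (1000.0, 365),
}


def annual_volume_ml(ml_per_event: float, events_per_year: int) -> float:
    """Annual ingested volume = volume per exposure x number of exposures."""
    return ml_per_event * events_per_year


annual_ml = {name: annual_volume_ml(*row) for name, row in SCENARIOS.items()}

for name, volume in annual_ml.items():
    print(f"Scenario {name}: {volume / 1000:.3f} L per year")
```

Under these assumptions the groundwater recharge scenario dominates by roughly two orders of magnitude, which is consistent with the text's emphasis on drinking water ingestion.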

Inhalation and Dermal Exposures

Household uses of water can result in inhalation and dermal exposure to chemicals from showering (Xu and Weisel, 2003) and by volatilization (for volatile substances) from other water uses in household appliances, such as clothes washers and dryers (Shepherd and Corsi, 1996). Experimental studies in humans and in vitro test systems using skin samples indicate that certain classes of chemicals can be absorbed into the body following inhalation or dermal exposure to water during bathing or showering. Research has examined dermal and inhalation exposures to neutral, low-molecular-weight compounds, such as water disinfection byproducts present in conventional water systems, including trihalomethanes (e.g., chloroform, bromoform, bromodichloromethane, dibromochloromethane) and haloketones (e.g., 1,1-dichloropropanone, 1,1,1-trichloropropanone) (Weisel and Wan-Kuen, 1996; Baker et al., 2000; Xu and Weisel, 2005). Levels of these chemicals are not known to be higher in reclaimed water than in conventional water systems (see Appendix A). As reliance on membrane processes in reclaimed water increases (see Chapter 4), there will be a need to assess the potential exposure to neutral, low-molecular-weight organic compounds that could be present, such as 1,4-dioxane and dichloromethane.

Use of reclaimed water in ornamental fountains, landscape irrigation, and ecological enhancement may result in inadvertent exposure via aerosolization, dermal contact, or ingestion from hand-to-mouth activity. Although these have not been studied with respect to reclaimed waters, there have been outbreaks or expressions of concern from many of these exposure pathways fed by other waters (Benkel et al., 2000; Fernandez Escartin et al., 2002), and therefore the potential for such effects cannot be neglected.

In instances where there is adequate information and justification to assess dermal and/or inhalation exposure to a contaminant in reclaimed water, an average daily dose for these routes can be computed analogously to that for ingestion, as shown in Appendix B.

Recreational Exposures

The storage of reclaimed water in recreational impoundments or the conveyance through rivers used for recreational purposes may result in exposure via all three routes: oral, dermal, and inhalation. Frequently, for swimming, it is assumed that ingestion of 10–100 mL per incident occurs (Tanaka et al., 1998; Heerden et al., 2005), although direct estimation of this ingestion rate is not common (Schets et al., 2008).

Dose-response assessment is “the process of characterizing the relation between the dose of an agent administered or received and the incidence of an adverse health effect in the exposed populations and estimating the incidence of the effect as a function of human exposure to the agent” (NRC, 1983). The assessment includes consideration of factors that influence dose-response relationships such as age, illness, patterns of exposure, and other variables, and it can involve extrapolation of response data (e.g., high-dose responses extrapolated to low doses, animal responses extrapolated to humans) (NRC, 1994a,b). Dose-response relationships form the basis for the risk assessments used for establishing drinking water regulatory standards. To protect public health, drinking water standards are established at levels lower than those associated with known adverse health effects following analysis of a chemical’s dose-response curve and cost-benefit analysis. These standards are intended to protect against adverse health effects that occur after prolonged exposures, such as cancer, birth defects, and specific organ toxicity, and are generally established using various margins of safety or acceptable risk levels to protect humans, including sensitive subpopulations (e.g., children, immunocompromised persons).

Chemical

Dose-response assessment and the subsequent estimation of health risk from exposure to chemicals have traditionally been performed in two different ways: linear methods to address cancer effects and nonlinear (or threshold) methods to address noncancer health effects. These different approaches have been used historically because cancer and noncancer health effects were thought to have different modes of action. Cancer was thought to result from chemically induced DNA mutations. Because a single chemical-DNA interaction in a single cell can cause a mutation that leads to cancer, it has generally been accepted that any dose of chemical that causes mutations may carry some finite risk. Thus, in the absence of additional data on the mode of action, cancer risk is typically estimated using a linear, nonthreshold dose-response method. In contrast, nonlinear, threshold dose-response methods are typically used to estimate the risk of noncancer effects because multiple chemical reactions within multiple cells have been thought to be involved.

Dose-response assessment for chemicals is a two-step process. The first step involves an assessment of all available data (e.g., in vitro testing, toxicology experiments using laboratory animals, human epidemiological studies) that document the relationship(s) between chemical dose and health effect responses over a range of reported doses. In the second step, the available observed data are extrapolated to estimate the risk at low doses, where the dose begins to cause adverse effects in humans (EPA, 2010c; WHO, 2009). Upon considering all available studies, the significant adverse biological effect that occurs at the lowest exposure level is identified as the critical health effect for risk assessment (Barnes and Dourson, 1988). If the critical health effect is prevented, it is assumed that no other health effects of concern will occur (EPA, 2010c).

For both carcinogens and noncarcinogens, it is common practice to also include uncertainty factors to account for the strength of the underlying data, interspecies variation, and intraspecies variation. The effect of these factors may be several orders of magnitude in the estimated effect/no-effect level.

Noncarcinogens/Threshold Chemicals

Chemicals that cause toxicity through mechanisms other than cancer are often thought to induce adverse effects through a threshold mechanism. For these chemicals, it is generally thought that multiple cells must be injured before an adverse effect is experienced and that an injury must occur at a rate that exceeds the rate of repair. For chemicals that are thought to induce adverse effects through a threshold mechanism, the general approach for assessing health risks is to establish a health-based guidance value using animal or human data. These health-based guidance values, known as reference dose (RfD), acceptable daily intake (ADI), or tolerable daily intake (TDI), are generally defined as a daily oral exposure to the human population (including sensitive subgroups) that is likely to be free of appreciable health risks over a lifetime (see Box 6-5 for the derivation of RfDs). For pharmaceuticals, maximum recommended therapeutic doses (MRTDs) are generally derived from doses employed in human clinical trials, and are estimated upper dose limits beyond which a drug’s efficacy is not increased and/or undesirable adverse effects begin to outweigh beneficial effects. For a number of drug categories (e.g., some chemotherapeutics and immunosuppressants), a clinical effective dose may be accompanied by substantial adverse effects (Matthews et al. 2004). Matthews et al. (2004) analyzed FDA’s MRTD database and found that the overwhelming majority of drugs do not demonstrate efficacy or adverse effects at a dose approximately 1/10 the MRTD.

Carcinogens/Nonthreshold Chemicals

A dose-response assessment for a carcinogen comprises a weight-of-evidence evaluation relating to the potential of a chemical to cause cancer in humans, considering the mode of action (EPA, 2005a). For chemicals that can cause tumors by inducing mutations within a cell as well as chemicals whose mode of action is unknown, the dose response is assumed to be linear, and the potency is expressed in terms of a cancer slope factor (CSF, expressed in units of cancer risk per dose; see Box 6-6). Cancer risk then is assumed to be linearly proportional to the level of exposure to the chemical, with the CSF defining the gradient of the dose-response relationship as a straight line projecting from zero exposure–zero risk (Khan, 2010).

Tumors that arise through a nongenotoxic mechanism and exhibit a nonlinear dose-response are quantified using an RfD-like method. Ideally, the risk is evaluated on the basis of a dose-response relationship for a precursor effect considering the mode of action leading to the tumor (EPA, 2005a; Donohue and Miller, 2007). In the absence of specific mechanistic information relating to how chemical interaction at the target site is responsible for a physiological outcome or pathological event, nonthreshold and threshold approaches are generally employed when analyzing dose-responses for carcinogens and noncarcinogens, respectively.

Microbiological

Microbiological dose-response models serve as a link between the estimate of exposed dose (number of organisms ingested) and the likelihood of becoming infected or ill. Infectivity has been used as an end point in drinking water disinfection because of the potential for secondary transmission (Regli et al., 1991; Soller et al., 2003).

From deliberate human trials (“feeding studies”), such as for Cryptosporidium (Dupont et al., 1995), rotavirus (Ward et al., 1986), and other organisms, mechanistically derived dose-response relationships (exponential and beta-Poisson) have been developed (Haas, 1983). It has also been possible to use outbreak data to develop dose-response information, as in the case of E. coli O157:H7 (Strachan et al., 2005); however, this will likely only be possible with agents in foodborne outbreaks where exposure concentration data are available.
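The two model families named above can be sketched as follows, using the forms commonly written in the microbial risk literature: the exponential model, P = 1 − exp(−r·d), and the approximate beta-Poisson model parameterized by α and the median infectious dose N50. The parameter values below are hypothetical placeholders, not fitted values for any organism discussed in this chapter.

```python
import math


def exponential_model(dose: float, r: float) -> float:
    """Probability of infection under the exponential model: 1 - exp(-r * dose)."""
    return -math.expm1(-r * dose)


def beta_poisson_model(dose: float, alpha: float, n50: float) -> float:
    """Approximate beta-Poisson model parameterized by alpha and N50.

    P = 1 - [1 + (dose / N50) * (2**(1/alpha) - 1)]**(-alpha)
    """
    return 1.0 - (1.0 + (dose / n50) * (2.0 ** (1.0 / alpha) - 1.0)) ** (-alpha)


# Hypothetical parameters for illustration only.
R_HYPOTHETICAL = 0.005
ALPHA_HYPOTHETICAL = 0.25
N50_HYPOTHETICAL = 10.0

for dose in (1.0, 10.0, 100.0):
    p_exp = exponential_model(dose, R_HYPOTHETICAL)
    p_bp = beta_poisson_model(dose, ALPHA_HYPOTHETICAL, N50_HYPOTHETICAL)
    print(f"dose={dose:>6.1f} organisms  exponential={p_exp:.4f}  beta-Poisson={p_bp:.4f}")
```

By construction, the beta-Poisson form returns a probability of 0.5 when the dose equals N50, which offers a quick check on any fitted parameter set.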

In some cases, dose-response relationships relying on animal data must be used. It has generally been found that the ingested dose in animals from a single

BOX 6-5

Derivation of Reference Doses

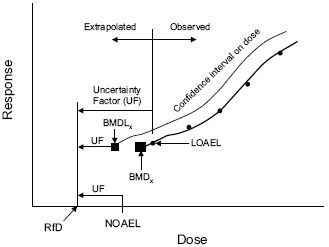

RfDs, ADIs, and TDIs can be derived from no-observed-adverse-effect levels (NOAELs) or lowest-observed-adverse-effect levels (LOAELs) in animal or human studies, or from benchmark doses (BMDs) that are statistically estimated from animal or human studies. The overall process associated with derivation of an RfD, ADI, or TDI is illustrated in the figure below, and the detailed equation is

![]()

Where

NOAEL = The highest exposure level at which there are no biologically significant increases in the frequency or severity of adverse effect between the exposed population and its appropriate control. Some effects may be produced at this level, but they are not considered adverse or precursors of adverse effects.

LOAEL = The lowest exposure level at which there are biologically significant increases in frequency or severity of adverse effects between the exposed population and the appropriate control group.

BMD = A dose that produces a predetermined change in response rate of an adverse effect (called the benchmark response) compared with background.

UFH = A factor of 1, 3, or 10 used to account for variation in sensitivity among members of the human population (intraspecies variation).

UFA = A factor of 1, 3, or 10 used to account for uncertainty when extrapolation from valid results of long-term studies on experimental animals to humans (interspecies variation).

UFS = A factor of 1, 3, or 10 used to account for the uncertainty involved in extrapolating from less-than-chronic NOAELs to chronic NOAELs.

UFL = A factor of 1, 3, or 10 used to account for the uncertainty involved in extrapolating from LOAELs to NOAELs.

UFD = A factor of 1, 3, or 10 used to account for the uncertainty associated with extrapolation from the critical study data when data on some of the key toxic end points are lacking, making the database incomplete (Donohue and Miller, 2007).

Both the NOAEL approach and BMD approach involve use of uncertainty factors (UFs), which account for differences in human responses to toxicity, uncertainties in the extrapolation of toxicity data between humans and animals (if animal data are used), as well as other uncertainties associated with data extrapolation.

The underlying basis of calculating an RfD, ADI, or TDI is the dose-response assessment, where critical health effects are identified for each species evaluated across a range of doses. The critical effect should be observed at the lowest doses tested and demonstrate a dose-related response to

exposure presents the same risk as ingesting the same dose in humans; thus, there is not a need for interspecies “correction.” This has been shown, for example, for Legionella (Armstrong and Haas, 2007), E. coli O157:H7 (Haas et al., 2000), and Giardia (Rose et al., 1991).

While the one-time exposure to a pathogen carries the possible risk of an adverse effect, multiple exposures (e.g., exposures on successive days) may enhance the risk. Very little is known about the description of risk from multiple exposures to the same agent. As a default, multiple exposures are modeled as independent events (Haas, 1996), although it is biologically plausible that either positive deviations (due to sensitization) or negative deviations (due to immune system inactivation) could occur. Dose response experiments using multiple dose protocols would be necessary to further inform this assessment.

Depending on the agent, effects from exposure to pathogens can produce a spectrum of illnesses, from mild to severe, either with acute or chronic effects. For some agents, particularly in sensitive subpopulations, mortality can occur. To determine public health consequences, it is necessary to integrate across the spectrum of effects. This can be done using disability adjusted life years (DALYs) or quality adjusted life years (QALYs) (see Box 10-4).

support the conclusion that the effect is due to the chemical in question (Donohue and Miller, 2007; Faustman and Omenn, 2008).

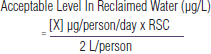

The RfD, ADI, TDI, or MRTD can then be used as the basis for deriving an acceptable level of chemical contaminant in reclaimed water, using the following equation:

![]()

where drinking water intake is assumed to equal 2 L/d, and the relative source contribution (RSC) equals the portion of total exposure contributed by reclaimed water (default is 20 percent).
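A minimal sketch of the calculation described in this box follows. It assumes the reference dose is the point of departure (NOAEL, LOAEL, or BMD) divided by the product of the five uncertainty factors, and that the acceptable water concentration is RfD × body weight × RSC ÷ drinking water intake. The 70-kg body weight is an assumption introduced here (it is not stated in the surrounding text), and the NOAEL and uncertainty factor choices are hypothetical.

```python
from math import prod

ALLOWED_UF_VALUES = (1, 3, 10)  # factor values listed in the box definitions


def reference_dose(point_of_departure: float, uncertainty_factors: dict[str, int]) -> float:
    """RfD (mg/kg-day) = point of departure / product of uncertainty factors."""
    for name, value in uncertainty_factors.items():
        if value not in ALLOWED_UF_VALUES:
            raise ValueError(f"{name} must be one of {ALLOWED_UF_VALUES}, got {value}")
    return point_of_departure / prod(uncertainty_factors.values())


def acceptable_level_mg_per_l(rfd: float,
                              body_weight_kg: float = 70.0,     # assumed default
                              rsc: float = 0.20,                # default from the text
                              intake_l_per_day: float = 2.0) -> float:  # from the text
    """Assumed form: RfD x body weight x RSC / drinking water intake."""
    return rfd * body_weight_kg * rsc / intake_l_per_day


# Hypothetical chemical: NOAEL of 10 mg/kg-day from a chronic animal study.
ufs = {"UF_H": 10, "UF_A": 10, "UF_S": 1, "UF_L": 1, "UF_D": 1}
rfd = reference_dose(10.0, ufs)
level = acceptable_level_mg_per_l(rfd)
print(f"RfD = {rfd:.3f} mg/kg-day; acceptable level = {level:.2f} mg/L")
```

Because the uncertainty factors multiply, choosing 10 rather than 3 for a single factor lowers the resulting acceptable concentration by about a factor of three.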

Example RfD derivation for noncarcinogens or chemicals with a threshold effect. This figure shows graphically how various dose-response data are converted to an RfD, considering confidence intervals and various uncertainty factors.

SOURCE: Adapted from Donohue and Orme-Zavaleta (2003).
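For the microbiological case discussed above, the default treatment of repeated pathogen exposures as independent events can be sketched as follows. The per-exposure infection probability and the number of exposures are hypothetical, and the independence assumption ignores the sensitization or immune effects noted in the text.

```python
def risk_over_repeated_exposures(p_single: float, n_exposures: int) -> float:
    """Probability of at least one infection in n independent exposures.

    P_total = 1 - (1 - p_single) ** n_exposures
    """
    if not 0.0 <= p_single <= 1.0:
        raise ValueError("p_single must be a probability between 0 and 1")
    if n_exposures < 0:
        raise ValueError("n_exposures must be non-negative")
    return 1.0 - (1.0 - p_single) ** n_exposures


# Hypothetical example: a 1-in-1,000 risk per exposure, one exposure per day for a year.
annual_risk = risk_over_repeated_exposures(0.001, 365)
print(f"Annual probability of at least one infection: {annual_risk:.3f}")
```

For small per-exposure risks and few exposures, the result is close to the simple product of the per-exposure risk and the number of exposures; the two diverge as the cumulative risk grows.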

Risk characterization is the last stage of the risk assessment process, in which information from the preceding steps of the risk assessment (i.e., hazard identification, dose-response assessment, and exposure assessment) is integrated and synthesized into an overall conclusion about risk. “In essence, a risk characterization conveys the risk assessor’s judgment as to the nature and existence of (or lack of) human health or ecological risks” (EPA, 2000). Ideally, a risk characterization outlines key findings and identifies major assumptions and uncertainties, with results that are transparent, clear, consistent, and reasonable.

When estimates or measures of exposure and potency (i.e., dose-response relationships) exist, risk can be formally characterized in terms of expected cases of types of illness (with uncertainties) resulting under a given scenario. For example, for a nonthreshold chemical or microbial agent that has a linear dose-response relationship, the characterized risk from a uniform exposure is the simple product of the potency multiplied by the dose. The process is illustrated in Chapter 7. There are a variety of summary measures of risk that can be used (e.g., RfD, ADI, TDI, risk quotient [RQ; i.e., the level of exposure in reclaimed water divided by the risk-based action level, such as the maximum contaminant level or MCL]).
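The two summary calculations mentioned above, risk as potency multiplied by dose for a linear nonthreshold agent and the risk quotient, can be sketched as follows. All numeric inputs (potency, dose, population size, concentrations) are hypothetical values chosen only to show the arithmetic.

```python
def linear_risk(potency_per_unit_dose: float, dose: float) -> float:
    """Risk for a linear, nonthreshold dose-response: potency x dose."""
    return potency_per_unit_dose * dose


def expected_cases(individual_risk: float, population: int) -> float:
    """Expected number of cases in a uniformly exposed population."""
    return individual_risk * population


def risk_quotient(exposure_level: float, action_level: float) -> float:
    """RQ = level of exposure in reclaimed water / risk-based action level (e.g., MCL)."""
    return exposure_level / action_level


# Hypothetical inputs.
risk = linear_risk(potency_per_unit_dose=0.01, dose=1.0e-5)  # per mg/kg-day, mg/kg-day
cases = expected_cases(risk, population=1_000_000)
rq = risk_quotient(exposure_level=2.0, action_level=80.0)    # both in ug/L

print(f"Individual risk = {risk:.1e}; expected cases = {cases:.2f}; RQ = {rq:.3f}")
```

An RQ below 1 means the measured level is below the action level used as the benchmark; it does not by itself express a probability of harm.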

BOX 6-6

Derivation of Cancer Slope Factors

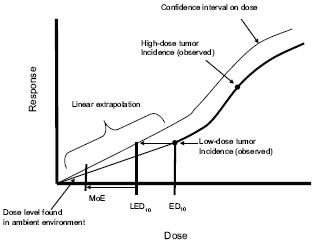

CSFs can be derived using a multistage model of cancer (available through EPA’s Benchmark Dose Modeling software), where the quantal relationship of tumors to dose is plotted. A point of departure, or dose that falls at the lower end of a range of observation for a tumor response, is estimated, and a straight line is plotted from the lower bound to zero. The figure below illustrates a linear cancer risk assessment (Donohue and Orme-Zavaleta, 2003). The CSF is the slope of the line (cancer response/dose) and is the tumorigenic potency of a chemical.

The CSF can be used as the basis for deriving an acceptable level of chemical contaminant in reclaimed water, using the following equation:

![]()

where the acceptable risk level generally equals 10⁻⁶, and drinking water intake is assumed to be 2 L/d.

Example cancer risk extrapolation, using the linear dose-response model. The CSF is the slope of the line (i.e., cancer response/dose) and represents the tumorigenic potency of a chemical.

NOTES: MoE = margin of exposure; ED10 = effective dose at 10 percent response; LED10 = lower 95th confidence interval of ED10.

SOURCE: Adapted from Donohue and Orme-Zavaleta (2003)
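The calculation outlined in Box 6-6 can be sketched as follows. The sketch rests on several assumptions that are not stated in the box: the slope is taken as the response at the point of departure (10 percent) divided by the LED10; the acceptable level is computed in the common dimensional form (risk level × body weight) / (CSF × drinking water intake); and body weight is set to 70 kg. The LED10 value is hypothetical.

```python
def csf_from_led10(led10_mg_per_kg_day: float, response: float = 0.10) -> float:
    """Slope of the straight line from the origin to the point of
    departure, in (mg/kg/day)^-1. Assumes the point of departure is
    the LED10, i.e. the lower bound on the dose giving a 10% response."""
    if led10_mg_per_kg_day <= 0:
        raise ValueError("LED10 must be positive")
    return response / led10_mg_per_kg_day


def acceptable_level_mg_per_l(
    csf: float,
    risk_level: float = 1e-6,        # acceptable risk level from the box
    body_weight_kg: float = 70.0,    # ASSUMPTION: not stated in the box
    intake_l_per_day: float = 2.0,   # drinking water intake from the box
) -> float:
    """Concentration in water corresponding to the acceptable risk level."""
    if csf <= 0 or intake_l_per_day <= 0:
        raise ValueError("csf and intake must be positive")
    return (risk_level * body_weight_kg) / (csf * intake_l_per_day)


# Hypothetical LED10 of 1.0 mg/kg/day.
csf = csf_from_led10(1.0)
level = acceptable_level_mg_per_l(csf)
print(f"CSF = {csf} (mg/kg/day)^-1, acceptable level = {level * 1000:.2f} ug/L")
```

Because the relationship is linear, halving the acceptable risk level or doubling the CSF halves the acceptable concentration.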

Risk Characterization Given Lack of Data

For many chemicals, dose-response information is unavailable. Nonetheless, communities still need to make decisions on water reuse projects in the absence of such data. This section discusses frameworks for providing information on risk when dose-response data are lacking.

Numerous organic and inorganic chemicals have been identified in reclaimed water and waters that receive wastewater effluent discharges, and only a limited number of these chemicals are actually regulated in water supplies. Current regulatory testing protocols address only one chemical at a time, leaving a gap in our understanding of the potential adverse effects of chronic, low-level exposure to a complex mixture of chemicals. A mixture of chemicals may result in toxicity that is additive (i.e., reflecting the sum of the toxicity of all individual components), antagonistic (i.e., toxicity is less than that of an individual component), potentiated (i.e., toxicity is greater than that of an individual component), or synergistic (i.e., with toxicity that is greater than additive). Of particular concern are chemicals that are mutagenic or carcinogenic and share similar modes of action. As with other types of exposures, in the case of reclaimed water, multiple chemicals may be present at the same time for prolonged exposure periods, and they may have a synergistic relationship.

Due to the absence of a federal risk assessment paradigm for evaluating health risks from trace contaminants in reclaimed water, private associations as well as states (particularly California) have embarked upon their own programs to use existing screening paradigms to assess health risks of contaminants in reclaimed water (e.g., Rodriquez et al., 2007a; Bruce et al., 2010; Drewes et al., 2010; Snyder et al., 2010b; Bull et al., 2011). Techniques to conduct such water quality evaluations and subsequently perform exposure and risk assessments are summarized in Khan (2010).

Rodriquez et al. (2007a,b, 2008) and Snyder et al. (2010b) used these screening health risk assessment approaches to evaluate potential health risks from chemicals in reclaimed water in Australia and the United States, respectively. In both evaluations, potential health impacts of chemical contaminants were evaluated using a combination of approaches: extrapolation of health risks from actual health effects data on a specific contaminant, and chemical class-based evaluation in the absence of contaminant-specific data. For regulated chemicals, EPA MCLs, Australian drinking water guidelines, or WHO drinking water guideline values were used as benchmark risk values (or risk-based action levels, RBALs), from which risk quotients can be calculated (see also the example in Appendix A). RBALs for unregulated chemicals with existing risk values can be based upon EPA reference doses (RfDs), WHO acceptable daily intakes (ADIs), lowest therapeutic doses for pharmaceuticals, or EPA cancer slope factors (CSFs), among other risk values. If existing risk values have not been derived, it is possible to derive risk values for noncarcinogens or carcinogens from human or laboratory animal datasets on the chemical under consideration, using the methods described in Boxes 6-5 and 6-6. The selection of one risk value over another (e.g., RfD vs. ADI), or of a specific epidemiological or toxicological dataset used to derive an RBAL, generally should be based upon the critical health effect(s) identified for the specific chemical in the most sensitive species.

Potential health risks from the presence of a chemical in reclaimed water can be assessed by dividing a chemical’s RBAL by the concentration of that chemical in reclaimed water. This ratio, the reciprocal of the risk quotient, is known as a Margin of Safety (MOS), with values >1 indicating that the presence of a chemical in reclaimed water is unlikely to pose a significant risk of adverse health effects. This is illustrated in Chapter 7 for 24 organic contaminants in reclaimed water.
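As a minimal sketch of this screening step, the following Python computes an MOS for each chemical and flags those with values at or below 1. The chemical names, RBALs, and concentrations are hypothetical placeholders, not data from Chapter 7.

```python
def margin_of_safety(rbal_ug_per_l: float, concentration_ug_per_l: float) -> float:
    """MOS = RBAL / measured concentration (same units for both).
    Values > 1 suggest a significant health risk is unlikely."""
    if concentration_ug_per_l <= 0:
        raise ValueError("concentration must be positive")
    return rbal_ug_per_l / concentration_ug_per_l


# Hypothetical screening inputs: name -> (RBAL, measured concentration), in ug/L.
samples = {
    "chemical A": (10.0, 0.5),
    "chemical B": (2.0, 4.0),
}

for name, (rbal, conc) in samples.items():
    mos = margin_of_safety(rbal, conc)
    verdict = "unlikely to pose significant risk" if mos > 1 else "needs closer evaluation"
    print(f"{name}: MOS = {mos:g} ({verdict})")
```

An undetected chemical has no measured concentration to divide by; in practice a reporting limit is often substituted, which is a choice outside the scope of this sketch.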

Benchmarks for unregulated chemicals without complete epidemiological or toxicological datasets or risk values were evaluated by Rodriquez et al. (2007a,b) and Snyder et al. (2010b) using class-based risk assessment approaches, including the Threshold of Toxicological Concern (TTC), FDA’s Threshold of Regulation (TOR; see Box 6-7), or EPA’s Toxicity Equivalency Factor (TEF) approach. Rodriquez et al. (2007a,b) used the TTC approach for both unregulated noncarcinogens and carcinogens without available toxicity information, while Snyder et al. (2010b) used TTC for noncarcinogens and nongenotoxic carcinogens. The TEF/Toxicity Equivalents (TEQ) approach was used by Rodriquez et al. (2008) to assess potential health risks from dioxin and dioxin-like compounds in Australian reclaimed water used to augment drinking water supplies, based on TEFs developed by the WHO. (For details on calculation of TEQs, see EPA, 2010c.)

BOX 6-7

Threshold of Regulation (TOR)

One class-based approach is the Threshold of Regulation, which was developed as a method to evaluate the potential toxicity of carcinogens extracted from food contact substances. The TOR is a concentration of chemicals unlikely to pose a significant risk of adverse health effects, including cancer risk (10⁻⁶) over a lifetime (FDA, 1995; Rulis, 1987, 1989). The FDA derived a threshold value of 0.5 ppb for carcinogens in the diet based on the carcinogenic potencies of 500 substances from 3,500 experiments in the Carcinogenic Potency Database of Gold et al. (1984, 1986, 1987). The distribution of chronic dose rates that would induce tumors in 50 percent of test animals (TD50s) was plotted. This distribution was extrapolated to a Virtually Safe Dose (10⁻⁶ lifetime risk of cancer) in humans, which is equal to 0.5 μg of chemical per kg of food, or 1.5 μg/person/day (based on 3 kg food/drink consumed/day). This value can be extrapolated to a concentration in water intended for ingestion, as follows:

TOR: 0.5 μg/kg food × (3 kg food/day) / (2 L water/day) = 0.75 μg/L.

BOX 6-8

Thresholds of Toxicological Concern (TTCs)

For carcinogens, distributions of chronic dose rates from lifetime animal cancer studies were statistically evaluated for more than 700 carcinogens to identify an extrapolated threshold value in humans unlikely to result in a significant risk of developing cancer over a lifetime of exposure (Cheeseman et al., 1999; Kroes et al., 2004; Barlow, 2005). This threshold value is equal to 1.5 μg/person/day. For noncarcinogens, analyses have been performed to identify human exposure thresholds for chemicals falling into certain chemical classes. One of the best known TTC evaluations is that of Munro et al. (1996), who evaluated 613 organic chemicals that had been tested in noncancer oral toxicity studies in rodents and rabbits. The chemicals were grouped into three general toxicity classes based on the Cramer classification scheme (Cramer et al., 1978):

• Class I: Simple chemicals, efficient metabolism, low oral toxicity

• Class II: May contain reactive functional groups, slightly more toxic than Class I

• Class III: Substances that have structural features that permit no strong initial presumption of safety or may even suggest significant toxicity

Human exposure thresholds (TTCs) of 1800, 540, and 90 μg/person/day (30, 9, and 1.5 μg/kg body weight/day, respectively) were proposed for Class I, II, and III chemicals using the 5th percentile of the lowest No Observed Effect Level for each group of chemicals, a human body weight of 60 kg, and a safety/uncertainty factor of 100 (Munro et al., 1996). Using the above TTC human exposure thresholds, an acceptable level of each chemical in reclaimed water can be derived as follows:

Acceptable level (μg/L) = (X × RSC) / (drinking water intake)

where X = 1800 μg/day for Class I compounds, 540 μg/day for Class II compounds, and 90 μg/day for Class III compounds; Relative Source Contribution (RSC) = 0.2 (assumed default); and drinking water intake = 2 L/day. Therefore, the TTC approach assigns acceptable levels for these three classes of chemicals in reclaimed water as follows: 180 μg/L for Class I compounds, 54 μg/L for Class II compounds, and 9 μg/L for Class III compounds.

Although newer than traditional risk assessments, which are based upon chemical-specific data, these class-based values are widely used by regulatory authorities to assess health risks in the absence of complete substance-specific health effects datasets. The TTC approach is used by the Joint FAO/WHO Expert Committee on Food Additives (JECFA) to assess health risks from food additives present at low levels in the diet, and the U.S. Food and Drug Administration (FDA) uses the TOR approach when assessing health risk from indirect food additives (such as chemicals in food contact articles; Box 6-7).
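The conversion of the TOR from a dietary concentration to a water concentration (Box 6-7) is simple arithmetic and can be sketched as below. The function names are hypothetical; the default values are those given in Box 6-7.

```python
def tor_daily_intake_ug(threshold_ug_per_kg_food: float = 0.5,
                        food_kg_per_day: float = 3.0) -> float:
    """Daily intake implied by the dietary threshold (ug/person/day)."""
    return threshold_ug_per_kg_food * food_kg_per_day


def tor_water_concentration_ug_per_l(threshold_ug_per_kg_food: float = 0.5,
                                     food_kg_per_day: float = 3.0,
                                     water_l_per_day: float = 2.0) -> float:
    """Water concentration that delivers the same daily intake when all of
    it is assigned to drinking water (ug/L)."""
    if water_l_per_day <= 0:
        raise ValueError("water intake must be positive")
    return tor_daily_intake_ug(threshold_ug_per_kg_food, food_kg_per_day) / water_l_per_day


print(tor_daily_intake_ug())               # ug/person/day
print(tor_water_concentration_ug_per_l())  # ug/L
```

Note that this conversion assigns the entire 1.5 μg/person/day to drinking water; no relative source contribution is applied, unlike the TTC derivation in Box 6-8.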

The TTC approach has evolved over the past 20 years, starting from the FDA’s TOR concept (Rulis, 1987, 1989) and more recently developing into a tiered approach, in which different threshold doses are established based on chemical structure and class (Munro, 1990; Munro et al., 1996; Kroes et al., 2004). The TTC approach is based on the existence of a threshold for a toxic effect (e.g., cancer or a systemic toxicity end-point such as liver toxicity), which is usually identified through animal experiments. TTC values are statistically derived by analyzing toxicity data for hundreds of different chemicals, with doses in animal studies extrapolated to doses that are unlikely to cause adverse health effects in humans. TTC values have been derived for carcinogens and noncarcinogens (see Box 6-8).
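The derivation of acceptable water concentrations from the noncarcinogen TTC values in Box 6-8 can be sketched as follows, multiplying each class threshold by the relative source contribution and dividing by drinking water intake. The threshold values, the 60-kg body weight, the RSC of 0.2, and the 2 L/day intake come from Box 6-8; the function and variable names are hypothetical.

```python
# Human exposure thresholds by Cramer class, ug/person/day (Munro et al., 1996).
TTC_UG_PER_DAY = {"I": 1800.0, "II": 540.0, "III": 90.0}


def ttc_acceptable_level_ug_per_l(cramer_class: str,
                                  rsc: float = 0.2,
                                  water_l_per_day: float = 2.0) -> float:
    """Acceptable level in water = (X * RSC) / drinking water intake."""
    if cramer_class not in TTC_UG_PER_DAY:
        raise ValueError(f"unknown Cramer class: {cramer_class!r}")
    if water_l_per_day <= 0:
        raise ValueError("water intake must be positive")
    return TTC_UG_PER_DAY[cramer_class] * rsc / water_l_per_day


def ttc_per_kg_body_weight(cramer_class: str, body_weight_kg: float = 60.0) -> float:
    """Threshold expressed in ug/kg body weight/day."""
    return TTC_UG_PER_DAY[cramer_class] / body_weight_kg


for cls in ("I", "II", "III"):
    print(f"Class {cls}: {ttc_acceptable_level_ug_per_l(cls):.0f} ug/L "
          f"({ttc_per_kg_body_weight(cls):g} ug/kg bw/day)")
```

The results reproduce the 180, 54, and 9 μg/L values quoted in Box 6-8 for Classes I, II, and III.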

Despite the utility of TTC, multiple classes of chemicals cannot be screened using the TTC approach, such as heavy metals, dioxins, endocrine active chemicals, allergens, and high potency carcinogens, which instead must be evaluated using different risk assessment approaches (Kroes et al., 2004; Barlow, 2005; SCCP, 2008). The reasons for these exclusions are primarily protective of public health and include the following factors:

• Heavy metals and dioxins may bioaccumulate, and safety factors used in derivation of TTC values may not be large enough to account for differences in elimination of such chemicals in the human body compared to laboratory animals. In addition, the original databases used to develop TTC threshold values may not have included structurally similar chemicals.

• Endocrine active chemicals have limited datasets on effects at lower doses.

• Allergens do not always display a clear threshold and may elicit adverse effects even at extremely low doses.

• High potency carcinogens, such as aflatoxin-like, N-nitroso and azoxy compounds, are toxic even at low levels.

The TTC approach is meant solely as a method to derive a relatively rapid, conservative estimate of risk for compounds without a detailed risk assessment or with limited datasets. The screening approach was not intended for detailed regulatory decision making. This tool also provides a means to prioritize attention to chemicals for which complete toxicological relevance data are absent. The screening value also provides a means for analytical chemists to target meaningful method reporting limits based on health, rather than simply relying on absolute maximum instrumental and method sensitivity.

Results of Screening-Level Analyses

Rodriquez et al. (2007b) evaluated a total of 134 chemicals, including volatile organic compounds, disinfection byproducts, metals, pesticides, hormones, and pharmaceuticals, in water that had undergone advanced treatment (microfiltration or ultrafiltration followed by reverse osmosis) at the Australian Kwinana Water Reclamation Plant (KWRP). Calculated risk quotients (RQs) were 10 to 100,000 times below 1 for all volatile organic compounds and all pharmaceuticals except cyclophosphamide (RQ = 0.5). Risk quotients <1 indicate that there is unlikely to be a significant health risk associated with exposure to a specific chemical. RQs for all metals were also <1. Rodriquez et al. (2007a) concluded that there were no increased health risks from the KWRP reclaimed water destined for indirect potable reuse, as evidenced by levels of contaminants being well below benchmark values.