Workshop Overview1

The National Strategy for Suicide Prevention, first released in 2001 and updated in collaboration with the National Action Alliance for Suicide Prevention in 2012, outlined goals and objectives that are meant to work together in a synergistic way to prevent suicide in the United States. Some of these goals call for the use of innovative data science approaches to identify individuals, populations, and communities at high risk for suicide, which is the topic for this workshop. Specifically, goal 4 calls for the promotion of responsible media reporting of suicide, accurate portrayals of suicide and mental illnesses in the entertainment industry, and the safety of online content related to suicide. Goal 11 calls for increasing the timeliness and usefulness of national surveillance systems relevant to suicide prevention and improving the ability to collect, analyze, and use this information for action (U.S. Department of Health and Human Services Office of the Surgeon General and National Action Alliance for Suicide Prevention, 2012). Box 1 contains the 13 goals of the National Strategy.

___________________

1 This workshop was organized by an independent planning committee whose role was limited to identification of topics and speakers. This Proceedings of a Workshop was prepared by the rapporteurs as a factual summary of the presentations and discussions that took place at the workshop. Statements, recommendations, and opinions expressed are those of individual presenters and participants and are not endorsed or verified by the National Academies of Sciences, Engineering, and Medicine, and they should not be construed as reflecting any group consensus.

Emerging real-time data sources, together with innovative data science techniques and methods—including artificial intelligence (AI)/machine learning (ML)—can help inform upstream suicide prevention efforts (CDC, 2021). Nonclinical, natural-language processing data from social media platforms, and data from wearable devices, are currently being leveraged by technology companies as part of their suicide prevention efforts to identify individual users who are at high risk for suicide. These “social suicide” prediction algorithms have particular relevance to individuals who are reluctant to engage the health care system, since timely access to relevant education and health care resources may encourage help seeking during a crisis. De-identified social media discussions on suicide and suicide-related behavior are also being analyzed to determine potential hotspots that can help target public health resources to communities at high risk. For example, suicide risk is higher in Indigenous populations.2

Although innovative, real-time data sources, including social media data, and algorithms that predict suicide and nonfatal suicidal behavior can potentially enhance state and local capacity to track, monitor, and intervene “upstream,” these innovations may also be associated with unintended consequences and risks, such as potential bias in AI algorithms that could contribute to health disparities. Also, utilizing these technologies could paradoxically increase the risk of harm in some cases, especially if the technologies are not validated. Thus, the ability to leverage real-time data sources and novel methodologies to identify and mitigate suicide risk needs to be balanced with minimizing unintended consequences.

___________________

2 This topic is covered in another National Academies of Sciences, Engineering, and Medicine report published in 2022: Suicide Prevention in Indigenous Communities. See https://www.nationalacademies.org/our-work/suicide-prevention-in-indigenous-communities-a-workshop (accessed August 29, 2022).

This challenge was the impetus for the recent Workshop on Innovative Data Science Approaches to Assess Suicide Risk in Individuals, Populations, and Communities: Current Practices, Opportunities. Hosted by the Forum on Mental Health and Substance Use Disorders at the National Academies of Sciences, Engineering, and Medicine, the virtual workshop consisted of three webinars held on April 28, May 12, and June 30, 2022. The main goal of the workshop was to explore the current scope of activities, benefits, and risks of leveraging innovative data science techniques to help inform upstream suicide prevention at the individual and population levels. Addressing that goal will require careful thought about how best to frame the issue, followed by an exploration of which available data can be used to assess at-risk individuals and determine the best intervention. To that end, the workshop organizers invited speakers with diverse backgrounds and expertise, including in areas such as mental health and suicide prevention, AI/ML, big data, social media algorithms, natural language processing, app development, public policy, ethics, and public communication.

This Proceedings of a Workshop summarizes the presentations and discussions that took place during the three webinars that composed the workshop. The presentations and discussions are organized thematically, rather than strictly chronologically, to allow for similar points made by different participants to be synthesized and streamlined. The proceedings highlights individual participants’ suggestions for advancing innovative data science techniques, including AI/ML, to help inform upstream suicide prevention efforts at the individual, community, and population levels. The suggestions are discussed throughout the proceedings and summarized in Box 2. Appendix A includes the Statement of Task for the workshop. The webinar agendas are provided in Appendix B. Speaker presentations and the workshop webcast have been archived online.3

Benjamin Miller, president of Well Being Trust, said in his opening remarks that social media “has become a major way that we interact with the world and those around us.” Miller said that people at risk of suicide often turn to social media platforms for help. In response, a number of these platforms have proactively deployed sophisticated AI/ML algorithms to identify individual users who are at high risk for suicide, and in some cases these platforms may, if they deem it necessary, activate local law enforcement to prevent imminent death by suicide. He added that this is one approach to taking advantage of social media information to detect at-risk individuals and to get them the help they need, but there are many more approaches in place, in planning, or under consideration, and quite certainly many more that have not yet been imagined.

U.S. Surgeon General Vice Admiral Vivek Murthy spoke about the toll of suicide and the importance of finding improved ways to identify at-risk individuals and to intervene to prevent suicide. In January 2021 the U.S. Department of Health and Human Services (HHS) and the Office of the Surgeon General, in collaboration with the National Action Alliance for Suicide Prevention, released The Surgeon General’s Call to Action to Implement the National Strategy for Suicide Prevention.4 The report outlines actions that communities and individuals can take to reduce the rates of suicide and to help improve resilience. Also in 2021, the Surgeon General issued an advisory on Protecting Youth Mental Health.5 The advisory lays out the policy, institutional, and individual changes needed to address the mental health crisis among young people, which has been exacerbated by the COVID-19 pandemic. Murthy said that the COVID-19 pandemic has increased feelings of depression, anxiety, and loneliness for many, and that “using data science to assess suicide risk is both important and increasingly urgent.”

___________________

3 See https://www.nationalacademies.org/our-work/using-innovative-data-science-approaches-to-identify-individuals-populations-and-communities-at-high-risk-for-suicide-a-workshop (accessed August 29, 2022).

4 See https://www.hhs.gov/sites/default/files/sprc-call-to-action.pdf (accessed August 19, 2022).

5 See https://www.hhs.gov/sites/default/files/surgeon-general-youth-mental-health-advisory.pdf (accessed August 19, 2022).

Suicide Detection and Prediction

Gregory Simon, senior investigator at the Kaiser Permanente Washington Health Research Institute, described four types of actions that might be performed by data science tools applied to some of the new types of data that are becoming available. The first of such actions would be to use inference to create generalizable knowledge. For example, the tools might be applied to discover that students who are subject to online bullying are at high risk for suicidal behavior. A second action would be the detection of hotspots for a community-level intervention; one might, for instance, find that students in a particular high school are at high risk for suicidal behavior. Third, data science tools could be used for detection at the individual level—say, of a student who is at the present moment experiencing suicidal ideation or a mental health crisis. Finally, the tools might be used for individual-level prediction—for example, finding that a specific student is more likely to attempt suicide in the coming month. In laying out the four possibilities, Simon emphasized that it is important to keep in mind the difference between detection and prediction: detection refers to uncovering something that is happening right now, such as someone at immediate risk of suicide, whereas prediction refers to foreseeing something that is likely to happen in the future. “It’s going to be important for us when we talk about these tools to say which of these jobs are we speaking of,” he concluded.

What can social media data bring to the table in terms of helping at-risk individuals and lowering the country’s growing suicide rates? Several speakers addressed that question with context-setting presentations that provided overviews of the issue and suggested ways to proceed.

Artificial Intelligence–Based Suicide Prediction

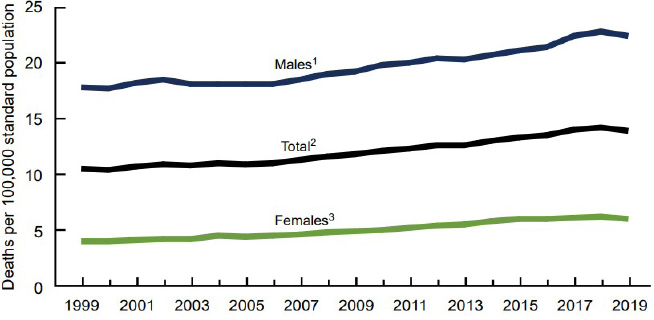

SOURCES: Mason Marks workshop presentation, April 28, 2022; Hedegaard et al., 2018.

NOTE: Age-adjusted death rates were calculated using the direct method and the 2000 U.S. standard population.

Mason Marks, the Florida Bar Health Law Section Professor of Law at Florida State University and senior fellow at the Petrie-Flom Center for Health Law Policy, Biotechnology, and Bioethics at Harvard Law School, described two types of AI-based suicide prediction approaches: medical suicide prediction and social suicide prediction. He said that suicide rates in the United States have been steadily increasing over the past 20 years (Figure 1),6 “so it’s clear that we are not doing as good a job as we could be or should be to reduce suicide rates.” Marks said that part of the problem is that historically, physicians have not been very good at predicting who will attempt suicide. Also, the methods available to physicians and others to predict suicide are often no more accurate, or only slightly more accurate, than chance. That is one of the reasons why the use of AI is exciting to people in this area, he said.

___________________

6 See https://www.cdc.gov/nchs/data/databriefs/db398-H.pdf (accessed September 19, 2022) for more up-to-date data.

Marks explained that medical suicide prediction is largely used for research at this stage. Moreover, it is typically undertaken by hospitals or health care systems, and it involves physicians and physician researchers or other medical scientists who have access to patient health records. This access allows them to know which patients attempted or died by suicide. Researchers can then train machine learning algorithms on those records to identify patterns and pick out words or phrases, including diagnoses or medications, that are more commonly associated with suicide than other words or phrases. They can then deploy the algorithms to find those data points in new patient cohorts and predict suicide risk in those populations. Because medical suicide prediction occurs within the health care system, it is typically subject to the Privacy Rule7 promulgated under the Health Insurance Portability and Accountability Act (HIPAA) and other regulations associated with clinical care and medical research. This kind of research is typically reviewed by an institutional review board to provide ethical oversight, and the results are often published in peer-reviewed medical journals, which helps contribute to the work’s scientific rigor and transparency.

___________________

7 See https://www.hhs.gov/hipaa/for-professionals/privacy/index.html (accessed August 22, 2022).
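As a rough illustration of the pattern-finding step described for medical suicide prediction, the following Python sketch scores how strongly each term in a set of labeled records is associated with an outcome, using a smoothed log-odds ratio. It is not the method used by any group mentioned at the workshop. The function name `term_log_odds`, the smoothing constant, and the placeholder tokens are assumptions made for illustration, and the records are synthetic.

```python
from collections import Counter
from math import log
from typing import Dict, Iterable, Sequence, Tuple


def term_log_odds(
    records: Iterable[Tuple[Sequence[str], bool]],
    smoothing: float = 1.0,
) -> Dict[str, float]:
    """Score each term by a smoothed log-odds ratio.

    Each record is (tokens, label); label is True when the outcome of
    interest was observed. Positive scores mean the term appears in a
    larger share of outcome-positive records than outcome-negative ones.
    """
    pos_counts: Counter = Counter()
    neg_counts: Counter = Counter()
    n_pos = n_neg = 0
    for tokens, label in records:
        unique_terms = set(tokens)  # count each term once per record
        if label:
            pos_counts.update(unique_terms)
            n_pos += 1
        else:
            neg_counts.update(unique_terms)
            n_neg += 1

    scores: Dict[str, float] = {}
    for term in set(pos_counts) | set(neg_counts):
        # Smoothing keeps both proportions strictly between 0 and 1.
        p = (pos_counts[term] + smoothing) / (n_pos + 2 * smoothing)
        q = (neg_counts[term] + smoothing) / (n_neg + 2 * smoothing)
        scores[term] = log(p / (1 - p)) - log(q / (1 - q))
    return scores


if __name__ == "__main__":
    # Synthetic records with placeholder tokens (not real clinical data).
    synthetic = [
        (["dx_a", "med_b"], True),
        (["dx_a"], True),
        (["med_b"], False),
        (["dx_c"], False),
    ]
    for term, score in sorted(term_log_odds(synthetic).items()):
        print(f"{term}: {score:+.2f}")
```

A score like this only ranks terms by association in the training records. It says nothing about causation, and any real system would need validation on new cohorts, as the paragraph above describes.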

By contrast, Marks said that social suicide prediction is usually undertaken by corporations, and instead of being used primarily for research, social suicide prediction will often trigger real interventions, such as sending the police to a person’s home for a wellness check. An example is Crisis Text Line,8 a company focused on young adults and adolescents that relies primarily on texting. Some companies, such as Gaggle and GoGuardian, focus on students and market their services to schools and school districts. Because these companies do not have access to medical records, they typically cannot train their algorithms using actual suicide data, so they end up using proxies for suicide data, particularly information pulled from social media and data produced when consumers use internet-enabled services. Companies analyze this information to make inferences about consumers’ health and other characteristics. Because these analyses are performed outside the health care system, they are not subject to the HIPAA Privacy Rule.

In addition, Marks said the use of social suicide prediction has generally not been reviewed by institutional review boards, and although companies might do some sort of internal review, there is generally a lack of transparency regarding their use of predictive algorithms. Furthermore, the algorithms are usually proprietary and companies may treat them as trade secrets. Perhaps most concerning, interventions triggered by the algorithms, such as wellness checks, carry a certain risk of harm to the individuals reported. Individuals may be hospitalized or otherwise detained against their will, or they may be medicated without their consent. Sometimes a violent confrontation with the police results, and in several cases, police carrying out wellness checks have shot and killed the people they were summoned to help. Confrontations with first responders can actually increase the risk of suicide, which means that these systems can sometimes do more harm than good, Marks said.

Medical suicide prediction carries certain risks as well—such as the possibility of stigmatizing someone who has contemplated suicide—but the risks are more concerning for social suicide prediction, Marks said, due to the lack of transparency and the fact that less is known about social suicide prediction methods (Barnett and Torous, 2019; Marks, 2019).

___________________

8 See https://www.crisistextline.org/ (accessed August 22, 2022).

Among the risks that are associated with both types of suicide prediction is the possible damage caused by inaccurate predictions. Both types of systems produce a large number of false negatives and false positives, Marks said. A false negative can leave a suicidal person without support, but false positives can also be quite dangerous. For example, if the fact that a person has been flagged by a social suicide prediction algorithm is leaked or shared, that person could be denied access to insurance, employment, or housing and may never know the reason why. People could also have their civil liberties violated, for example by being held against their will, because of a false-positive suicide prediction.
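To illustrate why false positives can dominate when the outcome being predicted is rare, the following Python sketch applies Bayes' rule to a hypothetical screening scenario. The sensitivity, specificity, prevalence, and population size are invented for illustration and were not presented at the workshop.

```python
from typing import Dict


def positive_predictive_value(
    sensitivity: float, specificity: float, prevalence: float
) -> float:
    """Share of flagged people who are truly at risk (Bayes' rule)."""
    true_pos = sensitivity * prevalence
    false_pos = (1.0 - specificity) * (1.0 - prevalence)
    return true_pos / (true_pos + false_pos)


def expected_counts(
    population: int, sensitivity: float, specificity: float, prevalence: float
) -> Dict[str, float]:
    """Expected confusion-matrix counts for a screened population."""
    at_risk = population * prevalence
    not_at_risk = population - at_risk
    return {
        "true_positives": sensitivity * at_risk,
        "false_negatives": (1.0 - sensitivity) * at_risk,
        "false_positives": (1.0 - specificity) * not_at_risk,
        "true_negatives": specificity * not_at_risk,
    }


if __name__ == "__main__":
    # Hypothetical values chosen only to show the base-rate effect.
    population, sens, spec, prev = 100_000, 0.80, 0.90, 0.005
    for name, value in expected_counts(population, sens, spec, prev).items():
        print(f"{name}: {value:.0f}")
    print(f"PPV: {positive_predictive_value(sens, spec, prev):.3f}")
```

Under these assumed values, about 9,950 people would be flagged in error while 100 at-risk people would be missed, and only about 4 percent of those flagged would actually be at risk. Real systems will have different numbers, but the arithmetic shows how both kinds of error can be large at once.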

Marks suggested several ways to improve AI-based suicide prediction methods. First, AI-based suicide prediction should be viewed as part of a larger system. For instance, if there are no safe and effective interventions that can be triggered once suicide risk is identified, the prediction algorithm should not be used until the treatment shortcomings are addressed. In the case of social suicide prediction, he said consumer consent should be sought and individuals should be allowed to opt in or opt out of the prediction systems employed by social media companies. Furthermore, he added that the prediction systems should be linked primarily to “soft touch” interventions rather than “firm hand” interventions—e.g., referring someone to mental health services instead of calling the police for a wellness check. Also, Marks said that social suicide prediction systems should be limited to research settings until there is greater transparency and more evidence to support their effectiveness. He concluded that first responders should be better trained in how to respond to mental health emergencies.

The Promise of Social Media Data

Nick Allen, a clinical psychologist and professor at the University of Oregon and the co-founder of Ksana Health, spoke about the differences between industry and academic settings in terms of innovation and knowledge generation and the implications of those differences for suicide prediction and prevention. Allen said that when thinking about digital technology and data science methods, it is essential to know that traditional research methods are greatly outpaced by changes in consumer behavior. He mentioned that a typical R01 grant funded by the National Institutes of Health generally takes about 7 years, maybe longer, from the conception of the project to its completion, but in the real world things tend to move much faster. There are many examples of such rapid technological changes over the past few decades, he said, pointing in particular to the adoption of mobile cellular and broadband technologies. He added that the use of technology services can change even more rapidly than the use of devices. “If you were doing research on social media use using traditional methods and traditional approaches,” he said, “you really would have a challenge adapting to this change.”

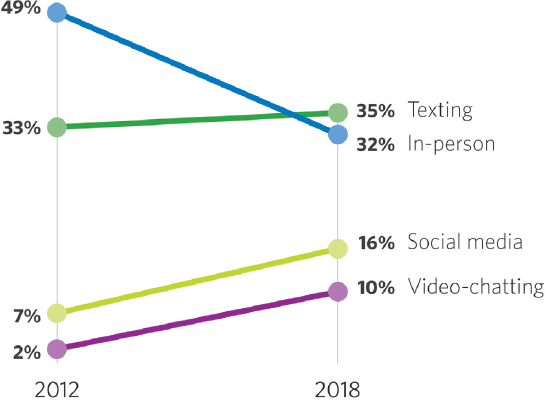

SOURCES: Nick Allen workshop presentation, June 30, 2022; Rideout and Robb, 2018.

With these changes in consumer technology use come changes in human behavior. Allen shared data to show the changes in the ways in which adolescents prefer to communicate (Figure 2) (Rideout and Robb, 2018). From 2012 to 2018 the percentage of 13- to 17-year-olds who said their favorite way to communicate with friends was in person dropped from 49 percent to 32 percent; the percentage of those identifying texting as the preferred communication method stayed about the same, while the percentages of those who named social media and video chatting grew sharply (7 to 16 percent and 2 to 10 percent, respectively). Allen emphasized that “we’ve got a change that’s occurring at a pace that’s really hard for traditional research methods to model.”

Meanwhile, over the past couple of decades there have also been major changes in the mental health landscape, particularly increases in mental health challenges among young people, including an increase in the suicide rate. Allen said that “this presents us with a situation where we clearly need to come up with models that adapt to these rapidly changing landscapes, both in terms of the epidemiology of mental health difficulties as well as the affordances of consumer behavior and consumer devices.”

Against this backdrop, Allen said, it is important to understand the strengths and weaknesses of traditional health research approaches versus business innovation approaches. The traditional health research approach emphasizes internal validity and the exclusion of alternative explanations, has a slow funding and research cycle, assumes a static environment in terms of the risk factors, and uses rigorous measures and methods. By contrast, the business innovation model has an emphasis on external validity (“If it sells, it’s working”), involves rapid iteration based on user-centered design processes (one of its goals is to “Fail fast”), and assumes a dynamic environment created by business competition and technological innovation. One weakness of the business innovation model is that there can be confusion between a product’s market fit and the actual health benefit or effectiveness of the product. People may assume that because a product sells, it must do what it is supposed to do, and that is not always the case. An advantage of this approach is that it focuses on feasibility, engagement, and usability, so a product that fails on one of those criteria will generally be abandoned quickly. Allen stressed that the two approaches have complementary strengths and weaknesses and that it is essential to leverage those complementary strengths to reach solutions more quickly.

Data of various types are central to a number of technology business models, such as subscription models (paying for access to data) and contextual and behavioral advertising (both of which use data to provide targeted advertisements). Allen said that in pursuing these core business models, many technology businesses collect large amounts of the types of data that could be used for suicide predictions. He added that there is both a business case and potentially a health use case for these same data. He said that in mobile computing, smartphones are used to collect data on a whole range of different kinds of behavior—including language, patterns of usage, circadian rhythms, autonomic physiology, geographic movement, acoustic voice quality, facial expressions, social interactions, physical activity, and sleep patterns—that research has shown are linked to mental health.

Turning to the issue of preventing suicide, Allen said that one of the most effective methods for preventing suicide is to intervene at moments of highest risk. He suggested two ways in which this could be done. The first is by reducing access to means—for example, by storing firearms and ammunition in a way that makes them more difficult to retrieve or by putting pills into blister packs rather than having them in bottles. The second is by providing support and diversion at high-risk moments with what are called just-in-time adaptive interventions (JITAIs). A JITAI is an intervention design that aims to provide support at a critical time point by adapting to the dynamics of an individual’s internal state and context, which is measured continuously (Nahum-Shani et al., 2018).

Allen offered a vision for the future of digitally enhanced mental health care, noting that it is critical to build systems that are flexible and allow users to get the right level of care and support for the different levels of needs they have in a seamless way. Mobile sensing, smart nudges, and communication tools could all play a major role in turning that vision into a reality, he concluded.

Social Media and Population-Level Digital Mental Health

Munmun De Choudhury, associate professor at the School of Interactive Computing at The Georgia Institute of Technology, spoke about efforts to proactively assess a person’s or a population’s risk of developing mental health challenges. De Choudhury noted that mental health disorders affect millions of people worldwide and are one of the leading causes of disability and death. She said that current approaches to predicting and preventing suicide often rely on clinical interviews, patient self-reports, family observations, and assessment scales, but these approaches are limited by the fact that they do not allow for frequent monitoring of risk for suicidality. The data are not collected frequently enough to monitor for important changes in people. Also, many people suffering from mental illness do not have easy access to health care or social services (Coombs et al., 2021). Furthermore, many mental health challenges are characterized by such things as negative perspectives, self-focused attention, a loss of self-worth and self-esteem, and social disengagement (Bravo et al., 2018; Wilburn and Smith, 2005). It is in filling these voids that social media can play a particularly important role, De Choudhury said.

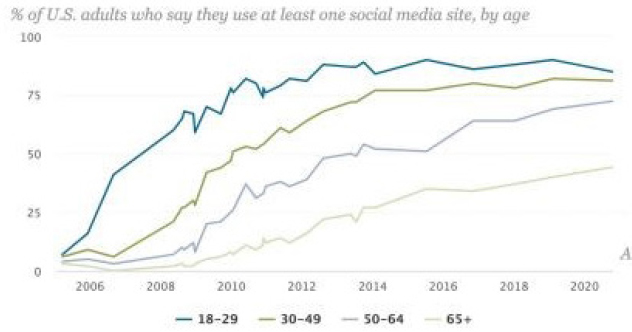

SOURCES: Munmun De Choudhury workshop presentation, April 28, 2022; Pew Research Center, 2021.

One of the advantages of applying social media to predicting suicide is that large and increasing percentages of youth and young adult populations are online (Figure 3) and using various social media applications. For younger people in particular, these applications are very much a part of their lives.

De Choudhury shared the findings of a study that explored the potential to use social media to detect and diagnose major depressive disorder in individuals (De Choudhury et al., 2013). The team recruited 476 people (243 male, 233 female) through Amazon’s Mechanical Turk, a crowdsourcing interface. The researchers asked participants various questions, including about diagnoses of clinical major depression, and rated them on two standardized scales of depression, the Center for Epidemiologic Studies Depression Scale9 and the Beck Depression Inventory.10 After getting consent to access the participants’ Twitter feeds, the researchers measured various aspects of those feeds related to their engagement, their emotions, and their linguistic styles. They created a predictive classifier that could determine which individuals might be at the highest risk, up to a year in advance of a reported onset of major depression.

With that classifier the team then developed a social media index that compared the frequency of standard Twitter posts with Twitter posts that were indicative of depression. The researchers compared the results of that index with actual reported measures of depression across the United States on a state-by-state basis and found relatively good agreement (a correlation of 0.52 according to a “least squares” regression fit). Thus, this index makes it possible to assess the rate of depression not only across geographies such as cities and states but also across demographics such as sex and across time. De Choudhury said that an advantage of this approach is that it makes it possible to make such assessments more efficiently and more frequently. Also, the approach could help determine which areas in the country have a shortage of mental health providers relative to the estimated need.
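The comparison of an index against reported rates can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not the study's actual procedure: the index definition in `depression_index` (depression-indicative posts per standard post), the region names, and all of the numbers are hypothetical.

```python
from math import sqrt
from typing import Sequence, Tuple


def depression_index(indicative_posts: int, standard_posts: int) -> float:
    """Hypothetical index: depression-indicative posts per standard post."""
    if standard_posts <= 0:
        raise ValueError("standard_posts must be positive")
    return indicative_posts / standard_posts


def least_squares_fit(
    xs: Sequence[float], ys: Sequence[float]
) -> Tuple[float, float, float]:
    """Return (slope, intercept, Pearson r) for a simple least-squares line."""
    n = len(xs)
    if n != len(ys) or n < 2:
        raise ValueError("need two equal-length samples with at least 2 points")
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    sxx = sum((x - mean_x) ** 2 for x in xs)
    syy = sum((y - mean_y) ** 2 for y in ys)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    if sxx == 0 or syy == 0:
        raise ValueError("both samples must vary")
    slope = sxy / sxx
    intercept = mean_y - slope * mean_x
    r = sxy / sqrt(sxx * syy)
    return slope, intercept, r


if __name__ == "__main__":
    # Made-up (indicative posts, standard posts, reported rate) per region.
    regions = {
        "region_a": (120, 4000, 6.1),
        "region_b": (95, 3800, 5.2),
        "region_c": (210, 5000, 7.4),
        "region_d": (60, 3000, 5.9),
    }
    index = [depression_index(i, s) for i, s, _ in regions.values()]
    reported = [rate for _, _, rate in regions.values()]
    slope, intercept, r = least_squares_fit(index, reported)
    print(f"slope={slope:.2f} intercept={intercept:.2f} r={r:.2f}")
```

The correlation coefficient `r` returned here is the kind of agreement measure the paragraph above reports; a value near 1 would indicate that the index tracks the reported rates closely across regions.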

De Choudhury also described a study aimed at predicting when an individual will start talking about suicide in an online forum (De Choudhury et al., 2016). Working with conversations on Reddit, the researchers focused on individuals speaking about and seeking support for mental illnesses and suicidal behavior. Looking backward at the conversations over time, the researchers looked for various attributes in past conversations that might indicate a transition to talking about suicide. Their analyses found that various linguistic cues were associated with either an increased or decreased risk of suicidal ideation in the future. For instance, the transition to suicidal ideation was found to be associated with heightened attentional focus, poor linguistic coherence and coordination with the community, reduced social engagement, signs of hopelessness, and anxiety, among other factors. These cues were shown to have predictive power in identifying which individuals would go on to engage in suicide ideation discussions in the future.

___________________

9 See https://cesd-r.com/ (accessed August 22, 2022).

10 See https://www.apa.org/pi/about/publications/caregivers/practice-settings/assessment/tools/beck-depression (accessed August 23, 2022).

De Choudhury cautioned that not everybody is on social media, and thus social media needs to be thought of as part of a broader data ecology and to be applied in the context of other conventional data sources. She emphasized that social media can be used to augment existing and conventional data sources in the context of suicide prevention. She described a recent study where researchers developed ML models to estimate weekly suicide fatalities in the United States (Choi et al., 2020). The researchers combined suicide-relevant data streams from social media or the web with health services data streams from clinical sources, such as data on emergency department visits, and also micro-economic data. “Our idea was to harness the strengths of each of these data sources using ML, and then take those individualized predictions of weekly suicide fatalities to combine them intelligently with a single composite score,” De Choudhury said. With this model the researchers made forecasts of weekly suicide death counts, and these predictions were significantly better than those made using models that rely on only one or two data sources. The value of such an ability is that it can provide real-time numbers for suicide rates as opposed to the currently available suicide statistics, which are typically 1 to 2 years behind.
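One simple way to combine per-source predictions into a single composite estimate is to weight each source by how well it has tracked past observations. The Python sketch below uses inverse mean-absolute-error weighting, which is an assumption chosen for illustration and is not claimed to be the combination method used in the study. The source names and numbers are hypothetical.

```python
from typing import Dict, Mapping, Sequence


def mean_absolute_error(predicted: Sequence[float], actual: Sequence[float]) -> float:
    """Average absolute difference between predictions and observations."""
    if len(predicted) != len(actual) or not actual:
        raise ValueError("need equal-length, non-empty sequences")
    return sum(abs(p - a) for p, a in zip(predicted, actual)) / len(actual)


def inverse_error_weights(
    history: Mapping[str, Sequence[float]], actual: Sequence[float]
) -> Dict[str, float]:
    """Weight each data source by the inverse of its past error.

    Weights are normalized to sum to 1; a small constant avoids
    division by zero for a source with a perfect track record.
    """
    inverse = {
        name: 1.0 / (mean_absolute_error(preds, actual) + 1e-9)
        for name, preds in history.items()
    }
    total = sum(inverse.values())
    return {name: value / total for name, value in inverse.items()}


def composite_forecast(
    latest: Mapping[str, float], weights: Mapping[str, float]
) -> float:
    """Weighted average of the latest per-source predictions."""
    return sum(weights[name] * latest[name] for name in weights)


if __name__ == "__main__":
    # Hypothetical past weekly predictions per source and observed counts.
    observed = [900.0, 920.0, 910.0]
    history = {
        "social_media": [880.0, 940.0, 905.0],
        "emergency_visits": [905.0, 915.0, 912.0],
        "economic": [850.0, 960.0, 930.0],
    }
    weights = inverse_error_weights(history, observed)
    latest = {"social_media": 925.0, "emergency_visits": 918.0, "economic": 940.0}
    print({name: round(w, 3) for name, w in weights.items()})
    print(round(composite_forecast(latest, weights), 1))
```

The design choice here is that sources with smaller past errors contribute more to the composite, so no single stream has to be accurate on its own.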

De Choudhury posed three questions for further research: (1) What evidence is needed to show that these sorts of prediction approaches are ready for real-world use? (2) How can models be supported in those inevitable cases of failure, given that ML algorithms are never going to be perfect? (3) What can be done to address the questions of social justice that will arise with the use of social media–based suicide prediction and to make sure that the new approaches do not exacerbate existing inequities?

Working with Social Media Data to Predict Suicide Risk at the Individual and Population Levels

Kenton White, co-founder and chief data scientist at Advanced Symbolics, an AI company in Ottawa, Canada, said that suicide, unlike many other health conditions, is almost entirely preventable, yet many lives are lost to suicide every year. He listed three reasons for this. First, there is a social stigma associated with suicide, so many at-risk people do not want to talk about it. Second, it is difficult to screen for at-risk people who are not already getting help from a mental health professional. Third, the resources for addressing suicide are very limited. He said, for example, “anyone that has tried to get mental health help during COVID-19 realizes that there’s a shortage of mental health professionals right now, and there’s a waiting list for people who want to get help.”

White said that a major problem is finding where to put limited resources so that help can be provided where it is needed. Social media might offer a solution, he added, saying that “we have got websites where people are talking about what they are feeling, what they are doing, in real time, and we could be using such online platforms to help with screening and get the resources where they are needed.” (Online screening is discussed in Box 3.)

White said that many social media companies are willing to help. However, they are concerned about the legal ramifications of providing information on individuals. For this reason, Advanced Symbolics is looking to apply a scientific approach to help at a population level. White said that one thing that sets the work of Advanced Symbolics apart from other work with social media data is that it starts with a statistical sample, which makes its research more like medical research, where samples are generally representative of the population. Furthermore, it is looking beyond individual posts to get information on an individual’s entire history to provide historical context for what is happening in the present.

White said that the Advanced Symbolics team is collaborating with clinicians to complement the insights from social media. When the team’s algorithm identifies someone who might be depressed or experiencing a precursor for self-harm, Advanced Symbolics shares the information with their clinician partners. The clinician partners review the entire history and inform the Advanced Symbolics team if they are seeing any clinical patterns that could indicate risk of self-harm. White said that from this work, the team has learned that they should not only be looking for keywords in people’s social media posts but also pay attention to posting patterns. For example, insomnia is one sign that a person may be dealing with mental health issues, and if an individual begins making more posts late at night, it could be a sign of increased risk. Other things they look for are people disconnecting from their social networks and communities without any explanation or friends asking whether they are okay. White added that other factors that are predictive of self-harm include a history of substance misuse or physical abuse or trauma. He noted that all this information could be obtained from the entire history of a person, not just a single post.
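The idea of watching posting patterns rather than keywords can be sketched as a comparison of late-night posting share between two time windows. This Python example is illustrative only and does not represent the Advanced Symbolics algorithm. The late-night window (midnight to 5 a.m.), the function names, and the assumption that timestamps are already in the user's local time are all hypothetical choices.

```python
from datetime import datetime
from typing import Sequence


def late_night_share(
    timestamps: Sequence[datetime], start_hour: int = 0, end_hour: int = 5
) -> float:
    """Fraction of posts made between start_hour (inclusive) and end_hour (exclusive).

    Assumes timestamps are in the poster's local time.
    """
    if not timestamps:
        return 0.0
    late = sum(1 for ts in timestamps if start_hour <= ts.hour < end_hour)
    return late / len(timestamps)


def late_night_shift(
    baseline: Sequence[datetime],
    recent: Sequence[datetime],
    start_hour: int = 0,
    end_hour: int = 5,
) -> float:
    """Change in late-night posting share from a baseline window to a recent one."""
    return late_night_share(recent, start_hour, end_hour) - late_night_share(
        baseline, start_hour, end_hour
    )


if __name__ == "__main__":
    # Synthetic timestamps for one hypothetical account.
    baseline = [
        datetime(2022, 1, 1, 2),
        datetime(2022, 1, 1, 10),
        datetime(2022, 1, 2, 14),
        datetime(2022, 1, 3, 20),
    ]
    recent = [
        datetime(2022, 2, 1, 1),
        datetime(2022, 2, 2, 3),
        datetime(2022, 2, 3, 4),
        datetime(2022, 2, 4, 12),
    ]
    print(f"shift in late-night share: {late_night_shift(baseline, recent):+.2f}")
```

A shift like this would be one signal among several, such as the disconnection from social networks that White mentioned. On its own it could reflect a new work schedule or travel, which is part of why clinician review of the full history matters.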

White said that the idea behind the work is to construct a sample that is nationwide and includes not just social media posts but also as much additional information and history as possible to create a predictive index (Vogel, 2018). He said that when his team did this in 2016–2017, it had success with showing correlations between the index and increased suicide rates, and it was slated to begin a program with the Public Health Agency of Canada.11 The goal was to move beyond the current situation of waiting a year or two for data on suicide rates and to be able to identify suicide hotspots before they happen. White noted that the nationwide project unfortunately did not launch because of heightened concerns about the potential misuse of data, such as the Facebook–Cambridge Analytica data scandal in which a data analytics company harvested data from millions of Facebook users to influence American voters.12

White said that his team has learned valuable lessons from the experience, however. The first is that any such research really has to be done “from a position of trust.” With that in mind, the team is now working with Indigenous communities in northern Canada, through partners who have established trust with the communities by working with them over many decades on mental health issues. He said that at this point, however, the study is not far enough along to have generated results.

___________________

11 See https://www.mobihealthnews.com/content/canada-will-use-ai-monitor-suicidal-social-media-posts (accessed August 29, 2022).

12 See https://www.theguardian.com/news/2018/mar/17/cambridge-analytica-facebook-influence-us-election (accessed August 19, 2022).

Ethical and Pragmatic Suggestions to Improve the Ability to Identify Individuals and Populations at Risk for Suicide

Glen Coppersmith, chief data officer at SonderMind, offered what he referred to as “ethical and pragmatic” suggestions for improving the ability to identify individuals, groups, communities, and populations at risk for suicide. Coppersmith said that technology companies have access to meaningful data around suicide risk that could be useful for entities trying to prevent suicide. He urged technology companies—working individually or in consortia—to make aggregated data feeds accessible to trusted parties. He underscored the importance of technology companies working with these trusted parties to offer training resources to the caregivers of those at risk of suicide, noting that these companies have insights into the people who are at risk and what might help their caregivers have greater success in caring for them.

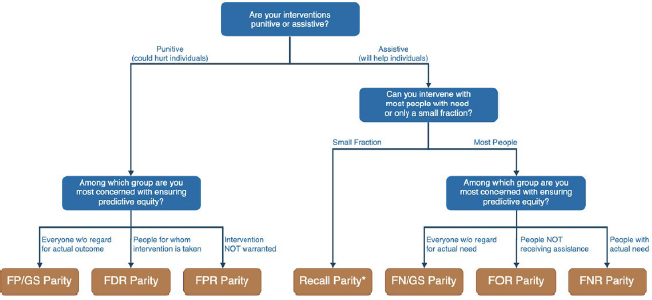

Coppersmith shared a figure to illustrate the sort of data that social media companies have available to them (Figure 4) (Coppersmith, 2022). The hashes on the top represent one person’s interaction with the health care system over the course of many years, with each hash indicating an encounter with a clinician or an emergency room. These interactions are traditionally where health care providers get information about the individual’s mental health and well-being. By contrast, the hashes along the bottom represent all of the times the individual posted on a single social media site. “There’s a lot of information that is being generated in the time in between the health care visits,” Coppersmith emphasized. Coppersmith said his team has built ML algorithms that can take social media data and make predictions about suicide risk or other mental health issues in between those interactions with the health care system—“filling in the gaps,” as he termed it.

Coppersmith suggested three guiding assumptions when working with social media data to predict suicide risk. The first is that technology companies have—or could have—the ability to identify at least some of the people at risk for suicide based on their behavior. Second, technology companies also have the ability to group users into meaningful cohorts, such as veterans, health care workers, or geographic area. Third, however, the organizations that have the information about suicide risk—that is, the technology companies—are not the organizations with the capabilities to prevent suicide. “They’re not health care organizations,” he said. He added that many of these companies can only act within the constraints of their brand and legal departments.

SOURCES: Glen Coppersmith workshop presentation, June 30, 2022. Coppersmith, 2022.

Coppersmith said that it is helpful to think of suicide-related information in terms of three spectrums. The first spectrum is the timeline before a mental health crisis develops. At one end of that spectrum is information from the moment of crisis itself, while on the other end is a stable situation, such as the social determinants of health. Where the information falls on the spectrum will influence how it can be used and what sort of interventions it can inform. The second spectrum is the level of aggregation, from a single individual on one end to all humans on the other, with groups of individuals in the middle. Where information falls on that spectrum will inform the particular risk. The third spectrum is the fidelity of the information. On one end of that spectrum is information that is fully calibrated, with meaningful data points that are important and correct in some way that will allow an action to be taken. On the other end of that spectrum is directional information that has some value but cannot be acted upon by itself.

Most of the interventions that people think about in terms of how social media data might be used to prevent suicide involve information at the far end of these spectrums: individualized data from the moment of crisis that is precise and highly calibrated. This is the sort of ideal data a team of mental health professionals could use to intervene in a moment of crisis. However, Coppersmith said, attaining such information is not very pragmatic for a host of reasons, in large part because technology companies are unwilling to release such sensitive information. This raises the question of what technology companies can do with this information, such as aggregating it and adjusting it in various ways, so that they can hand off the modified information to mental health professionals for use in suicide prediction and prevention. Coppersmith suggested that one approach could be to group data from a number of individuals, which would address many of the privacy concerns while still providing useful information. This is the approach taken by the U.S. Census, for example.
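The grouping idea can be illustrated with a short sketch. It is not a description of any company's pipeline: the function name `aggregate_by_cohort`, the record format, and the minimum group size of 10 are all hypothetical, and suppressing small groups is an assumed safeguard added here for illustration rather than something described at the workshop.

```python
from collections import defaultdict

# Hypothetical cutoff: groups smaller than this are withheld entirely,
# because a summary over very few people can point back to individuals.
MIN_GROUP_SIZE = 10


def aggregate_by_cohort(records, min_group_size=MIN_GROUP_SIZE):
    """Summarize (cohort, risk_score) pairs into per-cohort counts and means.

    Returns a dict mapping each cohort to {"n": ..., "mean_risk": ...},
    or to None when the cohort is too small to report.
    """
    scores_by_cohort = defaultdict(list)
    for cohort, score in records:
        scores_by_cohort[cohort].append(score)

    summary = {}
    for cohort, scores in scores_by_cohort.items():
        if len(scores) < min_group_size:
            summary[cohort] = None  # suppressed: too few people to share safely
        else:
            summary[cohort] = {
                "n": len(scores),
                "mean_risk": sum(scores) / len(scores),
            }
    return summary


# Made-up example data: 12 people in one cohort, 3 in another.
records = [("veterans", 0.25)] * 12 + [("health care workers", 0.5)] * 3
summary = aggregate_by_cohort(records)
print(summary)
```

Only the group-level summary leaves the function; no individual score is passed along, and the three-person cohort is withheld altogether.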

In a similar vein, during the COVID-19 pandemic Coppersmith and colleagues tracked social media postings from a cohort of users that represents the general U.S. population and another cohort that represents health care professionals, and used a natural-language processing algorithm to calculate the estimated suicide risk for the two populations (Fine et al., 2020). “This gave us a chance to draw some conclusions about how the COVID-19 pandemic, as it was unfolding, was affecting mental health professionals, and gave us some leading indicators to some problems that we have all now seen play forward,” he said.

A combination of willing partners in the technology industry and willing public entities and health care entities could work together to make such things happen, he said. There are many nonprofit groups and public sector groups that would work to prevent suicides among certain groups at certain times if only they had the information about when and where the risks actually are occurring. Furthermore, there are plenty of researchers who, if they had access to aggregated data of the correct sort, would find ways to inform policy decisions and resource allocations. In conclusion, Coppersmith urged technology companies that are interested in preventing suicide to make aggregated data available to trusted parties that take care of people at risk of suicide.

Learning from Search Engine Data

Elad Yom-Tov, senior principal researcher at Microsoft Research, discussed ways that search engine data can be used to study issues in health and medicine and also to nudge people to better health practices. Yom-Tov said that search engines such as Bing, which Yom-Tov’s team works with, or Google can provide information and insights that almost no other data source can provide. He offered several examples of how such data can be useful at the population level.

Yom-Tov said that, first, search engine data can provide temporal resolution of individual behaviors. People ask questions on Bing many times a day (sometimes 20, 30, or even 50), and although each individual is anonymous, it is possible to follow an individual’s search engine queries over time and know with high probability that all the queries came from one person. A second advantage of search engines is the truthfulness of the supplied data. Because a search engine is an anonymous platform, people do not have to identify themselves as they do for other social media platforms. Yom-Tov said, for example, that Google Trends13 can be used to analyze the popularity of the top search queries in Google Search across various regions and languages. The third advantage of search engines is that they can provide rich data, because they are able to collect a lot of data that is typically not obvious. A fourth advantage of search engine data is access: because the vast majority of internet users use search engines, it is possible to get data covering most of the population, including people from all different segments of society.

___________________

13 Google Trends is a tool for showing the volume of searches on Google across time and geography. See https://trends.google.com/trends/?geo=US (accessed August 19, 2022).

Yom-Tov offered a suicide-related example of what could be done with the data. The Werther Effect refers to the observation that when there is a publication about a high-profile suicide, it increases the risk that other people will decide to kill themselves as well (Yom-Tov and Fischer, 2017). This has been documented at country levels, but Yom-Tov observed that search engine data would allow for a much finer resolution. He shared data on a study to assess the effect of news stories on the intentions of internet users. The researchers analyzed search engine data to determine which phrases and words were more likely to cause an increase in suicides and which ones would decrease it. The result was a model that can predict whether a user report about a suicide will increase or reduce the number of suicides or not affect it at all.

Another study looked at suicides in India, which are often stigmatized and so likely to go unreported (Adler et al., 2019). The researchers compared official government statistics on suicide in the different states of India with search data on people asking about suicide methods. The researchers found a correlation between the two sets of numbers, but in some states the official suicide numbers were significantly lower than would be expected from the search engine data. The implication is that in these states there is underreporting of suicides because of the stigma.

Moving from the population level to the individual level, Yom-Tov said that when an individual searches for information on suicide, Bing will put a banner at the top of the search results with a link to a support help line. The particular banner depends upon keywords in the search and on the user’s location. Beyond suicide, in some cases it is possible to identify particular medical issues that the person may have (such as cancer or a mental health crisis) and provide helpful links relating to those as well. Using mouse-tracking data, the researchers were able to find that when people who had asked questions about suicide were presented with a page of results including a banner with a help link at the top, they spent much of their time looking at the help line number. This is encouraging, Yom-Tov said, but it is still not clear from these data whether presenting these individuals with such a banner actually changes their behavior.

Yom-Tov said that it is difficult to conduct experiments that test the effectiveness of such banners in changing behavior for both regulatory and ethical reasons. However, it is possible to analyze “natural experiments” that shed light on the issue. Moreover, there are several possibilities, he said, including training advertisement systems to offer advertisements for helpful sites to people who are contemplating suicide. Such targeting systems can be trained to be very specific so it is possible they could offer help to people well before someone is at the point of no return and looking for ways to kill themselves. Again, Yom-Tov cautioned that a variety of ethical issues arise in this sort of approach (Yom-Tov and Cherlow, 2020).

CURRENT OFFLINE APPROACHES TO PREDICTING AND PREVENTING SUICIDE

Several speakers spoke about current offline approaches to suicide prevention based on data that can be collected from tools including electronic health records, hospital records, and demographic databases.

Best Practices from the Department of Veterans Affairs

Lisa Brenner, professor of physical medicine and rehabilitation, psychiatry, and neurology at the University of Colorado, Anschutz Medical Campus, and the director of the Department of Veterans Affairs Rocky Mountain Mental Illness Research, Education, and Clinical Center, presented an overview of the Department of Veterans Affairs (VA)/Department of Defense (DoD) Clinical Practice Guidelines14 for the assessment and management of patients at risk for suicide. Brenner said that the viewpoints expressed in her presentation do not necessarily reflect the views of the VA or DoD. She also cautioned against treating the VA/DoD Clinical Practice Guidelines as the final word because much more needs to be done in the context of clinical care in terms of identifying those at risk of suicide and specifying how best to intervene.

Brenner said that the most recent version of the VA/DoD Clinical Practice Guidelines, released in 2019, offers 22 evidence-based recommendations. Five of those recommendations are related to screening and evaluation for suicide risk. She said that when evaluating patients, it is recommended that risk factors be assessed as part of a comprehensive evaluation of suicide risk, including such things as current suicidal ideation, previous suicide attempts and psychiatric hospitalizations, current psychiatric conditions and symptoms, and the availability of firearms.

Brenner said that VA clinicians apply a two-stage screening process. First, they use the Columbia Suicide Severity Rating Scale15 to detect who may be at risk for suicide and in need of further evaluation. Second, they use the VA Comprehensive Suicide Risk Evaluation16 to inform clinical impressions about acute and chronic risk and the patient’s disposition. Brenner noted that the process is implemented in a clinical setting in which false positives or negatives can be addressed. “We would not only depend on the screener. We would have lots more information based on the way the patient is presenting, based upon their history, perhaps based upon what their family members are reporting, and we would be able to use that in addition to the screener and then be able to complete the evaluation,” she added.

___________________

14 See https://www.healthquality.va.gov/guidelines/mh/srb/ (accessed August 23, 2022).

15 See https://www.hrsa.gov/behavioral-health/columbia-suicide-severity-rating-scale-c-ssrs (accessed September 16, 2022).

There are a number of potential weaknesses to this approach, she said. For instance, some individuals may be uncomfortable communicating emotional distress to health care providers or even to friends or family. Some of them may be more comfortable communicating about these issues online. Also, health care providers may not see a patient often enough to ask about suicide at the most important time. Furthermore, health care providers may not feel comfortable asking about suicide.

Brenner highlighted several observations on the use of social media algorithms to predict suicide risk based on her VA experiences. She said that there are differences between how different cohorts use social media, noting that it is essential to incorporate those differences into social media algorithms. Also, research has found that individuals at different geographic locations post differently about suicidal behavior; therefore, social media algorithms should take geographic location into account (Morese et al., 2022). It would also be important to discover how various factors—age, sex, race, ethnicity, cultural background, primary language, and so on—affect what people post and influence the algorithms used to assess risk. She also reiterated the legal and ethical issues, and wider implications concerning suicide risk detection and interventions in social media platforms (Celedonia et al., 2021). Intervening based on limited data, for example, raises a variety of both ethical and legal concerns. Consent is another important issue, she added. How much do people on social media platforms understand about how their data may be used? Should those data be shared with family members or care providers? How should an individual’s privacy and autonomy be taken into account when considering an intervention, and how can patient preferences best be considered?

Brenner mentioned a number of knowledge gaps that affect the VA/DoD Clinical Practice Guidelines and that likely also apply to the use of social media algorithms for suicide prediction and prevention. One is the issue of identifying acute versus chronic risk. Another is determining whether risk stratification is actually reliable and valid in assessing risk. Concerning evaluation, there are questions about the extent to which screening and evaluation should be connected and about the most appropriate settings in which to do evaluations. These are all challenging questions, she concluded.

___________________

16 See https://www.mirecc.va.gov/visn19/cpg/recs/3/resources/VA-CSRE-Printable-Worksheet.docx (accessed September 16, 2022).

Suicide Prediction and Prevention in the Military Health System

Dan Evatt, who leads a research team at the Psychological Health Center of Excellence, provided an overview of identification and management of suicide risk in the Military Health System (MHS) and discussed analytic and practical considerations related to implementing suicide analytic screening in the MHS. Evatt said that in 2020 the suicide mortality rate was 28.7 deaths per 100,000 active service members. Evatt said that in the MHS, suicide risk can be identified either by a health care provider or through an algorithm, and in either case the suicide risk is classified as low, intermediate, or high. Based on the assessment, there are various management and care pathways that can be chosen. Evatt added that the goal is to use the best science and tools to determine the risk and the pathways.

Evatt said that prediction models for suicide attempts and deaths are improving. The ML models are more accurate in predicting suicide ideation, attempts, and deaths compared to theoretically driven models (Schafer et al., 2021), and they perform better than traditional methodology (e.g., multiple logistic regression) and clinician-based prediction (Bernert et al., 2020; Burke et al., 2019). Furthermore, health care systems have started to use some of these new models. For example, the VA recently rolled out the Recovery Engagement and Coordination for Health—Veterans Enhanced Treatment or REACH VET, which increased outpatient encounters and resulted in a 5 percent decrease in documented suicide attempts (McCarthy et al., 2021).

Evatt spoke about a simulation of suicide predictive models that his team ran in the MHS. The analysts wanted to know how many at-risk individuals the models would identify, how many of those people would die by suicide, and how many would be false positives. Using estimates of performance based on published research, the analysts were also interested in how good an intervention would have to be in order for a predictive model to lead to a meaningful reduction in suicides in the MHS. The analysts identified high risk by using varying thresholds of the 99.9th percentile risk of suicide, the 99th percentile risk, and the 90th percentile risk. The model assumed that individuals over a particular risk threshold would be provided with some type of suicide prevention services.

The analysts found that if they chose the highest risk threshold, the interventions resulted in a very small reduction in the number of deaths by suicide because even though the people above the threshold were at a higher risk of suicide, the number of people in that top 0.1 percent who would die by suicide was actually very small. Most of the people who would die by suicide were outside the top 0.1 percent because even though those people were lower risk, there were so many more of them.

So why not lower the risk threshold? The problem, Evatt said, is that then the number of false positives jumps up because more people are being considered. The combination of considering more people and getting more false positives means that the number of false positives skyrockets as the threshold drops. And if tens of thousands of people are getting prevention interventions that they do not actually need, that is an “untenable situation,” he said. There is a “tough trade-off” between providing interventions to so many people that there are huge numbers of false positives getting interventions that they do not need, or having a negligible effect on the total number of suicides.
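The trade-off Evatt described can be made concrete with some back-of-the-envelope arithmetic. The sketch below is only an illustration and is not the MHS simulation: the population size, the share of deaths captured at each threshold, and the intervention efficacy are hypothetical values chosen for the example. Only the rate of 28.7 deaths per 100,000 comes from the presentation.

```python
from dataclasses import dataclass


@dataclass
class ThresholdResult:
    flagged: int            # people above the risk threshold
    true_positives: float   # expected deaths by suicide among those flagged
    false_positives: float  # flagged people not expected to die by suicide
    deaths_averted: float   # expected deaths prevented by the intervention


def evaluate_threshold(population, rate_per_100k, flagged_fraction,
                       share_of_deaths_captured, efficacy):
    """Expected outcomes of intervening on everyone above one risk threshold."""
    expected_deaths = population * rate_per_100k / 100_000
    flagged = round(population * flagged_fraction)
    true_positives = expected_deaths * share_of_deaths_captured
    false_positives = flagged - true_positives
    deaths_averted = true_positives * efficacy
    return ThresholdResult(flagged, true_positives, false_positives, deaths_averted)


POPULATION = 1_000_000  # hypothetical population size
RATE_PER_100K = 28.7    # 2020 rate cited by Evatt
EFFICACY = 0.25         # hypothetical: intervention prevents 1 in 4 deaths

# Hypothetical model performance: fraction of the population flagged ->
# share of all deaths by suicide that fall inside the flagged group.
SCENARIOS = {0.001: 0.03, 0.01: 0.15, 0.10: 0.50}

results = {
    fraction: evaluate_threshold(POPULATION, RATE_PER_100K, fraction, share, EFFICACY)
    for fraction, share in SCENARIOS.items()
}

for fraction, r in results.items():
    print(f"top {fraction:.1%}: flagged={r.flagged:,} "
          f"false positives={r.false_positives:,.0f} "
          f"deaths averted={r.deaths_averted:.1f}")
```

Under these made-up inputs, the strictest threshold flags 1,000 people and averts roughly 2 expected deaths, while the loosest flags 100,000 people (about 99,900 of them false positives) and averts roughly 36. Different assumptions would change the numbers but not the shape of the trade-off.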

Evatt also described some VA and DoD programs aimed at service members and veterans who are at high risk of suicide. One of the biggest barriers to getting these people the help they need is the stigma associated with mental health issues. Therefore, many of the programs aim at reducing that stigma or finding ways for people to get information and help without worrying that their help-seeking will become known to people outside the health care system. “We want to make it easier for people to get into care. We want to give them education and information,” he added. For example, the Real Warriors Campaign17 promotes a culture of support for psychological health by encouraging members of the military community to reach out for help whether they are coping with the daily stresses of military life or other psychological concerns. Evatt emphasized that “we want to improve access to care. We want to break down those barriers and really what we are doing is directing service members to the resources they need.”

Alternative Crisis Response

John Franklin Sierra, health systems engineer in the Los Angeles County Alternatives to Incarceration Office, described that county’s alternative crisis response efforts, which are intended to be used in crisis cases when law enforcement does not need to be involved.

One goal of the efforts is to have as many people as possible use the 988 Suicide & Crisis Lifeline18 (formerly known as the National Suicide Prevention Lifeline), instead of dialing 911. As a result of the National Suicide Hotline Designation Act passed in 2020, Congress designated 988 as a new nationally available suicide and mental health crisis hotline. In July 2022 the 988 Suicide & Crisis Lifeline officially went live across the United States.19 Sierra said that there will still be some 988 calls that require a 911-level response, either from law enforcement or emergency medical services. But the aim has been to get 988 accepted as the default number and to only opt into a higher-level response from 911 when there is a clear public safety threat or a clear medical emergency. Otherwise, crisis calls are triaged through 988 and civilian services.

___________________

17 See https://www.health.mil/Military-Health-Topics/Centers-of-Excellence/Psychological-Health-Center-of-Excellence/Real-Warriors-Campaign (accessed August 23, 2022).

18 See https://988lifeline.org/ (accessed August 21, 2022).

Sierra explained that in triaging the 988 calls, a request for help is classified into one of four levels. Level 1, the least critical, includes calls where there is no crisis or the crisis is resolved with something like a referral to a treatment provider. Level 2 calls require immediate remote support through, for example, a transfer to a suicide prevention hotline. Level 3 calls require in-person support such as what could be provided by emergency medical services (EMS) or a psychiatric mobile response team, but the public is not in immediate danger, and law enforcement is not involved. Level 4 calls involve crimes or an immediate threat to public safety, and law enforcement is dispatched. “The hope with this kind of system,” Sierra said, “is that we can reliably push calls to 988 and to civilian mobile crisis responses as much as possible and only rely on 911 law enforcement and EMS when there is a clear public safety threat or medical emergency reason.”
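The four levels Sierra described can be written down as a small lookup table. This is an illustrative sketch only: the names `CrisisLevel`, `RESPONSES`, `response_for`, and `law_enforcement_dispatched` are hypothetical, and the code does not attempt to decide which level a call belongs to, which remains the job of trained responders.

```python
from enum import IntEnum


class CrisisLevel(IntEnum):
    """Triage levels as summarized in the presentation (1 = least critical)."""
    NO_CRISIS_OR_RESOLVED = 1
    IMMEDIATE_REMOTE_SUPPORT = 2
    IN_PERSON_SUPPORT = 3
    PUBLIC_SAFETY_THREAT = 4


# Example responses paraphrased from the presentation summary.
RESPONSES = {
    CrisisLevel.NO_CRISIS_OR_RESOLVED: "referral to a treatment provider",
    CrisisLevel.IMMEDIATE_REMOTE_SUPPORT: "transfer to a suicide prevention hotline",
    CrisisLevel.IN_PERSON_SUPPORT: "EMS or psychiatric mobile response team",
    CrisisLevel.PUBLIC_SAFETY_THREAT: "law enforcement dispatched",
}


def response_for(level):
    """Return the example response for a level already assigned by a human."""
    return RESPONSES[CrisisLevel(level)]


def law_enforcement_dispatched(level):
    """Only level 4 (crime or immediate public safety threat) involves police."""
    return CrisisLevel(level) is CrisisLevel.PUBLIC_SAFETY_THREAT


for level in CrisisLevel:
    print(level.value, level.name, "->", response_for(level))
```

Keeping the levels ordered (as `IntEnum` does) would also make it straightforward to count how many calls were resolved at each level when monitoring the system.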

The performance of the system will be monitored to see where improvements need to be made. For example, the number of 911 calls that can be diverted to 988 will be tracked, and the performance will be gauged against a certain standard. Counties will also be watching to see whether people who need an in-person mobile crisis response from a civilian team actually get that response within a reasonable amount of time. And the data will include information on such personal characteristics as age, sex, race and ethnicity, and geography. “Providing equitable access to these services is of primary importance in the county,” Sierra commented. “We know we need to keep track of data accordingly and ensure we are actually meeting those equity goals,” he added.

Sierra also spoke about the role of social media in the alternative crisis response system. He said that because the people answering 988 crisis hotlines are trained to de-escalate crises and will only engage 911 when there is a clear public safety threat or medical emergency, it makes sense that if social media algorithms are going to provide links to crisis services, those algorithms must link to 988 by default. The algorithms should only link to 911 as a last resort if 988 cannot be reached. Sierra said that social media algorithms used to determine whether a person is in crisis will always have limits and potential biases.

___________________

19 See https://www.samhsa.gov/find-help/988 (accessed August 23, 2022).

He suggested that, ultimately, a human being trained in culturally competent triage and de-escalation needs to intervene as soon as possible. “We know that there are many different ways in which people’s crises manifest, and if they are not triaged competently, they could lead to an unnecessary 911 escalation,” Sierra emphasized.

Jonathan Goldfinger, a pediatrician, intergenerational trauma researcher, and former chief executive officer of Didi Hirsch Mental Health Services,20 said that Didi Hirsch is among the largest providers on the 988 Suicide & Crisis Lifeline. The organization helped develop strategic plans for California in preparation for the projected 988 infrastructure needs, volume growth, and other needs for equitable access to the Lifeline. They also helped with co-designing and testing the 911 to 988 diversion algorithms with Los Angeles County’s law enforcement and EMS, as described by Sierra. Goldfinger and Didi Hirsch collaborated with state and local leadership, suicide prevention experts, people with lived experience, law enforcement, and others to create a 988 implementation plan and to support California’s thirteen 988 centers in funding and preparing for the Lifeline’s new operational, clinical, and performance standards meant to allow broader access to care. He said that the 988 number, as envisioned, could help provide more equitable access to vital mental health and substance use services and help decrease suicide and mental health stigma.

Linking Datasets to Predict Suicide Risk

Holly Wilcox, professor in the Department of Psychiatry and Behavioral Sciences and the Department of Mental Health at the Johns Hopkins Bloomberg School of Public Health, who serves as co-chair of the Maryland Suicide Prevention Commission, discussed various aspects of suicide prediction and prevention at the state and local levels, with a particular focus on the value of linking multiple datasets to increase predictive power.

First, she described GoGuardian Beacon, a software tool designed to help elementary, middle, and high schools identify signs that a student may be at risk for suicide, self-harm, or harm to others. It is part of a suite of educational software offered by GoGuardian that also includes a filtering and monitoring application and a classroom management application. The Beacon application monitors students’ online activities when they are logged on with their school user ID and password, and if it detects any indication that a student is at risk of any of the targeted behaviors, it flags the content and notifies school administrators. It is then up to the school to decide how to respond, and different schools have different response protocols.

___________________

20 See https://didihirsch.org/about-us/ (accessed August 23, 2022).

Wilcox said that there have been different public responses to GoGuardian Beacon. For example, when a 12-year-old boy in the Clark County School District in Nevada was detected and rescued, the media portrayal was positive,21 but in Baltimore, where there has been a great deal of tension between residents and police, GoGuardian has been portrayed negatively because of concerns about the police becoming involved with potentially suicidal students.22 Wilcox suggested that more research is needed on the impact of such tools as well as which response protocols are most effective and beneficial to students and under what circumstances.

Also, Wilcox discussed the Maryland Statewide Suicide Data Warehouse,23 which was established in part with funding from the National Institute of Mental Health. She said that one of the reasons for its establishment was a recognition of the promise of ML-based algorithms in examining multiple risk factors—“this whole idea of being able to link data that usually sit in silos and never come together.” At the time, she said, very few researchers were combining multiple risk factors to predict and prevent suicide.

Wilcox said that one of the sources of data for the suicide data warehouse is the Chesapeake Regional Information System for our Patients, which is a regional health information exchange covering Maryland and the District of Columbia. It receives data from multiple settings and links them together, and the data it provides to the suicide data warehouse for predictive analysis work are stripped of identifiers. Among the other sources of data provided to the suicide data warehouse are electronic health records, insurance claims data, hospital discharge data, information on deaths and their causes from the Office of the Chief Medical Examiner, Census-derived geographical data, Medicare and Medicaid data, and data from the VA. “We really want to know which of these sources are useful in suicide risk prediction,” she said, and also to provide a framework for other states to follow if they wish to use similar predictive analytics and modeling, using commonly available data sources. Some of the most useful data are in narrative form, such as narratives and toxicology reports from medical examiners, discharge summaries, and social workers’ notes. In these cases, natural-language processing is used to extract key points.

___________________

21 See https://news3lv.com/news/local/ccsds-goguardian-software-saves-12-year-old-boys-life (accessed August 23, 2022).

22 See https://www.forbes.com/sites/lisakim/2021/10/12/school-issued-laptops-in-baltimore-are-monitoring-students-for-risk-of-self-harm-as-concern-mounts-nationwide-over-surveillance/?sh=511f15be5a48 (accessed August 23, 2022).

23 See https://publichealth.jhu.edu/departments/mental-health/research-and-practice/violence/our-work-in-action (accessed August 23, 2022).

The Maryland Commission on Suicide Prevention24 is looking at various ways to add to and improve its suicide prediction and prevention efforts, Wilcox said. It is trying to learn from both the VA health system and Kaiser Permanente about predictive modeling and about how to use the predictions from these models to better help patients while respecting their privacy. It is also looking at other potential sources of data, such as the suicide prevention toolkit of Epic Systems Corporation.

Furthermore, Wilcox described a pilot study in which she and her colleagues sent caring text messages to those who had been treated in the health care system for suicide risk and had then returned home. More than three-quarters of those in the study reported that the messages had a positive impact on their mental health, while 67 percent said the texts reduced their suicidal ideation, and 74 percent said the texts helped prevent them from engaging in suicidal behavior (Ryan et al., 2022). After the success of that study, the state suicide prevention office set up a program that would send caring text messages to anyone who signed up for them. It is relatively new, she said, but already 3,000 people have signed up to receive the messages.

Identifying Suicidal Individuals through High-Performance Computing

John Pestian, professor at the University of Cincinnati Department of Pediatrics, director of the Computational Medicine Center in the Cincinnati Children’s Hospital, and faculty member at the Oak Ridge National Lab, spoke about using data analytics on high-performance computers to identify people at increased risk of suicide. Pestian said that suicide is a complex, difficult-to-predict phenomenon. Suicidal behavior differs between sexes, age groups, geographic regions, and sociopolitical settings, and variably associates with different risk factors, suggesting etiological heterogeneity. Improving recognition and understanding of clinical, psychological, sociological, and biological factors can potentially help the detection of high-risk individuals and assist in treatment selection (Turecki and Brent, 2016). In many cases, for example, an individual may make a number of suicide attempts with increasing risks of lethality before the final attempt that is successful. He said this is because the psychological pain they feel—the “psychache”—gets worse with each attempt.

Such patterns indicate that there is an opportunity for early identification of individuals who might attempt suicide. Pestian explained that one way to identify patterns for predicting suicide attempts is to collect large amounts of data, including data from electronic medical records and external data such as information on social determinants, and use those data to develop models that can help identify those individuals most at risk of suicide attempts. Because there is so much data, training the models requires high-performance computers.

___________________

24 See https://health.maryland.gov/bha/suicideprevention/Pages/governor’s-commissionon-suicide-prevention.aspx (accessed September 16, 2022).

Pestian described two analyses related to the identification of suicide risk using high-performance computers. The first analysis trained a computer language model to analyze writings and identify the emotions expressed in them (Pestian et al., 2012). Researchers collected notes written by 1,319 people just before they died by suicide. The notes were digitized and then each reviewed by three separate annotators, who identified the emotions in the notes. The emotions identified in the suicide notes included anger, blame, fear, guilt, hopelessness, sorrow, forgiveness, happiness, peacefulness, hopefulness, love, pride, thankfulness, instructions, and information. This model could be used for a population-based assessment to understand the language and gain insights into people contemplating suicide.

In a second analysis, researchers designed a prospective clinical trial to test the hypothesis that ML methods can differentiate between the conversations of suicidal and nonsuicidal individuals. The researchers recorded and analyzed the conversations of 30 adolescents who presented to an emergency department for suicide risk and 30 adolescents who were nonsuicidal (as controls). Using ML algorithms, the researchers were able to differentiate the individuals at risk of suicide from the controls with greater than 90 percent accuracy based on spoken language differences.

In the future, Pestian said, researchers are looking to collect data from medical records, social media postings, environmental data, census data, information on the social determinants of health, and data from published scientific research. “The data would be stored in a ‘foundation database’ and would be analyzed with high-performance computers. The ultimate goal is to be able to predict the mental health trajectories of individuals and make it possible to offer interventions before an individual reaches a crisis point,” he concluded.

Leveraging Digital Health Applications to Assist in Mental Health Treatment

Mary Czerwinski, partner research manager of the Human Understanding and Empathy (HUE) Research Group at Microsoft Research, described the development and testing of an app called Pocket Skills (Schroeder et al., 2018). Czerwinski said that mobile mental health interventions have the potential to reduce financial and time burdens associated with in-person therapies and to increase engagement and comfort in providing disclosure. Pocket Skills was designed to help people undergoing dialectical behavioral therapy (DBT).25 The work, which was done in collaboration with Marsha Linehan, the inventor of DBT, was intended to make the therapy more broadly available as well as to quantify the effectiveness of the therapy. Mindful of the fact that many mobile mental health applications do not follow evidence-based principles, Czerwinski’s team used Linehan’s DBT skills training manuals and worksheets26 as the basis for Pocket Skills.

As the name implies, Pocket Skills helps people develop various skills, such as mindfulness, emotional regulation, and distress tolerance. The learning has been gamified so that users gain points by developing various capabilities. All of the patients who were tested with Pocket Skills were already in DBT, she said, but many were having difficulty doing the homework given to them by their therapists. The application, which walks patients through the steps of developing a skill and asks questions about it to test their understanding, turned out to be very useful in helping these patients complete their homework. One advantage of putting the mental health behavior training on a smartphone was that it made it possible to track and analyze users’ behaviors, including what skills they chose to use, how often they used the application, and which aspects were most effective.

Czerwinski described a study with 73 suicidal patients that was carried out to test the effectiveness of Pocket Skills (Schroeder et al., 2018). The patients’ application usage was tracked over 4 weeks, and various outcomes were examined, such as when the participants used the skills, whether particular skills were more or less effective, and whether skill-level effectiveness influenced overall depression, anxiety, or skill-use improvement throughout the study. The study found that skills were used in the participants’ times of need. Results showed that the context in which skills were used was very important, and that skills were more or less effective for different subgroups of people. In addition, characteristics such as age, disorder type, how close family members were, and type of medication influenced the effectiveness of different skills.

Czerwinski said that because individual characteristics influence how effective the various skills are, one approach to improving the overall effectiveness of DBT would be to use ML to personalize the interventions. “It’s really important that you allow participants or users of your applications to tell you what works for them in that . . . particular context, that particular moment,” she emphasized. She added that it is also possible to use ML to predict which skills will work for which people in which contexts. It requires a minimum of about 3 weeks of user data to be able to make such predictions, but by combining these data with expert feedback, one can recommend skills based on emotional, personal, and environmental contexts.

___________________

25 DBT is a type of cognitive behavioral therapy that can be used to treat people with suicidal ideation and various other complex disorders, helping them to develop concrete coping skills.

26 These are comprehensive resources that provide vital tools for implementing DBT skills training.

Czerwinski concluded that in developing mental health applications, it is important that the design takes the unique emotional, environmental, and personal contexts of individuals at risk for suicide into account and allows for evidence-based support with personalized skill recommendations.

CURRENT ONLINE APPROACHES TO PREDICTING AND PREVENTING SUICIDE

Several speakers described the current state of the art of online approaches to predicting and preventing suicide and what possibilities the future might hold.

Google’s Efforts to Help Users in Crisis

Megan Jones Bell, consumer and mental health director at Google, spoke about expert- and partner-informed strategies to improve helpfulness to users in crisis. She agreed with other speakers that a person going through a mental health crisis can feel isolated, overwhelmed, and distressed. To get through those moments, access to the right information at the right time can make all the difference. Bell said that with searches for mental health therapists or mental health help on the rise, Google is working to quickly support users experiencing mental health challenges by providing them access to reliable information. She added that “our goal is to surface authoritative information that you can trust, create access to helpful resources you need in the moment, and show empathy for everyone who is experiencing mental health issues.”

Bell said that in order for Google to surface the right crisis information, it is first necessary to recognize that a user is in crisis in that very moment, which in turn requires understanding the nuances of human language. Because it is not always obvious from the wording of a query that a user may be in crisis, AI and ML can facilitate identification of users who need help. Google recently updated its “Search” to use its AI Multitask Unified Model (MUM) to automatically and more accurately detect personal crisis queries in 75 languages in order to show users the most relevant information when they need it. MUM is used to detect when users are looking for information related to suicide, domestic violence, sexual assault, and substance use. It is capable of nuanced language understanding and can also read images, videos, and other forms of media.

Once Google Search has identified a crisis query, it does several things to provide access to high-quality information and immediate help. At the top of the search results, it provides contact information for relevant hotlines that are available to provide immediate help 24/7. “For example,” Bell said, “if you type in, ‘how to kill myself’ or ‘ways to commit suicide,’ we will provide 988 phone number in the US and other local hotline numbers in other countries.”

Something similar is done on YouTube, which is part of Google. When a user does a search on YouTube that indicates a crisis situation, the platform shows crisis resource panels to help users connect with local organizations that can help them in a moment of critical need. The panels appear on the “watch page” below videos, offering a combination of educational and emotionally resonant content alongside prompts to take action if needed. The watch page is the place where users spend the most time, so contextualizing mental health resources there increases the reach of critical health information.

Bell said that in the past, YouTube’s crisis resources had only appeared in response to searches related to suicide and self-harm. But because mental health and well-being go far beyond suicide and self-harm, the company is now expanding the range of topics that display crisis resources on YouTube search results to include issues such as depression, sexual assault, substance use disorders, and eating disorders. Bell said that the company has also introduced a new video block for suicide-related queries. She mentioned that if someone searches for something like “I want to die,” the search results include a section that shares videos telling stories of help and recovery as well as video content from national hotline providers.