E

Technical Risk and Cost Evaluation Related to the Chapter 6 Research Campaigns

WHAT IS THE TRACE PROCESS AND HOW WAS IT APPLIED TO THE CONCEPT OF RESEARCH CAMPAIGNS?

The technical risk and cost evaluation (TRACE) process is a specific application of risk-advised programmatic evaluation. It has evolved over more than a decade of work between the National Academies of Sciences, Engineering, and Medicine and The Aerospace Corporation to provide a common framework for the programmatic evaluation of concepts under consideration for decadal surveys and other National Academies studies. Previous applications include two decadal surveys each for astronomy and astrophysics and for planetary sciences, and one each for Earth sciences and applications from space and for solar and space physics. The TRACE process grew out of cost and schedule modeling methodologies that have been used to evaluate NASA mission options for many decades. It continues to evolve, and each decadal survey applies it differently, both in how the evaluation is conducted and in how the steering committee uses the results in its decision-making. TRACE is an input to decision-making; it does not determine the decisions.

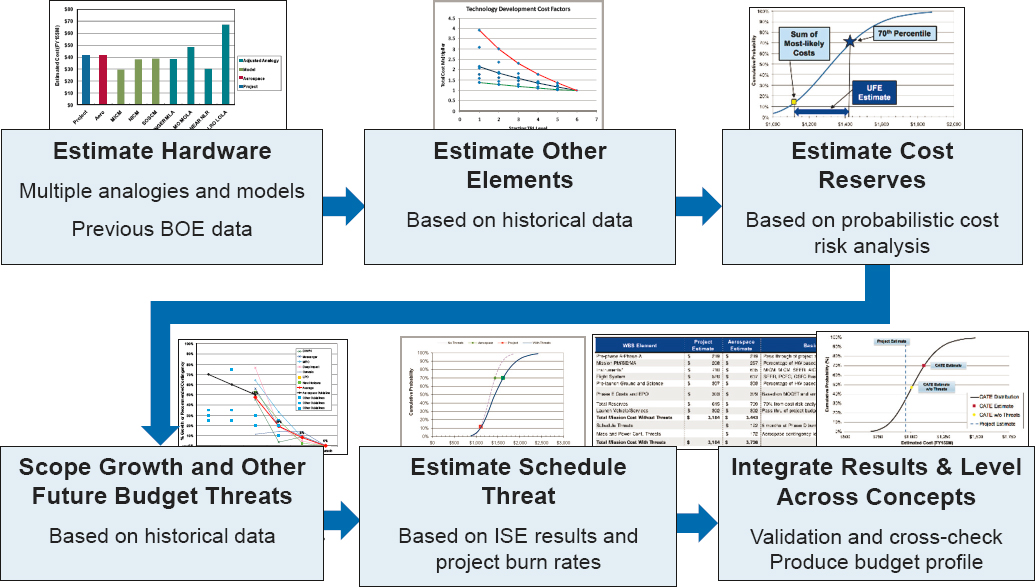

The approach used for this assessment was to identify (1) a compelling need, (2) a set of research questions and knowledge goals required to meet that need, and (3) set(s) of experiments that, if executed, could be expected to answer those questions, with successive experiments building the body of knowledge. From the experiment plan, the support requirements for the number and type of experiments (e.g., up/down mass, facilities needed, crew time) were identified. Once this work was accomplished, the Panel on Engineering and Science Interface iterated with the Aerospace team to identify the complete set of elements needed to execute the campaign, refine the analogies and costed elements, and execute the process diagrammed in Figure E-1.

For example, the Bioregenerative Life Support Systems (BLiSS) research campaign was developed around the compelling need to develop self-sustainable biological life support systems that produce food, clean water, and renew air to enable long-duration space missions. From this need, four research thrusts were developed and mapped to the key scientific questions from Chapters 4 and 5. Five experiment groups were developed, each with secondary experiment and measurement goals. Approximately 500 experiments and a similar number of inhabitable environment samples were identified. For each of those, the needed facility (e.g., Veggie, Ohalo, bioreactor) and the type of experiment were identified to allow calculation of crew time, up/down mass, and so on.
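The roll-up from an experiment list to support requirements can be sketched in a few lines of Python. This is only an illustration of the bookkeeping described above, not the panel's actual tooling: the `Experiment` record, the `tally_by_facility` helper, and every number in `plan` are hypothetical placeholders.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass
class Experiment:
    """One planned experiment; every field value used below is hypothetical."""
    facility: str        # e.g., "Veggie", "Ohalo", "bioreactor"
    crew_hours: float    # crew time needed to run the experiment
    up_mass_kg: float    # mass launched to the platform
    down_mass_kg: float  # mass returned to the ground


def tally_by_facility(experiments):
    """Sum experiment counts, crew time, and up/down mass per facility."""
    totals = defaultdict(
        lambda: {"count": 0, "crew_hours": 0.0, "up_mass_kg": 0.0, "down_mass_kg": 0.0}
    )
    for exp in experiments:
        row = totals[exp.facility]
        row["count"] += 1
        row["crew_hours"] += exp.crew_hours
        row["up_mass_kg"] += exp.up_mass_kg
        row["down_mass_kg"] += exp.down_mass_kg
    return dict(totals)


# Placeholder plan: the numbers are illustrative, not taken from the assessment.
plan = [
    Experiment("Veggie", crew_hours=2.0, up_mass_kg=5.0, down_mass_kg=1.0),
    Experiment("Veggie", crew_hours=3.0, up_mass_kg=4.0, down_mass_kg=2.0),
    Experiment("bioreactor", crew_hours=6.0, up_mass_kg=12.0, down_mass_kg=3.0),
]

totals = tally_by_facility(plan)
for facility, row in sorted(totals.items()):
    print(facility, row)
```

Grouping by facility keeps the totals in the same terms the text uses (facility, crew time, up/down mass), so the same table could feed a later costing step.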

Leaning heavily on International Space Station (ISS) experiences and lessons learned, the operations concepts were described to include items such as the ground facilities to pre- and post-process experimental materials, perform house control experiments, and begin assembling BLiSS composite systems. Also included were the terrestrial research programs to prepare for and exploit the knowledge from the space-based experiments, the needed technology developments, and technical and programmatic management.

The majority of the key development and operations data was provided by NASA. The operations concepts for the Commercial LEO Destinations have been published, but there is of course no performance history, so ISS experiences were used to modify and inform those estimates as appropriate.

Once this work was accomplished, the panel iterated with the Aerospace team to identify the complete set of elements needed to execute the research campaign, refine the analogies and costed elements, and execute the process diagrammed below. (See Figure E-1.)

Key elements of this work are (1) identifying and rating risks associated with technology development requirements, flight systems, instrumentation, facility design, and operations; (2) estimating the budget scope for proposed activities; (3) developing scenarios for phasing and implementing activities consistent with the predicted budget, accounting for future cost threats, complexity growth, campaign or scope creep, and schedule realism; and (4) using a Monte Carlo analysis to capture appropriate reserves based on concept maturity and technology readiness.

The results were captured in tables and spreadsheets, with the mean cost by element and the aggregate cost risk captured as reserves. The reserves were recorded as explicit budget reserves for development items and as funded schedule extension risk for the operations phase.
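To make the Monte Carlo step concrete, the following Python sketch shows one common way such a reserve can be derived. It is not the TRACE implementation: the triangular cost distributions, the 70 percent confidence level, the element names, and all cost figures are assumptions chosen purely for illustration.

```python
import random
import statistics


def simulate_total_cost(elements, n_trials=10_000, seed=0):
    """Draw total campaign cost n_trials times and return the sorted totals.

    elements maps a name to (low, most_likely, high) cost. A triangular
    distribution per element is an assumption of this sketch.
    """
    rng = random.Random(seed)  # fixed seed so the sketch is repeatable
    totals = []
    for _ in range(n_trials):
        total = sum(
            rng.triangular(low, high, mode)  # stdlib order: low, high, mode
            for (low, mode, high) in elements.values()
        )
        totals.append(total)
    return sorted(totals)


def reserve_at_confidence(sorted_totals, baseline, confidence=0.70):
    """Reserve = cost at the chosen confidence level minus the baseline."""
    idx = min(len(sorted_totals) - 1, int(confidence * len(sorted_totals)))
    return max(0.0, sorted_totals[idx] - baseline)


# Hypothetical elements and costs (arbitrary units), not from the assessment.
elements = {
    "facility development": (80.0, 100.0, 160.0),
    "technology development": (20.0, 30.0, 60.0),
    "operations": (40.0, 50.0, 70.0),
}

baseline = sum(mode for (_, mode, _) in elements.values())
totals = simulate_total_cost(elements)
mean_cost = statistics.mean(totals)
reserve = reserve_at_confidence(totals, baseline)

print(f"baseline={baseline:.1f} mean={mean_cost:.1f} reserve={reserve:.1f}")
```

Because the assumed distributions are skewed toward overruns, the simulated mean exceeds the sum of most-likely costs, and the gap between the baseline and the chosen percentile is what would be carried as reserve. Wider or more skewed ranges, as might be assigned to less mature concepts, yield a larger reserve.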