1

Role and Importance of Exascale and Post-Exascale Computing for Stockpile Stewardship

This chapter describes the role of advanced computation in support of stewardship of the current stockpile. Computation has been an essential part of the nuclear weapons mission since its inception. For example, the Manhattan Project relied on the computational capabilities available at the time to determine that a weapon was feasible in terms of size and weight.

The sections below describe some of the key phenomenology that must be simulated, both for nuclear weapon operation and for some of the associated engineering requirements. Although a complete treatment is impossible here, the committee’s objective is to provide some context for why such problems are so challenging and to explain why continued growth in computational capability will be required even beyond the advent of exascale systems. A question often asked of the nuclear design laboratories is “What are the resolution and numerical throughput (that is, memory size and floating-point speed) requirements for accurate simulation of nuclear weapon performance?” A more colloquial way to ask this is “How much is enough?” The answer depends on the end goal of the simulation. If the objective is to simulate accurately all the relevant physical length and time scales (so-called direct numerical simulation), such a computation is currently out of reach. On the other hand, if the objective is to explore the predictions of various modeling assumptions and then compare them with experiments, such calculations are performed routinely today.

The chapter begins by describing the role of computing in the early days of the weapons program, when nuclear explosive testing was possible, and then describes how that role has evolved. This discussion is followed by a more detailed description of the relevant physical processes. The discussion below of the modeling of the high explosives used to initiate nuclear detonation is more detailed than the others for two reasons. First, many of the details of high-explosive modeling are unclassified and in the open literature. Second, the use of various levels of modeling to simulate high explosives mirrors some of the considerations relevant to present-day modeling of nuclear weapons, namely, the trade-off between modeling sophistication and the need to explore a large design space efficiently.

A nuclear weapon is an energy amplifier that uses the chemical energy of high explosives to initiate nuclear fission. To give some idea of the level of amplification and some perspective on the challenge of simulation: if completely detonated, a kilogram of conventional TNT chemical explosive provides about 4.6 megajoules of energy. In contrast, if completely fissioned, a kilogram of ²³⁹Pu provides 88 terajoules of energy, roughly 20 million times as much as TNT and chemical explosives in general. An overview from Lawrence Livermore National Laboratory (LLNL)1 describes how energy is released by nuclear weapons. This level and rate of energy release make overall system behavior sensitive to the details of the component processes. As a result, the computational requirements for simulation are far more stringent in terms of resolution of length and time scales than those encountered, for example, in the simulation of conventional weapons.
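The factor of roughly 20 million can be checked with a few lines of arithmetic. The Python sketch below divides the two energy densities quoted above. As an independent sanity check, it also estimates the fission energy of a kilogram of ²³⁹Pu. That estimate assumes about 200 MeV released per fission, a common round figure that is an assumption here and is not taken from this report.

```python
# Energy densities quoted in the text (joules per kilogram).
TNT_J_PER_KG = 4.6e6        # complete detonation of TNT
PU239_J_PER_KG = 88e12      # complete fission of plutonium-239

# Physical constants (exact SI values for Avogadro's number and the electronvolt).
AVOGADRO = 6.02214076e23            # atoms per mole
JOULES_PER_MEV = 1.602176634e-13
PU239_MOLAR_MASS_G = 239.05         # grams per mole (approximate)

def amplification_factor() -> float:
    """Ratio of fission energy to chemical energy, per kilogram."""
    return PU239_J_PER_KG / TNT_J_PER_KG

def fission_energy_estimate(mev_per_fission: float = 200.0) -> float:
    """Rough energy (J) from fissioning 1 kg of Pu-239.

    Assumes a fixed energy release per fission; ~200 MeV is an assumed
    round value, not a figure from the report.
    """
    atoms = 1000.0 / PU239_MOLAR_MASS_G * AVOGADRO
    return atoms * mev_per_fission * JOULES_PER_MEV

if __name__ == "__main__":
    print(f"amplification ~ {amplification_factor():.2e}")   # about 1.9e7
    print(f"fission estimate ~ {fission_energy_estimate() / 1e12:.0f} TJ/kg")
```

The ratio comes out near 1.9 × 10⁷. The first-principles estimate, about 81 TJ/kg, is of the same order as the 88 TJ quoted. The remaining difference reflects the assumed energy per fission.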

Early in the history of nuclear weapons development, it was understood that the underlying physics of nuclear weapons was sufficiently complex that ab initio computation was not possible, for reasons discussed further below. Instead, computation could be used to provide feasibility estimates for proposed designs, with confirmatory experiments, including underground nuclear tests, essential to prove the workability of those designs. Phenomenological data from such experiments were used to develop calibrated models that improved the predictive capability of computation, provided consideration was limited to small excursions from the tested design. This approach of computation combined with underground testing was highly successful in developing and ensuring the robustness and reliability of today’s modern stockpile.2 Given that the current stockpile has been successfully developed and certified, it is reasonable to ask whether there remains a need for additional computing capability. At present, however, the computational tools essential in designing the current stockpile continue to rely heavily on calibration with underground test data. The resulting methods are not predictive outside the range of the data with which they were calibrated. They are viewed as possibly invalid if there is a change in design, manufacturing processes, or even the age of the weapon. In the absence of underground tests, high-fidelity simulation becomes a necessity for addressing significant changes in the stockpile.3

___________________

1 B.T. Goodwin, 2021, “Nuclear Weapons Technology 101 for Policy Wonks,” Center for Global Security Research, Lawrence Livermore National Laboratory, https://cgsr.llnl.gov/content/assets/docs/CGSR_NW101_Policy_Wonks_WEB_210827.pdf.

2 N. Lewis, 2021, “Trinity by the Numbers: The Computing Effort That Made Trinity Possible,” Nuclear Technology 207(Sup 1):S176–S189, https://doi.org/10.1080/00295450.2021.1938487.

The entry of the United States into a voluntary moratorium on nuclear testing in 1992 led to the development of Science-Based Stockpile Stewardship (SBSS). It also elevated the importance of high-performance computing (HPC), both for ensuring the safety and reliability of the existing stockpile and for providing scientific support should modifications be required. To understand the challenge presented by this HPC application, note that the cursory description of nuclear weapon operation provided above does not do justice to the precision and timing required to achieve the staged amplification of energy. The length and time scales associated with the functioning of the weapon become increasingly short as it proceeds from one stage to the next. As discussed below, the release of energy from explosives involves chemical reactions among molecules. In contrast, fission and fusion occur on length and time scales associated with the nucleus, which is more than 10,000 times smaller. Because of the enormous amplification of energy associated with nuclear reactions, small perturbations in any of the early stages can lead to large perturbations of the energy output, or yield, of the weapon. These considerations make it necessary to provide enhanced resolution of space and time scales to capture the dynamics faithfully via computation. The relevant physical processes are all founded in established theory. However, the multiscale, coupled nature of the problem and the large range of relevant length and time scales have made computation, and HPC in particular, essential to making existing simulation codes more predictive, even in the absence of supporting data from nuclear tests. This enhanced level of predictive capability is needed for several reasons:

- Early weapons designs employed computation, but these calculations assumed either simplified one-dimensional symmetry or two-dimensional axisymmetry, owing to the limited computational speed and memory size of the time. Such calculations were not predictive and had to be calibrated against results from underground nuclear testing, with the details of calibration dependent on the specifics of individual weapons designs. Once calibrated, these early weapons codes could be used to study the effect of small design changes. They could not, however, be used to explore changes significantly different from what had been explored in previous nuclear testing. For this reason, any future consideration of new design concepts, or of changes to existing systems for which there is little or no testing history, requires a more predictive capability. That capability in turn requires increased spatial and temporal resolution of the relevant phenomena and thus increased computing capability and capacity.

___________________

3 R.J. Hemley and D.I. Meiron, 2011, “Hydrodynamic and Nuclear Experiments,” JASON Defense Advisory Panel: Reports on Defense Science and Technology, https://irp.fas.org/agency/dod/jason/hydro.pdf.

- It has long been appreciated that nuclear weapons are inherently three-dimensional both in geometric detail and in operation. Despite efforts to create as symmetric a geometric environment as possible, there are engineering features that are essential to the operation and delivery of the weapon, and these are inherently three-dimensional. In addition, the basic physical processes involved in the implosion of the primary and secondary possess intrinsic instabilities that can make the resulting weapon response diverge from the desired axisymmetry if not properly controlled.

- As part of the ongoing stewardship of the existing stockpile, every effort is made to ensure that refurbishment of the various systems (for example, those undergoing a life extension program, or LEP) uses materials and components that are as close to identical as possible to those used in the original design. However, this has not always proven possible. First, some of the original materials are no longer manufactured. Second, manufacturing approaches evolve, and so it may not be practical to reestablish various component production lines. Last, materials age with time (a notable example is plutonium, discussed in more detail below). Thus, the stockpile inevitably evolves with age, and both experiment and computation are required to make sure that a given weapons system has not unduly diverged from its original certified design.

- Although much progress has been made in understanding the basic physical processes that govern a nuclear weapon, understanding remains incomplete for some phenomena central to the operation of modern nuclear weapons. The computational resources required for full numerical resolution of these physical processes typically exceed exascale capability. An example would be the detailed three-dimensional calculation of the implosion of an inertial confinement fusion capsule that takes into account the grain structure of the shell bounding the deuterium-tritium gas mixture. Such calculations can practicably be performed today only in an axisymmetric geometry.4 To develop engineering models that allow rapid iteration of design calculations, designers use high-fidelity simulations to generate data that inform reduced-order models. These simulations capture as many physical scales as computer speed and memory allow.

___________________

4 B.M. Haines, R.E. Olson, W. Sweet, S.A. Yi, A.B. Zylstra, P.A. Bradley, F. Elsner, et al., 2019, “Robustness to Hydrodynamic Instabilities in Indirectly Driven Layered Capsule Implosions,” Physics of Plasmas 26:012707, https://doi.org/10.1063/1.5080262.

- In the absence of underground testing, some relevant information can be acquired only via computation. Understanding the relevant phenomena well enough to model them predictively requires focused experiments combined with HPC at the level of exascale and beyond.

- In addition to simulation of fundamental aspects of weapons operation, HPC plays an important role in simulating the nonnuclear components of a weapon in the various environments encountered during the weapon’s life cycle. These include normal, abnormal, and, most challenging, hostile environments. This aspect of simulation also requires HPC capability.5
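The link drawn above between predictive capability, resolution, and computing capacity can be made concrete with a back-of-the-envelope scaling argument. Consider an explicit, time-dependent calculation in d spatial dimensions. Refining the mesh spacing by a factor r multiplies the number of cells by r^d. Because stability (CFL) limits tie the time step to the cell size, it also multiplies the number of time steps by roughly r. The Python sketch below assumes that simple model: cost proportional to cells times steps, a uniform mesh, and no adaptive refinement. It is an illustration only and does not describe any laboratory code.

```python
def relative_memory(refinement: float, dims: int) -> float:
    """Relative memory: proportional to the number of cells only."""
    return refinement ** dims

def relative_cost(refinement: float, dims: int) -> float:
    """Relative work for an explicit, uniform-mesh calculation when the
    mesh spacing is divided by `refinement` in `dims` spatial dimensions.

    Assumes cost ~ (number of cells) x (number of time steps), with the
    time step shrinking in proportion to the cell size (CFL limit).
    """
    cells = refinement ** dims
    steps = refinement
    return cells * steps

if __name__ == "__main__":
    # Halving the mesh spacing in 1D, 2D, and 3D.
    for dims in (1, 2, 3):
        print(dims, relative_memory(2, dims), relative_cost(2, dims))
```

Under these assumptions, halving the spacing of a three-dimensional calculation needs 8 times the memory and 16 times the work. A tenfold improvement in resolution costs about 10,000 times more computation, which helps explain how quickly the gains from each new generation of machines are consumed.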

MODELING AND SIMULATION REQUIREMENTS FOR STOCKPILE STEWARDSHIP

Detonation of High Explosives

As indicated above, a nuclear weapon is effectively an energy amplifier. The energy released from detonation of a high-explosive charge is used to compress and initiate a fissile core and to begin the nuclear chain reactions that drive further amplification stages. As an exemplar of the high-fidelity simulations needed to support the stockpile, the committee discusses here some of the extant computational challenges of modeling and simulating the detonation of high explosives, and why such simulation today requires state-of-the-art computational capability. This discussion is more detailed than those that follow because these issues are unclassified. The descriptions of later stages of weapons operation are more terse owing to classification restrictions.

Detonation refers to a particularly rapid form of combustion in which energy is transferred via a strong shock wave supported by an exothermic chemical reaction zone. The leading shock of a detonation wave compresses and heats the reactant material, initiating a chemical reaction that further drives the shock wave. Under the right conditions, this process is self-sustaining and propagates as a wave across the high-explosive fuel. The conversion of chemical to mechanical energy as the high explosive detonates is extremely rapid and intense. A solid explosive can convert energy at a rate of 10¹⁰ watts per square centimeter at the detonation front, or 10¹⁴ watts (100 terawatts) per square meter. For comparison, insolation of Earth by the Sun provides roughly 1 kilowatt per square meter.6
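The unit conversion behind the comparison above is easy to get wrong, so it is worth spelling out. The short Python check below converts 10¹⁰ W/cm² to watts per square meter. It then compares the result with a nominal solar flux of 1 kW/m², a round figure used only for comparison.

```python
CM2_PER_M2 = 1.0e4  # 1 m^2 = 100 cm x 100 cm

def w_per_cm2_to_w_per_m2(flux_w_per_cm2: float) -> float:
    """Convert a power flux from W/cm^2 to W/m^2."""
    return flux_w_per_cm2 * CM2_PER_M2

detonation_flux = w_per_cm2_to_w_per_m2(1.0e10)  # W/m^2 at the detonation front
solar_flux = 1.0e3                               # W/m^2, nominal sunlight at Earth's surface
ratio = detonation_flux / solar_flux

print(f"{detonation_flux:.0e} W/m^2, about {ratio:.0e} times sunlight")
```

The detonation front delivers about 10¹⁴ W/m², roughly a hundred billion times the solar flux.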

The rapid release of chemical energy leads to strongly supersonic waves propagating at 8–10 kilometers per second that produce large pressures, on the order of tens to hundreds of kilobars. Such pressures are well above the compressive strength of metals, and so high explosives are a natural candidate for rapidly compressing the plutonium of a primary to a supercritical state.

___________________

5 Briefings by LANL, LLNL, and Sandia to National Academies panel, March 21, 2022.

6 W. Fickett and C. Davis, 2001, “Detonation: Theory and Experiment,” Journal of Fluid Mechanics 444:408–411, https://doi.org/10.1017/S0022112001265604.

High explosives are used today in mining, in conventional weapons, and sometimes in materials applications such as forming metallic solids from metal powders. In such applications, precise characterization of the detonation speed and the energy released, or of the detailed structure of the detonation wave, is not a primary concern. For nuclear applications, however, understanding the structure and dynamics of the detonation, particularly its velocity and delivered energy, is crucial to characterizing the subsequent implosion of the nuclear material. The high-explosive charge of a modern nuclear weapon is carefully engineered to create a robust implosion that rapidly assembles a supercritical mass.

For many applications other than nuclear weapons, it is appropriate to assume that the region in which the reactants are compressed, react, and transition to products is adequately described by a plane, steady reaction zone. This assumption is adequate for detonations in which the radius of curvature of the detonation wave greatly exceeds the thickness of the reaction zone. Suppose further that the reaction zone is infinitesimally thin and that the chemical reaction proceeds to completion instantaneously. The detonation wave can then be treated as a discontinuity moving with a specified detonation velocity, and the conservation laws of compressible fluid flow can be applied to determine its evolution. Once the detonation velocity is measured, the laws of conservation of mass, momentum, and energy determine the conditions behind the shock wave. In compressible flow without exothermic chemical reactions, shock waves of all strengths are possible, and the minimum shock velocity is the local sound speed. When the heat release of an exothermic reaction is introduced, the detonation wave must have a minimum velocity that is strongly supersonic relative to the ambient sound speed. This is the basis of the Chapman-Jouguet (CJ) theory of detonation. This minimum velocity is called the CJ velocity, and the associated pressure is called the CJ pressure. The CJ theory is attractive owing to its simplicity, and the computational cost of characterizing the detonation wave with it is low. Unfortunately, it is not adequate for the tight timing requirements of a nuclear weapon. When applied to detonation in gases, the CJ theory often predicts the detonation velocity to within 1–2 percent. Later experiments, however, showed that other quantities, such as the pressure at the CJ state, were off by 10–15 percent. The assumption of a simple one-dimensional discontinuous solution is overly simplistic, and more elaborate models are required.
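To make the CJ construction concrete, once the detonation velocity D has been measured, the jump conditions fix the state behind the front. The Python sketch below makes two simplifying assumptions: the detonation products obey a polytropic (constant-γ) equation of state, and the pressure ahead of the wave is negligible. Both are a standard idealization for condensed explosives. Under them, the CJ particle velocity is D/(γ + 1), the CJ pressure is ρ₀D²/(γ + 1), and the CJ density is ρ₀(γ + 1)/γ. The rule-of-thumb value γ ≈ 3 and the HMX-like inputs (ρ₀ = 1,900 kg/m³, D = 9,100 m/s) are illustrative assumptions, not data from this report.

```python
from dataclasses import dataclass

@dataclass
class CJState:
    pressure: float           # Pa
    density: float            # kg/m^3
    particle_velocity: float  # m/s, lab frame

def cj_state(rho0: float, d: float, gamma: float = 3.0) -> CJState:
    """CJ state behind a detonation moving at speed `d` into unreacted
    material of density `rho0`.

    Assumes polytropic products with index `gamma` and negligible upstream
    pressure (strong-detonation limit); both are idealizations.
    """
    u = d / (gamma + 1.0)        # from the sonic condition D - u = c
    p = rho0 * d * u             # momentum jump with upstream pressure = 0
    rho = rho0 * d / (d - u)     # mass conservation across the front
    return CJState(pressure=p, density=rho, particle_velocity=u)

if __name__ == "__main__":
    s = cj_state(rho0=1900.0, d=9100.0)
    print(f"p_CJ ~ {s.pressure / 1e8:.0f} kbar")   # 1 kbar = 1e8 Pa
```

With these inputs the CJ pressure is roughly 390 kbar (about 39 GPa), within the "tens to hundreds of kilobars" range quoted earlier. At the CJ point, the flow behind the front moves at exactly the local sound speed relative to the wave. This sonic condition is what singles out the minimum detonation velocity.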

A significant improvement in understanding was made independently by Zeldovich, von Neumann, and Döring (ZND).7 They based their work on the one-dimensional, inviscid, compressible Euler equations of hydrodynamics. In their model, the generally complex set of reacting species is represented by a single component that transitions from reactant to product at a finite rate. A detonation profile in this model still has a discontinuous shock wave, so the conservation laws must still be used to connect the reactant state to the product state. The profiles of pressure, density, velocity, and so on, however, are now self-consistent solutions to one-dimensional partial differential equations. As computational capabilities improved, it was found that the simple CJ state was inadequate for describing detonation dynamics. Instead, the detonation front had a complicated, time-dependent fine structure that wandered about the CJ values.

___________________

7 W. Döring, 1943, “Über Detonationsvorgang in Gasen” [On detonation processes in gases], Annalen der Physik (in German) 43(6–7):421–436.

As computational capabilities increased further, the one-dimensional theory was extended to include chemical reactions with multiple reaction rates, heat conduction, viscosity, and diffusion. Two-dimensional calculations also made it possible to consider finite-size effects such as curvature of the detonation wave and the influence of boundaries. An important conclusion of these studies was that, as more physics was added, the deficiencies of the CJ and ZND models became more apparent. The detonation velocity was now seen to be a complex function of the explosive material, including the reaction rates, which in many cases are not well known for condensed explosives at high pressure.
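The steady ZND structure can be illustrated in the same idealized setting often used for CJ estimates. The assumptions are a polytropic material with index γ (used for both reactants and products), negligible upstream pressure, and a single reaction-progress variable λ with heat release q per unit mass. λ runs from 0 (shocked but unreacted, just behind the shock) to 1 (fully reacted). Intersecting the Rayleigh line with the partially reacted Hugoniot gives, for a CJ detonation, p(λ) = ρ₀D²[1 + √(1 − λ)]/(γ + 1), with D² = 2(γ² − 1)q. The Python sketch below evaluates that closed form. It shows the pressure spike at the shock (the von Neumann spike) relaxing to the CJ pressure as the reaction completes. The parameter values are hypothetical, and real condensed explosives require far more elaborate equations of state and rate laws.

```python
import math

def cj_speed(q: float, gamma: float) -> float:
    """CJ detonation speed in the idealized model: D^2 = 2 (gamma^2 - 1) q."""
    return math.sqrt(2.0 * (gamma * gamma - 1.0) * q)

def znd_pressure(lam: float, rho0: float, q: float, gamma: float) -> float:
    """Pressure at reaction progress `lam` (0 = shocked but unreacted,
    1 = fully reacted) inside a steady CJ detonation, from the intersection
    of the Rayleigh line with the partially reacted Hugoniot."""
    if not 0.0 <= lam <= 1.0:
        raise ValueError("reaction progress must lie in [0, 1]")
    d = cj_speed(q, gamma)
    return rho0 * d * d * (1.0 + math.sqrt(1.0 - lam)) / (gamma + 1.0)

if __name__ == "__main__":
    rho0, q, gamma = 1900.0, 5.0e6, 3.0   # hypothetical illustrative values
    print(f"D_CJ ~ {cj_speed(q, gamma):.0f} m/s")
    for lam in (0.0, 0.5, 0.9, 1.0):
        print(lam, f"{znd_pressure(lam, rho0, q, gamma) / 1e8:.0f} kbar")
```

With these illustrative inputs, the model gives D ≈ 8.9 km/s, inside the 8–10 km/s range cited earlier. In this limit the spike pressure at λ = 0 is exactly twice the CJ pressure reached at λ = 1.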

For gas-phase detonations, improved experimental diagnostics have shown that considerable three-dimensional fluid motion occurs behind the detonation front. For example, the leading shock wave of the detonation is known to exhibit instability in the form of transverse shock waves running along the shock surface. Complex motions, including spiral waves and galloping of the detonation surface, have also been observed. Over time, a qualitative picture has emerged of the detonation front as a complex surface with propagating cellular perturbations. The size of these perturbations scales with the reaction time and therefore depends on local conditions as the explosive reacts and detonates. Curvature of shocks generates vorticity, the tendency of the flow to exhibit local differential rotation. In gas-phase detonations, this fluid rotation leads to what appears to be turbulent dynamics.8

Much of the research that led to the results described above was based on detonation in gaseous media. For several reasons, nuclear weapons use solid explosives. First, such explosives have a higher energy density and so can provide higher pressures for compressing the metal shell. Second, they can readily be molded around the nuclear core of the primary. These advantages, however, are offset by the fact that modeling and simulation of such explosives are significantly more complex than for gas-phase explosives. Solid explosives are complex materials consisting of crystallites of high-explosive molecules held together by a polymeric binder. Perhaps more relevant to the requirements for computation, these materials, unlike gases, are opaque. It is therefore more challenging to gather experimental data on the internal structure of the detonation to inform phenomenological models.

___________________

8 W. Fickett and C. Davis, 2001, “Detonation: Theory and Experiment,” Journal of Fluid Mechanics 444:408–411, https://doi.org/10.1017/S0022112001265604.

Numerical simulation of high explosives remains challenging today. First, there is a profusion of length and time scales that must be considered. For a conventional high explosive, the reaction zone thickness is typically on the order of tens of microns, so the ratio of the gross dimension of the primary to the thickness of the reaction zone is on the order of 10,000 to 1. Models for the chemical reactions of the combustion typically involve hundreds of species and thousands of possible reactions. In most cases, the reaction rates must be obtained from molecular dynamics calculations, but these too are challenging because they involve simulating chemical reactions at high pressures. In addition, the reactions must be understood across several phases: the solid phase of the explosive crystallites and the liquid and gaseous phases of the reactants and products. The time scales for many of these reactions are on the order of picoseconds. This places severe demands on the spatial and temporal resolution needed to obtain resolved results. An example of the challenge is the pioneering work of Baer on the direct numerical simulation of solid explosives.9 Baer used the Eulerian shock physics code CTH10 to simulate the high explosive cyclotetramethylene-tetranitramine (also known as HMX). The simulations ran on the state-of-the-art Advanced Simulation and Computing (ASC) platform of that time, the Accelerated Strategic Computing Initiative (ASCI) Red platform. The initial condition for this simulation was a small piece of explosive (about 1 mm³) resolved at the mesoscale. That is, the individual explosive crystallites, roughly 100–200 microns in size, and the polymer binder between them were resolved explicitly. The goal was to study the interaction of the crystallites as they were impacted by a strong shock wave, ultimately leading to a detonation wave. This calculation, justifiably viewed as an important contribution to detonation science, required on the order of a billion grid cells and led to some important insights on the micromechanics of detonation.

Owing to memory restrictions, however, even this calculation did not include an accurate model of crystalline deformation, friction, or void heating. These effects are known to play a role in the formation and propagation of detonations in solid explosives. In addition, only a relatively crude model of the combustion was used. Last, an important aspect of detonation science is the propagation of the detonation over curved geometry. To examine this, the calculation would need to be performed using samples with dimensions on the order of centimeters. If the fluid mechanics behind the detonation wave is truly in the turbulent regime, it becomes necessary to resolve down to the micron scale to capture its effects directly. Improving the fidelity of the solid mechanics and chemistry further increases the computational cost, while also requiring a reduced time step to capture the details of wave propagation. Given the large number of degrees of freedom associated with fully resolved detonation simulation, detailed calculations at the scale of the weapon that include relevant geometrical effects could require resources exceeding those provided by exascale platforms. Indeed, this level of simulation of high-explosive detonation is not practical today, even though it is agreed that understanding solid explosives at this level of detail would benefit SBSS. In discussions of these issues with the committee, the design laboratories acknowledged that

Using high-fidelity reactive flow with chemical kinetics to model High Explosive (HE) burn requires extremely high resolution to resolve chemistry at the burn front and its inter-relationship with hydrodynamics. In general 3D [three-dimensional] would require billions to trillions of hydro cells/zones in the HE to be modeled predictively. AMR [adaptive mesh refinement] is routinely used to dynamically refine and de-refine the burn front thereby saving memory and other computational resources.11

___________________

9 M.R. Baer, 2001, “Computational Modeling of Heterogeneous Reactive Materials at the Mesoscale,” AIP Conference Proceedings 505:27, https://doi.org/10.1063/1.1303415.

10 E.S. Hertel, R.L. Bell, M.G. Elrick, A.V. Farnsworth, G.I. Kerley, J.M. McGlaun, S.V. Petney, S.A. Silling, P.A. Taylor, and L. Yarrington, 1995, “CTH: A Software Family for Multi-Dimensional Shock Physics Analysis,” 377–382, Springer: Berlin/Heidelberg, https://doi.org/10.1007/978-3-642-78829-1_6.
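The cell counts mentioned above follow from simple arithmetic. The Python sketch below counts uniform cubic cells for a sample of edge length L at spacing Δx. It also estimates the number of explicit time steps needed for a detonation to cross the sample. The time step is taken as the smaller of two limits: a CFL limit, with an assumed Courant number of 0.5 and an assumed signal speed of 10 km/s, and an assumed picosecond chemistry time scale. The sample sizes, spacing, and speeds are illustrative assumptions drawn from the ranges mentioned in this section, not the parameters of any specific calculation.

```python
def cell_count(edge_m: float, dx_m: float) -> float:
    """Number of cubic cells of size dx filling a cube of edge length `edge`."""
    return (edge_m / dx_m) ** 3

def time_steps(edge_m: float, dx_m: float, det_speed: float = 9.0e3,
               signal_speed: float = 1.0e4, courant: float = 0.5,
               chem_time_s: float = 1.0e-12) -> float:
    """Explicit steps needed for a detonation to cross the sample.

    The step is limited both by the CFL condition and by the chemistry
    time scale (all defaults are illustrative assumptions).
    """
    dt = min(courant * dx_m / signal_speed, chem_time_s)
    transit_time = edge_m / det_speed
    return transit_time / dt

for label, edge in (("1 mm cube", 1e-3), ("1 cm cube", 1e-2)):
    cells = cell_count(edge, 1e-6)          # micron resolution
    steps = time_steps(edge, 1e-6)
    print(f"{label}: {cells:.0e} cells, {steps:.1e} steps, "
          f"{cells * steps:.1e} cell-updates")
```

A 1 mm³ sample at micron spacing needs about 10⁹ cells, consistent with the roughly billion-cell calculation described above. A centimeter-scale sample needs about 10¹², the upper end of the laboratories' "billions to trillions" estimate, and about 10⁶ time steps, or some 10¹⁸ cell updates. With these assumptions, the picosecond chemistry, not the CFL condition, sets the time step.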

Adaptive methods (in both the space and time dimensions) that focus resources on the regions about the detonation wave have been developed as indicated above, but efficient implementation of such methods on modern high-performance architectures is complicated by the fact that today’s HPC platforms are not optimally suited to the irregular data access patterns that result from using such methods. If it should prove necessary to resolve fine-scale multispecies turbulence behind the detonation front, the resolution requirements become more demanding. As a result, simulation and experiment are used to create calibrated engineering models for high-explosive detonation, and it is these models that are used in simulation of full systems.
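The idea behind adaptive refinement can be shown in one dimension. The Python sketch below is a deliberately simplified, hypothetical example. It places a steep front (a tanh profile standing in for a burn front) on a coarse uniform grid and flags cells where the jump to a neighbor exceeds a threshold. It then refines only those cells by a fixed factor and compares the resulting cell count with a uniformly fine grid. Production AMR frameworks add nested levels, buffer zones, time subcycling, and load balancing, none of which is modeled here.

```python
import math

def front_profile(x: float, x_front: float = 0.5, width: float = 1e-3) -> float:
    """Hypothetical smooth step standing in for a sharp burn front."""
    return 0.5 * (1.0 - math.tanh((x - x_front) / width))

def flag_cells(values: list[float], threshold: float) -> list[bool]:
    """Flag a cell if the jump to either neighbor exceeds `threshold`."""
    n = len(values)
    flags = [False] * n
    for i in range(n - 1):
        if abs(values[i + 1] - values[i]) > threshold:
            flags[i] = flags[i + 1] = True
    return flags

def adaptive_cell_count(n_coarse: int, refine: int, threshold: float = 0.05) -> int:
    """Cells needed when only flagged coarse cells are split `refine` times."""
    dx = 1.0 / n_coarse
    values = [front_profile((i + 0.5) * dx) for i in range(n_coarse)]
    flags = flag_cells(values, threshold)
    return sum(refine if f else 1 for f in flags)

if __name__ == "__main__":
    n_coarse, refine = 1000, 64
    print("uniform fine grid:", n_coarse * refine)
    print("adaptive grid:    ", adaptive_cell_count(n_coarse, refine))
```

Here refinement touches only a handful of cells around the front, so the adaptive grid uses roughly 2 percent of the cells of the uniformly fine grid. The flip side, noted in the text, is that the refined region moves with the front. This produces the irregular, data-dependent memory access patterns that map poorly onto current HPC hardware.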

To improve nuclear safety, there are increased efforts today to understand the detonation of insensitive high explosives. Such explosives have lower CJ velocities and pressures, so more explosive is needed to implode the primary. On the other hand, these explosives are much more difficult to initiate (hence the name insensitive) and so are preferred for nuclear safety. There is a desire within the nuclear complex eventually to use only insensitive high explosives in the stockpile. Their lack of sensitivity, however, paradoxically makes them harder to simulate, because their combustion processes are more complex.

___________________

11 From summary of computational requirements provided to the committee by LANL, LLNL, and SNL ASC teams.

For engineering purposes, in which a designer wants to examine possible changes in the configuration of the explosive rapidly, it is not currently practical to use highly resolved computations to design and qualify high explosives. First, as discussed above, the material and chemical response during the initiation of solid explosives leading to detonation is still not completely understood. Second, the range of scales that must be resolved, as also discussed above, makes routine calculations infeasible. Instead, engineering models are developed that parameterize the results in various regions of interest, and these models can then be used to explore part of the design space. The development of such models continues to rely on experimental measurement. To an increasing degree, it also relies on detailed computations that are likely to require exascale computational capability and beyond to capture the phenomena of interest properly.
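Calibrated engineering models have a limitation noted earlier in this chapter: they are not predictive outside the range of the data used to calibrate them. A toy example illustrates this. The Python sketch below treats a hypothetical smooth function as a stand-in for an expensive high-fidelity simulation and samples it only on the interval [0, 2]. It fits a straight line by least squares and compares the fitted model's error inside and outside the calibration range. Nothing here represents an actual high-explosive model.

```python
def expensive_model(x: float) -> float:
    """Hypothetical stand-in for a costly high-fidelity calculation."""
    return 1.0 + 0.5 * x + 0.05 * x * x

def fit_line(xs: list[float], ys: list[float]) -> tuple[float, float]:
    """Ordinary least-squares fit y ~ a + b x; returns (a, b)."""
    n = len(xs)
    mx, my = sum(xs) / n, sum(ys) / n
    sxx = sum((x - mx) ** 2 for x in xs)
    sxy = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    b = sxy / sxx
    return my - b * mx, b

# Calibrate the engineering model on a narrow range of "simulation" data.
xs = [0.0, 0.5, 1.0, 1.5, 2.0]
a, b = fit_line(xs, [expensive_model(x) for x in xs])

def surrogate(x: float) -> float:
    """Calibrated engineering model: cheap to evaluate."""
    return a + b * x

interp_err = abs(surrogate(1.0) - expensive_model(1.0))   # inside the data
extrap_err = abs(surrogate(6.0) - expensive_model(6.0))   # far outside it
print(f"fit: y = {a:.3f} + {b:.3f} x")
print(f"error inside range {interp_err:.3f}, outside range {extrap_err:.3f}")
```

The fitted line is accurate to about 0.025 at x = 1 but misses by more than 1.2 at x = 6. Excursions far from the calibrated regime are exactly where such models cannot be trusted without new data or higher-fidelity computation.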

Today, when a manufactured lot of high explosive is delivered to the nuclear weapons complex, it undergoes extensive testing to confirm that it meets the required specifications. Once an acceptable formulation is found, significant work is required to ensure that it can be manufactured reliably. HPC has been essential in this qualification process.

The overall conclusion concerns even the initial phase of nuclear weapon operation, the detonation of solid high explosive. For this phase, there is still no predictive understanding of detonation complete enough to specify the material properties of the explosive (crystallite volume fraction, binder composition, and so on) needed for the ab initio design of a high explosive. Such a design would require a predictable detonation propagation speed that satisfies the very tight timing requirements associated with initiating a weapon. As it stands today, predictive simulation of high explosives is not feasible even with exascale computing capabilities. This remains an area of active research. As discussed below, the situation is similar for the other stages of nuclear weapon operation.

Dynamic Response of Materials Under Extreme Conditions

The second phase of the energy amplification process is the implosion of the fissile material by the high-explosive charge. As the plutonium shell is compressed, knowledge of how various thermodynamic properties, such as density and internal energy, vary with pressure and temperature is essential. This information is provided in the equation of state. While the equation of state has been determined analytically for some simple materials (e.g., gases at low to moderate pressures), for more complex materials, experiments and computation are combined to provide tabular results.
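Because a tabular equation of state is queried at every cell and every time step, codes need an efficient lookup. The Python sketch below shows the basic idea: bilinear interpolation of pressure on a rectangular density-temperature grid. To keep it self-contained and checkable, the hypothetical table is filled from an ideal-gas law p = ρRT, with an assumed specific gas constant R, rather than from real data. Production tables for metals are far more complex, often use logarithmic spacing, and must handle phase boundaries.

```python
from bisect import bisect_right

R_SPECIFIC = 287.0  # J/(kg K), assumed gas constant used to fill the toy table

def build_table(rhos, temps):
    """Pressure table p[i][j] at (rhos[i], temps[j]) from the ideal-gas law."""
    return [[rho * R_SPECIFIC * t for t in temps] for rho in rhos]

def _bracket(grid, value):
    """Index i with grid[i] <= value <= grid[i+1], clamped to the table so
    that values beyond the edges are linearly extrapolated."""
    i = bisect_right(grid, value) - 1
    return min(max(i, 0), len(grid) - 2)

def lookup_pressure(table, rhos, temps, rho, temp):
    """Bilinear interpolation of the tabulated pressure at (rho, temp)."""
    i, j = _bracket(rhos, rho), _bracket(temps, temp)
    fx = (rho - rhos[i]) / (rhos[i + 1] - rhos[i])
    fy = (temp - temps[j]) / (temps[j + 1] - temps[j])
    p00, p01 = table[i][j], table[i][j + 1]
    p10, p11 = table[i + 1][j], table[i + 1][j + 1]
    return ((1 - fx) * (1 - fy) * p00 + (1 - fx) * fy * p01
            + fx * (1 - fy) * p10 + fx * fy * p11)

rhos = [0.5, 1.0, 2.0, 4.0]          # kg/m^3
temps = [300.0, 600.0, 1200.0]       # K
table = build_table(rhos, temps)
print(lookup_pressure(table, rhos, temps, 1.5, 450.0))
```

Because the toy pressure is proportional to the product ρT, which bilinear interpolation reproduces exactly, the lookup recovers the analytic value. For a real material, the interpolation error depends on how finely the table resolves the curvature of the equation of state.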

Plutonium is a very complex metal. At ambient pressure, as the temperature is raised toward its melting point, it passes through six solid phases with different crystal structures.12 At the very high pressures achieved in nuclear explosions, the equation of state has mostly been determined through experiment. More recently, HPC has been used to explore the equation of state at pressures and temperatures that can be achieved only in an underground nuclear explosion. Such calculations require the approximate quantum mechanical solution of the many-body problem of electronic structure under high pressure and temperature. For metals such as plutonium, this is particularly challenging because plutonium has 94 electrons, with significant correlations among the electrons occupying the various orbital shells. These correlations are partly responsible for its rich variety of crystal structures.

The equation of state describes thermodynamic properties at equilibrium. Under conditions of rapid implosion, it is also necessary to consider the dynamic material strength of a metal, which describes irreversible processes such as permanent deformation under applied stress. The physical basis for the strength and failure of metals has been understood for some time. It is the motion of imperfections (dislocations and so on) in the crystal lattices of the crystallites that make up a metal. These imperfections propagate through the lattice under applied stress on length scales of microns. As in the case of high explosives, the dynamics have a multiscale character, and translating this basic physics into a model that can be applied at macroscopic scales has proven challenging. HPC has been applied productively here as well, and progress has been made in improving what have in the past been largely calibrated models of such phenomena. However, a completely satisfactory model that can be used efficiently in design calculations is not yet available.13

A natural question to ask is whether it is necessary to know the details of the response of the plutonium under the high pressures of implosion with such accuracy. Again, because of the strong amplification of energy inherent in the nuclear explosion process, it turns out that the results are quite sensitive to both the equilibrium and dynamic properties of the plutonium. In particular, the rate of fissioning that governs the output of the weapon is very sensitive to these properties, and so accurate knowledge is essential to predict reliably the performance of the weapon.
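A toy calculation shows why small differences in material response matter so much. A chain reaction in a supercritical system grows approximately exponentially. If a quantity grows as N(t) = N₀e^{αt}, a fractional change δ in the growth rate α changes the final value by a factor e^{δαt}. The sensitivity is therefore magnified by the number of e-foldings αt. The Python sketch below evaluates this for a hypothetical 1 percent change in growth rate over several assumed numbers of e-foldings. It is a generic illustration of exponential amplification, not a model of any weapon.

```python
import math

def output_ratio(efolds: float, rate_change: float) -> float:
    """Ratio N_perturbed / N_nominal for exponential growth N0*exp(alpha*t)
    when alpha is changed by the fraction `rate_change` and the number of
    e-foldings alpha*t is `efolds`."""
    return math.exp(rate_change * efolds)

for efolds in (10, 30, 50):                 # assumed, illustrative values
    r = output_ratio(efolds, 0.01)          # hypothetical 1 percent change in rate
    print(f"{efolds} e-foldings: output changes by {100 * (r - 1):.0f}%")
```

In this toy model, a 1 percent change in growth rate becomes an 11, 35, or 65 percent change in the final value after 10, 30, or 50 e-foldings, respectively.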

Hydrodynamics

The ability to simulate hydrodynamics is essential to the understanding and prediction of nuclear weapon operation. By hydrodynamics, the committee means the transport of mass, momentum, energy, and all component species in the presence of internal and external stresses. The equations of hydrodynamics, properly modified for the operative

___________________

12 S. Hecker, 2000, “Plutonium and Its Alloys: From Atoms to Microstructure,” Los Alamos Science—Challenges in Plutonium Sciences 26.

13 Ibid.

physics, are used to model the detonation of the explosive, the implosion and explosion of the primary, and the subsequent dynamics of the secondary. The flow in all these cases is highly compressible, meaning that the pressure forces experienced by the various media produce significant density changes. For example, as the primary implodes, the resulting flows reach velocities well in excess of the local speed of sound in the material. This is known as the hypervelocity regime of hydrodynamics, characterized by the formation of strong shock waves. Resolving these waves with modern numerical codes requires tracking a large number of degrees of freedom, because the fluid properties vary rapidly across very narrow and evolving spatial regions. It is not practical to simulate these narrow regions directly. Instead, modelers use various types of regularization to compute the large-scale motions while ignoring the smaller scales within the rapid transition regions. Flows in this high-velocity regime are also prone to developing turbulence, in which the flow becomes chaotic. Again, it is currently not practical to resolve such small scales numerically, so they too are modeled, often with significant calibration of the models against experiments and past underground tests. Although today's highly resolved calculations track billions of degrees of freedom in simulating hydrodynamics, it has long been appreciated that a deeper understanding of such flows requires resolution attainable only with exascale capabilities and beyond, and that such computational capability is essential to developing models that are less reliant on phenomenological calibration.
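Artificial viscosity is one classic example of the kind of shock regularization described above; the report does not say which schemes the laboratories use, so this is offered only as an illustration. The sketch below assumes a one-dimensional staggered grid (velocities at nodes, density and sound speed at cell centers) and computes a von Neumann–Richtmyer quadratic term plus a linear term. The resulting pressure-like quantity q is nonzero only in cells being compressed, which spreads a shock over a few cells instead of requiring its true, far thinner width to be resolved. The function name and coefficient values are illustrative assumptions.

```python
def artificial_viscosity(rho, u, c_s, c_quad=2.0, c_lin=0.25):
    """Cell-centered artificial viscosity on a 1D staggered grid.

    rho    -- cell densities (length n)
    u      -- node velocities (length n + 1)
    c_s    -- cell sound speeds (length n)
    c_quad -- quadratic (von Neumann-Richtmyer) coefficient; illustrative value
    c_lin  -- linear coefficient; illustrative value
    """
    if len(u) != len(rho) + 1 or len(c_s) != len(rho):
        raise ValueError("expected n cells and n + 1 nodes")
    q = []
    for i, (rho_i, cs_i) in enumerate(zip(rho, c_s)):
        du = u[i + 1] - u[i]  # velocity jump across cell i
        if du < 0.0:
            # Cell is compressing: add a pressure-like term that grows with du^2.
            q.append(c_quad * rho_i * du * du + c_lin * rho_i * cs_i * abs(du))
        else:
            # Expanding or static cell: no artificial viscosity.
            q.append(0.0)
    return q


# A velocity drop from 1 to 0 across the second cell mimics an incoming shock.
print(artificial_viscosity([1.0] * 4, [1.0, 1.0, 0.0, 0.0, 0.0], [1.0] * 4))
# prints [0.0, 2.25, 0.0, 0.0]
```

In a full hydrodynamics code, q would be added to the material pressure in the momentum and energy equations each time step; only the compressing cell receives it here.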

Neutron and Radiation Transport

Simulation of both neutron and X-ray transport is essential to modeling both fission and fusion processes. Transport is typically among the most computationally expensive parts of simulating weapon operation, simply because of the number of degrees of freedom involved. The density, momentum, and energy of the imploding material are tracked at each spatial point, as discussed above for hydrodynamics. In addition, it is necessary to track at each point the neutron angular distribution (two angles) and energy spectrum (one energy variable). These three additional independent variables at each spatial position mean that the computation must track six dimensions plus time, which multiplies the operation count by a large factor. Such calculations also require inputs to the transport equation, such as the neutron cross sections for fission, absorption, and scattering, and these must be determined through theory or experiment.14
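To make the growth in degrees of freedom concrete, the sketch below counts the unknowns in a deterministic discrete-ordinates (S_N) representation of the angular flux, in which the two angular variables are replaced by a fixed set of directions and the energy variable by a set of energy groups. The resolutions (200³ spatial cells, 128 directions, 64 energy groups) and the 10 hydrodynamic variables per cell are hypothetical round numbers, not laboratory figures, and the estimate counts a single copy of each array in 8-byte double precision.

```python
BYTES_PER_VALUE = 8  # double precision


def transport_unknowns(n_cells, n_directions, n_groups):
    """One angular-flux value per (spatial cell, direction, energy group)."""
    return n_cells * n_directions * n_groups


def hydro_unknowns(n_cells, n_vars=10):
    """Hydrodynamic state per cell; n_vars = 10 is an assumed round number."""
    return n_cells * n_vars


def gigabytes(n_values):
    """Storage for n_values doubles, in decimal gigabytes."""
    return n_values * BYTES_PER_VALUE / 1e9


n_cells = 200 ** 3  # hypothetical 3D mesh
flux = transport_unknowns(n_cells, n_directions=128, n_groups=64)
hydro = hydro_unknowns(n_cells)
print(f"angular flux: {flux:.3e} values, {gigabytes(flux):.0f} GB")
print(f"hydro state:  {hydro:.3e} values, {gigabytes(hydro):.2f} GB")
print(f"ratio: {flux / hydro:.0f}x")
```

In this idealized count, one copy of the angular flux is roughly 800 times larger than the hydrodynamic state. Real codes differ in what they store and in how many copies they keep, so the ratio is only indicative.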

___________________

14 A. Sood, R.A. Forster, B.J. Archer, and R.C. Little, 2021, “Neutronics Calculation Advances at Los Alamos: Manhattan Project to Monte Carlo,” Nuclear Technology 207(Sup 1):S100–S133, https://doi.org/10.1080/00295450.2021.1956255.

A similar requirement arises for the secondary. The fluence and energy of X rays generated by the primary as it explodes are large enough to implode the secondary. Simulating the propagation and evolution of these X-ray photons requires the application of the equations of radiation hydrodynamics. The challenges here are similar in complexity to those encountered in neutron transport, although the details of the governing equations are quite different. Here, the relevant material properties are the opacities of materials as a function of X-ray energy, and these must be measured or computed using calculations of atomic transitions. Again, such transport calculations are significantly more computationally expensive than those associated with basic hydrodynamics.15

Quantification of Margins and Uncertainties

The National Nuclear Security Administration (NNSA) laboratories use quantification of margins and uncertainties (QMU) as a means of describing the overall reliability and robustness of weapons systems. As discussed above, the operation of a weapon proceeds in stages. Each stage is meant to create a set of environments that then make possible the next stage of operation. Detonation of the high explosive must create a compressive environment leading to the fission of the primary. The output of the primary must provide sufficient energy to drive the secondary. To ensure that the required conditions occur in a robust way, weapon designers engineer a certain amount of margin in the design so that even if uncertainty in the required processes leads to some degradation of the required environment, conditions are still sufficient to drive the next stage. To confirm that sufficient margins exist, the maximum and minimum of the operating ranges for the various stages of operation must be established. If there is insufficient margin, the weapon system may not behave in a predictable way, implying that there is a region of parameter space, known as a performance cliff, in which the next stage of operation cannot be achieved or becomes unreliable.

Assessments of where in parameter space such cliffs are located are by nature uncertain, and this uncertainty must also be estimated. The ratio of the assessed margin to uncertainty is a confidence factor, and the research done to determine margins and uncertainties is used to create an evidence file that is reviewed whenever the health of the stockpile is assessed. Margins and uncertainties can be assessed for all aspects of weapon function, and such assessments are performed not only for the nuclear explosive package (NEP) containing the primary and secondary, but also for all the supporting nonnuclear components that must function reliably and precisely. QMU is used today as a basis for evaluating all aspects of what is known as the stockpile to target sequence. Margins (and uncertainties) are not static. Components of the stockpile have undergone

___________________

15 D. Mihalas and B. Weibel-Mihalas, 1984, Foundations of Radiation Hydrodynamics, New York: Oxford University Press.

refurbishment via LEPs. The materials comprising the stockpile age. To ensure that the weapons will operate as designed, ongoing assessments of margins and uncertainties are required. All recent assessments indicate that current stockpile systems have high confidence factors, but, in the absence of underground testing, it is important to continually assess the stockpile using QMU.

To evaluate margins and uncertainties, ensemble analyses of weapon function, in which key parameters are varied, must be performed. Some of the required data are available from past nuclear tests, but those tests did not always comprehensively cover the variations of key parameters. Computation today plays an essential role in further assessing margins and uncertainties. It could be argued that such analyses require only capacity computing to generate the needed ensemble of calculations. The challenge, however, is that the regions of parameter space in which the various models of physical processes provide accurate results are not completely known. Underground tests and other experiments explore some small regions of this parameter space, but the regions of validity of the various models (i.e., the failure boundaries) are presently not well understood. Exascale computing and beyond, combined with future basic experiments, is required to further explore this space and to provide improved assessments of margins and, even more importantly, of uncertainties.16
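The margin-to-uncertainty ratio (the confidence factor) can be illustrated with a deliberately simple ensemble. The sketch below assumes a hypothetical stage-output function standing in for an expensive simulation, samples two uncertain inputs, takes the margin M as the ensemble mean minus an assumed threshold (the edge of a performance cliff), and takes the uncertainty U as an assumed coverage factor k times the ensemble standard deviation. This is one simplified reading of QMU, not the laboratories' methodology; in particular, it presumes the model is valid everywhere it is sampled, which is exactly the assumption the preceding paragraph calls into question.

```python
import random
import statistics


def toy_stage_output(drive, efficiency):
    """Hypothetical stand-in for an expensive simulation of one stage."""
    return drive * efficiency


def confidence_factor(outputs, threshold, k=2.0):
    """Return (margin M, uncertainty U, M/U) for an ensemble of stage outputs.

    threshold -- assumed minimum output needed to drive the next stage
    k         -- assumed coverage factor applied to the ensemble spread
    """
    margin = statistics.fmean(outputs) - threshold
    uncertainty = k * statistics.stdev(outputs)
    return margin, uncertainty, margin / uncertainty


rng = random.Random(0)  # fixed seed so the ensemble is reproducible
ensemble = [
    toy_stage_output(rng.gauss(10.0, 0.5), rng.uniform(0.8, 1.0))
    for _ in range(1000)
]
M, U, cf = confidence_factor(ensemble, threshold=6.0)
print(f"M = {M:.2f}, U = {U:.2f}, M/U = {cf:.2f}")
```

With these made-up inputs, M/U comes out at roughly 2, meaning the margin is about twice the assessed uncertainty. Raising the threshold toward the ensemble mean drives the ratio toward zero, which is the toy analogue of operating close to a cliff.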

Engineering Challenges

The committee has up to now focused on the simulation of the NEP, indicating the computational challenges. There are also computational challenges associated with the nonnuclear components. These range, for example, from electronic components like the arming, fuzing, and firing system that controls the firing of the high explosives, to structural components that ensure the NEP survives the rigors of delivery. These must be qualified in normal delivery environments, and their response must also be characterized in abnormal environments such as those arising in case of an accident. While it might be assumed that modeling and simulation for these components should already be well in hand, there remain challenges for which exascale computing will aid future qualification.

First, the weapons system, be it a warhead or bomb, is subject to mechanical and thermal stresses during delivery. This is particularly true for reentry systems, as discussed below. Flight testing has been, and is still, used to confirm that the weapons systems can survive the reentry environment and accurately deliver the NEP to a specified target. However, such testing is expensive and so is not performed often. Simulation of the delivery environment is then required along with the use of QMU to provide additional

___________________

16 National Research Council, 2009, Evaluation of Quantification of Margins and Uncertainties Methodology for Assessing and Certifying the Reliability of the Nuclear Stockpile, Washington, DC: The National Academies Press, https://doi.org/10.17226/12531.

input to qualification. Simulation of reentry environments remains an exascale challenge and will most likely require capability beyond exascale, as will be discussed later in this chapter. Second, a bomb or warhead is geometrically quite complex, with many internal parts that provide mechanical and thermal stability for the NEP as the weapon encounters the stresses of the delivery environment. Simulation is required to understand the mechanical and thermal response. In addition, the dissipation of mechanical energy by joints, bolts, and so on undergoing vibration is today still largely modeled phenomenologically. Third, the weapon must fail safely in the event of an accident such as a fire or collision. Here, testing might confirm safety in only a few specific scenarios, so simulation is required to cover a range of possible thermal and mechanical loadings. The results of such simulations are often surprising, revealing unexpected failure scenarios. By simulating a wide range of accidents, analysts can then use QMU to quantify the likelihood that the weapon will fail gracefully. Last, perhaps the most challenging requirement is to quantify the survivability of a weapon in a hostile nuclear encounter. This requires assessing mechanical, thermal, and electrical insults to the weapon. Computation is essential here, as it is very difficult to fully replicate this environment experimentally.

COMPUTATIONAL REQUIREMENTS AND WORKLOAD FOR ASC APPLICATIONS

All three nuclear design laboratories, Los Alamos National Laboratory (LANL), Lawrence Livermore National Laboratory (LLNL), and Sandia National Laboratories (SNL), provided the committee with summaries of the computational requirements for their applications. It is important to note that the results provided below represent only a snapshot of typical workloads. For example, for hydrodynamics calculations, the requirements depend on the number of materials in each computational cell, the level of refinement, the level of physics being described, and so on. For radiation transport using deterministic methods, the computational requirements depend on the numbers of energy groups, angles, and materials, on material states, and so forth. LANL applications are characterized in Table 1-1.

As can be seen, the requirements per cell can vary significantly and will depend on the level of fidelity desired. The data access patterns also vary. Regular access patterns make it possible to benefit from memory caching strategies, whereas irregular patterns are harder to optimize. As will be seen in Chapter 2, efficient memory access can lead to performance enhancements. The corresponding characterization for LLNL is displayed in Table 1-2.

TABLE 1-1 Characteristics of Different Hydrodynamics and Transport Applications for Los Alamos National Laboratory

| Typical Characteristics | Hydrodynamics (Hydro 1) | Hydrodynamics (Hydro 2) | Deterministic Transport | Monte Carlo Transport |
|---|---|---|---|---|
| Memory needs | 100–400 KB/cell | 1–2 KB/side (sides are a better metric of complexity) | 128 KB/zone (highly dependent on number of groups and angles) | 6–25 KB/zone |
| Access pattern | Irregular, with low to moderate spatial and temporal locality | Irregular, with low spatial and temporal locality | Regular, with high spatial and moderate temporal locality | Irregular, with low spatial and temporal locality |
| Communication pattern | Surface communication, point-to-point, RMA for AMR and load balancing, and some collectives | Surface communication, point-to-point, some collectives | Point-to-point, with some global reductions | Allreduce, point-to-point, some surface communication |
| MFLOPS | 0.033/cell/cycle | 0.0034/side/cycle | 0.7/zone/cycle | 0.083/zone/cycle; 0.07671/particle/cycle |

NOTE: AMR, adaptive mesh refinement; MFLOPS, million floating-point operations per second; RMA, remote memory access.

SOURCE: From summary of computational requirements provided to the committee by LANL, LLNL, and SNL ASC teams.
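The per-cell entries in Table 1-1 become whole-problem costs once multiplied by a mesh size. The sketch below assumes a hypothetical mesh of 10⁸ zones, reads the table's MFLOPS entries as millions of floating-point operations per zone per cycle (the units attached to each entry), uses decimal kilobytes, and takes the low end of the Hydro 1 memory range. The function and variable names are illustrative.

```python
def per_cycle_cost(n_zones, mflop_per_zone_cycle, kb_per_zone):
    """Return (floating-point operations per cycle, memory footprint in TB)."""
    flop_per_cycle = n_zones * mflop_per_zone_cycle * 1e6
    memory_tb = n_zones * kb_per_zone * 1e3 / 1e12  # decimal KB -> TB
    return flop_per_cycle, memory_tb


N_ZONES = 10 ** 8  # hypothetical problem size, not a laboratory figure

# (MFLOP per zone per cycle, KB per zone), taken from Table 1-1.
columns = {
    "Hydro 1 (low end of memory range)": (0.033, 100),
    "Deterministic transport": (0.7, 128),
}

for name, (mflop, kb) in columns.items():
    flop, tb = per_cycle_cost(N_ZONES, mflop, kb)
    print(f"{name}: {flop:.1e} flop/cycle, {tb:.1f} TB")
```

At this size, one cycle of deterministic transport is about 7 × 10¹³ operations against roughly 12.8 TB of data. Both totals scale linearly with the number of zones, and the operation count also scales with the number of cycles in a run.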

TABLE 1-2 Characteristics of Different Hydrodynamics and Transport Applications for Lawrence Livermore National Laboratory (LLNL)

| Typical Characteristics | Hydrodynamics | Deterministic Transport | Monte Carlo Transport | Diffusion |
|---|---|---|---|---|
| Memory needs | 0.1–1 KB/zone | 40–240 KB/zone | 3–30 KB/zone | 0.1–1 KB/zone |
| Access pattern | Regular, with modest spatial and temporal locality | Regular, low spatial but high temporal locality | Irregular, low spatial and temporal locality | Regular, good spatial and temporal locality |
| Communication pattern | Point-to-point, surface communication | Point-to-point, some volume | Point-to-point, some volume | Collective communications and point-to-point |
| MFLOPS/zone/cycle | 0.02–0.1 (10× for iterative schemes) | 2–12 | 0.03–0.07 | 0.1–3 |
| I/O (startup data) | 20–160 MB (EOS)a | 0.3–12 MB (nuclear) | 100–300 MB (nuclear) | 0.1–1 KB/zone |

NOTE: EOS, equation of state; I/O, input/output; MFLOPS, million floating-point operations per second.

SOURCE: From briefing to the committee by LLNL.

Again, memory requirements vary with the nature of the computation, and LLNL and LANL use different approaches, for example, in their implementations of hydrodynamics or radiation transport. But a common theme for both laboratories is that the need to simulate radiation transport significantly increases memory requirements. It is also apparent that the floating-point operation counts per cell can be quite low. How fast such computations run therefore depends on how well the memory subsystem can deliver data to the computational units, as discussed further in Chapter 2.
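One standard way to reason about how operation counts and memory delivery interact is the roofline model, named here as a swapped-in illustration rather than the laboratories' own analysis: a kernel's attainable rate is the lesser of the machine's peak compute rate and its memory bandwidth multiplied by the kernel's arithmetic intensity (operations per byte moved). The node parameters below (10 TFLOP/s peak, 1 TB/s bandwidth) are hypothetical.

```python
def roofline_gflops(peak_gflops, bandwidth_gb_per_s, flop_per_byte):
    """Attainable GFLOP/s: the lower of the compute roof and the memory roof."""
    memory_roof = bandwidth_gb_per_s * flop_per_byte
    return min(peak_gflops, memory_roof)


PEAK = 10_000.0       # hypothetical node peak, GFLOP/s
BANDWIDTH = 1_000.0   # hypothetical memory bandwidth, GB/s

for intensity in (0.1, 1.0, 10.0, 100.0):  # flop per byte moved
    rate = roofline_gflops(PEAK, BANDWIDTH, intensity)
    print(f"intensity {intensity:>5}: {rate:8.0f} GFLOP/s ({rate / PEAK:.0%} of peak)")
```

As a rough, assumption-laden connection to Table 1-1: if each cycle touched all of a cell's data exactly once, the Hydro 1 entries (0.033 MFLOP against 100–400 KB per cell) would imply roughly 0.08–0.33 flop per byte, which sits on the bandwidth-limited slope of this hypothetical node at a few percent of peak.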

Each laboratory provided problem sizes, memory requirements, and wall-clock runtimes for both routine calculations and larger hero calculations, which are executed less often but are needed occasionally for key stockpile decisions. Typical simulation characteristics representative of both LANL and LLNL are shown in Table 1-3.

Routine 1D, 2D, and even small 3D calculations are performed on capacity platforms (CTS-1). Larger 3D calculations require petascale platforms such as Trinity or Sierra. Hero calculations for LANL and LLNL are characterized in Table 1-4.

TABLE 1-3 Runtime and Resource Characteristics of “Typical” Simulations

| Simulation Type | Number of Nodes | Memory Footprint | Wall-Clock Time |
|---|---|---|---|
| Routine 1D | 1 (CTS-1) | All node memory | Minutes–hours |
| Routine 2D | 4–32 (CTS-1) | 128–2,048 GB | 1 hour–1 week |
| Routine 3D (small) | 10–100 (CTS-1) | 1.3–12.8 TB | 1 day–2 weeks |
| Semi-routine 3D (larger) | 1,000–2,000 (Trinity); 100–1,000 (Sierra) | 64–256 TB | 10–100 hours |

NOTES: Memory requirements are calculated as 64 GB × number of nodes. Note also that the relationship among simulation type, resolution, physics fidelity, and memory footprint is complicated, so these figures are not comparable across codes or even across applications of the same code to different simulation types. 1D, one-dimensional; 2D, two-dimensional; 3D, three-dimensional.

SOURCE: From summary of computational requirements provided to the committee by LANL, LLNL, and SNL ASC teams.

TABLE 1-4 Observed Runtime and Resource Characteristics of Prior Los Alamos National Laboratory (LANL) and Lawrence Livermore National Laboratory (LLNL) Hero Calculations

| Simulation Type | Number of Nodes | Memory Footprint | Wall-Clock Time |
|---|---|---|---|
| LANL Class A simulation | 2,400 (Trinity) | ~300–400 TB | 6 months |
| LANL Class B simulation | 4,990 (Trinity) | ~600 TB | 3–4 months |
| LLNL Class C simulation | 288 (CTS-1, ~25% of the machine) | ~20 TB | 1 month |
| LLNL Class D simulation | 3,250 (Sierra, ~75%) | 104 TB | 5.8 days |
| LLNL Class E simulation | 512 (Sierra, 12%) | 32.8 TB | 2 months |

NOTES: Class A refers to a moderate-resolution and moderate-fidelity three-dimensional (3D) configuration. Class B refers to a high-resolution and moderate-fidelity 3D configuration for a different physical system. Class C refers to a moderate-resolution/high-fidelity 3D simulation; Class D to a high-resolution/moderate-fidelity 3D simulation; and Class E to a very-high-resolution/moderate-fidelity 3D simulation.

SOURCE: From summary of computational requirements provided to the committee by LANL, LLNL, and SNL ASC teams.

Calculations such as these are performed relatively rarely, depending on priorities and their potential contribution to programmatic decisions. They are disruptive in that they require the use of a significant portion of the computational resource. One noteworthy aspect is that memory is at a premium on all these platforms, again reflecting that while floating-point capability has increased to the petascale and soon the exascale range, memory sizes have not kept pace. Even in the larger LANL hero calculations, approximations were apparently made that degrade the fidelity below the level experts deem desirable. Running these calculations at the desired fidelity would require platforms with capabilities beyond exascale: such runs would exceed the available memory on an exascale system and require months or even years of wall-clock time. Memory footprints for such “post-exascale” computations are in the range of 5–10 petabytes, again reflecting the mismatch between computational capability on the one hand and memory capacity and throughput on the other. All laboratories concluded that future hero simulations of this kind will be limited by the difficulty of strong scaling calculations with large memory footprints so that they meet practical time-to-solution requirements.
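The strong-scaling limit described above can be illustrated with Amdahl's law, a standard idealization (not the laboratories' own model) for a fixed-size problem: if a fraction p of the work parallelizes perfectly and the rest does not, the speedup on N workers is 1 / ((1 - p) + p / N). The sketch assumes a hypothetical p = 0.999 and ignores communication costs, which in practice make strong scaling worse.

```python
def amdahl_speedup(parallel_fraction, n_workers):
    """Ideal fixed-problem-size speedup on n_workers (Amdahl's law)."""
    serial_fraction = 1.0 - parallel_fraction
    return 1.0 / (serial_fraction + parallel_fraction / n_workers)


P = 0.999  # hypothetical parallel fraction
for n in (1_000, 10_000, 100_000):
    s = amdahl_speedup(P, n)
    print(f"{n:>7} workers: speedup {s:6.0f}, efficiency {s / n:.1%}")
print(f"upper bound as workers -> infinity: {1.0 / (1.0 - P):.0f}")
```

Even with 99.9 percent of the work parallel, the speedup can never exceed 1,000, and efficiency collapses once the worker count passes a few thousand. A calculation that already takes months therefore cannot simply be spread over a larger machine to finish in days.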

FUTURE CHALLENGES WILL REQUIRE COMPUTATION BEYOND EXASCALE

Having provided some context as to why HPC is crucial to existing efforts in stockpile stewardship, this section provides examples of some future challenges that will require computational capabilities at exascale and beyond.

Subcritical Experiments

Prior to 1992, the validation of various nuclear designs as well as the furtherance of understanding of nuclear weapon science was achieved through underground nuclear testing. In the era of stockpile stewardship, underground testing is no longer possible, and the emphasis has been on simulation, as well as a limited set of experiments. Experiments in which plutonium is driven by high explosives are allowed under the Comprehensive Test Ban Treaty,17 but the resulting assembly cannot achieve criticality. Such experiments today must be performed at the Nevada National Security Site (NNSS, formerly the Nevada Test Site). These subcritical experiments have shown great value in resolving various stockpile issues and have also provided important validation data.

LANL and LLNL have recently proposed experiments in which scaled primaries are imploded. Because the primary is scaled to a fraction of its original size, there is no possibility of achieving criticality. The value of such experiments is that, using modern

___________________

17 The United States has not ratified the Comprehensive Test Ban Treaty but currently does observe its restrictions.

diagnostics such as highly penetrating radiography as well as some innovative measurements of neutron output, it is possible to investigate important physical aspects of nuclear performance. These experiments will provide important validation data. However, even modern diagnostics provide only a limited characterization of what happens in an experiment, and realizing the full value of these experiments will require high-resolution simulations that in turn will require significant computational capability. It may seem paradoxical that higher resolution would be required for an implosion in which criticality is not achieved. The reason is that, to make predictions about a system at full scale and get maximum utility from the experimental data, the simulations must resolve, and be sensitive to, a wide range of fission modes that are not important after a system becomes critical. These modes are very sensitive to the details of how the assembly implodes, so enhanced resolution will be required. The facility for these subcritical experiments is now under construction at the U1A site at the NNSS. When complete and operational, this facility will offer future weapon designers one of their few opportunities to develop their skills. But, absent continued improvement in simulation capability, it will not be possible to realize the full value of these future subcritical experiments.18

Aging of Plutonium

At present, Los Alamos provides the only production capability for the plutonium shells that are used in modern primaries. Previously, the production of these shells was performed at the Rocky Flats Plant in Colorado, which is now closed. Additional production facilities are planned at the NNSA Savannah River site, but even after the various existing or planned production facilities are at full capability, it will be important to understand the impact of aging of plutonium on the existing stockpile primaries, as these new facilities will take time to fully impact the stockpile.

The principal decay mode of the plutonium isotope used in primaries, ²³⁹Pu, is alpha decay, which emits an alpha particle (a helium nucleus) and leaves a uranium (²³⁵U) nucleus. ²³⁹Pu has a long half-life of 24,100 years, so transmutation of the metal via radioactive decay is not an immediate concern. However, the decay products are quite energetic and so can damage the surrounding crystal lattice of the metal. The damage ultimately manifests itself in the formation of helium bubbles in the lattice. The concern is that this damage will eventually produce voids that lead to swelling of the metal, something that does occur in other nuclear materials, and that these consequences of aging could change the mechanical properties of the plutonium and affect the implosion characteristics of a primary.19
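The arithmetic behind "not an immediate concern" is short. Using the 24,100-year half-life T quoted above, the fraction of ²³⁹Pu nuclei that decay in t years is 1 - 2^(-t/T). The sketch also estimates how many alpha decays (and hence helium atoms) a gram produces, using Avogadro's number and a molar mass of about 239.05 g/mol; treating the metal as pure ²³⁹Pu is a simplifying assumption.

```python
HALF_LIFE_YEARS = 24_100.0      # 239Pu half-life quoted in the text
AVOGADRO = 6.02214076e23        # atoms per mole (exact SI value)
MOLAR_MASS_PU239 = 239.05       # g/mol, approximate


def fraction_decayed(years, half_life=HALF_LIFE_YEARS):
    """Fraction of the original nuclei that have decayed after `years`."""
    return 1.0 - 2.0 ** (-years / half_life)


def helium_atoms_per_gram(years):
    """Alpha decays (one helium atom each) per gram, assuming pure 239Pu."""
    atoms_per_gram = AVOGADRO / MOLAR_MASS_PU239
    return atoms_per_gram * fraction_decayed(years)


for t in (1, 50):
    print(f"{t:>2} years: {fraction_decayed(t):.3%} decayed, "
          f"{helium_atoms_per_gram(t):.1e} He atoms per gram")
```

Only about 0.14 percent of the atoms decay in 50 years, yet that is still on the order of 10¹⁸ helium atoms per gram, each produced together with a recoiling uranium nucleus that displaces atoms in the lattice. This is why lattice damage and helium bubbles, rather than loss of fissile material, are the aging concern.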

___________________

18 S. Storar, 2021, “Shining a Bright Light on Plutonium,” Science and Technology Review, Lawrence Livermore National Laboratory, https://str.llnl.gov/content/pages/2021-04/pdf/04.21.3.pdf.

19 S. Hecker and J. Martz, 2000, “Aging of Plutonium and Its Alloys,” Los Alamos Science 26.

Careful analysis to date indicates, rather surprisingly, that this lattice damage does not appear to manifest itself in measurable changes in material properties, at least on time scales of tens of years. However, given the potential impact of aging on the existing stockpile, continued experiments are being planned. Given the material complexity of plutonium, supporting computations using ab initio approaches will most likely require exascale capability and beyond to assess how aging affects nuclear performance.

Reentry Flows

As discussed earlier, the NNSA laboratories must qualify the warheads deployed on the intercontinental ballistic missile (ICBM) leg of the strategic deterrent to survive the reentry environment. Qualification of the reentry vehicle and the reentry body is the responsibility of the Air Force and the Navy, respectively, but the design laboratories must understand this environment to assess the thermal and mechanical loadings on the NEP as well as on the supporting structures. Much of this assessment for the existing stockpile has been informed in the past by measurements performed during flight testing. But development of future systems or reentry trajectories will increasingly rely on simulation. Simulating these potentially new environments will require increased understanding of hypersonic flows, as well as of turbulent transport in such flows. Current work to address these issues will benefit from exascale capability, but given the complexity of this problem, capability beyond exascale will be required.

To give some idea of the challenge of surviving the reentry environment, a satellite in orbit at an altitude of 320 km possesses a specific kinetic energy of roughly 3 × 107 J/kg. Although reentry vehicles operate on suborbital trajectories, the specific energy of such a vehicle can approach that of an orbiting body. As a point of reference, carbon vaporizes at 6 × 107 J/kg, and for bodies descending from exoatmospheric altitudes, the rarefied atmosphere inhibits efficient diffusion of heat. As a result, the reentry process generates sufficient energy per unit mass to destroy the vehicle. The challenge then is to dissipate an energy of this magnitude without destroying the reentry vehicle. A conflicting requirement is that the warhead must have an aerodynamic shape to fly stably in the atmosphere. It turns out that a cone shape is not optimal for dissipating the heat of reentry, making the engineering problem more acute. As the reentry body enters the atmosphere, it undergoes significant mechanical and thermal loading, with decelerations on the order of 100–200 times the acceleration, owing to gravity and thermal loads on the order of 1/10 the initial reentry energy. The exact values are dependent on the velocity and angle of reentry. In current systems, the heat load is dealt with by using a sacrificial ablating layer at the tip of the reentry body. This changes the mass properties such as the center of pressure and center of mass, and so careful modeling is required

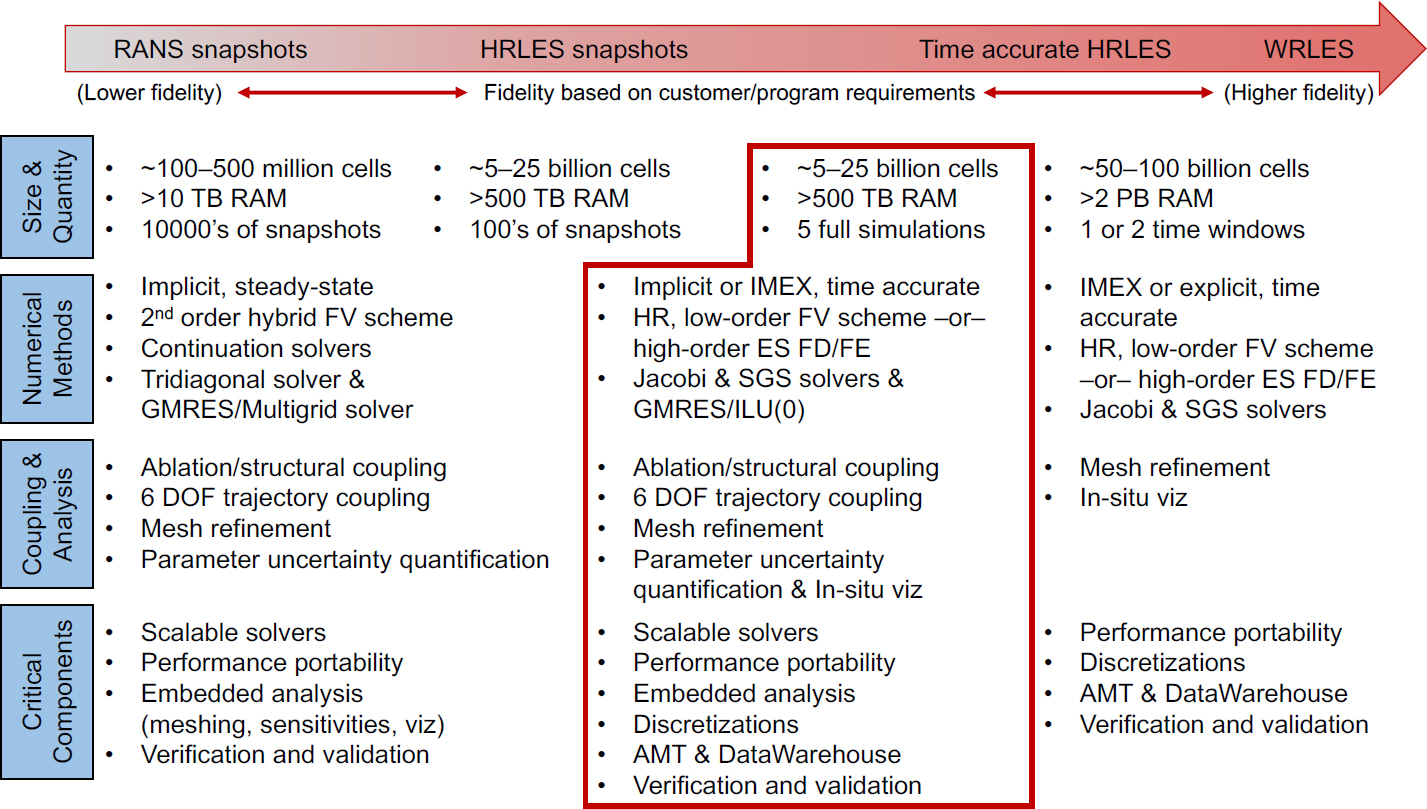

to ensure that the reentry system does not lose aerodynamic stability as it descends through the atmosphere. Last, as the reentry vehicle descends, it experiences different regimes of fluid motion and thus time-varying mechanical and thermal loading. Above an altitude of 125 km, the body descends at 5–6 km/sec and encounters free molecular flow. At an altitude of 75 km, the flow transitions to the continuum limit, where the body experiences its maximum inertial and thermal loads. At these atmospheric densities, the body experiences noise from the wake at the rear of the body. As the body descends further, the boundary layer transitions from laminar to turbulent flow. This regime is particularly stressful because the high level of mixing caused by the turbulence results in higher velocity and thermal gradients near the body wall. Qualitative understanding of the flow dynamics is essential, as the increased thermal loading can result in uneven ablation and loss of stability of the reentry body. New codes are currently being designed to simulate this environment with computational requirements that will exceed exascale capabilities.20 SNL has provided estimates of the computational requirements to develop a “virtual flight test capability” that would be used to study the performance of a reentry vehicle or body over a range of potential trajectories. The requirements at various levels of fidelity are shown in Figure 1-1.
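The figure of roughly 3 × 10⁷ J/kg for an orbiting body can be checked with circular-orbit mechanics: the orbital speed is v = sqrt(GM/r), so the specific kinetic energy is v²/2 = GM/(2r). The sketch uses standard values for Earth's gravitational parameter and mean radius and compares the result with the roughly 6 × 10⁷ J/kg needed to vaporize carbon, as quoted in the text.

```python
import math

GM_EARTH = 3.986004418e14  # m^3/s^2, Earth's standard gravitational parameter
R_EARTH = 6.371e6          # m, mean Earth radius
CARBON_VAPORIZATION = 6e7  # J/kg, reference value quoted in the text


def circular_orbit(altitude_m):
    """Return (orbital speed in m/s, specific kinetic energy in J/kg)."""
    r = R_EARTH + altitude_m
    v = math.sqrt(GM_EARTH / r)
    return v, 0.5 * v * v


v, e_kin = circular_orbit(320e3)
print(f"speed {v / 1e3:.2f} km/s, specific KE {e_kin:.2e} J/kg, "
      f"{e_kin / CARBON_VAPORIZATION:.0%} of carbon's vaporization energy")
```

The specific kinetic energy comes out near 3 × 10⁷ J/kg at about 7.7 km/s, roughly half of what it takes to vaporize carbon. The one-tenth thermal-load figure in the text would then correspond to about 3 × 10⁶ J/kg for a body with orbital-scale energy.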

The axis at the top of the figure refers to the level of fidelity for the simulation of the turbulent flow as the body reenters. The lowest fidelity (corresponding to Reynolds-averaged Navier-Stokes turbulence modeling) is computationally efficient but is known to be inaccurate in various key settings. In contrast, the use of more accurate large eddy simulation has memory requirements exceeding 2 petabytes. Not shown in this figure are the costs of performing a fully resolved direct numerical simulation of the transition to turbulence. SNL estimates that simulating 1 ms of time for transition on a full flight vehicle on a 50 exaflop machine would require approximately 3 days of computation.
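The SNL estimate translates into a total operation count by simple multiplication. The sketch assumes, optimistically, that the machine sustains its full 50 exaflop/s rate for the whole run, and that cost grows linearly with simulated time; both are simplifying assumptions rather than statements from SNL.

```python
SECONDS_PER_DAY = 86_400


def total_operations(rate_flop_per_s, wall_days):
    """Floating-point operations performed at a sustained rate over wall_days."""
    return rate_flop_per_s * wall_days * SECONDS_PER_DAY


ops_per_ms = total_operations(50e18, 3.0)  # SNL: 1 ms of transition, ~3 days at 50 EF
days_at_1_exaflop = ops_per_ms / 1e18 / SECONDS_PER_DAY
days_for_1_s_at_50_ef = 3.0 * 1000  # assumes cost scales linearly with simulated time

print(f"{ops_per_ms:.2e} operations per simulated millisecond")
print(f"{days_at_1_exaflop:.0f} days for the same millisecond at 1 exaflop/s")
print(f"{days_for_1_s_at_50_ef:.0f} days for one simulated second at 50 exaflop/s")
```

Under these assumptions, a single simulated millisecond is about 1.3 × 10²⁵ operations, or some 150 days on a machine sustaining 1 exaflop/s.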

HPC IN SUPPORT OF THE NNSA ENTERPRISE

In addition to studying weapons science, NNSA is also today effectively using HPC capabilities to support the entire weapons life cycle (known as the 6.x process), from concept through dismantlement. More recently, a particular need has arisen for HPC across the entire weapons complex to address and avoid issues in the current stockpile, including production, engineering, weapons surveillance, and assessment.

___________________

20 F.J. Regan, 1984, Re-Entry Vehicle Dynamics, New York: American Institute of Aeronautics and Astronautics.

NOTE: Acronyms are defined as follows: AMT, Analytics Middle Ter; DOF, degrees of freedom; ES, entropy stable; FD, finite difference; FE, finite element; FV, finite volume; GMRES, Generalized Minimal Residual method; HR, high resolution; HRLES, High Resolution Large-Eddy Simulations; ILU, incomplete lower-upper factorization; IMEX, implicit-explicit time integration; RANS, Reynolds-Averaged Navier-Stokes; SGS, Symmetric Gauss-Seidel; WRLES, Wall-Resolved Large-Eddy Simulations.

SOURCE: From summary of computational requirements provided to the committee by LANL, LLNL, and SNL ASC teams.

COMPUTING AND EXPERIMENT—POSITIVE SYMBIOSIS

Although the committee has up to now focused on computational requirements for stewardship of the future stockpile, it is also important to point out that there is a symbiotic connection between computation and experiment that is essential to future success. At its origin, stockpile stewardship had two primary branches—strengthening both the nonnuclear experimental capabilities and the computing capabilities of NNSA. Progress on the first of these is evident through the success of facilities such as the National Ignition Facility (NIF), the Dual Axis Radiographic Hydrodynamic Test Facility, the Z Machine, and the U1A facility. Progress on the second is evident via the increased understanding of historic underground nuclear tests and continuing support for LEPs for systems in the stockpile, such as the W87, W76, and the B61.

It might appear that these two branches are independent; they are not. Rather, they are complementary. HPC is and will be a critical tool in designing the high-value

experiments that are routinely conducted in these facilities and in analyzing the results. Without computing, these experimental facilities would be almost useless. Likewise, the results obtained from these experiments serve to highlight deficiencies in computational models, leading to advances in understanding, often at the extremes of temperature and pressure.

As these experimental facilities continue to advance in both diagnostic capabilities and control systems, the flood of data they produce offers new opportunities for computing. These include searching for patterns that would be opaque to human observers and using machine learning to help optimize complex experimental controls. As the resolution of these experimental capabilities continues to increase, it will be important to continue to support these efforts with increasingly capable computation.

GEOPOLITICAL CONSIDERATIONS

The present geopolitical landscape further reinforces the importance of HPC. As the committee writes this report, the world is experiencing the greatest changes in geopolitics since the end of the Cold War. There is a war of territorial aggression in Europe, where the threat of nuclear weapons is playing both a deterrent and a compellent role. The committee takes note of the development and deployment of new types of delivery systems by peer competitors, for example, the Avangard hypersonic system that Russia has deployed and intends to carry on the Sarmat heavy ICBM, as well as China's test of a Fractional Orbital Bombardment System. These developments are occurring in an environment where the number of extant nuclear arms control treaties is at its lowest level in many decades and the prospect of engaging in new treaty negotiations is poor.

While the United States has expressed a clear desire to reduce the role and salience of nuclear weapons in accordance with its commitments under Article VI of the Nonproliferation Treaty, the current geopolitical situation indicates that the challenges of stockpile stewardship will remain and will continue to stress the capabilities of the most advanced computers well beyond exascale computing. HPC capabilities must be responsive to new mission requirements, enabling rapid redesign, assessment, and deployment of weapons systems and delivery vehicles that meet those requirements. Finally, HPC is also required to understand adversarial capabilities. If foreign systems differ in fundamental ways from U.S. systems, high-fidelity simulation will be required to characterize their behavior, because simplified or lower-dimensional models, lacking test data to calibrate them, would not be applicable to such alternative designs. HPC will be required to assess these systems with enough confidence to avoid technical surprise.

SUMMARY OF FINDINGS

This chapter includes findings that are supported by the above discussion.

FINDING 1: The demands for advanced computing continue to grow and will exceed the capabilities of planned upgrades across the NNSA laboratory complex, even accounting for the exascale system scheduled for 2023.

FINDING 1.1: Future mission challenges, such as execution of integrated experiments, assessment of the effects of plutonium aging on the enduring stockpile, and facilitation of rapid design and development of new delivery modalities, will increase the importance of computation at and beyond the exascale level. Orders-of-magnitude improvements in application-level performance would allow for improved predictive capability, valuable exploration and iterative design processes, and improved confidence levels, all of which will remain infeasible as long as a single hero calculation takes weeks to months to execute on an exascale system.

FINDING 1.2: HPC has traditionally played an important role in support of weapons systems engineering. Some emerging challenges in this arena, such as qualifying future weapons systems for reentry environments, will require new approaches to mathematics, algorithms, software, and system design.

FINDING 1.3: Assessments of margins and uncertainties for current weapons systems will require additional computational capability beyond exascale, a problem exacerbated by the aging of the stockpile. Enhanced computational capability will also be required in assessing margins and uncertainties should there emerge requirements for new military capabilities.

FINDING 1.4: The rapidly evolving geopolitical situation reinforces the need for computing leadership as an important element of deterrence and motivates growth in future computing capabilities.