Addressing Inaccurate and Misleading Information About Biological Threats Through Scientific Collaboration and Communication in Southeast Asia

BUILDING A TRUSTED SCIENTIFIC NETWORK: THE STRATEGY

Mis- and disinformation about outbreaks, epidemics, and pandemics are age-old problems that have been exacerbated in modern times by the rapid production and flow of information through various channels. False claims based on inaccurate and misleading information during infectious disease events present challenges to effective outbreak control, including potential distrust of the public health response among affected populations and questions among security experts about the true origins of outbreaks. Some false claims may be disproven through sound scientific analysis, suggesting a role for scientists in providing evidence-based, scientifically defensible information that may discredit or refute such claims. The National Academies conducted a study to engage scientists in Southeast Asia in working across scientific disciplines and sectors to identify and address inaccuracies that may fuel mis- and disinformation. Although no evidence shows that Southeast Asia is more likely than any other geographic region to generate or receive misinformation, the region has witnessed the emergence of several infectious diseases that have spilled over into the human population, as well as growing regional efforts to promote responsibility in the life sciences. Although this study has a regional focus, its outcomes may be useful to scientists in many other parts of the world, given the ubiquitous nature of mis- and disinformation.

The main purpose of the study is to build capacity within Southeast Asia to counter, collaboratively and effectively, scientific inaccuracies about biological threats that lead to or perpetuate mis- or disinformation. At the request of the sponsor, this report places particular emphasis on (1) creating working definitions of mis- and disinformation to provide a deeper understanding of how such claims are generated and spread; and (2) understanding how to distinguish among consensus views of the scientific community, supported scientific information that may not represent the consensus view, and unresolved or evolving scientific information.

This study focuses on misinformation about emerging infectious diseases (e.g., influenza strains and Ebola virus); targeted disinformation about biological events and materials; inaccurate claims about the purpose of pathogen research and facilities; and poor-quality scientific information, such as studies with little statistical power, methods that do not support the conclusions drawn, or poor reporting of results. The study is not specifically focused on the COVID-19 pandemic, nor was its goal to address any particular claim, including claims about the origins of SARS-CoV-2. The study also does not focus on vaccine hesitancy or resistance, primarily because of the significant complexity of the reasons underlying these views. However, the outcomes of the study may be broadly helpful to scientists seeking to counter misinformation about vaccines.

The primary audience for the study and its outputs, specifically the engagement strategy report and how-to guide, is scientists and scientific institutions in Southeast Asia. Because mis- and disinformation are global problems, scientists from other regions also may benefit from the study outcomes. For this study, the term “scientist” includes laboratory and field life scientists; clinicians (human and veterinary); public health scientists; social scientists; and scientists from a variety of other natural, physical, and computer sciences, working in a range of organizations (academia, industry, nongovernmental organizations, government laboratories, and community or unconventional laboratories). Potential broader audiences for corrective messages include policymakers, journalists, and lay and religious leaders. Although the primary focus of the study is to develop a trusted network of scientists rather than to refute claims in broader public discourse or through designed informational or behavioral change campaigns, the outcomes offer useful knowledge to regional scientists who may choose to engage policymakers and other non-scientific stakeholders in refuting misinformation.

The regional focus of the study, which is specified in the Statement of Task, allowed the committee to account for structural and cultural considerations and challenges in the region and to develop actionable recommendations based on existing regional resources and scientific networks. Although the report and recommendations are framed through the lens of Southeast Asia, the scholarship on network design, misinformation, and science communication may inform efforts by scientists in other parts of the world to counter inaccurate and misleading information, given the international nature of mis- and disinformation. In addition, international networks may offer certain advantages for engaging scientists with different expertise and for accessing resources from international organizations. However, opportunities for involving scientists from Southeast Asia and for building understanding and trust among network members may be greater at the regional level, where cultures and societal structures may be more similar. Therefore, this strategy report and the accompanying how-to guide are tailored to provide regionally appropriate, actionable advice rather than high-level options that are not specific to any region or country in the world.

To address the Statement of Task, the committee produced the following consensus products: (1) this report, which describes the committee’s recommended strategy for developing a trusted network of qualified scientists who address inaccurate and misleading information that might lead to mis- or disinformation; (2) a how-to guide for scientists to determine whether and how to address a claim and, subsequently, how to correct the scientific information fueling the claim; and (3) a relational mapping of selected online collaboration platforms. For a number of reasons, the strategy report and guide do not provide recommendations that call for limitless capacity building, workforce training, and funding. Such recommendations are less actionable, do not empower partners, do not help scientists navigate potentially challenging policy and practice, and put the focus on long-term funding rather than on providing the tools and knowledge needed to implement the engagement strategy and guide. Instead, the strategy report and guide highlight actions that could have high impact, focus on local solutions, and build on local resources so that scientists in Southeast Asia can implement them feasibly. Given the complexity of mis- and disinformation, many stakeholders and efforts are necessary to prevent and otherwise counter these claims. This study involves one set of stakeholders, scientists, in helping to address mis- and disinformation fueled by inaccurate and misleading information. Furthermore, the landscape and risks associated with mis- and disinformation, though not new, are evolving rapidly because of the volume of information available, the availability of new online platforms (e.g., social media) and tools (e.g., artificial intelligence [AI]), and the changing pandemic and broader societal context. Therefore, critically evaluating the effectiveness of every available (often new) resource for addressing mis- and disinformation is not feasible. The outcomes of this study are intended to present current scholarship and practical knowledge about how to engage scientists in working collaboratively to address inaccurate and misleading information, and to provide broader audiences (e.g., policymakers, journalists, lay and religious leaders, and other members of the public) with robust, evidence-supported scientific information that can effectively counter mis- and disinformation.

This report begins with a detailed description of the compounding threats posed by inaccurate and misleading information that leads to or perpetuates mis- or disinformation, and of their consequences for public health and national security. This discussion is followed by a description of cases in which life, social, and/or computer scientists have helped to address these problems and challenges. The strategy for a trusted network of scientists from Southeast Asia is then described. The strategy builds on scholarship about scientific networks and network influence, countering misinformation, and understanding uncertainty, which is detailed in the last several sections of the report. Drawing on the scholarship presented in this report, the how-to guide provides clear instructions for scientists to determine whether and how to address particular claims.

COMPOUNDING THREATS OF FALSE INFORMATION AND INFECTIOUS DISEASES AND OTHER BIOLOGICAL THREATS

Misinformation is defined as the unintentional spread of false or misleading information that is shared by mistake or under the presumption of truth (Sedova 2021). Disinformation is defined as false, misleading, distorted, or isolated factual information that is spread deliberately with the intention to cause harm or damage.


The lines between accidental and deliberate spread of inaccurate information may be blurry. For example, false claims that were introduced deliberately may be spread further by actors who believe them to be true and do not intend to spread false information. Similarly, actors who intend to cause harm may deliberately spread misinformation, including inaccurate scientific information that fits their purpose. Further distinguishing among misinformation, disinformation, and other related concepts, such as conspiratorial thinking or a state of being uninformed (Scheufele and Krause 2019), is beyond the scope of this report. Furthermore, the actors and approaches involved in addressing targeted disinformation often differ from those involved in correcting inaccurate and misleading information that contributes to misinformation. Scholarship on countering targeted disinformation is extremely limited, but social, computer, political, and natural scientists increasingly are studying the creation, spread, and correction of misinformation. Therefore, this report and the associated guide draw on the available scholarship on misinformation to inform the recommended approach for countering inaccurate and misleading information, regardless of the intention behind its spread.

Consequences of the spread of inaccurate, misleading, or even hyped scientific information include loss of trust in the public health system and/or ineffective public health responses during epidemics, international conflict about the source of and responsibility for epidemics, and the targeting of individual scientists and research institutions associated with particular claims. This section provides examples of inaccurate and misleading claims about biological threats.

Mis- and Disinformation Involving Infectious Diseases

During the height of the Cold War, the Soviet Union deliberately spread false claims that the United States was conducting biological activities with non-peaceful intent, seeking to portray the United States falsely as a violator of the Biological and Toxin Weapons Convention, which bans the development, production, stockpiling, acquisition, and retention of microbial or other biological agents or toxins that have no prophylactic or other peaceful purpose (Gamberini and Moodie 2020). Such disinformation did not disappear at the end of the Cold War. During the past 15 years, Russia has continued to spread disinformation about U.S. biological investments in Central Asia and the South Caucasus, specifically about the activities of the Richard Lugar Center for Public Health in Tbilisi, Georgia, which Russia claims is a secret U.S.-run biological weapons facility that could be responsible for the release of viruses (Fidler 2019; Gamberini and Moodie 2020; Isachenkov 2018; Lentzos 2018). During the COVID-19 pandemic, as in previous epidemics, Russia and China have spread disinformation to discredit the United States and several European countries, disinformation that already has adversely affected public health systems and governments during the pandemic (Dubow et al. 2021). These claims are spread deliberately, are politically motivated, and have real consequences for international diplomacy and/or responses to public health emergencies.

Inaccurate and misleading claims associated with biological events also can undermine response activities in affected areas and discredit the current international leaders in biosecurity. For example, during the 2014–2016 West Africa Ebola Virus Disease (EVD) outbreak, distrust of health care and international aid workers among affected West African communities adversely altered disease dynamics and response activities. This distrust and skepticism presented significant challenges to infection control, particularly in identifying, isolating, and treating exposed or newly infected individuals.

Although this study did not focus on vaccine hesitancy, the ongoing COVID-19 pandemic (2019–present) has revealed significant vulnerabilities arising from misinformation about the safety and effectiveness of vaccines and natural products in Southeast Asia. In Thailand, public health officials initially provided incorrect information about the two vaccines the country had acquired (Sinovac-CoronaVac and the AstraZeneca COVID-19 vaccine), stating efficacies significantly higher than those supported by experimental data (Chulalongkorn University 2021; KIN – Rehabilitation & Homecare n.d.; Mallapaty 2021). Once the errors were discovered, the Ministry of Public Health corrected its materials and communications, but the inaccurate information remains accessible online. In Indonesia, non-scientists have made numerous claims about the efficacy of natural products in protecting against SARS-CoV-2 infection and about the sources of the pandemic (Fitrianingrum 2021; Rahmawati et al. 2021). No scientific evidence supports these false claims (Adelayanti 2020), and other claims have been corrected (Indonesia COVID-19 Task Force n.d.; Majelis Ulama Indonesia 2021). In Malaysia and Indonesia, false claims that vaccines are not halal (Ministry of Health for Malaysia 2021), specifically that they are made from aborted fetal cells or contain cells from pigs (Kassim 2020; Ruzki and Ismail 2021), have adversely affected vaccine acceptance. In addition, the translation of information into local languages has contributed to the spread of inaccurate information. In other parts of the world, claims spread about SARS-CoV-2 vaccines include that they are (1) a means of covertly implanting microchips, (2) a cause of sterility in women, and (3) a scientific hoax (Goodman and Carmichael 2020; Henley and McIntyre 2020; Imhoff and Lamberty 2020; Mayo Clinic Health System 2021).


Another significant focus of disinformation during the COVID-19 pandemic is the origin of SARS-CoV-2, which has yet to be determined. Numerous articles have attributed the introduction of SARS-CoV-2 into humans to its deliberate release as a biological weapon by China, the United States, Israel, or the United Kingdom (Gertz 2020; MEMRI 2020; Pamuk and Brunnstrom 2020; Rogin 2020; Stevenson 2020). These claims can adversely affect diplomatic efforts among countries, expose researchers to various personal and security harms (e.g., death threats), and/or limit efforts to enhance the safety and security of field- and laboratory-based research on emerging infectious diseases.

The volume and variability of scientific information communicated have led the World Health Organization (WHO) to coin the term “infodemics,” which it defines as “a tsunami of information—some accurate, some not—that spreads alongside an epidemic” (WHO 2020). Little to no evidence suggests that the problem of infodemics has worsened over time (Simon and Camargo 2021). Similarly, limited evidence suggests that access to more accurate information leads to more informed choices by citizens and policymakers. For example, a recent U.S. National Academies report stated that science alone rarely changes attitudes and behaviors, regardless of whether audiences understand the correct information or indicate that new information would change their minds (NASEM 2020). Nevertheless, WHO has maintained that the COVID-19 pandemic poses unique challenges because of the volume and variable quality and accuracy of available scientific information (WHO 2020, n.d.). According to WHO, these challenges are exacerbated in emergencies, when little information is available, public health and/or security questions remain unanswered, and response decisions must be made quickly.

Scientific Information Overload: A New Threat Landscape

Digital communication technologies, in particular the internet and social media, have profoundly changed the way scientific information is produced, consumed, and disseminated, and have facilitated access to and the spread of mis- and disinformation (Okeleke and Robinson 2021). In the 1990s, scientists were among the earliest adopters of internet technologies, primarily to communicate with their peers. Today, scientists can choose to disseminate their work to many different audiences and at many different stages of the scientific process. In addition, scientists now can share data, crowdsource peer review, conduct analyses, seek funding (e.g., through crowdfunding), and establish and maintain collaborations online.

These developments have been complicated by changing norms within the scientific community related to promoting one’s own work and sharing data and results before peer review. A scientist’s choice of whether to use online channels may depend on the stage of the research and the intended audience of communications about it. Before writing a new scientific article, scientists can use email, listservs, and various online platforms to exchange comments, ideas, and data with colleagues. After completing a manuscript but before peer review, they can use preprint servers to share early drafts with scientists outside their direct collaboration circles. The two primary preprint servers for biological and health research, bioRxiv and medRxiv, both add disclaimers indicating whether individual papers have been peer reviewed and published. Both servers have internal processes for screening submissions for plagiarism, completeness, non-scientific content, and biosecurity risks. This screening includes trying to identify papers that could cause harm, such as those contradicting established scientific knowledge (e.g., claims such as “smoking does not cause cancer”). During the past few years, submissions to and downloads from bioRxiv have increased significantly, and in 2017 only two-thirds of the papers submitted to bioRxiv were published in peer-reviewed journals (Abdill and Blekhman 2019). As demonstrated during the COVID-19 pandemic, the large number of papers available to scientists and non-scientists alike, many of which have not been peer reviewed, provides ample opportunities for inaccurate or misleading information to be identified and used or shared by interested individuals. After publication, blogs and social media can be used to advertise a newly published manuscript to the broader scientific community or even the public (Yeo et al. 2017).

With so many channels and opportunities for dissemination, risks may arise from an overabundance of information that cannot be assessed effectively for quality and accuracy before broad dissemination. Information overload creates new threats to the integrity of science and the scientific workflow. Sometimes, errors may arise from misuse of a channel. For example, during the COVID-19 pandemic, individuals, including scientists, discussed the virus, illnesses, and other health- and science-related issues on social media platforms, which included sharing unconfirmed and misleading information (Cinelli et al. 2020). As another example, inaccurate information posted on preprint servers has been amplified by the press or by social media influencers before it could be vetted through the regular peer-review process. Other times, errors may occur even as part of the “regular” process of scientific dissemination, for example, when correct, peer-reviewed information is distorted or exaggerated in institutional press releases (Li et al. 2017). As social media increasingly becomes a “hype machine,” developing strategies to prevent the dissemination of biased and distorted claims will become crucial (West and Bergstrom 2021).

Types of Scientific Inaccuracies and Misinformation

Producing accurate scientific information is more difficult in crisis situations than in non-crisis situations. In crises, often only a limited amount of information is available, data are sparse, and the situation is evolving rapidly. At the same time, crises demand urgent policy actions, which rely on emerging science, often conducted under immense public scrutiny. Still, scientists have been able to lend their expertise during crises in positive ways. However, the COVID-19 pandemic has presented a cautionary tale about generating and sharing sound, evidence-supported information, particularly when the outbreak-causing virus is not well understood. The volume of information produced and communicated through peer-reviewed journals, non-peer-reviewed preprint servers, predatory journals, online platforms and websites, and social media creates significant challenges for scientists and other consumers of scientific information in evaluating the accuracy and quality of publicly shared scientific information. Articles that are poorly conducted, involve small sample sizes, and/or have other significant weaknesses have fueled misinterpretation and misinformation by the media or by other groups with particular agendas. For example, the results of a 2020 Danish study on the effectiveness of masks for protecting against SARS-CoV-2 infection were interpreted by the media as “questioning the efficacy of masks” and used by antimasking campaigns to promote their agendas (O’Grady 2021). Because evidence-informed decision-making involves acquiring and aggregating available information, inaccuracies resulting from weak study design, small sample sizes, and poor data analysis also risk producing ineffective and misinformed policy and public health actions.
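To show why a small or underpowered study can be misread as evidence that an intervention "does not work," the sketch below computes the approximate statistical power of a two-arm trial. The infection risks (2.0% without the intervention, 1.6% with it, i.e., a true 20% relative reduction) and the sample sizes are hypothetical. They are not the figures from the Danish mask study or any other study cited here. The calculation assumes the standard normal approximation for a two-sided two-proportion z-test at alpha = 0.05.

```python
import math


def normal_cdf(z: float) -> float:
    """Standard normal cumulative distribution function, via math.erf."""
    return 0.5 * (1.0 + math.erf(z / math.sqrt(2.0)))


def two_proportion_power(p_control: float, p_treated: float,
                         n_per_arm: int, z_crit: float = 1.96) -> float:
    """Approximate power of a two-sided two-proportion z-test (alpha = 0.05).

    Normal approximation with unpooled variance; the negligible probability
    of rejecting in the wrong direction is ignored.
    """
    se = math.sqrt(p_control * (1 - p_control) / n_per_arm
                   + p_treated * (1 - p_treated) / n_per_arm)
    z_effect = abs(p_control - p_treated) / se
    return normal_cdf(z_effect - z_crit)


# Hypothetical scenario: the intervention truly cuts infection risk by 20%.
for n in (3_000, 30_000):
    power = two_proportion_power(0.020, 0.016, n)
    print(f"{n} participants per arm: power ~ {power:.2f}")
```

Under these assumptions, a trial with 3,000 participants per arm has only about a 21% chance of detecting the real effect. A "no significant difference" result is therefore the most likely outcome even though the intervention works. With 30,000 per arm, power rises to roughly 96%.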

In addition, scientific communications that extensively promote a particular product or piece of information (referred to as hype), exaggerate the results and outcomes of a study (referred to as hyperbole), or distort the data or results can lead to and/or perpetuate misinformation, which could have significant downstream consequences for democratic decision-making and public health, both of which are critical during infectious disease epidemics (West and Bergstrom 2021). Other practices, such as not publishing negative results (referred to as publication bias) and selectively referencing articles (referred to as citation bias; e.g., self-citation, citation of authoritative authors, and citation by journal impact factor), present a partial picture of the scientific results and could perpetuate inaccurate and misleading information (Joober et al. 2012; Urlings et al. 2021). Scientists are well positioned to address these challenges and to promote the accuracy and robustness of scientific data and results (West and Bergstrom 2021).

Malign actors also exert influence in crises, when ambiguity exists and information changes rapidly. First impressions can be difficult to counter, so anticipating and preemptively addressing problematic narratives can help fill information gaps with correct information before misleading claims have an opportunity to spread. Partnerships with social and mainstream media are critical to reducing the risk that inaccurate or misleading information is amplified. If problematic information is not identified before it enters mainstream venues, however, other means are necessary to counter those claims within those venues.

SCIENTISTS AS PART OF THE SOLUTION

Scientists can play several roles in addressing inaccurate and misleading claims, including (1) countering misinformation that has long-term consequences for public health and for research progress and translation, among scientists and other key stakeholders; (2) building trust and strengthening communication between scientific and non-scientific communities (e.g., policymakers and journalists); (3) developing defensible, high-quality, accurate scientific information that merits high confidence even where consensus does not exist; (4) engaging with members of the broader public to convey how scientific progress is made and how to evaluate scientific information critically; (5) further developing the emerging field devoted to studying the development, monitoring, and countering of misinformation, which already includes social, political, computer, and life scientists; and (6) acting as objective, independent messengers of evidence-supported scientific information.

Scientific inquiry has been crucial for assessing emerging outbreaks and epidemics and for developing effective strategies to counter emerging, reemerging, and persistent infectious diseases and other biological threats. Life and public health scientists from various disciplines, including virology, computational biology, and epidemiology, often play numerous roles in preventing, detecting, and responding to biological threats. These roles include detecting and characterizing new infectious diseases and/or strains of existing pathogens, analyzing rates of illness and death, identifying the sources of new infections and effective control measures, and developing new vaccines and medicines, among many other activities. For example, the 2011 Escherichia coli O104:H4 outbreak initially was characterized using bioinformatics analyses of bacterial genomic sequences following an unusual infection pattern: 24 infections in France and more than 3,816 cases in Germany (Guy et al. 2012; IOM 2012). The sequence analysis enabled food safety experts to use epidemiological methods to trace the source, which was contaminated sprout seeds (Grad et al. 2012), but only after the outbreak had been inaccurately linked to lettuce, cucumbers, and tomatoes (Foley et al. 2013). These analyses, which were conducted relatively quickly after the initial infections were documented, enabled public health officials to prevent further spread of the bacteria and addressed concerns, prompted by the unusual infection pattern, that the outbreak was deliberate. Scientists from other disciplines, including various social sciences and computer science, also have enabled effective control of biological incidents. The 2014–2016 EVD outbreak in West Africa illustrates the important role of social and computer scientists. Cultural anthropologists shed light on why white protective suits and the removal of ill or deceased individuals caused distrust, leading health agencies to change the color of the suits (because white signifies death in the local culture) and to develop safe practices for traditional burials (Nyhan 2014). These changes allowed health workers to gain the trust they needed among the local population to control the spread of EVD. Similarly, advances in big data analysis and modeling of infections informed international awareness of and response to the outbreak (Nieddu et al. 2017; Wein 2014).

During the COVID-19 pandemic, computer scientists also have brought to bear their knowledge of information transfer via social media and other online platforms, as well as advanced data analytics such as AI for quickly identifying effective existing therapies and for near-real-time surveillance during outbreaks (Basu 2021; Begley 2021; Bridgman et al. 2021; Budd et al. 2020; Himelein-Wachowiak et al. 2021; Kostkova et al. 2021; Mohsin et al. 2020; Zhou et al. 2020).

Conclusion 1: Life, social, and computer scientists play critical roles in ensuring their science and the science produced and shared by others within their communities is accurate and supported by well-designed and implemented studies. They can work collaboratively to leverage appropriate expertise to produce and/or disseminate accurate scientific information, peer review and correct inaccurate scientific information, and ensure that scientific information is communicated in an unbiased, objective, and culturally informed manner.

Conclusion 2: Scientists are one of several stakeholders in addressing misinformation. Other stakeholders include policymakers, journalists, and members of the public. Engaging these other stakeholders positively is critical to building trust, communicating corrective information clearly and effectively, and addressing misinformation in a timely manner if and when necessary.

Several online networks of scientists seeking to share information and promote interaction have emerged during the past 10 years, and the number of networks has exploded since the emergence of SARS-CoV-2. During the past 5 months alone, platforms such as nextstrain.org and virological.org have enabled scientists to use publicly deposited viral genomic sequences to examine the similarity, divergence, and evolutionary rates of strains from infected individuals and to estimate dates of viral emergence in the human population (Nextstrain n.d.; Virological n.d.). Other platforms, such as the COVID-19 Global Science Portal, allow scientists to share information about the pandemic response, inform members about scientific priorities and investments, and enable discourse about emerging scientific issues associated with the pandemic (ISC n.d.b). Still other platforms, such as kaggle.com, provide opportunities for data scientists to help answer scientific questions about the pandemic (Allen Institute for AI 2021). In addition, bioRxiv and medRxiv allow readers to comment on deposited articles and to submit articles themselves, creating opportunities for third-party review. Although these reviews are moderated, their accuracy may not be verifiable. Some preprint servers are integrated with publishers, which eases the transition of articles from non-peer-reviewed preprints to peer-reviewed publications. Publishers of peer-reviewed journals also have provided free access to published research about pathogens causing ongoing outbreaks, epidemics, or pandemics, including to WHO and other public health entities (STM 2021). Some publishers also provide training on research integrity and countering misinformation to their scientist-authors (Elsevier n.d.; Nature Masterclasses n.d.).
Finally, virtual platforms such as ResearchGate, Academia.edu, and Mendeley provide opportunities for scientists to share their articles with others. Together, online platforms, pre-publication databases, and individual groups of scientists enable broader participation in addressing global scientific questions associated with ongoing events. Although few individual groups of scientists and online platforms explicitly address potential biosecurity risks resulting from disinformation campaigns, these platforms suggest that a virtual community of scientists conducting community-based analyses and peer review to produce accurate, evidence-supported science is possible.

Finding 1: During the past few years, numerous virtual platforms, both within and independent of social media, have been created to foster communication and collaboration among scientists, share data, and crowdsource analysis. These platforms have created opportunities for scientists throughout the world to interact with one another.

As in many other fields, artificial intelligence (AI) has been considered a helpful tool, including by a few policymakers whom the committee consulted, for identifying misinformation claims so that various individuals or entities can address them. For example, organizations such as Meta AI (formerly Facebook AI) and marketplaces such as Amazon Mechanical Turk use, or can use, AI to detect misinformation (Horowitz 2021; Meta AI 2020). However, these tools have had difficulty identifying misinformation related to the COVID-19 pandemic (Siriwardana n.d.). Therefore, understanding the capabilities and limitations of AI, especially in helping to identify false claims, is critical if national and/or nongovernmental entities plan to use AI as part of their fact-checking efforts and if publishers and scientists plan to use AI to identify relevant articles for review and analysis.

AI refers to the ability of computers to perform tasks that normally require human intelligence. It has been studied for decades, historically with a focus on software that codifies the knowledge of human experts in explicitly programmed rules (i.e., “if given a specific input, then provide a specific output”) (Sedova 2021). Recent interest in AI focuses mostly on machine learning, a type of AI driven by data abundance, innovation in algorithms, and computing power. Machine learning systems learn from data rather than from codified human expertise: a model is developed from a training dataset and a defined algorithm architecture, which can be designed to compensate for bias in the data. Four main types of machine learning exist: (1) supervised learning, which uses example data that have been curated and labeled by humans; (2) unsupervised learning, which uses unlabeled data to identify patterns, cluster similar groups of data, and detect new behaviors from previously unidentified patterns; (3) semi-supervised learning, which uses both labeled and unlabeled data; and (4) reinforcement learning, in which an autonomous AI agent gathers its own data as it performs goal-oriented tasks, seeking to maximize rewards in a learning environment and improving through trial and error in simulated or real-world situations.
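The distinction between rule-based AI and supervised machine learning can be made concrete with a short sketch. The Python example below is a minimal illustration under stated assumptions, not a description of any platform's actual system: it contrasts a hand-coded rule with a toy supervised classifier (a multinomial naive Bayes word-count model, chosen here only as one simple supervised technique). The example claims, their labels, and the names `TRAINING_DATA`, `rule_based_flag`, `train`, and `predict` are all hypothetical; a real misinformation classifier would need a far larger, expert-curated training set.

```python
import math
from collections import Counter

# Hypothetical labeled examples: True = labeled inaccurate, False = labeled accurate.
# These four claims are illustrative only; a real system needs a large, curated dataset.
TRAINING_DATA = [
    ("garlic cures the virus", True),
    ("hot water kills the virus", True),
    ("masks reduce transmission of the virus", False),
    ("vaccines reduce severe disease", False),
]


def rule_based_flag(claim: str) -> bool:
    """Rule-based AI: a human writes the rule explicitly
    (if given a specific input, then provide a specific output)."""
    text = claim.lower()
    return "cure" in text and "garlic" in text


def train(labeled_claims):
    """Supervised learning: count words under each human-assigned label."""
    word_counts = {True: Counter(), False: Counter()}
    doc_counts = Counter()
    for claim, label in labeled_claims:
        word_counts[label].update(claim.lower().split())
        doc_counts[label] += 1
    vocabulary = set(word_counts[True]) | set(word_counts[False])
    return word_counts, doc_counts, vocabulary


def predict(model, claim: str) -> bool:
    """Return True if the claim scores closer to the 'inaccurate' examples
    under a multinomial naive Bayes score with add-one smoothing."""
    word_counts, doc_counts, vocabulary = model
    total_docs = sum(doc_counts.values())
    scores = {}
    for label in (True, False):
        total_words = sum(word_counts[label].values())
        # Log prior: the fraction of training claims carrying this label.
        score = math.log(doc_counts[label] / total_docs)
        for word in claim.lower().split():
            # Add-one (Laplace) smoothing keeps unseen words from zeroing the score.
            score += math.log(
                (word_counts[label][word] + 1) / (total_words + len(vocabulary))
            )
        scores[label] = score
    return scores[True] > scores[False]


model = train(TRAINING_DATA)
print(rule_based_flag("garlic kills the virus"))  # False: the rephrased claim escapes the rule
print(predict(model, "garlic kills the virus"))   # True: learned word statistics still flag it
print(predict(model, "vaccines reduce disease"))  # False
```

In this toy run, the hand-coded rule misses a rephrased claim that the learned model flags, which illustrates how learning from labeled data can generalize beyond explicitly programmed rules. Equally, the learned model can only reflect patterns present in its labeled examples, which is one plausible reason such tools can struggle with novel claims.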

Conclusion 3: Technical solutions alone are not sufficient for identifying and addressing inaccurate and misleading claims. Scientists from a diversity of disciplines are necessary to address inaccurate and misleading claims about biological threats. What is needed is the establishment of a structure for human interaction, favorable policies for data sharing and analysis, and a process for collaboration to correct inaccurate and misleading information before it can fuel misinformation.

STRATEGY OF THE TRUSTED NETWORK OF SCIENTISTS

A strategy for engaging scientists in addressing inaccurate and misleading information builds on the role that scientists play in curbing the spread of misinformation about biological threats, benefits from leveraging local and international networks in a dynamic and distributed network, and enables scientists to interact on an ongoing basis to improve scientific accuracy and excellence. A distributed network is a network of networks: it draws on existing discipline- or sector-specific networks of scientists and enables active involvement of scientists from many disciplines and institutions in correcting inaccurate and misleading information. The underlying scholarship, the regional considerations for the recommended strategy, and considerations for scientists involved in countering misinformation are described after the recommended strategy.

Recommendation 1: Leaders of established scientific networks in Southeast Asia should jointly create a distributed network of individuals and organizations (i.e., a network of networks) that draws on the diversity of scientific disciplines and sectors needed to correct inaccurate and misleading scientific information about infectious diseases and other biological threats. The network should be regional and have a leadership structure that includes scientists from countries in the regional network. The network itself should be virtual only, leveraging recently developed online collaboration tools, but should be based in a host nation within Southeast Asia to support key operations (e.g., website, email addresses, and resource repositories) and to gain credibility with regional and national authorities.

The following sub-recommendations define the scope and boundaries of the network.

Recommendation 1.1: The network should establish (1) a governing board, composed of knowledgeable scientists who are responsible for making strategic decisions for the network; and (2) an executive team, composed of scientists who have a proven track record of developing and sustaining scientific networks. The executive team should manage, motivate, and expand the membership; foster diversity among the membership (i.e., gender, age, experience level, country, ethnicity, scientific discipline, type of institution); ensure continuous services are provided to members; and activate the network to address inaccurate and misleading information about biological threats when such claims arise. The executive team should work with individual members and external stakeholders to identify claims and determine which claims to address before activating relevant expert members of the network.

Recommendation 1.2: The network should include scientists from all relevant disciplines (specifically, a variety of life and other natural sciences; computer and information science; social sciences, including science communication experts; and political science, including researchers of misinformation) to ensure that the most relevant expertise is leveraged in addressing inaccurate and misleading information and that corrections can be communicated effectively to the appropriate stakeholders, whether within or outside the network. Furthermore, the network should include scientists and country-level networks from academia, industry, and other nongovernmental and government organizations.

Recommendation 1.3: The network should be hosted or sponsored by an organization with authority in Southeast Asia (e.g., the Association of Southeast Asian Nations) to enhance its credibility among scientists, policymakers, and other stakeholders, and to support its work by connecting it with other networks of experts, sharing information, providing meeting facilities, and linking the network’s efforts with regional public health and scientific priorities.

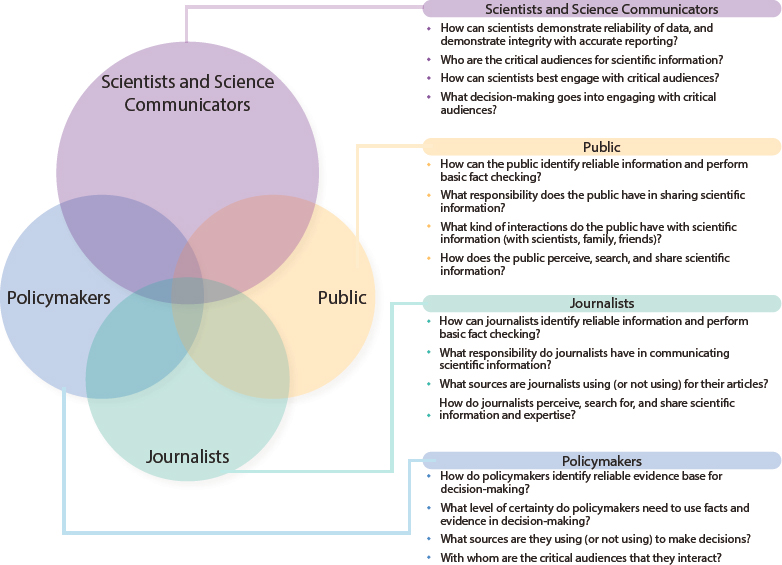

Recommendation 1.4: The primary audience and membership of the network should be scientists. However, the network governing body should develop trusted relationships with policymakers, journalists, and lay and religious leaders to enable broader communication and interaction with these stakeholders.

Recommendation 1.5: The network should engage (1) international scientific networks to improve access to needed expertise; and (2) non-technical organizations, such as established, credible fact-checking organizations, to assist with identification of inaccurate and misleading information, determination of whether to address the inaccurate information, and communication of corrective information.

Recommendation 1.6: The network should focus initially on addressing inaccurate and misleading information relating to infectious disease and other biological threats. However, as the network grows and gains credibility and members, it may expand to countering inaccurate and misleading information in other fields.

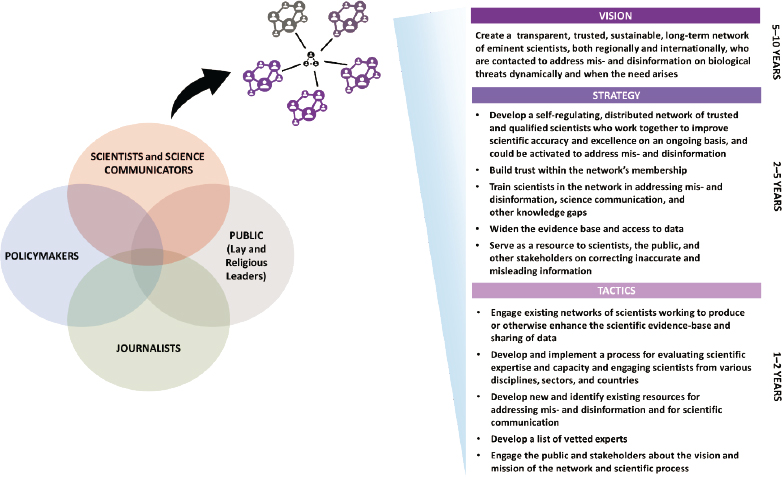

Developing a trusted, regional network of qualified scientists who can work together to counter misinformation based on scientific inaccuracies requires a high-level strategy and structure for the network. A regional network of scientists offers advantages over national initiatives, including access to experts from a diversity of disciplines, reduced political or social influence on the information that is corrected or discussed, and access to resources such as training programs and guides. A network of life, social (including experts in science communication), and computer scientists may collaborate and communicate at the technical level without raising concerns or barriers at the political level, especially if network leaders and members understand and comply with national policies (see Recommendation 7). Figure 1 illustrates the committee’s vision of the overall strategy of the network. It highlights the need to set short-, mid-, and long-term goals to achieve full development of the distributed network and to iterate the strategy and tactics over time to achieve the vision. Defining the vision of the network at the outset ensures that the strategy and tactics work toward realizing that vision and embeds considerations of long-term sustainability from the start. This strategy will need to (1) empower talent; (2) motivate scientists; (3) engage international, regional, and national efforts; and (4) leverage existing initiatives in responsible science.

Empowering Local and Regional Talent

A critical element of the trusted network is a diversity of scientific experts who work collaboratively to address inaccurate and misleading information. Many countries in Southeast Asia have invested in developing scientific talent and experts in various biological sciences disciplines. However, current measures of scientific output (e.g., number of graduates in specific disciplines and number of papers published) do not adequately characterize local scientific capacity and may make it difficult to generate or recruit future talent in various scientific fields. Many countries in Southeast Asia have science, technology, and innovation talent development roadmaps, but many of these roadmaps do not include similar initiatives to support the development of social scientists, because the social sciences are perceived as unconnected to economic gain from innovation (Scott-Kemmis et al. 2021). The pandemic has revealed the lack of a robust community of social science experts in Southeast Asia capable of supporting the laboratory- or field-based natural science community in addressing a variety of societal problems, including inaccurate and misleading claims.

In addition, equipping life and computer scientists from Southeast Asia with science communication and stakeholder engagement skills can empower them to address inaccurate and misleading information. Providing skills to foster inclusive leadership and promote trust and sustainability within scientific networks can also enable cross-disciplinary, cross-sector, regional collaboration to address these claims. Identifying talent gaps in specific scientific disciplines in Southeast Asia is critical to ensuring that capacity-building efforts are locally relevant and sufficient. Furthermore, an inclusive network of scientists can enable all groups to work toward the common goal of addressing misinformation about emerging infectious diseases and biological threats by leveraging one another’s expertise and diverse perspectives.

Finding 2: Training scientists on science communication, public engagement, and science policy is critical to countering misinformation.

Finding 3: In Southeast Asia, research and education in these and other social science disciplines are limited, yet they are necessary for ensuring that cross-disciplinary scientific teams have all relevant skills and expertise available when addressing inaccurate information that could lead to or perpetuate misinformation.

Recommendation 2: National competent authorities in Southeast Asian countries, whether Ministries of Science or other ministries, should develop undergraduate, graduate, or postgraduate training programs in various social science disciplines, including science communication and public engagement. In addition, national competent authorities should develop informal training programs in science communication and public engagement for life, computer, and other natural scientists and engineers to enhance their communication skills and their ability to build public trust. Countries that already have established education or training programs should share their curricula, best practices, and lessons learned.

Motivating Service for the Purpose of the Network

Another critical element of the trusted network is motivating local scientists to contribute actively to promoting scientific excellence and integrity, building a defensible and accurate scientific knowledge base to correct inaccurate and misleading information, and identifying and addressing misinformation. Examples of ways to motivate scientists to contribute to the network include (1) appealing to concepts of scientific responsibility, associating the practice of countering inaccurate information with scientific integrity and excellence; (2) highlighting the potential consequences of misinformation, such as the erosion of public trust in science and scientists and negative effects on the research and public health ecosystem; (3) recognizing scientists for their contributions to countering inaccuracies; and (4) providing financial support for time spent addressing inaccurate information.

Intrinsic motivation-building strategies include promoting inclusivity, an understanding of the local culture, trust, and openness. Teams involving Southeast Asian scientists need to be created in a manner that allows for the inclusion and productive contribution of early-career scientists from a variety of disciplines and sectors. Promoting trust through a culture of transparency and openness is critical to building a trusted network, both for internal interactions among members and for external interactions with various stakeholders (e.g., scientists, members of the public, policymakers, and journalists). Transparency is a critical factor in effectively addressing the growing mistrust, skepticism, and other challenges associated with inaccurate and misleading information that fuels misinformation, because it supports greater accountability, quality, and safety and promotes ethical attitudes and practices, all of which contribute to scientific excellence. The value of transparency in fostering trust depends heavily on external and internal communication processes, which are mediated by several relational and contextual factors.

Motivating the scientific community to engage in efforts such as addressing misinformation involves appeals to social responsibility and integrity. For example, the Young Scientists Network-Academy of Sciences Malaysia and the Association of Southeast Asian Nations-Young Scientists Network (ASEAN-YSN) promote Responsible Conduct of Research to raise awareness among scientists of the importance of upholding the principles of research integrity and addressing the contemporary needs of various external stakeholders in Southeast Asia. These existing networks promote multi-sector engagement among academia, industry, government, and broader society and embrace responsible team science among interdisciplinary teams of scientists and others within the scientific ecosystem. Through these efforts, researchers in the region are encouraged to reflect on whether their own actions align with ethical principles associated with correcting scientific errors and addressing misinformation.

Finally, when misinformation involves scientific issues, scientists may be concerned about the presumed harm that these claims cause to the reputation of other scientists and to the public, which in turn can motivate scientists to support education and legislation aimed at correcting misinformation. These concerns can become key enablers in encouraging domain experts, such as life, computer, and social scientists, to work together to safeguard the interests of the scientific community and broader society.

As scientists become motivated to contribute to the network, enhance scientific excellence, and address misinformation, the network can promote their continued engagement and involvement. Continued engagement can be measured by the extent to which scientists receive ongoing professional development opportunities to improve their skills in addressing inaccurate and misleading claims, and by the enhancement of existing and innovative capacities for addressing inaccurate information at institutional and national levels.

Actively motivating members, offering evolving professional development opportunities, and regularly co-designing and implementing efforts to promote a shared vision and mission among network members are critical for ensuring the sustainability and relevance of the network. Members with diverse interests could be encouraged to pursue those interests as long as they are framed within the network’s overarching goals. Finally, by valuing inclusivity and representation, the network provides opportunities for scientists of various disciplines, genders, career stages, accessibility needs, and cultures to interact. Diversity strengthens scientific excellence, which plays a critical role in the network. Including diverse views, expertise, and experiences enables the network to incorporate new and innovative approaches; promote scientific responsibility; enhance the accuracy and accessibility of science for various audiences; and be nimble, flexible, responsive, and proactive. Box 2 highlights the values of the trusted network.

Engaging International, Regional, and National Efforts

A third critical element of the trusted network is creating an entity that is valued and trusted by its members and various stakeholders (or audiences) and that works in coordination with existing networks, many of which have different but overlapping missions, members, and audiences. Several international, regional, and country-level networks exist to facilitate scientific interaction on general and discipline-specific topics. Examples of international networks of scientists include the Global Young Academy, The InterAcademy Partnership (IAP), and The World Academy of Sciences. Examples of regional networks are ASEAN-YSN and the Association of Academies and Societies of Sciences in Asia. Regional networks such as these can be expedient at implementing new initiatives and programs, often have a greater cultural understanding of issues and regional context, and may receive financial and political support from regional (e.g., ASEAN) or national authorities. Examples of country-level societies are national academies and national young academies, which may be connected to regional and international networks. Networks of scientists also may be organized on a discipline- or field-specific basis, such as the American Society for Microbiology, the International Society for Infectious Diseases’ ProMED, the American Biological Safety Association International, the International Life Sciences Institute, the International Union of Biological Sciences, the International Union of Biochemistry and Molecular Biology, the International Network for Government Science Advice, and the Southeast Asia One Health University Network and national one health university networks in Southeast Asian countries.

Trust is built on scientific excellence, clear and concise communication, and active and respectful dialogue with various stakeholders. Although several international organizations (e.g., WHO, the United Nations Interregional Crime and Justice Research Institute) have recognized the need to address misinformation about biological threats, prioritizing regional and national efforts that involve scientists in addressing the inaccurate and misleading information that leads to or otherwise enhances misinformation would provide high-level support for the trusted network and other related activities. For example, if ASEAN prioritizes initiatives for addressing misinformation, that prioritization could provide high-level support for the trusted network, and members of the network could in turn provide accurate, authoritative, and defensible scientific information to debunk misinformation claims. In The State of Southeast Asia: 2021 Survey Report, published by the ASEAN Studies Centre at the Institute of Southeast Asian Studies-Yusof Ishak Institute, respondents (from academia, think tanks or research institutions, government entities, civil society organizations, nongovernmental organizations, business or finance institutions, and regional or international organizations) from ASEAN countries, in particular Singapore and Brunei, urged scientists and medical doctors to engage in public policy discussions and provide scientific advice to better address the COVID-19 pandemic (Seah et al. 2021). Similarly, partnerships between the trusted network and respected international organizations such as the International Science Council, IAP, and national academies (e.g., the U.S. National Academies) would further enhance the network’s ability to draw on scientific leaders in a variety of disciplines, including those not yet well represented in Southeast Asia.

Recommendation 3: The distributed network (see Recommendation 1) should leverage existing scientific networks in Southeast Asia, catalyzing collaboration among their members, especially those with needed expertise, to address specific claims. Regional scientists involved in developing this distributed network should develop open and trusted lines of communication and partnership with relevant Ministries to gain high-level support for the network and its outputs.

Leveraging Scientific Responsibility and Ethical Principles

Responsible science is a core part of life science research and development throughout the world and is grounded in international principles of scientific responsibility, which include integrity, respect, fairness, trustworthiness, transparency, recognition of benefits and possible harms, accountability, stewardship, and objectivity in the conduct and communication of science (IAP 2016; ISC n.d.a; NASEM 2017a). Several of these principles align with the 1979 Belmont Report, which created an ethical framework for research involving human subjects; this framework was subsequently applied more broadly to biomedical ethics and the social sciences (Beauchamp and Childress 2001; National Commission for the Protection of Human Subjects of Biomedical and Behavioral Research 1979). These core principles have been applied to many other types of research and scientific activities, including ethical considerations associated with research that raises security concerns (Selgelid 2016).

Building on this foundation, the U.S. National Academies and the Academy of Sciences Malaysia engaged scientists throughout Southeast Asia on responsible science in 2013 (Chau 2020; Clements et al. 2017). These activities linked the responsible conduct of research and other responsible science concepts to scientists’ role in addressing or otherwise minimizing the security risks of their research, specifically the potential for accidental or deliberately malicious exploitation of peaceful life sciences research (NAS 2017; NRC 2004; WHO 2010). Following this engagement, the Malaysian Educational Module on Responsible Conduct of Research was published in 2018, and ASEAN-YSN and the Southeast Asia regional office of the International Science Council launched a project in 2019 to train instructors in responsible science throughout the region (ASEAN-YSN n.d.; MOSP n.d.). Internationally, the European Union initiated an effort on responsible research and innovation as part of its Horizon 2020 program, and WHO is updating its 2010 report Responsible Life Sciences Research for Global Health Security: A Guidance Document (EC n.d.; WHO 2010).

Finding 4: International and regional efforts toward responsible science provide a strong foundation and framework for guiding the activities of scientists who are interested in correcting inaccurate scientific information that leads to or propagates misinformation in the public sphere.

Implementation Plan

Developing an implementation plan for the creation and maintenance of the distributed network is a critical next step in engaging scientists from a variety of disciplines and sectors to help counter misinformation based on inaccurate and misleading information. The recommended strategy, including key considerations for its successful implementation, lays the foundation for the development of this distributed network. The implementation plan would document clear tactics for building strategic partnerships to realize the distributed network and gain support among the scientific and policy communities in Southeast Asia; adding value for members on an ongoing basis (e.g., through training in new skills); developing and executing the process for adjudicating which claims the network needs to address and for enabling cross-disciplinary and cross-sector collaboration to address those claims; and identifying and implementing improvements to the distributed network. Furthermore, the implementation plan can specify the means and frequency of interaction among network members; the means of interaction with other networks and regional leaders; the process for developing common messages about corrected information that are accessible in multiple languages; and a unified platform through which members and broader stakeholders can communicate and access authoritative, evidence-informed, and defensible scientific information. Finally, the implementation plan can include key operational actions, including (1) a process for maintaining connectivity and interaction among members, stakeholders, and other networks; (2) an action plan for sustaining the network beyond initial funding (i.e., a fundraising or business plan that identifies potential funders, articulates the value proposition to potential funders, and documents measures of the network’s success); (3) a clear definition of membership types, levels, and costs, if relevant; (4) incentives for member and stakeholder engagement; and (5) consideration of the nation that hosts the network, even if the network is virtual only, and the associated policies for operating it.

Recommendation 4: The governing body and executive team of the distributed network (Recommendation 1.1) should establish an implementation plan for the creation of the distributed network prior to its formation. This plan should specify important aspects of the network’s operation, such as (1) sustainability of the network once established; (2) interaction with scientific and non-technical stakeholders; (3) ongoing benefits to members (e.g., training, mentorship and coaching opportunities, professional networking, production of publications); and (4) processes for identifying, adjudicating, and, if deemed necessary, addressing inaccurate information relating to biological threats. The plan should build on the key considerations of the strategy, the lessons learned about effective correction of inaccurate information that are described in this report (section on Interventions Against Inaccurate and Misleading Information), and the associated how-to guide for identifying and potentially addressing inaccurate information that leads to or perpetuates misinformation. Furthermore, the plan should define the role that scientists play in addressing inaccurate and misleading information and how that role relates to scientific responsibility. This role should be considered within the broader context of other societal and international actors who may be addressing mis- and disinformation.

Recommendation 5: The implementation plan should specify how the network will interact with stakeholders in government, media, and the broader public to establish itself as a “go-to” regional focal point for addressing inaccurate and misleading information with evidence-supported and clearly communicated scientific information. The implementation plan also should describe the relationships among the network’s leadership, members, external scientists, and other external stakeholders to provide a clear understanding of who makes inquiries about addressing inaccurate statements and how these statements will be evaluated and addressed.

Recommendation 6: The implementation plan should draw on the guide for addressing inaccurate and misleading statements that accompanies this strategy report. Network leaders and members should work with experts in the life sciences, science communication, team science, computer science, and research on the flow, spread, and correction of misinformation to train network members to use the guide effectively.

NETWORK STRUCTURES CONSIDERED IN THE STUDY

Different approaches to building a network to combat misinformation about biological threats were considered. One approach is a network whose members are individuals, associated formally or informally. Another approach is a consortium whose members are institutions, associated formally. This section describes both models, the practicalities of creating and maintaining them, and the capabilities and limitations of each, along with common considerations that apply to any scientific network model.

Selecting the appropriate model involves analyzing several key considerations, including sustainability, which is a significant concern for the longevity and durability of any network, and the ability to be dynamic. Continuous engagement of members in the network’s primary activities and an emphasis on the benefits of membership promote the network’s sustainability. Dynamic networks require more active participation of members and specialized domain expertise, especially for events that occur periodically. For example, although misinformation is a constant concern, misinformation about infectious disease research and epidemics may arise episodically, only when specific concerns or events emerge. Therefore, designing the network so that it can activate different members as needs change is important for addressing inaccurate information about emerging infectious diseases and other biological threats. Both models described here incorporate these considerations in network design.

Trust is a critical component of any type of network. Creating a stable and trusted network involves providing opportunities for affiliation and social exchange, development and mentoring, and support for strategic collective action. Collective learning and collaboration can enable a community to build moral authority. Through this collective learning and collaboration, known as collective intelligence, both networks of individuals and networks of institutions can foster innovation more effectively to solve highly complex challenges and marshal resources and knowledge quickly (Riedl et al. 2021; Suran et al. 2020).

Network of Individuals

The “network of individuals” model is similar to professional associations or Facebook groups that draw together individuals who identify with a particular community or cause. For example, most scientific societies have individual members, provide newsletters, awards, meetings, and publications, and appoint fellows. They offer an array of membership types. These include memberships for people working in specific fields or disciplines, for people at different experience levels or career stages (e.g., students), and for people of varied citizenship.

The benefit of a network of individuals is that it can be targeted to the people who are most invested in and most knowledgeable about the science. Scientists can use their domain expertise to identify and address misinformation, drawing on the problem-solving and critical thinking of their own fields. The network can be designed to balance different kinds of domain experts, including science communicators and various life scientists. It can also ensure diversity in membership (e.g., field, career stage, expertise, geographical and regional representation, and sector). Furthermore, such organizations offer opportunities to establish sub-groups that address particular issues, such as science policy. This sub-group structure could allow individual scientists from existing, reputable networks to address misinformation. This initial nucleus of trusted individuals may attract other members who can also lend their expertise to common goals. It may also enable the development and use of common rules or effective procedures for working together.

The network-of-individuals model also has several limitations. A significant one is the challenge of sustaining the network. If the network is activated only sporadically, members may not stay sufficiently engaged, and membership could suffer significant attrition and turnover during dormant periods. A newly established network will also need to build trust and credibility. Another limitation is that a structure would be needed to run the network. This includes a leadership structure, a way to identify who would be included as members, and a process for vetting those members. Additionally, established societies fund their activities by collecting dues, but a new network of individuals may not have comparable funds to draw on. Finally, a network of individuals may protect its members less well than a consortium-based model against attacks (e.g., on their reputations or on themselves and their families).

Finding 5: If a network of individuals is created to address inaccurate and misleading information, it would need to have a structure that provides credibility and stability, and be agile and fluid in times of crisis.

Network as a Consortium (of Science Societies and Institutions)

Unlike the collective knowledge of individuals, the connectional knowledge of a consortium harnesses the value of relationships and networks by connecting organizations or networks (Dahwan and Joni 2015). The value of a consortium lies in the fact that its members are organizations (e.g., universities) or associations. These members already have stable memberships of individuals and trust within their communities. A particularly effective example of a formal network of organizations is the Societies Consortium on Sexual Harassment in STEMM, which is based on recommendations by the U.S. National Academies (NASEM 2018). The mission of this Societies Consortium is to “support academic and professional disciplinary societies in fulfilling their mission-driven roles as standard bearers and standard setters for excellence in science, technology, engineering, mathematics, and medical (STEMM) fields, addressing sexual harassment in all its forms and intersectionalities.” As an organization, the consortium sought to create a strong, influential, collective voice, “backed by action, of a diverse and large group of committed societies (of many sizes and areas of focus) to set standards of excellence in STEMM fields.” In short, the Societies Consortium was created to bring scientific networks together to address a challenge in science and society. It also aimed to help create and provide resources and tools for researchers to increase ethical behavior in science, specifically with respect to sexual harassment (Societies Consortium on Sexual Harassment in STEMM n.d.).

The benefit of a consortium of societies (or institutions) lies in the strength of a collective, unified voice that can unite thousands of scientists easily and rapidly. Member societies can get resources and information out quickly when needed to address immediate, external issues. The consortium can focus on creating useful resources and leadership guidance for addressing issues, on setting standards for ethics in science, or on providing other common resources. This lets it avoid the slower evolution of a society of individual members in specific scientific disciplines. Another benefit of a consortium is its potential sustainability. A consortium creates resources and tools for societies, which can then pass them on to their individual members and to smaller networks. This creates value for everyone through shared costs and less duplicated effort. Finally, consortia may provide institutional protection to members who help address inaccurate information, protecting individuals’ privacy and security on particularly sensitive topics.

The limitation of a consortium is that individuals can be lost in the structure. The consortium's internal personnel may be organized much like those of an association or institution, with an executive board and a leadership group. Even so, members' individual voices might be harder to hear, because membership in the consortium runs through a smaller number of representatives of other networks and/or institutions. This structure may concentrate leadership and the development of tools in a single body, rather than distributing them across a society made up of individual members. The benefits of a consortium do trickle down to individual society members. However, the resources and tools are vetted by the consortium leadership and developed to address the specific challenge.

Hybrid of Consortium and Individual Models

The committee also considered a hybrid model in which a network of societies (e.g., a consortium) also invites individual members. Such a model would share many of the benefits of a consortium. It would have the additional benefit of allowing individuals not represented by a society or institution to participate. However, the institutional verification and validation of members would be weaker in the hybrid model. Connectivity is of central importance to connectional knowledge, but it can also come from individual champions or influencers. Because few scientists belong to neither an association nor a research institution, the valuable resources of a consortium would be available to nearly all of them. Some scientists and fields are affected more by mis- and disinformation than others and could benefit from receiving support and resources directly from the source.

Another advantage of a hybrid model is that it could take advantage of existing social ties among scientists participating in the same societies or with shared institutional affiliation. Membership in and influence of such a hybrid network would thus spread over the existing “social network” of institutional affiliations, taking full advantage of indirect and direct network effects of this underlying network, in a way reminiscent of how social networks like Facebook took advantage of existing ties among college students in its early growth (Sundararajan 2006).

REGIONAL CONSIDERATIONS

Existing regional networks in Southeast Asia may already have resources that support several needs. These include translating scientific information into multiple local languages, enabling communication and interaction among scientists from different countries, providing access to information and expertise in challenging environments, and planning around important cultural traditions and events. Scientists throughout Southeast Asia use numerous local languages. For example, Malaysia is a multiracial country with many languages and dialects (e.g., Mandarin, Malay, Tamil, Bajau, Iban, Cantonese, and Hakka). Building trust and open lines of communication with scientists and local communities requires using local languages to communicate science during crises.

As in many regions around the world, each Southeast Asian country has its own laws governing scientific activities and data sharing and access. Knowing these laws helps when deciding whether and how to counter inaccurate information. At the heart of some of these policies are differing perspectives on trust, respect, and reciprocal benefit among the region's countries and scientists and international scientists (Merson et al. 2015). In 2019, Indonesia strengthened its laws governing international scientists' access to its biodiversity samples. The changes nearly banned the export of physical or digital information about samples without a material transfer agreement and increased the legal penalties for violating these requirements (Rochmyaningsih 2019). At the same time, Indonesia began supporting open access to public health articles, an effort that accelerated during the COVID-19 pandemic (IAKMI n.d.; Universitas Airlangga n.d.). In Malaysia, legislation on the conservation of biological diversity is mainly sector-based and is often implemented by federal- or state-level entities, sometimes jointly (MyBIS 2015). Malaysia supports open access to scientific data and information, primarily to reap the benefits of research (MOSP n.d.). Since 1992, Thailand has implemented relevant policies and measures to comply with the Convention on Biological Diversity (CBD) (Anti-Fake News Center Thailand n.d.; Jalli 2020; Office of Environmental Policy and Planning 2000; Schuldt 2021; Tanasugarn et al. 2000). Vietnam has implemented its Law on Biodiversity and strengthened its legal structures for managing access to genetic resources and sharing the benefits arising from their use. These measures meet its obligations under the CBD and the associated Nagoya Protocol on Access to Genetic Resources and the Fair and Equitable Sharing of Benefits Arising from their Utilization (Socialist Republic of Vietnam 2008, 2017, 2019, 2020a,b). Several countries, including Vietnam, have established open or limited access to digital sequence information (DSI) to promote scientific research. Meanwhile, the Parties to the CBD are exploring whether DSI should be covered by the Nagoya Protocol (Strategic Framework for Capacity-building 2017).

Several Southeast Asian countries have also established laws and partnerships to address misinformation that emerged during the COVID-19 pandemic (Jalli 2020). The Indonesian government works with fact-checking organizations to address misinformation. The Indonesian Anti-Defamation Society (Masyarakat Anti Fitnah Indonesia) was created before the pandemic to counter hoaxes and other false information (MAFINDO n.d.). The Malaysian government created a platform to cross-check information spread through social media. The Ministry of Health Malaysia (2021) also launched a new website, COVIDNOW, to share COVID-19 data with the public more transparently and thereby increase public trust. Thailand created an Anti-Fake News Center and Singapore created Factually, both of which are designed to correct false information spread through the media (Anti-Fake News Center Thailand 2021; Schuldt 2021).

Finding 6: Many countries in Southeast Asia have established programs to address false claims, but few academic articles have been published to characterize the scale, scope, and enablers of mis- and disinformation in the region. In addition, studies evaluating effective measures to counter false claims in Southeast Asia have not been published.

Recommendation 7: The executive team of the network should develop resources to help scientists understand country-specific laws governing data sharing, scientific research, and combating misinformation. Furthermore, network members should receive information on how countries are similar and different in several respects: trust among scientists and with other stakeholders, respect among scientists, and the mutual benefit of scientific activities. This information would enable productive and positive collaboration.

Recommendation 8: The governance body and executive team of the proposed network should begin building trusted connections with country-specific programs aimed at combating misinformation, including programs that work in local languages, to help identify and communicate corrective information.


NETWORK INFLUENCE

Understanding the flow of information is critical to achieving the vision and mission of the proposed network. With the emergence and widespread use of social media platforms, understanding of how information flows and gains acceptance within networks has grown. The idea of opinion leaders (also called “influentials”) dates back to the 1940s (Katz and Lazarsfeld 1955; Keller and Berry 2003; Lazarsfeld et al. 1948). The assumption behind the idea is that information rarely flows directly from a source to a receiver. Instead, it is passed along by opinion leaders who are closely connected to an information source and who then relay that information to wider audiences in their own networks (Shah and Scheufele 2006). With the emergence of online social networks, both the focus and the terminology have shifted toward the idea of the “influencer.” The concept of the “influencer” is grounded in social influence network theory, which describes a “process of interpersonal influence that occurs in groups, affects persons’ attitudes and opinions on issues, and produces interpersonal agreement, including group consensus, from an initial state of disagreement” (NRC 2003).