1

Introduction

The military is moving toward multi-domain operations (MDO), which involve dynamic and distributed combinations of actions across the traditionally separate air, land, maritime, space, and cyberspace domains, as well as the information domain and the electromagnetic spectrum domain, to achieve synergistic and combined effects with improved mission outcomes. The goal of MDO command and control (MDC2), also called joint all-domain command and control (JADC2), is to achieve operational and informational advantages by “connecting distributed sensors, shooters, and data from all domains to joint forces, enabling coordinated exercise of authority to integrate planning and synchronize convergence in time, space, and purpose” (USAF, 2020, p. 6). MDO is representative of a wide body of research that considers the behaviors and performance not only of individual teams, but also of interrelated teams of teams (or multi-team systems) (Marks et al., 2005; Schraagen et al., 2021; Zaccaro and DeChurch, 2011).

A key facet of MDO is the need to accelerate and increase the military’s ability to develop timely, decision-quality information, integrated across domains, which includes the ability to rapidly understand relationships between information from different domains. The development of rapid, cross-domain situation awareness (SA) is critical to effective planning and decision making, to optimize the use of available military resources. SA is also important for monitoring across operations (e.g., feedback on effects, synchronization, or tempo), which is needed for mission control and dynamic replanning (see NASEM, 2018, 2021a, and 2021b for more information on MDC2).

Artificial intelligence (AI) is seen as a tool that can potentially play a critical role in supporting the military’s objectives for MDC2. AI can significantly boost the ability to process the massively increasing volume of intelligence, surveillance, and reconnaissance data generated each year (Cook, 2021), automate the mission-planning process, and create faster predictive targeting and systems maintenance (Hill, 2021). Achieving this goal, however, requires that the AI systems be highly reliable and robust within the broad range of missions and conditions in which they might be employed, and that these systems operate seamlessly within a much larger and more complex set of military systems and human operations (USAF, 2015).

The goal of this report is to examine the factors relevant to the design and implementation of AI systems with respect to their operations with humans, and to recommend necessary research for achieving successful performance across the joint human-AI team, particularly with regard to MDO, although many research objectives apply to human-AI teaming across various military and non-military contexts.

STUDY BACKGROUND AND CHARGE TO THE COMMITTEE

The Air Force Research Laboratory (AFRL), 711th Human Performance Wing, sought the assistance of the National Academies of Sciences, Engineering, and Medicine to examine the requirements for appropriate use of AI in future operations. The AFRL was particularly concerned with whether the state of the research base can support the design of future systems that promote effective human performance when AI is part of the system. In considering the integration of AI into future operations, the AFRL takes a systems approach, which recognizes that people, technology, and operational systems such as policies, procedures, structures, and informational flows can affect each other and overall mission performance. Therefore, determining the methods and infrastructure that will allow humans to effectively and efficiently interact with AI is fundamental to the USAF’s goals. The committee’s overall task was to examine the state of research on human-AI teaming and to determine gaps and future research priorities. The committee’s full statement of task is shown in Box 1.1.

To address this charge, an independent committee with a broad range of expertise was appointed, including individuals with backgrounds in human factors, cognitive engineering, human-computer interaction, industrial-organizational psychology, and AI, as well as experts in military operations related to human-AI teaming. Brief biographies of the 11 committee members are presented in Appendix A.

COMMITTEE APPROACH

The committee was to complete its work as a “fast-track” consensus committee, with a formal report of its findings provided within nine months of its inception in May of 2021. Its process was to

- Review the available research literature on human-automation integration, AI performance and limitations, human teaming, and human-AI interaction to include models and approaches, AI transparency and explainability, shared SA and mental models, trust and bias, communication and collaboration, measures and metrics, and human-systems integration processes for addressing human-AI teaming;

- Host a virtual workshop, Human-AI Teaming for Warfighter-Centered Design, July 28–29, 2021, to gather data on relevant research efforts;

- Conduct a series of virtual online meetings to support committee deliberations and discussions;

- Identify major findings, research gaps, and research needs, based on the workshop and research literature; and

- Prioritize research needs for advancing the state of knowledge on human-AI teaming.

AUTOMATION AND AI

The work of the committee was largely influenced by the extant research base on human-automation interaction developed over the past 40 years, in addition to research on human interaction with autonomous and semi-autonomous systems, as well as AI in its many forms.

Automation, as used in this report, is defined as a technology that performs tasks independently, without continuous input from an operator (Groover, 2020). Automation can be fixed (mechanical) or programmable (based on defined rules and feedback loops), either via a static set of software commands or via flexible, rapid customization by a human operator. Tasks may be fully automated (autonomous) or semi-automated, requiring human oversight and control for portions of the task. Automation is also defined as “the execution by a machine agent of a function that was previously carried out by a human. What is considered automation will, therefore, change with time” (Parasuraman and Riley, 1997, p. 231).

Autonomous systems have a set of intelligence-based capabilities that can respond to situations that were not explicitly programmed or were not anticipated in the design (i.e., systems that can generate decision-based responses) (USAF, 2013). Autonomous systems have a certain amount of self-government and self-directed behavior, and they can serve as human proxies for decisions (USAF, 2015). Systems may be fully autonomous or partially autonomous. Partially autonomous systems require human actions or inputs for portions of the task.

AI seeks to provide intellectual processes similar to those of humans, including the “ability to reason, discover meaning, generalize, or learn from past experience” (Copeland, 2021). AI systems may be applied to parts of a task (e.g., perception and categorization, natural language understanding, problem solving, reasoning, or system control), or to a combination of task-related actions. AI software approaches may involve symbolic approaches (e.g., rule-based or case-based reasoning), often in the form of decision-support systems; or AI software may apply other advanced algorithms, such as Bayesian belief-nets, fuzzy systems, and connectionist or machine learning (ML)-based approaches (e.g., logistic regression, neural networks, deep learning, or decision trees). AI software may also incorporate hybrid architectures that include more than one algorithmic approach.

In the body of this report, the term AI is used to describe a form of highly capable automation directed at highly perceptual and cognitive tasks. AI potentially improves upon previous forms of automation in its ability to sense and interpret situations, adapt to changes in conditions and the environment, prioritize and optimize based on changes in goals, and refine its abilities through learning. These abilities of AI are often only aspirational, however, and today, many systems built with AI software fall short of these capabilities. Although many autonomous or semi-autonomous systems may employ AI, in other cases AI may merely enhance or facilitate operations that are conducted by humans. As such, many of the committee’s findings are based on the research on human-automation interaction and human-autonomy interaction, with additional research objectives made where further extensions are needed to deal with unique aspects of AI systems (e.g., challenges associated with ML-based AI).

It should be noted that AI is currently being used for many different types of functions—from object recognition to decision making to automated control loop execution—and may have different levels of reliability and capabilities over time. Further, in many cases, AI software may not be stand-alone, but may be embedded within a more complex system with which humans interact. While recognizing these differences, throughout this report the committee will refer to AI software or systems with respect to the features and factors that will be important for its successful integration with humans as part of a larger sociotechnical system.

AI software is thought to be potentially beneficial for military operations due to AI’s ability to (1) execute tasks very quickly, advantaging time-critical mission applications; (2) enhance precision-strike capabilities by automating the processing of intelligence, surveillance and reconnaissance data, target recognition, tracking, selection, and engagement; (3) improve coordination of forces across a distributed network; (4) improve the ability to operate in anti-access/area denial areas where human control opportunities may be limited; (5) increase persistence of operations over time; (6) increase the speed and accuracy of SA and decision making, thus improving lethality and deterrence; and (7) provide enhanced endurance over time (Konaev et al., 2020). These gains will not be possible, however, unless the military pays careful attention to the creation of robust AI applications that emphasize safety and security and are well integrated with the warfighter (Konaev et al., 2020).

LIMITS OF AI

Although there is a tendency among many to view AI as highly capable in comparison to humans (National Security Commission on Artificial Intelligence, 2021), in reality, AI software is subject to a number of performance limitations. The following list is not meant to be exhaustive, and it will likely change over time, but it points out many of the larger challenges associated with current AI software approaches.

- Brittleness: AI will only be capable of performing well in situations that are covered by its programming or its training data (Woods, 2016). When new classes of situations are encountered that require behaviors different from what the AI system has previously learned, it may perform poorly by over-generalizing from previous training. Even if an AI system can learn in real time, such training requires time and repeated experiences, as well as meaningful feedback on decision results, potentially causing performance deficits during the learning cycle.

- Perceptual limitations: Though improvements have been made, many AI algorithms continue to struggle with reliable and accurate object recognition in “noisy” environments, as well as with natural language processing (Akhtar and Mian, 2018; Alcorn et al., 2019; Yadav, Patel, and Shah, 2021). The use of AI for higher-order cognitive processes can be undermined if information inputs are not registered correctly.

- Hidden biases: AI software may incorporate many hidden biases that can result from being created using a limited set of training data, or from biases within that data itself (Ferrer et al., 2021; Howard and Borenstein, 2018). Because ML-based AI software is often opaque (i.e., the features and logic used are not easily subject to human inspection), these biases may go undetected.

- No model of causation: ML-based AI is based on simple pattern recognition; the underlying system has no causal model (Pearl and Mackenzie, 2018). Because AI cannot use reason to understand cause and effect, it cannot predict future events, simulate the effects of potential actions, reflect on past actions, or learn when to generalize to new situations. Causality has been highlighted as a major research challenge for AI systems (Littman et al., 2021).
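The brittleness limitation in the list above can be illustrated with a deliberately simple sketch. It is not drawn from the committee’s work or from the cited literature; it assumes a toy setting in which a straight-line model stands in for a learned AI system, the sine function stands in for the real-world behavior being learned, and the names `fit_line` and `mean_abs_error` are hypothetical helpers introduced only for this example. The model is fit on a narrow range of inputs and then evaluated on a range it never saw.

```python
import math

def fit_line(xs, ys):
    """Ordinary least-squares fit of y = slope * x + intercept."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    sxx = sum((x - mean_x) ** 2 for x in xs)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    slope = sxy / sxx
    intercept = mean_y - slope * mean_x
    return slope, intercept

def mean_abs_error(model, xs, truth):
    """Average absolute gap between the model's output and the true value."""
    slope, intercept = model
    return sum(abs(slope * x + intercept - truth(x)) for x in xs) / len(xs)

# Assumption: math.sin plays the role of the real-world relationship.
truth = math.sin

# "Training data" covers only the interval [0, 1].
train_xs = [i / 20 for i in range(21)]
model = fit_line(train_xs, [truth(x) for x in train_xs])

# Error on situations like those seen in training.
in_range_error = mean_abs_error(model, train_xs, truth)

# Error on a "novel" class of situations: inputs in [3, 4], never seen in training.
novel_xs = [3 + i / 20 for i in range(21)]
novel_error = mean_abs_error(model, novel_xs, truth)

print(f"error inside training range:  {in_range_error:.3f}")
print(f"error outside training range: {novel_error:.3f}")
```

In this toy case the model over-generalizes the trend it saw in training, so its error outside the training range is far larger than its error inside it. Operational AI systems are much more complex, but the sketch shows the basic pattern that the brittleness item describes.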

In the committee’s judgment, although AI software is capable of rapidly processing large volumes of data for known types of events or situations, the limitations of this software mean that, for the foreseeable future, AI will remain inadequate for recognizing and operating in most novel situations. The performance of an AI system could be suboptimal due to unknown biases or limitations in its training data, or the presence of challenges for which no clear “correct response” is known (e.g., cyber operations in which the outcome of an action has not been previously observed). Use of AI in the military context also introduces the particular challenge posed by adversaries who might try to accentuate and exploit the vulnerabilities of AI.

EFFECT OF AI ON HUMAN PERFORMANCE

Automation is known to create challenges for people who must interact with or oversee its performance, and these challenges are also applicable to AI systems.

- Automation confusion: “Poor operator understanding of system functioning is a common problem with automation, leading to inaccurate expectations of system behavior and inappropriate interactions with the automation” (Endsley, 2019, p. 3; see also Sarter and Woods, 1994; Wiener and Curry, 1980). “This is largely due to the fact that automation is inherently complex, and its operations are often not fully understood even by [people] with extensive experience using it” (Endsley, 2019, p. 3; see also Federal Aviation Administration Human Factors Team, 1996; McClumpha and James, 1994). Developing a correct mental model of how an automation works is a major challenge. Furthermore, as people transition from directly performing a task to interacting with automation to accomplish that task, cognitive workload often increases (Hancock and Verwey, 1997; Warm, Dember, and Hancock, 1996; Wiener, 1985).

- Irony of automation: When automation is working correctly, people can easily become bored or occupied with other tasks and fail to attend well to automation performance. Periodically, however, high workload spikes will occur, overstretching human performance (Bainbridge, 1983).

- Poor SA and out-of-the-loop performance degradation: People working with automation can become out-of-the-loop, meaning slower to identify a problem with system performance and slower to understand a detected problem (Moray, 1986; Wiener and Curry, 1980; Young, 1969). The out-of-the-loop problem results from lower SA (both of the automation and of the state of the system and environment) when people oversee automated systems compared to when they perform tasks manually (Endsley and Kiris, 1995). The out-of-the-loop problem can result in catastrophic consequences in novel or unexpected situations (Sebok and Wickens, 2017; Wickens, 1995).

- Human decision biasing: Research has shown that when the recommendations of an automated decision-support system are correct, the automation can improve human performance; however, when an automated system’s recommendations are incorrect, people overseeing the system are more likely to make the same error (Layton, Smith, and McCoy, 1994; Olson and Sarter, 1999; Yeh, Wickens, and Seagull, 1999). That is, human decision making is not independent, but can be biased by errors made by automation (Endsley and Jones, 2012).

- Degradation of manual skills: To effectively oversee automation, people need to remain highly skilled at performing tasks manually, including understanding the cues important for decision making. However, these skills can atrophy if they are not used when tasks become automated (Casner et al., 2014; Young, Fanjoy, and Suckow, 2006). Further, people who are new to tasks may be unable to form the necessary skill sets if they only oversee automation. This loss of skills will be particularly detrimental if computer systems are compromised by a cyber attack (Ackerman and Stavridis, 2021; Hallaq et al., 2017), or if a rapidly changing adversarial situation is encountered for which the automation is not suited (Nelson, Biggio, and Laskov, 2011).

Because AI systems are incapable of adequate performance in most novel situations, it will be necessary for substantial portions of certain tasks to be performed by humans for the foreseeable future. However, the many challenges for human interactions with automated systems will continue, even with more capable automation based on AI. Therefore, in the committee’s judgment, the design and development of effective human-AI teams that can take advantage of the unique capabilities of both people and AI while overcoming the known challenges and limitations of both is an important future research focus.

REPORT STRUCTURE AND SUMMARY

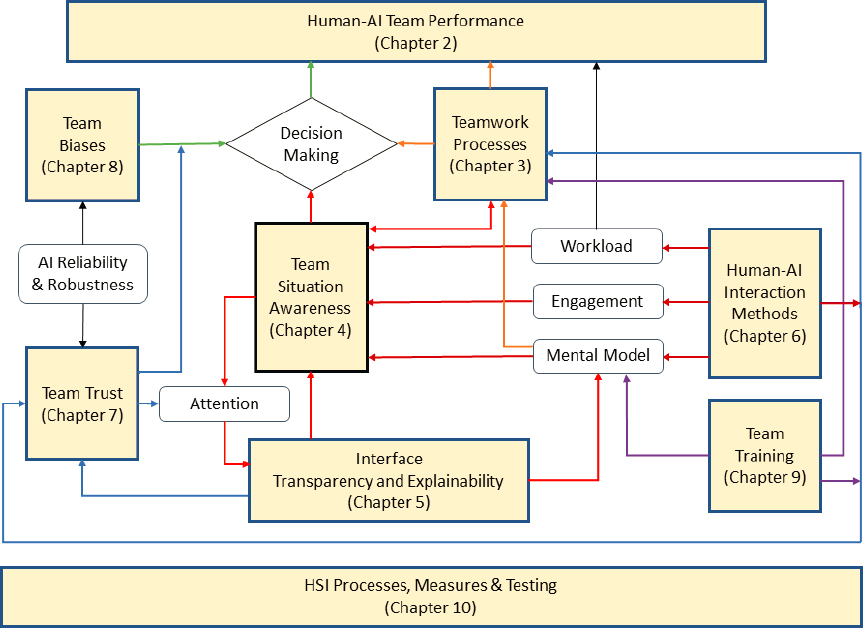

In this report, the committee will examine the role of AI in military operations and MDC2 with respect to that of human decision makers. The report is divided into 11 interrelated chapters, as illustrated in Figure 1-1. The current chapter provides an overview of the problem and the charge to the committee, as described in the statement of task (Box 1.1). Chapter 2 examines teaming strategies and roles for humans and AI, and the effects of these approaches for overall mission performance. Chapter 3 discusses the requirements and processes for effective human-AI teamwork. Chapter 4 focuses on supporting human SA and shared SA in human-AI teams, followed by Chapter 5, which discusses requirements for AI display transparency and explainability. Chapter 6 addresses human-AI interaction design. Chapter 7 focuses on human trust in AI in team contexts. Chapter 8 examines the interactions between AI biases and inaccuracies and human decision biases, as well as methods for addressing them. Chapter 9 focuses on issues related to training human-AI teams. Chapter 10 addresses the overall human-systems integration development and testing process, including measures and metrics of human-AI collaboration. Chapter 11 provides a summary of the committee’s conclusions and a prioritized list of research objectives. As illustrated in Figure 1-1, however, these topics are highly interrelated, and so the committee’s research objectives are best viewed within that light.

Appendix A contains the committee members’ biographies. The agendas and speakers from the data-gathering workshops and open meetings held to fulfill the statement of task are presented in Appendix B. Appendix C provides definitions of the technical terms used in this report.

Throughout the report, the discussion of human-AI teams is pertinent not only to single human-AI teams, but also to multi-team systems that may include AI in various places, at various times, and acting in a variety of roles. It should be noted that many of the research needs discussed in this report that are relevant to human-AI teaming apply to many other contexts in addition to MDO. Specific concerns for MDO are also discussed throughout the report.

SOURCE (Figure 1-1): Based on the human-autonomy system oversight model, Endsley, 2017.