2

How Do Living Systems Represent and Process Information?

A traditional introduction to physics emphasizes that the subject is about forces and energies. This might lead us to think that the physics of living systems is about the forces and energies relevant for life, and certainly this is an important part of our subject. But life depends not only on energy; it also depends on information. Organisms and even individual cells need information about what is happening in their environment, and they need information about their own internal states. Many crucial functions operate in a limit where information is scarce, creating pressure to represent and process this information efficiently. Understanding the physics of living systems requires us to understand how information flows across many scales, from single molecules to groups of organisms. From the theoretical side, these explorations often have reinforced the deep connections between statistical physics and information theory, and on the experimental side we have seen the development of extraordinary new measurement techniques. The search for the physical basis of information transmission in living systems has led to foundational discoveries, pointing to new physics problems. A sampling of these issues is provided in Table 2.1.

TABLE 2.1 Physics of Information in Living Systems

| Discovery Area | Broad Description of Area | Frontier of New Physics in the “Physics of Life” | Potential Application Areas |
|---|---|---|---|
| DNA sequences | Representation and readout of information in the genetic code, and beyond. | Non-equilibrium physics of proofreading; physical encoding of regulatory signals. | Synthetic biology; personalized medicine. |
| Molecular concentrations | Extracting information from the environment, sensing and controlling internal states, all through changing concentrations. | Physical limits to sensing and signaling in realistic contexts; information processing in networks; information about spatial patterns. | New tools and models for genetic networks in health and disease. |
| The brain | Understanding the principles of electrical signal transmission within cells and across synapses; understanding how these signals embody codes for perception, action, memory, and thought. | Theories of coding in single neurons and large networks; physical limits to information transmission; connecting molecular dynamics to macroscopic functions. | New tools for exploring the brain; new ingredients for neural networks in artificial intelligence. |
| Communication and language | Exploring physical representation of information in communication systems from bacteria to bird song, and organizational principles across these scales. | Discovery of new communication modes; communication and self-organization in bacterial communities; searching for information; long-ranged correlations in “meaningful” signals. | New algorithms for robotic search; tools for understanding language models in artificial intelligence. |

INFORMATION ENCODED IN DNA SEQUENCE

The fact that humans look like their parents is evidence that information is transmitted from generation to generation. This is perhaps the most fundamental example of information flow in living systems, underlying the persistence of life itself and its evolutionary change. The fact that this information is encoded in DNA, and that the transmission of this information is enabled by the double helical structure, are among the most profound results of 20th-century science. As described previously, these results emerged from an interplay among physics, chemistry, and biology, and these basic facts about DNA and the encoding of genetic information are now taught to high school students. But the search for physical principles of genetic information transmission did not stop with the discovery of the double helix. Efforts to understand how this information is copied so reliably from one generation to the next, how it is “read” by the cell, and how it can be rewritten all have driven the development of new experimental tools and new theoretical ideas in the physics community.

The Genetic Code

The structure of DNA immediately suggested that information encoded in the sequence could be transmitted reliably through base pairing: In the four letters or bases of the DNA alphabet, A pairs with T and C pairs with G, and the structure of the molecule makes the “wrong” pairings much less favorable. This is an example of “complementarity,” in which molecules find their correct partners because their structures literally fit together. This is important not only for A to find T as DNA is copied, but for many other molecules to find their partners in the complex environment of the cell.

But the fidelity with which DNA is copied is extraordinary. In humans, there are a few billion letters in the DNA sequence, and when a cell makes a copy of its DNA there are only a handful of mistakes. The structure of DNA favors the correct AT and CG pairings, strongly, but not strongly enough to explain that mistakes occur with a probability of only one in a billion.

In more formal terms, the molecular structure determines an energy difference between the correct and incorrect pairings. The correct pairing has lower energy, and thus is favored, much as a ball will roll downhill to lower its energy in the gravitational field of Earth. But unlike the ball, a single molecule is jostled, significantly, by the random motions of neighboring molecules. As a result, there is some probability that the system will find itself in higher energy, less favorable states, and this is determined precisely by the energy difference and the temperature of the surroundings. For base pairing in copying DNA, this probability is roughly one in 10,000. This is small, but nowhere near the level of one in a billion found in real organisms.
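The link between energy difference and error probability can be made explicit. As a sketch, assuming only two competing states (correct and incorrect pairing) in thermal equilibrium, and writing $\Delta E$ for the energy difference between them (a symbol introduced here for illustration), the Boltzmann distribution gives:

```latex
\frac{p_{\text{wrong}}}{p_{\text{right}}} = \exp\!\left(-\frac{\Delta E}{k_B T}\right)
\quad\Longrightarrow\quad
\Delta E = k_B T \,\ln\!\left(\frac{p_{\text{right}}}{p_{\text{wrong}}}\right).
% An error probability of 10^{-4} corresponds to
%   \Delta E \approx \ln(10^{4})\, k_B T \approx 9.2\, k_B T,
% whereas reaching 10^{-9} in equilibrium would require
%   \Delta E \approx \ln(10^{9})\, k_B T \approx 20.7\, k_B T.
```

In this simplified picture, accounting for the observed fidelity by energy differences alone would require more than doubling the discrimination energy.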

The discrepancy between the error probabilities determined by molecular structure and those observed in living cells exists at every step in the processing of information encoded in the DNA: in the copying of the DNA itself; in the transcription of DNA into mRNA; in the connection of transfer RNA (tRNA) molecules to amino acids; and in the binding of tRNAs to mRNA during the translation of the mRNA sequence into the amino acid sequence of proteins. In each case living cells achieve a sorting of molecular components that is vastly more accurate than would be expected from energy differences alone. In the 1970s, it was proposed that these very different biochemical processes all face a common physics problem.

In order to drive error probabilities below the levels expected from energy differences, cells must perform a function very much like the hypothetical “Maxwell’s demon” that was introduced in the 1800s as a challenge to our molecular understanding of thermodynamics. Briefly, the demon could sort molecules, so that for example it could arrange for all the fast-moving molecules to be collected on one side of a container. But a collection of fast-moving molecules is hotter than a collection of slow-moving molecules, so the demon would produce a temperature difference, and in this way could build an engine out of nothing. While the original description of the demon focused on molecular speeds, sorting by any molecular property would be sufficient to power some kind of engine. Thus the seemingly innocuous sorting of molecules, if it could be done, would make it possible to build a perpetual motion machine, violating the second law of thermodynamics. The solution to the problem of Maxwell’s demon drove a much deeper understanding of the connections among energy, entropy, and information.

The short answer is that Maxwell’s demon cannot sort molecules reliably without itself expending energy, and the minimum energy expenditure is enough to compensate for the energy that could be extracted by an engine after the molecules were sorted. Similarly, in order to lower the error probabilities in processing information encoded in DNA, the cell expends extra energy in the processes of DNA replication, transcription, tRNA charging, and translation. Although the details vary, all of these processes involve steps that dissipate energy, sometimes in apparently wasteful ways, but these futile steps serve to increase precision. These mechanisms are called “proofreading,” analogous to the correction of spelling errors in text. Proofreading is an important example of how diverse mechanisms of life can be understood as addressing a common physics problem, and these molecular events provided important inspiration for understanding the thermodynamics of information and computation.
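The benefit of proofreading can be illustrated with a small calculation. The sketch below assumes an idealized limit that is not spelled out in the text: each energy-consuming proofreading step can at best apply the equilibrium discrimination one more time, so that n steps reduce the error probability from f to f^(n+1). Real mechanisms fall short of this bound, and the function name is hypothetical.

```python
def min_proofreading_steps(equilibrium_error, target_error):
    """Smallest n such that equilibrium_error ** (n + 1) <= target_error.

    Assumes the idealized limit in which every proofreading step
    multiplies the error probability by one more factor of the
    equilibrium error (an assumption of this sketch).
    """
    if not 0.0 < equilibrium_error < 1.0:
        raise ValueError("equilibrium_error must lie strictly between 0 and 1")
    if target_error <= 0.0:
        raise ValueError("target_error must be positive")
    steps = 0
    while equilibrium_error ** (steps + 1) > target_error:
        steps += 1
    return steps


# Numbers quoted in the text: roughly one in 10,000 from energy
# differences alone, versus one in a billion observed in cells.
steps_needed = min_proofreading_steps(1e-4, 1e-9)
print(f"ideal proofreading steps needed: {steps_needed}")
```

Under these assumptions a single ideal step (error 10^-8) is not quite enough to reach one in a billion, which suggests why cells may combine several energy-dissipating checks.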

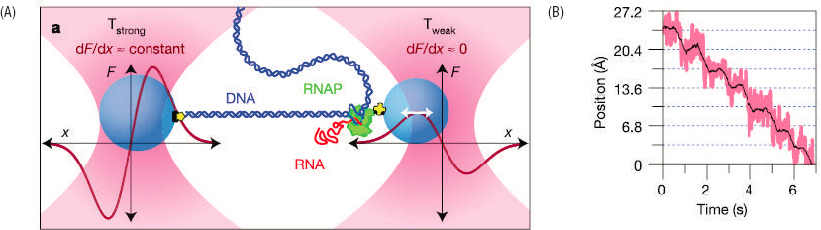

Optical trapping and single molecule manipulation, as described in Chapter 1, created the opportunity to observe proofreading in action, step by step. Figure 2.1 shows a schematic of experiments that probe the transcription of DNA into mRNA, in a minimal system that includes just the one essential protein, RNA polymerase (RNAP). In order to “read” the DNA sequence and produce the corresponding mRNA, RNAP moves along the DNA strand, trailing the growing mRNA behind it. After several generations of improvement, it was possible to measure this motion with a precision comparable to the diameter of an atom, and thereby resolve the discrete steps from one letter to the next along the DNA sequence.

Watching RNAP walk, base by base, along the DNA, reveals that there are occasional pauses, and even reversals. These reversals are enhanced when the polymerase is forced to make mistakes by providing an excess of incorrect bases. It is known that errors in transcription increase if a discrete subunit of RNAP is removed, and when this is done the reversals disappear. It seems very likely that these experiments thus have observed directly the proofreading steps in transcription of mRNA. Single molecule experiments on the ribosome have given similar insight into the proofreading processes involved in translation.

The sequence of bases along DNA encodes two very different kinds of information, genes and their regulatory elements. In genes, the DNA sequence represents the amino acid sequence of a protein molecule that the cell will synthesize, and this mapping from DNA to amino acids is “the genetic code.” The genetic code is almost universal across the entire tree of life, although there are important variations. One of the questions that has intrigued both physicists and biologists is whether the code(s) used were selected for some functional property, or whether it is just an accident of history. Since cells expend energy to minimize the errors in reading the code, it might be that evolution has selected codes that are more tolerant to errors, or perhaps even error correcting, as in the codes used in engineered communication and data transmission systems. The standard genetic code does have the property that the most common errors in translation lead to amino acids that have very similar physical properties, thus minimizing the impact of errors. Direct theoretical calculations indicate that only a tiny fraction of all possible codes could be more error tolerant in this sense.

In order to make use of encoded information, one needs the codebook. In living cells, the codebook for the genetic code is embodied in the tRNA molecules that physically connect the bases along mRNA to the corresponding amino acids. But these molecules themselves need to be synthesized by the cell, and there is a separate protein molecule (enzyme) that attaches each of the 20 amino acids to the corresponding tRNAs. Thus, while genetic information is localized in DNA, the codebook is distributed across this family of proteins. Analysis of the sequence of chemical reactions catalyzed by these enzymes provided the first convincing evidence for proofreading.

Regulatory Sequences

Beyond the DNA sequences that encode the amino acid sequences of proteins, there are sequences that carry information about how the synthesis of proteins is regulated. This reading out of genetically encoded information is referred to as “expression” of the corresponding genes. In bacteria, regulatory sequences often are physically close to the genes that they control. Proteins called transcription factors (TFs) bind to these sequences and can interact directly with RNAP or act through an intermediate protein. The geometry can be such that TF binding gets in the way of RNAP binding at the site where transcription begins, in which case the transcription factor inhibits or represses the expression of the gene. Alternatively, TF binding can stabilize the binding of RNAP to the start site, enhancing or activating transcription.

Even in a bacterium such as Escherichia coli, there are more than 200 different transcription factor proteins; in human cells, there are more than 1,000. Classical studies focused on the interaction of one transcription factor with one gene. But early work made clear that a single TF could bind to many different short sequences of DNA, either to provide more complex regulation of a single gene or parallel regulation of multiple genes. From the earliest data, there was an effort to build models of TF binding to DNA based on equilibrium statistical mechanics, and also effective statistical mechanics for the ensemble of target sequences. These theoretical ideas linked gene regulation, protein/DNA interactions, and the evolution of sequence variation in ways that continue to influence our thinking.

In the 21st century, it became possible to explore regulatory sequences on a much larger scale, exploiting tools from genetic engineering and concepts from physics. An example of such an experiment is to insert the gene for a fluorescent protein (Chapter 6) into Escherichia coli and place a known regulatory sequence close enough to this gene that it will control its expression. As a result, when an environmental signal is read by the regulatory sequence leading to the expression of the gene under its control, this will trigger the synthesis of the fluorescent protein, so that the bacteria literally will glow in response to light. But rather than inserting a single regulatory sequence into all the bacteria in a population, one can insert tens of thousands of different regulatory sequences into different bacteria. The binding of the TF will be stronger or weaker depending on the sequence, and this will change the amount of the fluorescent protein that is synthesized and hence the brightness of the fluorescence. Measuring the fluorescence from each individual bacterium and sequencing its regulatory DNA makes it possible to measure quite precisely how much information each individual letter along the DNA carries about the fluorescence signal.
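The quantity measured in such an experiment can be sketched as the mutual information between the base at each position and the fluorescence level. The example below is a minimal illustration, assuming that fluorescence has been discretized into bins and using the simple plug-in estimator, which is biased for small samples; actual analyses require more careful estimation. The function names and the toy data are hypothetical.

```python
import math
from collections import Counter


def mutual_information_bits(pairs):
    """Plug-in estimate of I(X;Y), in bits, from a list of (x, y) observations."""
    n = len(pairs)
    joint = Counter(pairs)
    count_x = Counter(x for x, _ in pairs)
    count_y = Counter(y for _, y in pairs)
    info = 0.0
    for (x, y), count in joint.items():
        # p(x,y) / (p(x) p(y)) written in terms of raw counts
        ratio = count * n / (count_x[x] * count_y[y])
        info += (count / n) * math.log2(ratio)
    return info


def information_footprint(sequences, fluorescence_bins):
    """Mutual information between each sequence position and the fluorescence bin."""
    length = len(sequences[0])
    return [
        mutual_information_bits(
            [(seq[i], b) for seq, b in zip(sequences, fluorescence_bins)]
        )
        for i in range(length)
    ]


# Toy data (made up): position 0 fully determines the bin, position 1 is irrelevant.
toy_sequences = ["AA", "AC", "TA", "TC"]
toy_bins = [1, 1, 0, 0]
print(information_footprint(toy_sequences, toy_bins))
```

In this toy case the first position carries one full bit about the fluorescence bin and the second carries none, mimicking positions that matter and positions that can be chosen at random.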

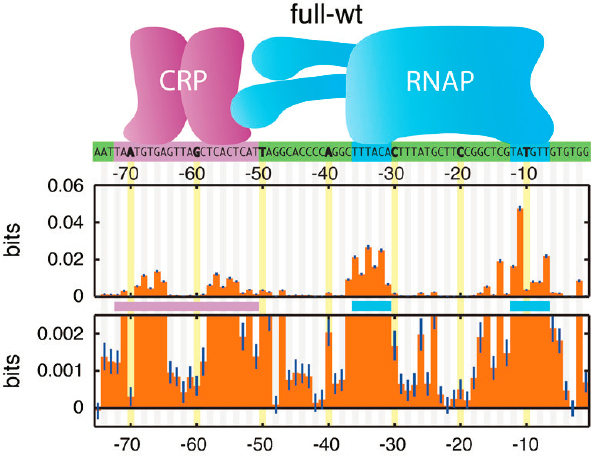

The “information footprint” in Figure 2.2 corresponds directly with what is known about the contacts between these proteins and DNA from their molecular structures. RNAP and the transcription factor (here called CRP) bind at particular positions along the DNA, measured from the point at which RNAP starts to transcribe messenger RNA (position 0). Strikingly, there are positions where the DNA base carries zero information, and thus can be chosen at random without changing the level of transcription. Although the contribution of any single base is small, combinations of bases carry much more information. This can be captured by a model in which every base makes an additive contribution to two numbers, one of which defines the interaction of CRP with DNA and one of which defines the interaction of RNAP with DNA. This is exactly what is expected from the equilibrium statistical mechanics models: these two numbers are the free energies for binding of CRP and RNAP to DNA. But statistical mechanics predicts that the numbers combine in a very specific way, with one more parameter describing the interaction energy between the two proteins when they are both bound. This combination captures all the available information, and the inferred value of the CRP/RNAP interaction agrees with independent measurements.
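The structure of such an equilibrium model can be sketched in a few lines. The example below assumes a four-state description (empty DNA, CRP bound, RNAP bound, both bound), energies measured in units of k_B T, and expression proportional to RNAP occupancy. The energy matrix values, the concentration prefactors, and the function names are illustrative placeholders, not fitted parameters.

```python
import math


def binding_energy(sequence, energy_matrix):
    """Sum the additive per-base contributions (in k_B T) over a binding site."""
    return sum(energy_matrix[i][base] for i, base in enumerate(sequence))


def rnap_occupancy(e_crp, e_rnap, e_interaction, crp_weight=1.0, rnap_weight=1.0):
    """Probability that RNAP is bound in a four-state equilibrium model.

    crp_weight and rnap_weight stand in for concentration-dependent
    prefactors; their values here are assumptions of this sketch.
    """
    w_crp = crp_weight * math.exp(-e_crp)
    w_rnap = rnap_weight * math.exp(-e_rnap)
    w_both = w_crp * w_rnap * math.exp(-e_interaction)
    partition = 1.0 + w_crp + w_rnap + w_both
    return (w_rnap + w_both) / partition


# Hypothetical two-base site: the consensus base costs 0, any mismatch costs 1 k_B T.
toy_matrix = [
    {"A": 0.0, "C": 1.0, "G": 1.0, "T": 1.0},
    {"A": 1.0, "C": 1.0, "G": 1.0, "T": 0.0},
]
e_rnap_site = binding_energy("AT", toy_matrix)      # consensus site
e_rnap_mutant = binding_energy("GT", toy_matrix)    # one mismatch

for label, e_rnap in [("consensus", e_rnap_site), ("mutant", e_rnap_mutant)]:
    without_help = rnap_occupancy(e_crp=-1.0, e_rnap=e_rnap, e_interaction=0.0)
    with_help = rnap_occupancy(e_crp=-1.0, e_rnap=e_rnap, e_interaction=-3.0)
    print(label, round(without_help, 3), round(with_help, 3))
```

When the interaction energy is zero, the RNAP occupancy is independent of CRP; a favorable (negative) interaction energy raises it, which is the activation described in the text.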

The information footprinting experiments provide strong support for relatively simple statistical physics models of protein/DNA interaction and the regulation of transcription, at least in bacteria. These models predict a wide range of phenomena: the dependence of TF effectiveness on DNA sequence, the dependence of gene expression levels on TF concentration, and more. With the advent of techniques for measuring gene expression in single cells, many of these predictions have been tested in quantitative detail, providing the kind of dialogue between experiments and theory that is the hallmark of other areas of physics.

Sometimes the regulatory sequence is more distant from the gene, and in order to influence the expression of the gene it has to contact the distant RNA polymerase, thereby looping out the intervening DNA. Since DNA is a polymer, this looping costs (free) energy, which can be incorporated in statistical physics models of gene expression. Such models have been successful in explaining how the amount of gene expression depends on the distance between the regulatory sites and the gene. Because DNA is a double helix, bending is not enough to bring two sites into contact; the molecule must also twist. The twist entails an additional energy cost, and this depends periodically on the distance between sites, with the periodicity matching the periodicity of the helix itself. One can engineer the genome to vary distances with single base pair resolution, and it is dramatic to see how these sub-nanometer rearrangements are propagated, quantitatively, to order-of-magnitude variations in the macroscopic level of gene expression. It now is possible to measure directly the looping of DNA in response to transcription factor binding, although it remains challenging to connect these single molecule experiments quantitatively to the macroscopic behavior of gene expression.

The relatively simple picture of these processes in bacteria, where a small number of transcription factors regulate the expression of nearby genes, stands in contrast to what has been learned about the control of gene expression in higher organisms. In these cases, a single gene can be regulated by transcription factor binding to dozens of enhancer sites, spread over a length of DNA covering tens of thousands of bases. Recent work tracks these individual molecular components in several systems, showing that activation of transcription requires an occupied enhancer site to come into close proximity to the transcriptional start site, but super-resolution microscopy suggests that proximity is not contact. Independent measurements show that there is condensation of a droplet of protein molecules around active transcription sites, and this could mediate the interactions that are thought to occur by direct contact in bacteria. Interestingly, similar droplets now have been observed in bacteria. All of these results point toward a view in which the regulation of gene expression is more of a collective effect, emerging from interactions among a large number of individual protein molecules. This connects to other examples of protein condensation (Chapter 3) and raises theoretical questions about how the specificity of individual molecular interactions is preserved in the presence of these collective effects.

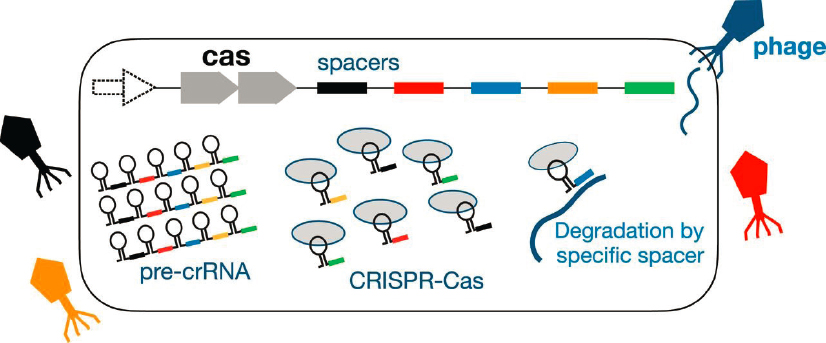

CRISPR

Finally, bacteria can edit their own DNA, storing memories of their experiences. The edited sequences are CRISPR—clustered regularly interspaced short palindromic repeats. These sequences include segments that are extracted from bacteriophages that infect the bacterium, and are inserted into the bacterial DNA by the enzyme Cas9 (see Figure 2.3). The CRISPR/Cas9 apparatus has been adapted into a tool for modifying the genomes of other organisms, and this has had a revolutionary impact on biology, on many areas of biological physics, and on prospects for gene therapy. This work was recognized with a Nobel Prize in 2020. In the life of the bacterium, however, CRISPR/Cas9 serves as a kind of immune system, carrying a memory of previous infection and allowing more rapid recognition and response to future infections. Our immune system also carries a memory, but humans do not pass this information on to their offspring. Theorists in the biological physics community have tried to understand how the different dynamics of environmental challenges drive the emergence of these different strategies in different classes of organisms.

Bacteria have limited resources that can be devoted to the CRISPR system. If the cell tries to keep a memory of too many different phages in the environment, then there simply will not be enough copies of the Cas proteins to do the job. On the other hand, in an environment with diverse challenges, keeping track of too few phages can leave the cell vulnerable. With reasonable assumptions, one can calculate the probability of survival as a function of the number of stored memories and the number of different phages in the environment, and the result is that the optimal number of memories is close to the size of the CRISPR systems in real cells. This is just the start of an ambitious program to understand features of this remarkable system as responses to the environment, quantitatively.
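The tradeoff described above can be illustrated with a deliberately simple toy model. This is not the calculation referred to in the text: the functional forms, parameter values, and function names below are all assumptions introduced for illustration. The sketch supposes that every phage type is equally likely to attack, that a fixed pool of Cas proteins is shared equally among stored memories, and that a memory is effective with a probability that saturates cooperatively (Hill form) in the number of Cas proteins available to it.

```python
def survival_probability(n_memories, n_phage_types, n_cas, half_saturation, hill=2):
    """Toy survival probability against one randomly chosen phage type."""
    if not 1 <= n_memories <= n_phage_types:
        raise ValueError("n_memories must be between 1 and n_phage_types")
    # Chance that the attacking phage is one of the remembered types
    p_remembered = n_memories / n_phage_types
    # The Cas pool is diluted across all stored memories
    cas_per_memory = n_cas / n_memories
    # Cooperative (Hill-type) effectiveness of a single memory
    p_effective = cas_per_memory**hill / (
        half_saturation**hill + cas_per_memory**hill
    )
    return p_remembered * p_effective


def optimal_memory_count(n_phage_types, n_cas, half_saturation, hill=2):
    """Number of memories that maximizes the toy survival probability."""
    return max(
        range(1, n_phage_types + 1),
        key=lambda m: survival_probability(
            m, n_phage_types, n_cas, half_saturation, hill
        ),
    )


# Hypothetical numbers: 500 phage types, 1,000 Cas proteins, and a memory
# that is half effective when 50 Cas proteins are devoted to it.
best = optimal_memory_count(n_phage_types=500, n_cas=1000, half_saturation=50)
print("optimal number of memories in this toy model:", best)
```

With these assumptions, storing too few memories leaves most phages unrecognized, while storing too many dilutes the Cas proteins, so an intermediate optimum emerges well below the number of phage types.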

Perspective

Information encoded in DNA has been the source of deep questions about the physics of life for nearly 70 years. This section highlights some of the major results and points to several frontiers where progress is expected in the coming decade. A common theme is a shift from thinking about isolated bits of information to seeing this information in context, particularly important since DNA forms the substrate on which evolution takes place (Chapter 4). This context may be provided by the whole population of tRNA molecules that embody the genetic codebook, by the large number of molecules that are involved in controlling the expression of even single genes in higher organisms, or by the ensemble of phages that challenge the health of individual bacteria. In each of these cases and more, it is reasonable to expect progress in the coming decade both from the introduction of new experimental methods and from the formulation of sharper theoretical questions about how these systems function.

INFORMATION IN MOLECULAR CONCENTRATIONS

Throughout the living world, information crucial to life’s functions is represented or encoded in the concentrations of specific molecules. This happens on a macroscopic scale, as when insects find their mates by following the odor of pheromones, or when cells in our body secrete and respond to hormones that circulate in the blood. It also happens on a microscopic scale as cells control their internal states by changing the concentrations of signaling molecules. While such molecular signaling is ubiquitous in the living world, it is less connected to the traditional subject matter of physics, where information most often is carried by electrical or optical signals. On the theoretical side, this raises new physics problems: Which physical principles of information transmission are universal, and what new principles emerge for the case of molecular signaling? On the experimental side, methods from physics have combined with those from chemistry and biology to provide an unprecedented ability to observe and manipulate molecular signals in living cells. These foundational developments set the stage for today’s excitement in this crucial area of biological physics.

Signaling, Growth, and Division

In describing the ability of the visual system to count single photons, and the ability of bacteria to count the molecules arriving at their surface (Chapter 1), we have encountered signaling via changing molecular concentrations as intermediate steps along the path from input to output (see Figures 1.11 and 1.12). In vision, the input is light, but the absorption of one photon triggers a structural change in one rhodopsin molecule, and it is useful to think of the cell as having to “smell” this one molecule out of 1 billion others. The output of the cell is an electrical voltage or current across the membrane, but this current flows through channels whose state is controlled by the concentration of a small signaling molecule, cGMP. In this sense, light intensity is represented internally by the concentration of cGMP, and this concentration in turn is the result of a cascade of molecular events. Similarly, in bacterial chemotaxis there is a cascade from the cell surface receptors to the phosphorylation of the CheY protein, which ultimately controls the flagellar motor much as cGMP controls ion channels. In both cases, prolonged inputs generate adaptation, which reduces the response, but this requires the accumulation of another internal molecular signal (Chapter 4). Amplification via molecular cascades is widespread, and phosphorylation is both a common signal and a central component of many cascades. Proteins that act as enzymes to phosphorylate other proteins are called kinases, and there are kinase kinases as well as kinase kinase kinases, testimony to the ubiquity of these cascades.

As cells grow and divide, their size, structure, and timing are encoded in the concentrations of several different molecules. One example is the Min proteins discussed in Chapter 1. The discovery of molecules that control the cycle of cell division in eukaryotic cells was recognized by a Nobel Prize in 2001, but controversies remain about the connection of these core molecules to the phenomenology of growth. It has been proposed that there are master molecules which trigger cell division at a threshold concentration, so that the accumulation or dilution of these molecules is analogous to the accumulation of evidence for a decision. Candidates for these molecules are not universal, and it is not clear whether this is the right picture. Many groups in the biological physics community have gone back to the macroscopic features of growth and division in bacteria, discovering for example that fluctuations in growth rate can be inherited and correlations maintained across a dozen generations; the molecules whose concentrations represent this information have not been identified.

From Transcription Factors to Genetic Networks

An important class of molecules that convey information through their concentrations are transcription factors. As explained in relation to Figure 2.2, these proteins bind to particular DNA sequences and regulate the synthesis of mRNA from nearby genes. At the start of the 21st century, the study of information flow in transcriptional control was revolutionized by the introduction of new experimental methods. The discovery and engineering of green fluorescent proteins, described in Chapter 6, opened a path to genetically engineering organisms so that TFs could control the synthesis of fluorescent proteins, or so that the TFs themselves could be fused with fluorescent proteins. In addition, DNA sequences of interesting genes could be modified so that the resulting mRNA molecules attract other fluorescent proteins engineered into the genome, providing a readout of transcription at its start. Improved optical microscopes then allow visualization of these molecules in the living cell. Even classical ideas about tagging mRNA molecules with fluorescent labels could be pushed, with better microscopes, to the point of counting each individual molecule, one by one.

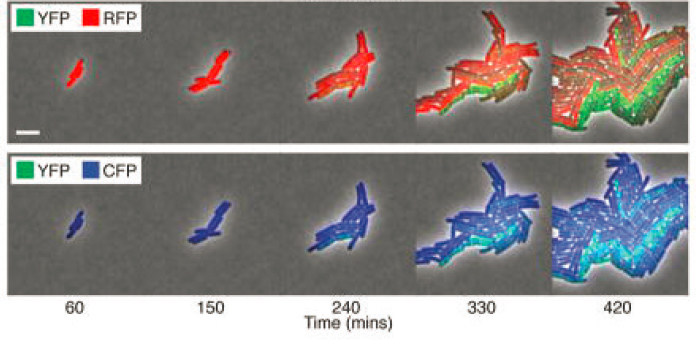

Measurement with fluorescent proteins in single cells allowed the first measurements of noise in transcriptional control, separating intrinsic noise in the control mechanism from extrinsic variations in cellular conditions. This led to a flurry of theoretical work, trying both to understand the precise physical origins of this noise and to explore the implications of noise for information transmission. A second generation of experiments, such as that in Figure 2.4, follows the dynamics of multiple proteins to resolve the direction of information flow from the transcription factor to its target gene. Careful measurements of correlations in the fluctuations of these protein concentrations provide a detailed test for models of the regulatory interactions, which in turn provide a foundation for engineering new genetic circuits (Chapter 7).
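One common way to separate the two noise sources is a dual-reporter analysis, in which two distinguishable fluorescent proteins are placed under identical control in the same cell. The text does not specify the analysis, so the estimator below should be read as a sketch of that widely used approach. The simulated data, parameter values, and function names are hypothetical.

```python
import random


def mean(values):
    return sum(values) / len(values)


def noise_decomposition(reporter_a, reporter_b):
    """Return (intrinsic, extrinsic, total) squared noise from paired readings.

    Each pair (a, b) is assumed to come from two identically regulated
    reporters measured in the same cell.
    """
    norm = mean(reporter_a) * mean(reporter_b)
    pairs = list(zip(reporter_a, reporter_b))
    # Differences between the two reporters within a cell: intrinsic noise
    intrinsic_sq = mean([(a - b) ** 2 for a, b in pairs]) / (2.0 * norm)
    # Covariation of the two reporters across cells: extrinsic noise
    extrinsic_sq = (mean([a * b for a, b in pairs]) - norm) / norm
    total_sq = (mean([a * a + b * b for a, b in pairs]) - 2.0 * norm) / (2.0 * norm)
    return intrinsic_sq, extrinsic_sq, total_sq


def simulate_dual_reporter(n_cells, seed=0):
    """Made-up data: a shared cell-to-cell factor plus independent fluctuations."""
    rng = random.Random(seed)
    reporter_a, reporter_b = [], []
    for _ in range(n_cells):
        shared = rng.gauss(1.0, 0.2)                    # extrinsic variation
        reporter_a.append(100.0 * shared + rng.gauss(0.0, 10.0))
        reporter_b.append(100.0 * shared + rng.gauss(0.0, 10.0))
    return reporter_a, reporter_b


a, b = simulate_dual_reporter(20000)
intrinsic_sq, extrinsic_sq, total_sq = noise_decomposition(a, b)
print(f"intrinsic^2 = {intrinsic_sq:.4f}, extrinsic^2 = {extrinsic_sq:.4f}, "
      f"total^2 = {total_sq:.4f}")
```

By construction the intrinsic and extrinsic terms add up to the total, so the decomposition assigns all of the observed cell-to-cell variability to one source or the other.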

Transcription factors are proteins, and their expression is regulated by other TFs, resulting in networks of genetic control. There is considerable interest in understanding information flow through these networks, and the possibility that they generate emergent, collective states. Interest comes both from the biological physics community (e.g., in the spirit of Chapter 3) and from the biology community (Chapter 6). Because fluorescent proteins have broad absorption and emission profiles, however, it is difficult to adapt these methods to studying many

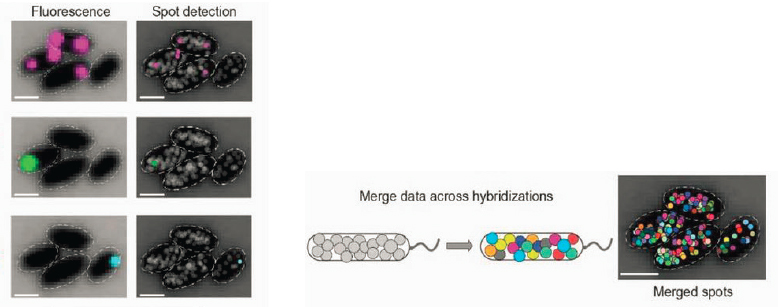

genes simultaneously in live cells. As a result, many investigators have looked to counting mRNA molecules rather than proteins. One approach uses microfluidic methods developed in the biological physics community to manipulate large numbers of single cells, ultimately breaking them open and identifying all of their mRNA molecules using biochemical sequencing methods (Chapter 6). These single cell sequencing methods have swept through the biology community over the last decade. A different strategy, based again on optical methods, is to fix the cell so that one can look for longer times, perhaps at many genes in sequence. An example of this is in Figure 2.5, where bacteria are fixed and then labeled by fluorescently tagged DNA molecules that are complementary to the mRNA molecules of different genes. With modern microscopy methods, one can count these molecules, one by one, and do this for each of many genes to build up a snapshot of the state of a single cell.

The comparison of Figures 2.4 and 2.5 highlights an important point about experimental methods. In one case (see Figure 2.4), genetic engineering turns the concentration of a handful of proteins into fluorescence signals with different colors, and this allows measuring molecular concentrations over time in live cells. While there are many technical issues, in favorable cases this really provides a readout of the information at several nodes of a genetic network, in a live, functioning cell. A basic limitation of these methods is that the absorption and emission spectra of fluorescent proteins are broad, so that if we try to monitor too many nodes simultaneously the signals will be corrupted by crosstalk. In the other case (see Figure 2.5), the action is stopped, providing time for multiple measurements that eventually probe the concentrations of several thousand different molecular species, with single molecule precision. While these observations on fixed cells cannot provide dynamical information, we can sample the distribution of signals across large populations of cells. Both approaches still are developing rapidly, and we expect to see deeper insights emerging from the analysis of these measurements in the coming years.

Positional Information

It has long been appreciated that developing embryos are a system in which ideas about information and the control of gene expression come together. Embryos begin as one fertilized egg cell, and then go through many rounds of cell division. For several cycles, the cells are functionally equivalent, and could in principle grow to become any part of the organism’s body. But at some time in the course of development, cells need to adopt distinct identities. Cellular identities are defined, in part, by their patterns of gene expression, and these need to be matched to their spatial location in the body. In this sense, cells need to “know” their positions.

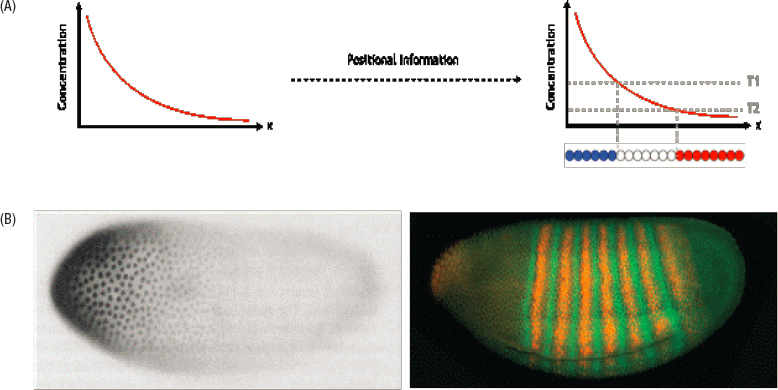

Cells in a developing embryo could acquire positional information in many ways. One common theme is that this information is carried by the concentrations of specific molecules—morphogens—as schematized in Figure 2.6. But how do the necessary spatial variations in molecular concentration arise? One possibility that we have encountered in Chapter 1 is that patterns can arise out of instabilities in the dynamics of some underlying biochemical or genetic network, operating in an otherwise homogeneous embryo. Another possibility is that the homogeneity or symmetry is broken not spontaneously but by some external event, perhaps at the moment of fertilization or even in the construction of the egg itself. These two different scenarios lead to different physics problems, and real living systems almost certainly combine elements of both.

A dramatic step forward was the identification of these morphogens in particular systems. In the fruit fly, which had been a favorite experimental testing ground for ideas about genes since the early years of the 20th century, there had long been indications that mutations in a single gene could cause macroscopic changes in the pattern of development, leading to the deletion, rearrangement, or duplication of body parts. In the 1970s, there was a systematic search for all the genes that control pattern formation in the fly embryo, and this led to the startling conclusion that there are only about 100 such molecules, and even fewer involved in patterning the long axis into body segments. Furthermore, these molecules are organized into a layered network, starting with molecules that are placed in the egg by the mother. These primary maternal morphogens control the expression of a collection of gap genes, so named because mutations in these genes lead to gaps in the body plan, and then the gap genes control the expression of pair rule genes that form striped patterns, an approximate blueprint for the segments of the fully developed organism.

The demonstration that just a small number of molecules were responsible for pattern formation in the fly embryo came at an opportune time. In molecular biology, new tools were making it possible to sequence these genes, to synthesize the proteins that they code for in the laboratory, and thus to make probes that could measure their concentration in the embryo. Almost all of the relevant molecules are transcription factors, so these earliest steps in pattern formation involve only the control of gene expression, rather than changes in cell structure or overall geometry of the embryo. These macroscopic, mechanical manifestations of pattern formation come later. The whole process, however, is quite rapid: The egg hatches in 24 hours, and the striped patterns of pair rule gene expression are visible just 3 hours after the egg is laid.

At the start of the 21st century, several groups had the idea that the fly embryo could be turned into a physics laboratory. These experimental efforts were launched in a background of theoretical work on noise and information transmission in molecular networks, and ideas about the robustness of these networks to parameter variation. The new generation of physics experiments on the fly embryo revealed the system to be much more precise than anyone had anticipated. Concentrations of just a handful of the proteins encoded by the gap genes provide enough information to specify the position of cells to 1 percent accuracy. Primary morphogens have absolute concentrations that are reproducible from embryo to embryo with ∼8 percent accuracy. This precision occurs despite the fact that relevant molecules are present at low concentrations, as with other transcription factors, and these notions of precision could be formalized in the language of statistical physics and information theory.
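One way to make the link between concentration noise and positional precision concrete is simple error propagation: a cell that reads a concentration c with uncertainty σ_c can locate itself to within roughly σ_x ≈ σ_c / |dc/dx|. The sketch below assumes an exponential gradient with a decay length of 0.2 embryo lengths; the gradient shape, the decay length, and the function names are illustrative assumptions, and only the ~8 percent reproducibility figure is taken from the text.

```python
import math

def concentration(x, c0=1.0, decay_length=0.2):
    """Assumed exponential morphogen profile; x is in units of embryo length."""
    return c0 * math.exp(-x / decay_length)

def positional_error(x, fractional_noise, c0=1.0, decay_length=0.2, dx=1e-6):
    """Error propagation: sigma_x = sigma_c / |dc/dx| (slope by central difference)."""
    c = concentration(x, c0, decay_length)
    slope = (concentration(x + dx, c0, decay_length)
             - concentration(x - dx, c0, decay_length)) / (2 * dx)
    sigma_c = fractional_noise * c  # absolute concentration uncertainty
    return sigma_c / abs(slope)

# 8 percent concentration reproducibility, evaluated at mid-embryo.
sigma_x = positional_error(x=0.5, fractional_noise=0.08)
print(f"positional error ~ {sigma_x:.3f} embryo lengths")
```

For an exponential profile the result reduces to the decay length times the fractional noise, independent of position, so under these assumptions a single gradient with percent-scale reproducibility already gives percent-scale positional errors.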

Results on the precision of positional information processing in the fly embryo have stimulated the exploration of many theoretical questions. What are the physical limits to information transmission through genetic networks given the limited number of molecules and low concentrations involved? What strategies can cells use to reach these limits, allowing the construction of more complex and reproducible body plans? Is there something special about the fly that allows all these events to happen so reliably and so quickly? Alternatively, what new possibilities emerge for organisms that use a longer time scale for development? Approaches to these theoretical questions are connecting quite abstract considerations to the quantitative details of experiments on particular systems, including the fly embryo. It is especially interesting that ideas about information flow in these genetic and biochemical networks have parallels with ideas about information flow in the brain.

Perspective

Physicists are accustomed to the idea that information can be carried by electrical currents and by light. More abstractly, correlations in the fluctuations of any field can be thought of as carrying information across space and time. But living systems’ use of changing molecular concentrations to convey information poses new challenges. Basic physical limits to this mode of communication have been known for decades, but there still are new discoveries being made as the community generalizes these ideas to contexts more relevant to real cells. In some systems, foundational work in biology has provided a nearly complete list of the relevant molecules, so there is a closed system within which to ask about the representation of information and the physical principles that govern life’s choice of this representation. In other cases, the set of relevant molecules expands as experiments probe more widely, and in the limit one can ask about information contained in the patterns of expression across the entire genome. These developments hint at a more collective view of signaling and information flow, connecting to the ideas of Chapter 3.

INFORMATION IN THE BRAIN

When we run our fingers across a textured surface, receptor cells in our fingertips convert the pressure on our skin into electrical currents that flow across the membrane of these cells, much as happens in other sensing systems (Chapter 1). But it is a long way from our fingertips to our brain, or even to the spinal cord. For this long distance communication of information, the continuously varying currents are converted into discrete electrical pulses, called action potentials or spikes. Spikes can propagate without decaying or changing shape, at an essentially constant speed, until they reach the synapse where one cell connects to another. This conversion to spikes is essentially universal, happening in all our senses, and in almost all organisms. What humans see and hear, smell and feel thus are not light and sound or odors and textures; instead, what is sensed are the patterns of action potentials over time arriving from our sensory neurons. When we move, the brain sends action potentials in the other direction, along the motor nerve cells out to the muscles. Spikes also are what neurons inside the brain use in sending signals to one another. Patterns of action potentials are the language of the brain, and all the information that the brain uses (thoughts and perceptions, memories and actions) is at some point encoded in spikes.

Action Potentials and Ion Channels

The uncovering of the mechanisms by which nerve cells generate action potentials is another great success story in the interaction between physics and biology. There was a long path from quantitative observations on the relatively macroscopic electrical dynamics of single neurons to a precise mathematical and physical description of the underlying molecular events, including the structures of the relevant molecules, the ion channel proteins that are embedded in the cell membrane. Along the way were the very first direct measurements of dynamics in single molecules, the observations of electrical current flow through these channels. This work in the biological physics community was contemporary with the first measurements of electrical current flow through nanoscale devices in the condensed matter physics community. For decades, this research program was in the reductionist spirit, finding the elementary building blocks that generate the electrical behaviors of cells. We describe this largely complete program here because it provides a model for understanding the phenomena of life, quantitatively, with our understanding summarized in mathematical terms, as expected in physics. These discoveries also provide a foundation for many currently exciting questions in the physics of living systems, from understanding the energetic costs for reliable information processing to the way in which life explores the parameter space of microscopic mechanisms (Chapter 4).

All cells are bounded by a membrane that separates inside from outside. The membrane by itself is a good electrical insulator, allowing very little current flow. Interesting electrical dynamics in cells happen because there are specific protein molecules inserted into the membrane. Currents that do flow are carried by ions, not by electrons as in familiar electronic devices. All cells have pumps to maintain concentration differences for these ions between the inside and outside of the cell, effectively acting as batteries, and the inside of a cell typically is 0.03−0.05 volts negative relative to the outside.
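The "battery" set up by a concentration difference can be estimated with the Nernst equation, E = (k_B T / z e) ln(c_out / c_in), which gives the voltage at which a single ion species is in equilibrium across the membrane. The sketch below uses illustrative potassium concentrations that are assumptions, not values from the text. The actual resting voltage of a cell depends on several ion species and their relative permeabilities, so it need not equal the Nernst potential of any one of them.

```python
import math

K_B = 1.380649e-23           # Boltzmann constant, J/K
E_CHARGE = 1.602176634e-19   # elementary charge, C

def nernst_potential(c_out, c_in, valence=1, temperature=293.15):
    """Equilibrium potential (inside relative to outside, in volts) for one ion species."""
    thermal_voltage = K_B * temperature / (valence * E_CHARGE)
    return thermal_voltage * math.log(c_out / c_in)

# Assumed, illustrative potassium concentrations in mM: low outside, high inside.
e_k = nernst_potential(c_out=5.0, c_in=140.0)
print(f"potassium equilibrium potential ~ {e_k * 1000:.0f} mV")
```

With these assumed numbers the equilibrium potential is tens of millivolts negative, the same scale as the voltages quoted above; equal concentrations on the two sides would give zero.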

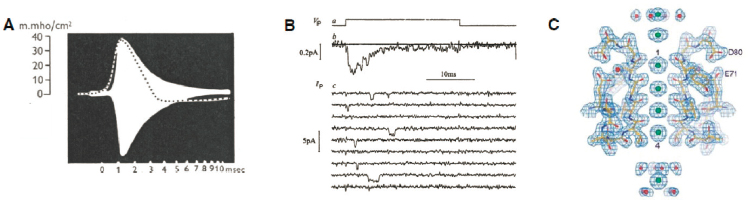

The action potential is a brief pulse, lasting a few thousandths of a second, during which the voltage difference between the inside and outside of the cell changes by roughly one tenth of a volt. The first major clue to the dynamics of the action potential was that during the spike, the resistance of the membrane is reduced, or equivalently the conductance is increased (see Figure 2.7A). A series of beautiful experiments and mathematical analyses showed that these changes in conductance could be dissected into one component specific to the flow of sodium ions, and one specific to potassium ions. These conductances have their own dynamics in response to the changing voltage across the cell membrane, and the coupled dynamics of voltage and conductances have a remarkable mathematical structure: in a long cylinder shaped cell, as with the axons that extend outward from most neurons, the solutions to these equations converge to stereotyped pulses that propagate at a constant speed, and this provides a nearly perfect quantitative description of the action potential.
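The coupled dynamics of voltage and conductances can be explored numerically. The sketch below integrates the widely used Hodgkin-Huxley form of these equations for a single patch of membrane at uniform voltage, so it shows spike generation but not propagation along an axon. The parameter values are the standard textbook ones for the squid axon, assumed here rather than taken from the text, and the forward-Euler scheme is chosen for brevity, not accuracy.

```python
import math

# Standard textbook squid-axon parameters (assumed, not taken from the text).
C_M = 1.0                               # membrane capacitance, uF/cm^2
G_NA, G_K, G_L = 120.0, 36.0, 0.3       # maximal conductances, mS/cm^2
E_NA, E_K, E_L = 50.0, -77.0, -54.387   # reversal potentials, mV

def _ratio(x, y):
    """x / (1 - exp(-x / y)), with the removable singularity at x = 0 handled."""
    return y if abs(x) < 1e-7 else x / (1.0 - math.exp(-x / y))

def rates(v):
    """Voltage-dependent opening (a_) and closing (b_) rates, in 1/ms."""
    a_m = 0.1 * _ratio(v + 40.0, 10.0)
    b_m = 4.0 * math.exp(-(v + 65.0) / 18.0)
    a_h = 0.07 * math.exp(-(v + 65.0) / 20.0)
    b_h = 1.0 / (1.0 + math.exp(-(v + 35.0) / 10.0))
    a_n = 0.01 * _ratio(v + 55.0, 10.0)
    b_n = 0.125 * math.exp(-(v + 65.0) / 80.0)
    return a_m, b_m, a_h, b_h, a_n, b_n

def simulate(i_ext, t_max=50.0, dt=0.01):
    """Forward-Euler integration; returns the voltage trace in mV (time in ms)."""
    v = -65.0
    a_m, b_m, a_h, b_h, a_n, b_n = rates(v)
    # Start each gating variable at its steady state for the initial voltage.
    m, h, n = a_m / (a_m + b_m), a_h / (a_h + b_h), a_n / (a_n + b_n)
    trace = []
    for _ in range(int(t_max / dt)):
        a_m, b_m, a_h, b_h, a_n, b_n = rates(v)
        i_na = G_NA * m**3 * h * (v - E_NA)   # sodium current
        i_k = G_K * n**4 * (v - E_K)          # potassium current
        i_l = G_L * (v - E_L)                 # leak current
        dv = (i_ext - i_na - i_k - i_l) / C_M
        dm = a_m * (1.0 - m) - b_m * m
        dh = a_h * (1.0 - h) - b_h * h
        dn = a_n * (1.0 - n) - b_n * n
        v, m, h, n = v + dt * dv, m + dt * dm, h + dt * dh, n + dt * dn
        trace.append(v)
    return trace

driven = simulate(i_ext=10.0)   # constant injected current, uA/cm^2
quiet = simulate(i_ext=0.0)
print(f"peak voltage with input: {max(driven):.0f} mV, without: {max(quiet):.0f} mV")
```

With a constant injected current the model voltage swings by roughly a tenth of a volt in a stereotyped pulse, while without input it stays near rest.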

A natural interpretation of the equations describing the sodium and potassium conductances is that the membrane has “channels” that allow these ions to pass, and that the dynamics of current flow are the dynamics of these channels opening and closing. If this is correct, then small patches of membrane will have small numbers of these channel molecules, and since single molecules behave randomly, the resulting current flow will have measurable randomness or noise, and it does.
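The argument that small patches should be noisy can be checked with a minimal model in which each channel is independently open with probability p. The number of open channels is then binomial, and the relative size of current fluctuations falls as one over the square root of the number of channels. The function names, the open probability, and the channel counts below are hypothetical choices for illustration.

```python
import math
import random

def relative_noise_theory(n_channels, p_open):
    """Std/mean of the current for independent two-state channels (binomial)."""
    return math.sqrt((1.0 - p_open) / (n_channels * p_open))

def relative_noise_sim(n_channels, p_open, n_samples=2000, seed=0):
    """Monte Carlo estimate of std/mean of the number of open channels."""
    rng = random.Random(seed)
    counts = [sum(rng.random() < p_open for _ in range(n_channels))
              for _ in range(n_samples)]
    mean = sum(counts) / n_samples
    var = sum((c - mean) ** 2 for c in counts) / n_samples
    return math.sqrt(var) / mean

for n in (10, 1000):
    print(n, relative_noise_sim(n, 0.2), relative_noise_theory(n, 0.2))
```

In this toy model a patch with ten channels fluctuates by a large fraction of its mean current, while a patch with a thousand channels looks nearly deterministic, which is why the macroscopic description emerges from averaging.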

In sufficiently small patches of the membrane, with sufficiently sensitive amplifiers, one can see individual channel molecules opening and closing (see Figure 2.7B), and reconstruct the original macroscopic description of current flow by averaging over these random molecular events. Looking more closely at the original equations, the dynamics of the channels are described by multiple “gates” that open and close. For gates to open and close in response to voltage, basic statistical mechanical principles require that opening and closing is associated with structural changes in the channel that move charge across the membrane; this small gating charge movement was eventually measured and agrees quantitatively with theory. Once it was possible to isolate the channel proteins, a major effort to determine their structure by X-ray diffraction resulted in a clear view of how ions pass through the channel (see Figure 2.7C).
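The statistical mechanics behind the gating charge argument can be sketched with a single two-state gate, which is a deliberate simplification of real channels. Assume that opening moves an effective charge $q$ across the full membrane voltage $V$, so that the energy difference between open and closed states is $\Delta E(V) = \Delta E_0 - qV$. The symbols $\Delta E_0$, $q$, and $V_{1/2}$ are introduced here for illustration only.

```latex
\frac{P_{\mathrm{open}}}{P_{\mathrm{closed}}}
  = \exp\!\left[-\frac{\Delta E_0 - qV}{k_B T}\right]
\quad\Longrightarrow\quad
P_{\mathrm{open}}(V)
  = \frac{1}{1 + \exp\!\left[-\,q\,(V - V_{1/2})/k_B T\right]},
\qquad
V_{1/2} \equiv \frac{\Delta E_0}{q}.
```

In this picture a gate with $q = 0$ cannot respond to voltage at all, and the steepness of the voltage dependence (one e-fold change in the open-to-closed ratio per $k_B T/q$ of voltage) directly reports the amount of charge that moves.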

It took nearly 30 years to go from the mathematical description of conductances and voltages to the observation of current flow through single channels, and another 20 years to reveal the structure of the channels. As always, between these milestones many important results accumulated. An interesting feature of the history is that decades of work were driven by the search for the physical embodiment of individual terms in the equations—channels, gates, and more—taking the mathematical description literally. Although the results of this search have become part of the mainstream of biology, this style of exploration is very much grounded in physics. Several of the milestones in our understanding of the action potential were recognized with Nobel Prizes: the mathematical description of membrane conductances (1963), the observation of single channel currents (1991), and the structure of ion channels (2003).

The reductionist program that brought us from action potentials to ion channels is largely complete, though there are open questions about the physics of ion transport through the channel. But while the squid axon that was the subject of early studies has dynamics dominated by two types of channels, it now is known that the genomes of many animals, including humans, encode over one hundred different kinds of channels, and a typical neuron in the brain might use seven of these different types. Our precise understanding of individual channel dynamics sets the stage for asking questions at the next scale of organization. In particular, how do cells choose the number of each type of channel to insert into the membrane? This provides an accessible example of a larger question about how organisms navigate the large parameter space accessible to them, as described in Chapter 4. In a different direction, electrical signaling through action potentials, or through smaller amplitude graded changes in voltage, provides concrete examples where we can understand the energy costs of coding and computation in the nervous system. Our understanding of the inherently stochastic molecular dynamics of the channel molecules also means we can characterize the reliability or fidelity of information transmission and processing, and relate these measures of performance to the energy costs. Can we connect these concrete ideas to more abstract ideas from non-equilibrium statistical physics about dissipation and noise? Are there lessons for engineering, in the choice of analog versus digital computation? Finally, while it is tempting to think that the details of molecular dynamics are erased once we mark the occurrence of relatively macroscopic action potentials, there are hints that some features of these dynamics, which determine the sharpness of the threshold for triggering spikes, may influence even the collective dynamics in large networks of neurons (Chapter 3).

Coding in Single Neurons

Beyond the mechanisms of action potential generation, one can ask how these signals represent information. The earliest observations on spikes in single sensory neurons showed that constant sensory input, such as a steady light shining into the eye or a constant weight on the mechanical sensors in muscles, resulted in spikes generated at a rate that increased with the strength of the stimulus. This idea of “rate coding” has had considerable influence, moving attention away from the discrete nature of action potentials. But under natural conditions, sensory inputs are seldom constant, and there is considerable evidence that many sensory neurons generate roughly one spike before the inputs change. Confirming that these spikes are not just noise, experiments have demonstrated the reproducibility of the response across multiple experiences of the same sensory inputs, down to a time resolution of a few thousandths of a second. With care, this reproducibility is visible in the responses of neurons much deeper in the brain. Moving away from the sensory inputs, however, neurons receive input from many places; a typical cell deep in the cortex receives thousands of inputs from other neurons, and it becomes more difficult to separate signal and noise convincingly. Approaching the brain’s output, one can once again see the correlation of single spikes and patterns of spikes with particular trajectories of muscle activity.

The biological physics community has been keenly interested in the more abstract properties of the code by which sensory signals and motor commands are represented by sequences of action potentials. Information can be conveyed only if the sequences of spikes vary. This variability can be characterized by an entropy, the same quantity that arises in statistical mechanics, and the information (in bits) that spikes represent cannot be larger than this entropy. In several systems there is evidence that the information carried by spikes comes close to the physical limit set by their entropy, down to millisecond time resolution; that this coding efficiency is higher for sensory signals with statistics more like those that occur in the natural environment; and that this efficiency is achieved through adaptation processes that match neural coding strategies to the statistics of their inputs (see also Chapter 4). There are more ambitious efforts, with roots in the 1960s and continuing until today, to derive these strategies directly from optimization principles, maximizing information transmission subject to physical constraints. As an example, these theories predict that on a dark night the neurons in the retina average over space and time to combat the noise of random photon arrivals, while as light levels increase they respond to more rapid spatial and temporal variations in image contrast, to remove redundancy in the signals transmitted to the brain. These predictions are qualitatively correct, and there are similar ideas about the nature of filtering in the auditory system. There is a continuing effort to push these theories into a regime that includes the full dynamics of real neurons, and to connect abstract models of coding to the known dynamics of ion channels.
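The statement that the information carried by spikes cannot exceed their entropy can be illustrated with a small calculation. The sketch below discretizes responses into binary "words" (1 for a spike in a time bin, 0 for none) and uses simple plug-in estimates of entropy and mutual information. The data are invented for illustration, and plug-in estimates are known to be biased when samples are few, so real analyses need more care.

```python
import math
from collections import Counter

def entropy_bits(samples):
    """Plug-in entropy (in bits) of the empirical distribution of samples."""
    counts = Counter(samples)
    total = sum(counts.values())
    return -sum((c / total) * math.log2(c / total) for c in counts.values())

def mutual_information_bits(stimuli, responses):
    """I(S;R) = H(S) + H(R) - H(S,R), estimated from paired samples."""
    joint = list(zip(stimuli, responses))
    return entropy_bits(stimuli) + entropy_bits(responses) - entropy_bits(joint)

# Invented toy data: two stimuli, each evoking three-bin spike words.
stimuli = ["A", "A", "A", "A", "B", "B", "B", "B"]
responses = ["010", "010", "011", "010", "100", "100", "101", "100"]

h_r = entropy_bits(responses)
info = mutual_information_bits(stimuli, responses)
print(f"word entropy = {h_r:.3f} bits, information about stimulus = {info:.3f} bits")
```

In this toy example the words identify the stimulus perfectly, yet the information (1 bit) is still below the word entropy, because some of the variability in the words is unrelated to the stimulus; coding efficiency is the ratio of the two.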

The abstract measure of information in bits may seem mismatched to the concrete tasks that organisms need to accomplish in order to survive. Which bits are relevant for life? There have been efforts to define relevance by reference to other signals, or to the animal’s behavioral output. Alternatively, many tasks require organisms to make predictions, and perhaps it is this predictive information which is almost always relevant. These ideas have deep connections to many problems in statistical physics and dynamical systems, and have even led to experiments that estimate the amount of information that small populations of neurons carry about the future of their sensory inputs.

Coding in Populations of Neurons

Although the spike sequences from individual cells carry surprisingly large amounts of information, it is clear that most functions of the brain require the coordinated activity of many neurons. This coordination can be so extensive that currents flowing into and out of neurons add up to generate macroscopic current flows, leading to voltage differences that are measurable even outside the skull—the electroencephalogram, or EEG. The coordinated current flows also generate magnetic fields that are detectable outside the skull, and the analysis of these signals has been called magnetoencephalography, or MEG. Modern MEG often is done with arrays of superconducting quantum interference devices (SQUIDs), connecting the frontiers of precision low-temperature physics techniques to the study of brains and minds. While these methods certainly have limitations, it remains striking to see how the electrical activity of the brain is correlated with our internal mental life. It is a classical demonstration, for example, that humans can change the structure of their own EEG simply by thinking about different things, even in the absence of immediate sensory inputs or motor outputs.

The representation of information by populations of neurons connects naturally to the search for collective dynamical behavior of these populations, as described in Chapter 3. One way in which collective phenomena emerge, across many physical systems, is through symmetry and the breaking of symmetry. In the context of a population or network of neurons, symmetry would be manifest as a range of possible, reasonably stable states for the network that are all equivalent to one another; the breaking of symmetry occurs when the network chooses one of these states. If the possible states, or attractors, form a discrete set, then each of these states, which correspond to different patterns of activity across the network, can represent an object, a person, or a category of things. The set of states, taken together, represents a list of things or people that the brain can identify, a list that must have been learned (Chapter 4). The network may be driven into one of these states, breaking the symmetry, by an external stimulus, for example, when the brain recognizes an object from its appearance, or a person from the sound of her voice. But the network may break symmetry spontaneously, recalling the memory of an object or person from the most feeble of reminders. Models of neural networks with discrete symmetry breaking thus provide ideas for how the brain solves the problems of object recognition, memory, and more. Over the last two decades these seemingly abstract models have been connected, at increasing levels of detail, to experiments on real brains.
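A minimal model with a discrete set of attractors is the Hopfield-style network sketched below, offered as one familiar instance of this class of models and not necessarily the specific models the text has in mind. Stored patterns are written into symmetric connections by a Hebbian rule, and a corrupted cue relaxes to the nearest stored pattern. The three mutually orthogonal patterns, the network size, and the function names are artificial choices made so that the example is fully deterministic.

```python
def train_hebbian(patterns):
    """Symmetric weights w[i][j] = (1/n) * sum over patterns of p[i] * p[j], no self-coupling."""
    n = len(patterns[0])
    w = [[0.0] * n for _ in range(n)]
    for p in patterns:
        for i in range(n):
            for j in range(n):
                if i != j:
                    w[i][j] += p[i] * p[j] / n
    return w

def recall(w, state, sweeps=5):
    """Asynchronous updates: each neuron aligns with the sign of its input field."""
    s = list(state)
    n = len(s)
    for _ in range(sweeps):
        for i in range(n):
            field = sum(w[i][j] * s[j] for j in range(n))
            s[i] = 1 if field >= 0 else -1
    return s

n = 64
# Three orthogonal +/-1 patterns: pattern k follows bit k of the neuron index.
patterns = [[1 if (i >> k) & 1 else -1 for i in range(n)] for k in range(3)]
w = train_hebbian(patterns)

# A "feeble reminder": the first pattern with 8 of its 64 entries flipped.
cue = list(patterns[0])
for i in range(8):
    cue[i] *= -1

recovered = recall(w, cue)
overlap = sum(a * b for a, b in zip(recovered, patterns[0])) / n
print("overlap with stored pattern:", overlap)
```

The network is not told which pattern the cue came from; the dynamics select one attractor out of the stored set, which is the sense in which recall resembles symmetry breaking.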

A more subtle possibility is that the set of attractors is continuous rather than discrete. Such states in a network of neurons could then represent the orientation of an object, the position of an animal in space, the direction in which an animal is looking or moving. It is known that neurons in particular brain regions provide animals with a “map” of the world in which they are moving; this discovery was recognized by a Nobel Prize in 2014. Some of the most successful models for the origin of these maps rely on the ideas of a continuous underlying symmetry in the network dynamics. As in other statistical physics problems, signatures of the interactions that allow the emergence of these collective states are found not in the mean behavior of the individual neurons but in the correlations among fluctuations in their behavior, and this has opened new directions for the analysis of these networks. Correlations also can limit or enhance the transmission of information by neurons, and this has been a central theme in experiments that probe the relation of activity in populations of neurons to the reliability of decisions.

The patterns of correlation among activity in large populations of neurons also provide hints of more general collective behaviors in the network, as discussed in Chapter 3. Theories of collective behavior make predictions for the structure of measurable correlations, but one can also turn the argument around and ask for the simplest collective states that are consistent with the measured correlations. These ideas are deeply grounded in statistical physics, and have connections to other examples of emergent behaviors of living systems. Throughout these developments, the example of neural networks has been a touchstone, providing some of the earliest and deepest examples of new physics.
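One common way to formalize "simplest" is the maximum entropy principle: among all probability distributions that reproduce the measured quantities, choose the one with the largest entropy. As a sketch, assume each neuron $i$ in a small time window is described by a binary variable $\sigma_i = \pm 1$ (active or silent), and that the measured quantities are the mean activities $\langle \sigma_i \rangle$ and pairwise correlations $\langle \sigma_i \sigma_j \rangle$. The binary description and the symbols $h_i$, $J_{ij}$ are assumptions introduced here for illustration.

```latex
P(\sigma_1, \dots, \sigma_N)
  = \frac{1}{Z} \exp\!\left[ \sum_{i} h_i \sigma_i
      + \frac{1}{2} \sum_{i \neq j} J_{ij}\, \sigma_i \sigma_j \right],
\qquad
Z = \sum_{\{\sigma\}} \exp\!\left[ \sum_{i} h_i \sigma_i
      + \frac{1}{2} \sum_{i \neq j} J_{ij}\, \sigma_i \sigma_j \right].
```

The parameters $h_i$ and $J_{ij}$ are adjusted until the model reproduces the measured $\langle \sigma_i \rangle$ and $\langle \sigma_i \sigma_j \rangle$. The resulting distribution has the same mathematical form as the Boltzmann distribution of an Ising model, which is what makes the tools of statistical physics directly applicable.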

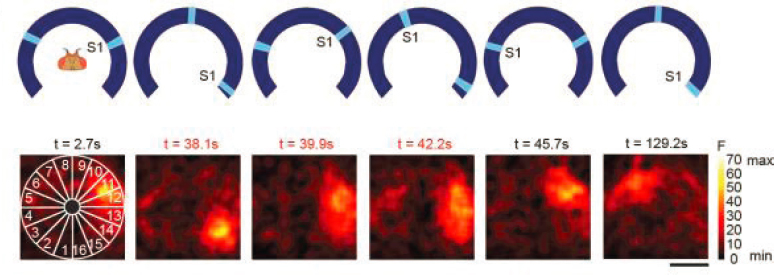

A network of neurons that represents orientation or direction would be very special, because the stable states of activity must map to a closed circle or ring. This structure could be implicit in a very densely interconnected network, so that there is no ring of neurons even if there is a ring of attractors. It came as a great surprise, then, when it was discovered that neurons in a genuinely ring shaped structure deep in the fly’s brain behave very much as predicted for the class of ring attractor networks (see Figure 2.8). This system has fewer than 100 neurons, so it is now feasible to map the connections among all these cells, and some of their sensory inputs, part of the larger efforts to map the full patterns of connectivity (the “connectome”) of substantial pieces of the brain or even the whole brain (Chapters 3 and 6).

Perspective

The search for the physical principles underlying the representation and transmission of information in the brain led to the discovery of ion channels. This effort is important on its own, but also provides perhaps the most successful mathematical description of the interactions among many different species of protein. As such, ion channels in neurons constitute a laboratory for many more general questions about the physics of life. The exploration of information flow in the brain also has led to remarkable experimental methods for monitoring the electrical activity of neurons, and to a broad range of theoretical ideas about the way in which information is represented, and why. These developments highlight the gap between the reductionist view that ends with molecules and the functional view that ends with abstract descriptions of neural codes and network dynamics. Measurement of simultaneous activity in large numbers of neurons already has been transformational, and the growing data deluge, while presenting its own challenges for analysis, is poised in the coming years to sharply refine theoretical models, both for the representation of information and for collective behavior in neural networks (Chapter 3). The problem of connecting molecules to more phenomenological characterizations of function reappears in many contexts, and is likely to emerge as a theme in biological physics research over the coming decade.

COMMUNICATION AND LANGUAGE

Although it is tempting to “simplify” the phenomena of life by thinking about organisms in isolation, in fact many organisms communicate actively with others. As humans, we are especially fascinated by our own abilities to communicate through language, and search for analogs in other species such as songbirds. But even bacteria communicate, secreting and sensing a collection of molecules that allow individual bacteria to know about the density of other bacteria in the environment, and many insects find their mates across long distances through odor cues alone. Physicists have engaged with the full range of these problems, working to understand the mechanisms involved in each case but also searching for new unifying physical principles.

Bacterial Communication

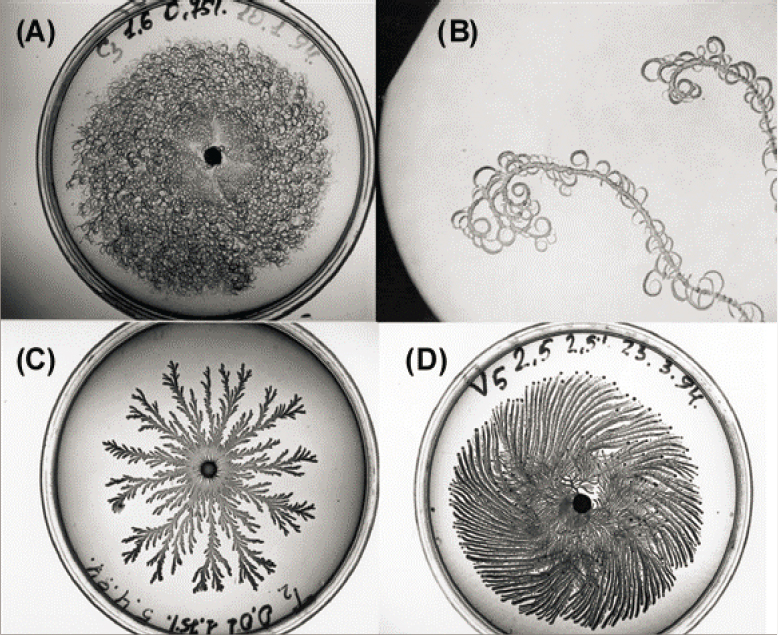

Communities of bacteria communicate with one another and form beautiful patterns as they grow (see Figure 2.9). These patterns have fascinated physicists and biologists alike. As the 20th century drew to a close, they attracted renewed interest from the biological physics community, driven in part by progress in understanding pattern formation in inanimate systems, such as snowflakes. Bacterial communication could be a byproduct of more basic processes: As bacteria grow and move through a medium, they consume resources, and other bacteria navigate the resulting concentration gradients. But it had been known since 1970 that single celled organisms can communicate more directly, and in parallel with the biological physics community’s interest in pattern formation, microbiologists were exploring the molecular basis of this communication and realizing that it is widespread among bacteria. In particular, there are “quorum sensing” mechanisms in which individual cells excrete particular molecules, and these accumulate, so that the concentration provides information about the local cell density.
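The way an accumulating signal reports cell density can be seen in the simplest possible model: a well-mixed volume in which each cell secretes signal molecules at a constant rate and the molecules are lost at a rate proportional to their concentration. This model, its rate constants, and the function names are illustrative assumptions and do not describe any particular quorum sensing system.

```python
def signal_concentration(density, secretion_rate=1.0, loss_rate=0.5,
                         t_max=40.0, dt=0.01):
    """Euler-integrate dc/dt = secretion_rate * density - loss_rate * c from c = 0."""
    c = 0.0
    for _ in range(int(t_max / dt)):
        c += dt * (secretion_rate * density - loss_rate * c)
    return c

def steady_state(density, secretion_rate=1.0, loss_rate=0.5):
    """Fixed point of the same equation: production balances loss."""
    return secretion_rate * density / loss_rate

for density in (1.0, 2.0, 4.0):
    print(density, signal_concentration(density), steady_state(density))
```

In this sketch the steady concentration is proportional to density, so a cell that responds only above a threshold concentration is effectively responding only above a threshold number of neighbors.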

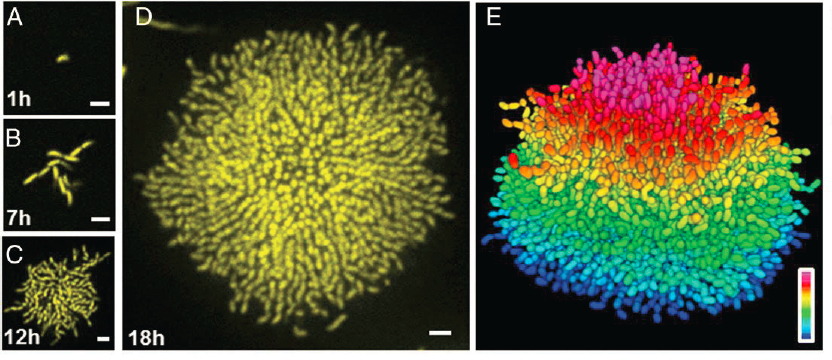

Quorum sensing is vital because cells make decisions based on their local density. Luminescent bacteria only generate light when in a group large enough to make a difference for their symbiotic partners. Infectious bacteria only carry out their nefarious program when present in high enough density not to be overwhelmed by the immune system. More modestly, cells make decisions to attach to surfaces and grow communally only when there are enough compatriots in the neighborhood. As with modern work from the biological physics community on flocks and swarms (Chapter 3), the experimental frontier is to monitor each of the thousands of individuals in one of these communities, as in Figure 2.10.

With bacteria one can do more than track their movements as they engage in collective behaviors. By genetically engineering the cells one can induce them to report on the concentration of crucial internal signaling molecules that respond to the quorum sensing signals. This connects the problem of communication among cells to the problem of representing information through changing concentrations (Chapter 2) and even the problem of chemical sensing itself (Chapter 1), illustrating how one system embodies many different physics problems, each of which arises in many systems. Much is now known about the molecular mechanisms of information flow through the quorum sensing system, and there are even efforts to understand aspects of these mechanisms as solving the problem of maximizing information flow with limited molecular resources.

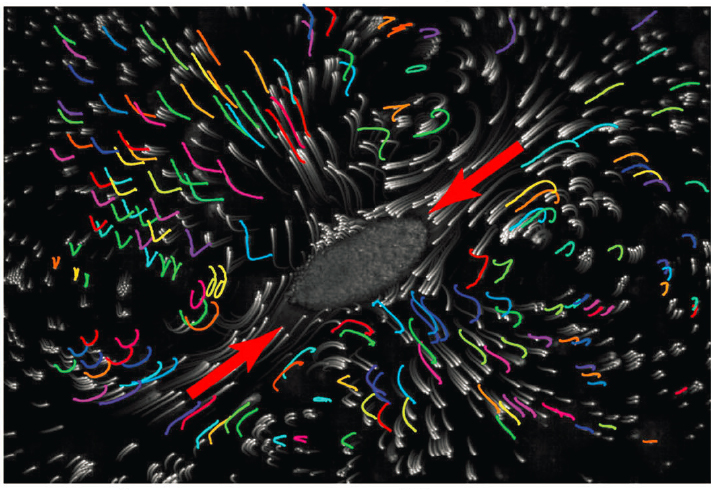

A different kind of communication among single celled organisms occurs in communities of Spirostomum ambiguum (see Figure 2.11). Living in aqueous environments, a single cell can contract its body by more than 50 percent on millisecond time scales, creating accelerations reaching 14 times that of gravity. These contractions create hydrodynamic flows that trigger other cells to contract, resulting in a wave that passes through the entire community. Cell contractions release toxins, and it is possible that the cells use this fast form of communication to repel predators or immobilize prey. The physics of flow and mechanical sensing is very different from diffusion and detection of molecules, and comparing these different modes of communication may provide novel ways of testing ideas about the organizational principles of communication in biological systems.

Searching for Sources

Chemical communication is central not only in the lives of bacteria, but also in the lives of insects. In particular, many species of insects find their mates by following the odor of pheromones over extraordinary distances, up to 30 miles in the case of silkworm moths. In some ways this is similar to the ability of bacteria to swim toward sources of nutrients by sensing the concentration of the relevant molecules along the way (chemotaxis, Chapter 1), but the physics in these two cases is very different. On the micron scale of bacterial life, molecules move through water by diffusion, and as a result, concentrations become smooth functions of position. For insects, molecules move through the air carried by the wind, which is turbulent.

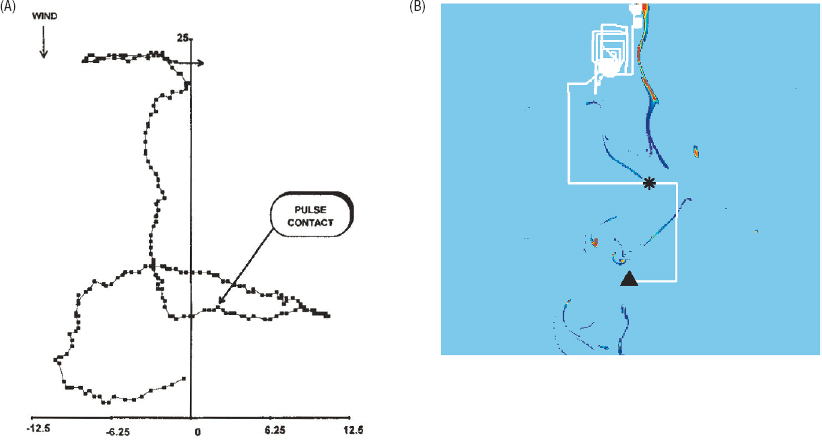

In a turbulent flow, odors are carried by plumes. For an observer standing in one place, the odors are intermittent, as plumes pass by, providing only very limited cues about the direction of the source. Classic experiments, however, show that moths “know” about this challenging physics problem, and actually fail to find the source of odors when turbulent flows are replaced by smooth (laminar) patterns of airflow in a wind tunnel. In the wild, insects searching for a source fly into the wind, occasionally casting sideways, and these sideways motions become more frequent when they lose track of the odor plumes (see Figure 2.12A).

The full problem of insect flight control involves synthesizing many different cues—from wind, odors, and vision—and connecting to the aerodynamics of flight itself. But the strategy behind this control might be simpler. In order to fly toward the source of an odor, the insect must make some inference about the location of that source from the limited data it has collected. Although “information” can appear as an abstraction, the minimum search time is related directly to the amount of information, in bits, that the insect has about the location of the source. In moving through the world, it might thus make sense to use a strategy that collects as much information as possible. By analogy with chemotaxis, this strategy has been called infotaxis. Infotaxis generates flight trajectories that are quite similar to the trajectories of real insects (see Figure 2.12B); the strategy works well enough that it can be used to guide robots in real world search problems, and infotaxis has revitalized the discussion of search and foraging strategies in animals more generally. More deeply, it is an example where the abstract goal of gaining information can be used in place of more detailed descriptions of underlying mechanisms, unifying our understanding of diverse animal behaviors.
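The logic of infotaxis can be sketched in a stripped-down setting. The toy version below places a searcher and a hidden source on a one-dimensional grid; the searcher keeps a probability distribution (belief) over source locations, updates it by Bayes' rule after each odor "hit" or miss, and moves to whichever neighboring site minimizes the expected entropy of its updated belief. The one-dimensional geometry, the exponential detection model, and all parameter values are simplifying assumptions for illustration and are much cruder than the turbulent transport considered in the original work.

```python
import math
import random

def entropy(p):
    """Entropy of a belief distribution, in bits."""
    return -sum(q * math.log2(q) for q in p if q > 0)

def hit_prob(x, s, reach=5.0, p_max=0.9):
    """Assumed chance of detecting odor at x when the source is at s."""
    return p_max * math.exp(-abs(x - s) / reach)

def update(belief, x, hit):
    """Bayes update after observing hit or miss at x, knowing the source is not at x."""
    post = [0.0 if s == x else b * (hit_prob(x, s) if hit else 1.0 - hit_prob(x, s))
            for s, b in enumerate(belief)]
    z = sum(post)
    return [q / z for q in post]

def expected_entropy(belief, x):
    """Expected entropy after visiting x (zero if the source is found there)."""
    rest = [0.0 if s == x else b for s, b in enumerate(belief)]
    z = sum(rest)
    if z == 0:
        return 0.0
    rest = [b / z for b in rest]
    total = 0.0
    for hit in (True, False):
        p_obs = sum(b * (hit_prob(x, s) if hit else 1.0 - hit_prob(x, s))
                    for s, b in enumerate(rest) if s != x)
        if p_obs > 0:
            total += p_obs * entropy(update(rest, x, hit))
    return (1.0 - belief[x]) * total

def infotaxis_step(belief, x):
    """Choose among left, stay, right the site with the lowest expected entropy."""
    moves = [m for m in (x - 1, x, x + 1) if 0 <= m < len(belief)]
    return min(moves, key=lambda m: expected_entropy(belief, m))

def search(source, start, size=30, max_steps=500, seed=0):
    """Run the search; returns the number of steps taken, or None if the cap is hit."""
    rng = random.Random(seed)
    belief = [1.0 / size] * size
    x = start
    for step in range(max_steps):
        if x == source:
            return step
        hit = rng.random() < hit_prob(x, source)
        belief = update(belief, x, hit)
        x = infotaxis_step(belief, x)
    return None

print("steps to reach source:", search(source=22, start=3))
```

The searcher is never told to move toward higher concentration. It is told only to reduce its uncertainty, and motion toward the source is expected to emerge because finding the source is the most informative outcome of all.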

Vocal Communication

Our human preoccupation with vocal communication leads to special interest in other animals that use the same modality. Frog calls, bird songs, and the mysterious sounds of whales and dolphins all attract our attention, and the attention of the physics community. Thus, the inner ear of frogs has been an important experimental testing ground for ideas about the mechanics of hearing (Chapter 1). Songbirds share with us the fact that vocalizations are learned, and thus provide an important test case for theories and experiments on learning (Chapter 4), as well as interesting examples for the neural coding of complex, naturalistic signals (Chapter 2). Bird song also provides an opportunity to explore the statistical structure of complex behaviors (Chapter 1) across time scales and species. Zebra finches sing individual songs that are stereotyped to millisecond precision, while these songs are linked together in more complex and variable structure on longer time scales. In other species, such as Bengalese finches, even individual songs are variable, and sequences of song elements are strongly non-Markovian.

And what of human language itself? Physicists have long been fascinated by the evidence for scale-invariant correlations in written texts and speech. These observations are reminiscent of scale-invariant behaviors near critical points, which have a deep meaning, but it has been controversial whether this connection is more than a metaphor. What is not controversial is that our linguistic interactions with machines (from automatic speech recognition to colloquial queries in search engines and machine translation of text from one language to another) have been revolutionized in just the past few years by new computational models. These models, including “long short-term memories” and transformers, are dynamical, recurrent versions of the deep neural network models that have had such a huge impact on artificial intelligence more generally. As discussed in Chapters 4 and 7, these models have their roots in statistical physics models for networks of real neurons in the brain, and many people see statistical physics as a natural language within which to understand why such systems work so well. Among other features, the new language models capture correlations over much longer time scales than previous models, and this certainly is part of what gives them their power.

In many ways, the frontier of this subject has moved from the examination of natural language to the problem of understanding why artificial neural networks are so successful at language processing tasks. Although this effort involves contributions from many disciplines, there has been special interest from the biological and statistical physics communities. As emphasized in Chapter 3, neural networks are a continuing source of new physics problems, and the language processing systems push these questions into new and unexplored regimes.

Perspective

The biological physics community’s exploration of communication spans from hydrodynamic trigger waves to language, from songbirds to information-based search, and more. From the biological point of view, these are vastly different systems, the subjects of quite separate literatures. It is too soon to know if the search for common physical principles will succeed, but this search certainly is motivating many exciting developments. The comparison of these different systems can seem like a primarily theoretical question, but these comparisons also highlight opportunities for new and more precise experiments that will be realized in the coming decade. The idea that concrete tasks can be solved by methods that refer only to abstract goals, such as gathering information, provides a hint for how we may be able to generalize away from microscopic details in a wider range of problems, connecting macroscopic functions more directly to new physical principles. The connections to artificial intelligence strengthen one of the most important paths for biological physics research to have impact on technology and society (Chapter 7).